"Information": models, code, and papers

Skyline variations allow estimating distance to trees on landscape photos using semantic segmentation

Jan 14, 2022

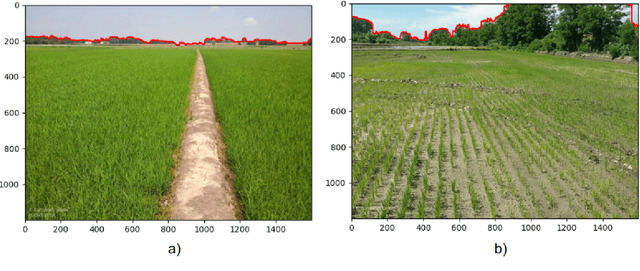

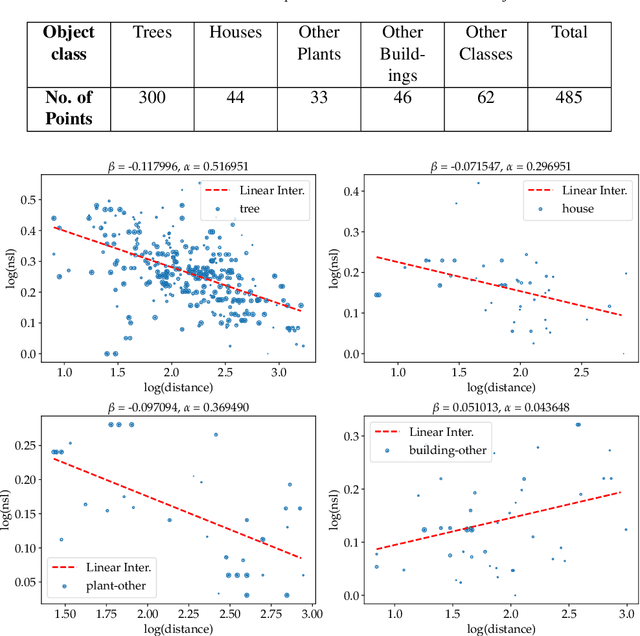

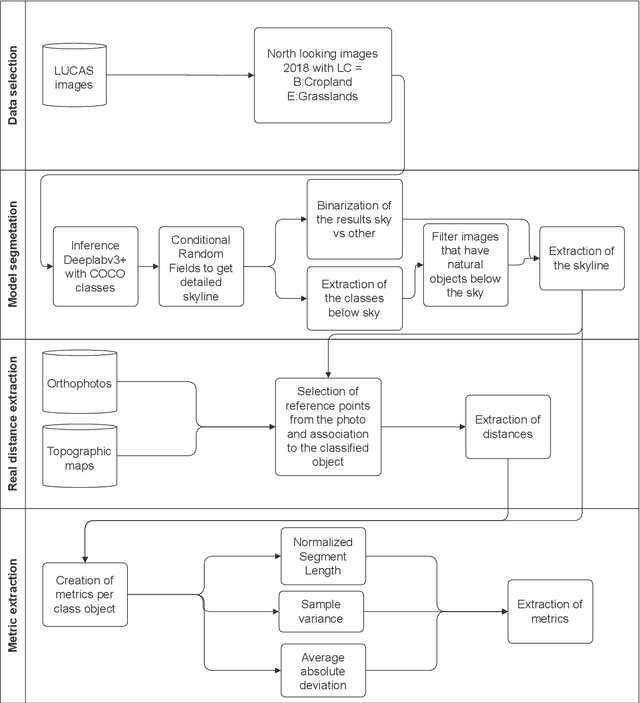

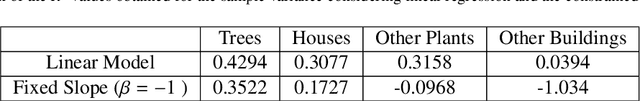

Approximate distance estimation can be used to determine fundamental landscape properties including complexity and openness. We show that variations in the skyline of landscape photos can be used to estimate distances to trees on the horizon. A methodology based on the variations of the skyline has been developed and used to investigate potential relationships with the distance to skyline objects. The skyline signal, defined by the skyline height expressed in pixels, was extracted for several Land Use/Cover Area frame Survey (LUCAS) landscape photos. Photos were semantically segmented with DeepLabV3+ trained with the Common Objects in Context (COCO) dataset. This provided pixel-level classification of the objects forming the skyline. A Conditional Random Fields (CRF) algorithm was also applied to increase the detail of the skyline signal. Three metrics, able to capture the skyline signal variations, were then considered for the analysis. These metrics show a functional relationship with distance for the class of trees, whose contours have a fractal nature. In particular, regression analysis was performed against 475 ortho-photo based distance measurements, and, in the best case, an R² score equal to 0.47 was achieved. This is an encouraging result which shows the potential of skyline variation metrics for inferring distance related information.
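As a rough illustration of what a "skyline signal" could look like in code, the sketch below takes a binary sky mask (as might come out of a semantic segmentation step) and records, for each image column, the skyline height in pixels. The function names and the variation metric are hypothetical; the abstract does not specify its three metrics, so `mean_abs_variation` is only a stand-in for the general idea of measuring how much the signal fluctuates.

```python
from typing import List


def skyline_signal(sky_mask: List[List[bool]]) -> List[int]:
    """Return the skyline height in pixels for every image column.

    sky_mask[r][c] is True where the pixel in row r (0 = top row) is sky.
    The skyline of a column is taken as the first non-sky pixel found when
    scanning from the top; the height is measured from the bottom of the image.
    """
    n_rows = len(sky_mask)
    n_cols = len(sky_mask[0])
    heights = []
    for c in range(n_cols):
        top = n_rows  # a column that is entirely sky has height 0
        for r in range(n_rows):
            if not sky_mask[r][c]:
                top = r
                break
        heights.append(n_rows - top)
    return heights


def mean_abs_variation(signal: List[int]) -> float:
    """Mean absolute difference between neighbouring columns.

    Hypothetical metric, used here only to illustrate 'skyline variation'.
    """
    if len(signal) < 2:
        return 0.0
    diffs = [abs(b - a) for a, b in zip(signal, signal[1:])]
    return sum(diffs) / len(diffs)


# Tiny 4x4 example mask: True = sky, False = anything else.
mask = [
    [True,  True,  True,  True],
    [True,  False, True,  True],
    [False, False, True,  False],
    [False, False, False, False],
]
signal = skyline_signal(mask)
print(signal, mean_abs_variation(signal))
```

A jagged nearby tree line would be expected to give a larger variation value than a smooth distant one, which is the kind of relationship the regression in the abstract investigates.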

Strategies in JPEG compression using Convolutional Neural Network (CNN)

Dec 06, 2021

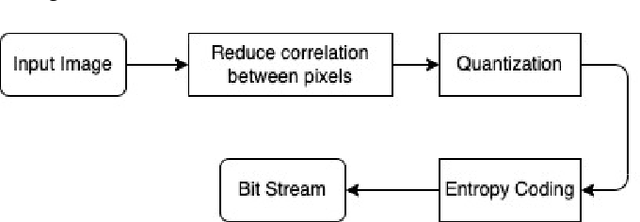

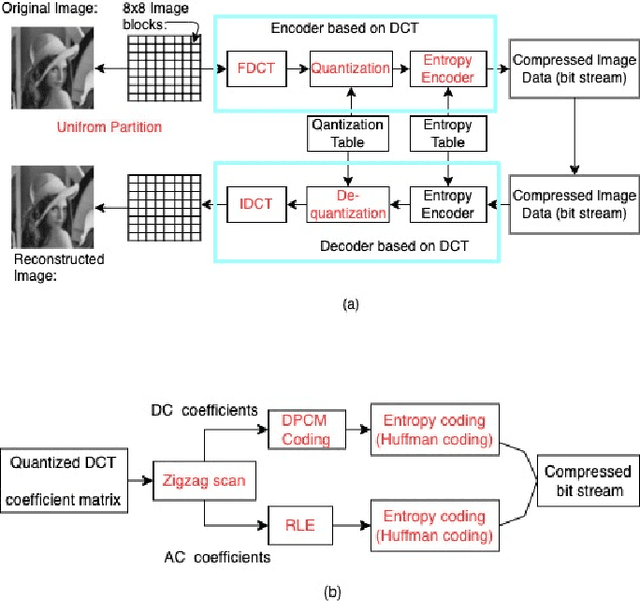

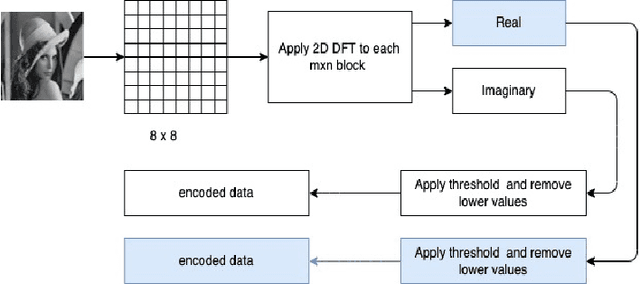

Interest in digital image processing has grown enormously in recent decades. As a result, different data compression techniques have been proposed, most of which aim to minimize the information used to represent images. With the advances of deep neural networks, image compression can be achieved to a higher degree. This paper gives an overview of JPEG compression, the Discrete Fourier Transform (DFT), Convolutional Neural Networks (CNNs), and quality metrics for measuring the performance of image compression; discusses the advancement of deep learning for image compression, mostly focused on JPEG; and suggests that adapting the model improves compression.

Implications of Topological Imbalance for Representation Learning on Biomedical Knowledge Graphs

Dec 13, 2021

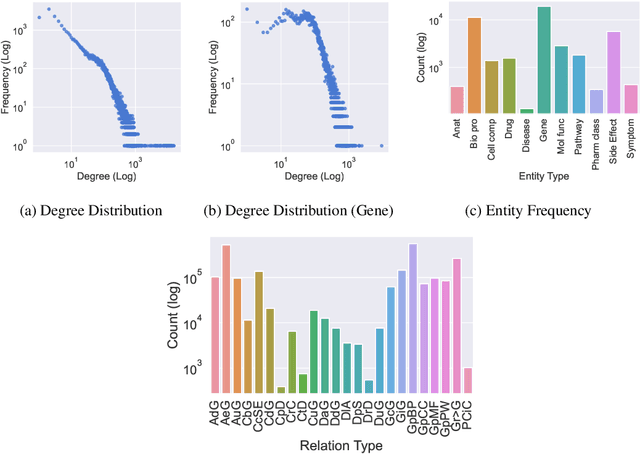

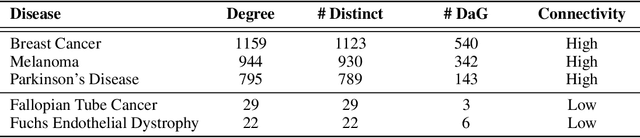

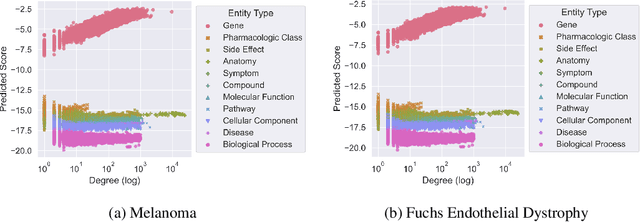

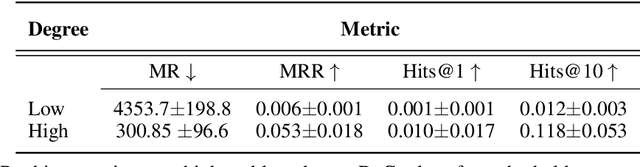

Improving on the standard of care for diseases is predicated on better treatments, which in turn relies on finding and developing new drugs. However, drug discovery is a complex and costly process. Adoption of methods from machine learning has given rise to the creation of drug discovery knowledge graphs which utilize the inherent interconnected nature of the domain. Graph-based data modelling, combined with knowledge graph embeddings, provides a more intuitive representation of the domain and is suitable for inference tasks such as predicting missing links. One such example would be producing ranked lists of likely associated genes for a given disease, often referred to as target discovery. It is thus critical that these predictions are not only pertinent but also biologically meaningful. However, knowledge graphs can be biased either directly due to the underlying data sources that are integrated or due to modeling choices in the construction of the graph, one consequence of which is that certain entities can get topologically overrepresented. We show how knowledge graph embedding models can be affected by this structural imbalance, resulting in densely connected entities being highly ranked no matter the context. We provide support for this observation across different datasets, models and predictive tasks. Further, we show how the graph topology can be perturbed to artificially alter the rank of a gene via random, biologically meaningless information. This suggests that such models can be more influenced by the frequency of entities rather than biological information encoded in the relations, creating issues when entity frequency is not a true reflection of underlying data. Our results highlight the importance of data modeling choices and emphasize the need for practitioners to be mindful of these issues when interpreting model outputs and during knowledge graph composition.
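To make the notion of topological overrepresentation concrete, here is a minimal sketch that counts how often each entity appears in a list of (head, relation, tail) triples. The triples and entity names are invented for illustration and do not come from any dataset in the paper; the degree count is a simple proxy for "entity frequency", not the authors' analysis.

```python
from collections import Counter
from typing import Iterable, Tuple

Triple = Tuple[str, str, str]


def entity_degrees(triples: Iterable[Triple]) -> Counter:
    """Count how many triples each entity takes part in (as head or tail)."""
    degrees: Counter = Counter()
    for head, _relation, tail in triples:
        degrees[head] += 1
        degrees[tail] += 1
    return degrees


# Hypothetical toy graph: GeneA is far better connected than GeneB.
triples = [
    ("GeneA", "associated_with", "Disease1"),
    ("GeneA", "associated_with", "Disease2"),
    ("GeneA", "interacts_with", "GeneB"),
    ("GeneB", "associated_with", "Disease1"),
]
degrees = entity_degrees(triples)
for entity, degree in degrees.most_common():
    print(entity, degree)
```

Comparing such degree counts against the ranks a link-prediction model assigns is one simple way to check whether highly ranked entities are merely the most frequent ones.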

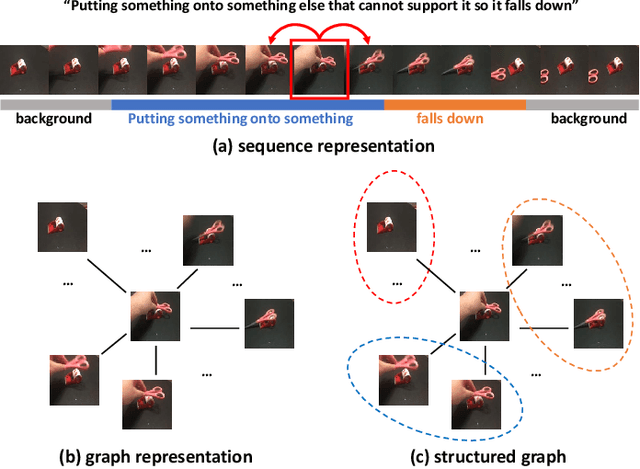

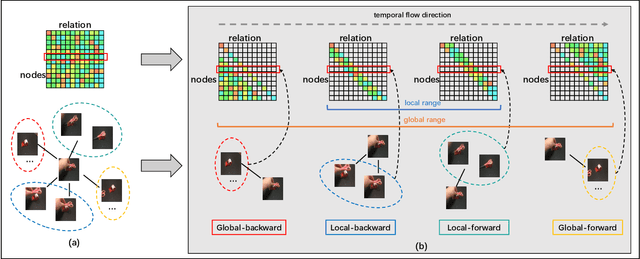

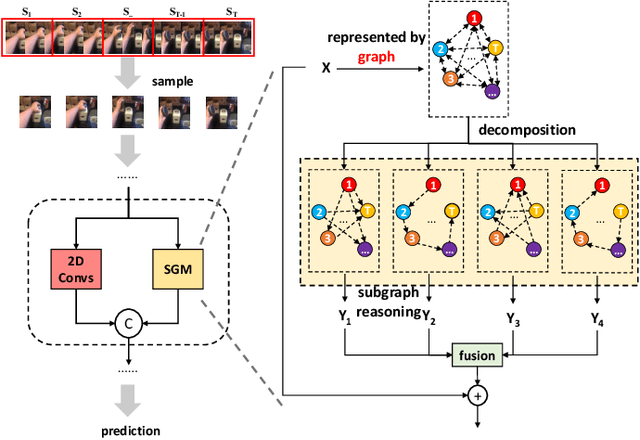

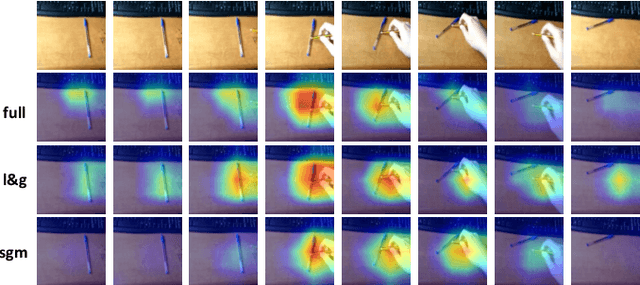

Video Is Graph: Structured Graph Module for Video Action Recognition

Oct 12, 2021

In the field of action recognition, video clips are always treated as ordered frames for subsequent processing. To achieve spatio-temporal perception, existing approaches propose to embed adjacent temporal interaction in the convolutional layer. The global semantic information can therefore be obtained by stacking multiple local layers hierarchically. However, such global temporal accumulation can only reflect the high-level semantics in deep layers, neglecting the potential low-level holistic clues in shallow layers. In this paper, we first propose to transform a video sequence into a graph to obtain direct long-term dependencies among temporal frames. To preserve sequential information during transformation, we devise a structured graph module (SGM), achieving fine-grained temporal interactions throughout the entire network. In particular, SGM divides the neighbors of each node into several temporal regions so as to extract global structural information with diverse sequential flows. Extensive experiments are performed on standard benchmark datasets, i.e., Something-Something V1 & V2, Diving48, Kinetics-400, UCF101, and HMDB51. The reported performance and analysis demonstrate that SGM can achieve outstanding precision with less computational complexity.

The cluster structure function

Jan 04, 2022

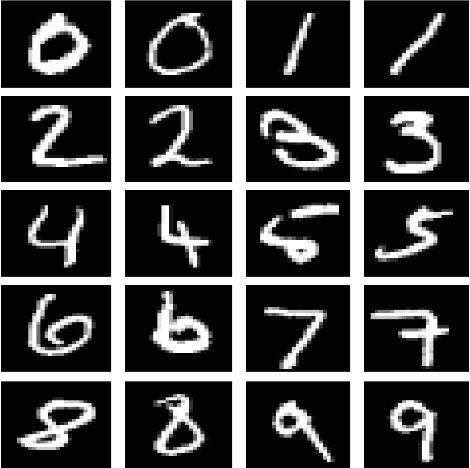

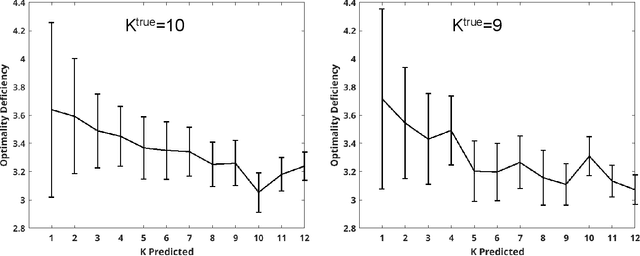

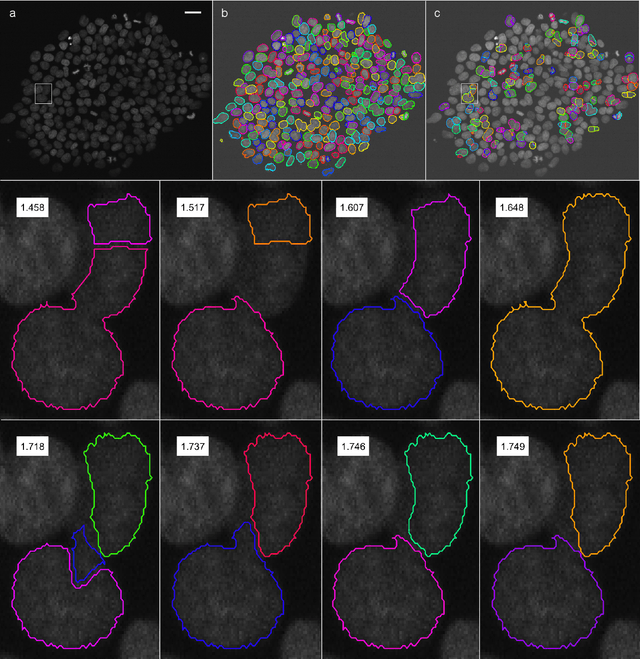

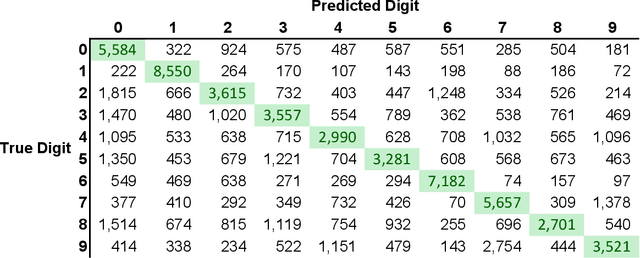

For each partition of a data set into a given number of parts there is a partition such that every part is as much as possible a good model (an "algorithmic sufficient statistic") for the data in that part. Since this can be done for every number between one and the number of data, the result is a function, the cluster structure function. It maps the number of parts of a partition to values related to the deficiencies of being good models by the parts. Such a function starts with a value at least zero for no partition of the data set and descends to zero for the partition of the data set into singleton parts. The optimal clustering is the one chosen to minimize the cluster structure function. The theory behind the method is expressed in algorithmic information theory (Kolmogorov complexity). In practice the Kolmogorov complexities involved are approximated by a concrete compressor. We give examples using real data sets: the MNIST handwritten digits and the segmentation of real cells as used in stem cell research.

Lifelong Dynamic Optimization for Self-Adaptive Systems: Fact or Fiction?

Jan 18, 2022

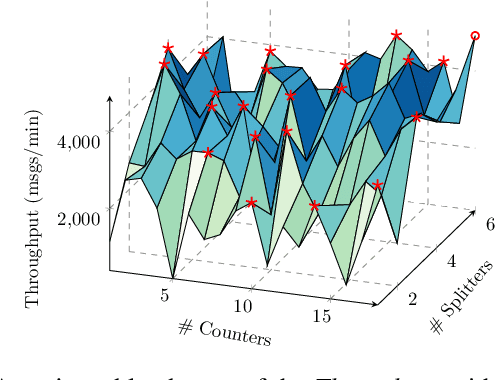

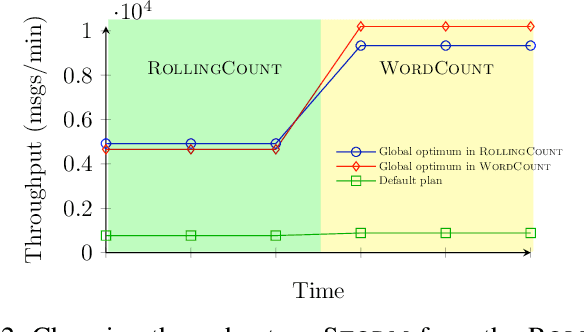

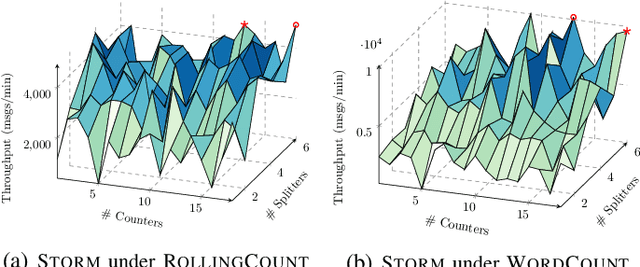

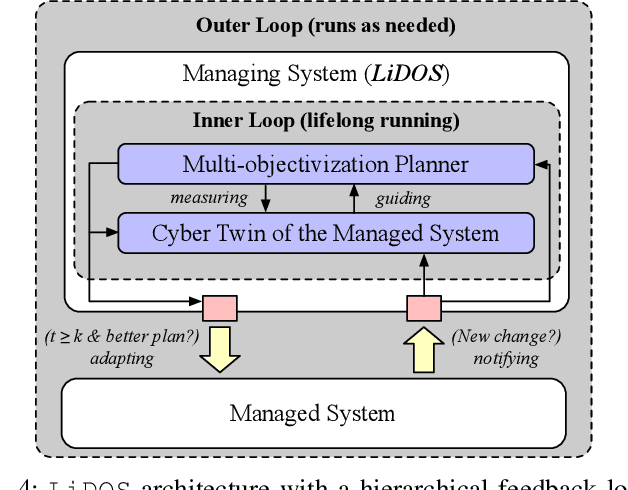

When faced with a changing environment, highly configurable software systems need to dynamically search for a promising adaptation plan that keeps the best possible performance, e.g., higher throughput or smaller latency -- a typical planning problem for self-adaptive systems (SASs). However, given the rugged and complex search landscape with multiple local optima, such SAS planning is challenging, especially in dynamic environments. In this paper, we propose LiDOS, a lifelong dynamic optimization framework for SAS planning. What makes LiDOS unique is that to handle the "dynamic", we formulate the SAS planning as a multi-modal optimization problem, aiming to preserve the useful information for better dealing with the local optima issue under dynamic environment changes. This differs from existing planners in that the "dynamic" is not explicitly handled during the search process in planning. As such, the search and planning in LiDOS run continuously over the lifetime of the SAS, terminating only when it is taken offline or the search space has been covered under an environment. Experimental results on three real-world SASs show that the concept of explicitly handling the "dynamic" as part of the search in SAS planning is effective, as LiDOS outperforms its stationary counterpart overall with up to 10x improvement. It also achieves better results in general over state-of-the-art planners, with a 1.4x to 10x speedup on generating promising adaptation plans.

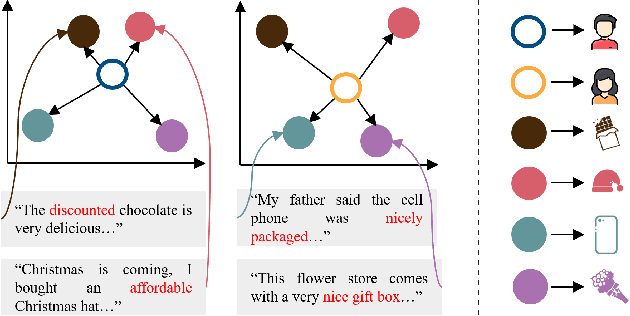

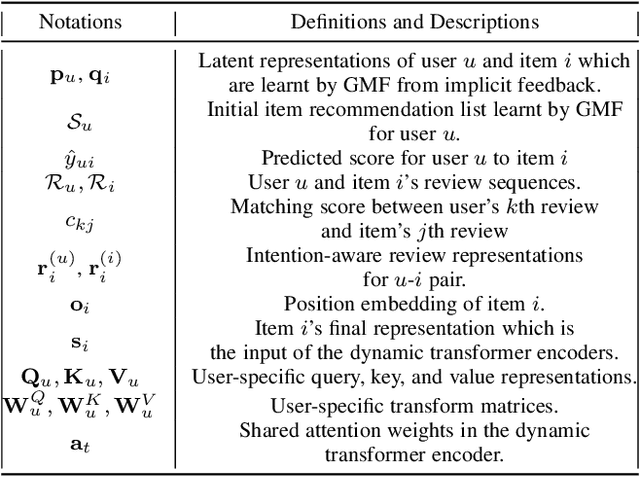

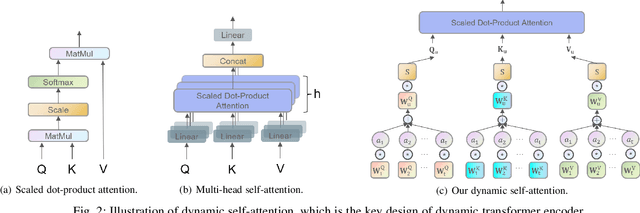

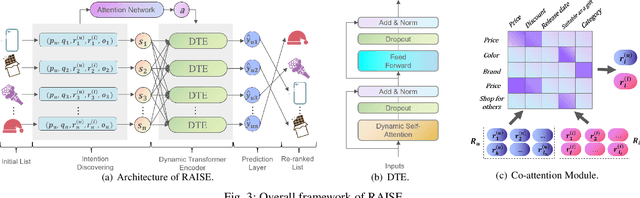

Attention over Self-attention: Intention-aware Re-ranking with Dynamic Transformer Encoders for Recommendation

Jan 14, 2022

Re-ranking models refine the item recommendation list generated by the prior global ranking model with intra-item relationships. However, most existing re-ranking solutions refine the recommendation list based on implicit feedback with a shared re-ranking model, which regrettably ignores the intra-item relationships under diverse user intentions. In this paper, we propose a novel Intention-aware Re-ranking Model with Dynamic Transformer Encoder (RAISE), aiming to perform user-specific prediction for each target user based on her intentions. Specifically, we first propose to mine latent user intentions from text reviews with an intention discovering module (IDM). By differentiating the importance of review information with a co-attention network, the latent user intention can be explicitly modeled for each user-item pair. We then introduce a dynamic transformer encoder (DTE) to capture user-specific intra-item relationships among item candidates by seamlessly accommodating the learnt latent user intentions via IDM. As such, RAISE is able to perform user-specific prediction without increasing the depth (number of blocks) and width (number of heads) of the prediction model. An empirical study on four public datasets shows the superiority of our proposed RAISE, with up to 13.95%, 12.30%, and 13.03% relative improvements evaluated by Precision, MAP, and NDCG respectively.
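For readers unfamiliar with the ranking metrics named above, the following sketch computes Precision@k and NDCG@k for a single ranked list under binary relevance. These are the common textbook definitions; the paper may use different cut-offs or graded relevance, so treat the function names and conventions here as assumptions.

```python
import math
from typing import Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k ranked items that are relevant."""
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / k


def ndcg_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Normalised discounted cumulative gain with binary relevance."""
    # Gain of 1 for each relevant item, discounted by log2(position + 1),
    # where positions are 1-based (hence i + 2 for a 0-based index i).
    dcg = sum(
        1.0 / math.log2(i + 2)
        for i, item in enumerate(ranked[:k])
        if item in relevant
    )
    # Ideal ordering puts all relevant items first.
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(i + 2) for i in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0


# Hypothetical re-ranked list for one user.
ranked_items = ["a", "b", "c"]
relevant_items = {"a", "c"}
print(precision_at_k(ranked_items, relevant_items, 3))
print(ndcg_at_k(ranked_items, relevant_items, 3))
```

A re-ranker that moves item `c` above `b` would raise NDCG to 1.0 while leaving Precision@3 unchanged, which is why position-sensitive metrics are reported alongside precision.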

Deep Task-Based Analog-to-Digital Conversion

Jan 29, 2022

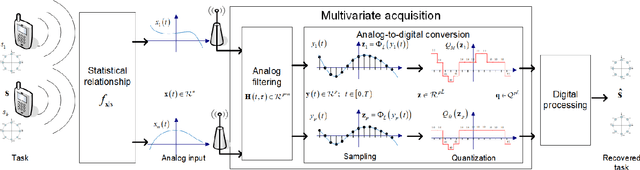

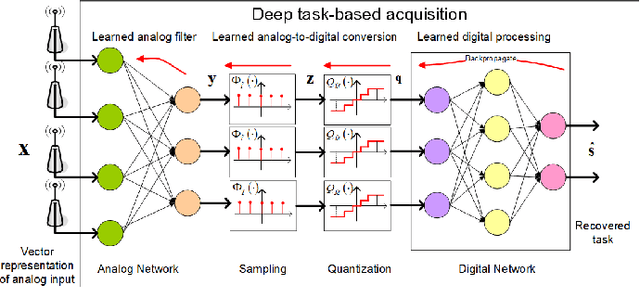

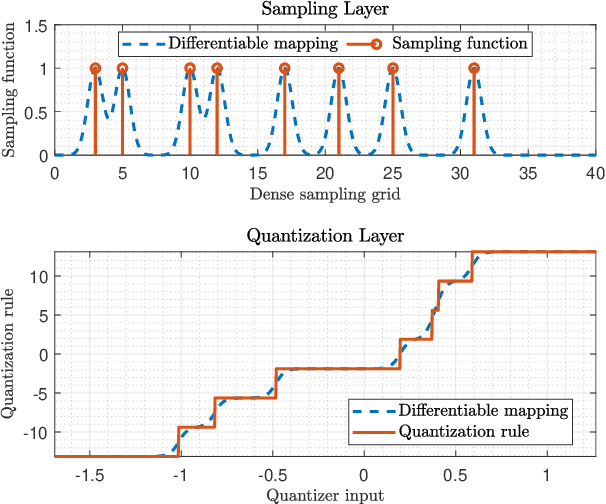

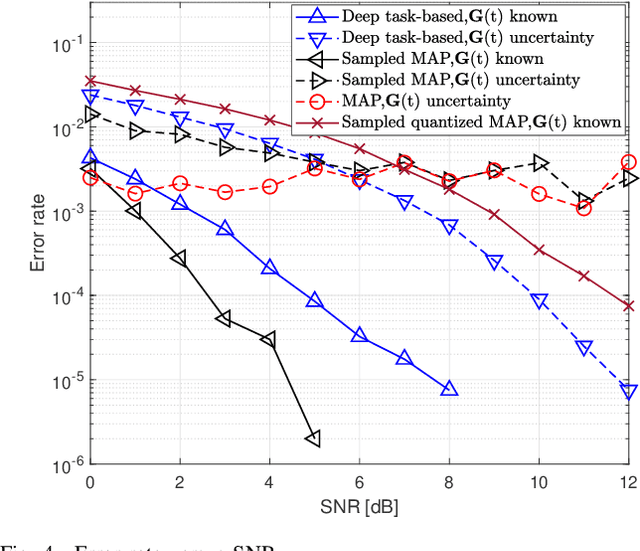

Analog-to-digital converters (ADCs) allow physical signals to be processed using digital hardware. Their conversion consists of two stages: sampling, which maps a continuous-time signal into discrete-time, and quantization, i.e., representing the continuous-amplitude quantities using a finite number of bits. ADCs typically implement generic uniform conversion mappings that are ignorant of the task for which the signal is acquired, and can be costly when operating at high rates and fine resolutions. In this work we design task-oriented ADCs which learn from data how to map an analog signal into a digital representation such that the system task can be efficiently carried out. We propose a model for sampling and quantization that facilitates the learning of non-uniform mappings from data. Based on this learnable ADC mapping, we present a mechanism for optimizing a hybrid acquisition system comprised of analog combining, tunable ADCs with fixed rates, and digital processing, by jointly learning its components end-to-end. Then, we show how one can exploit the representation of hybrid acquisition systems as a deep network to optimize the sampling rate and quantization rate given the task by utilizing Bayesian meta-learning techniques. We evaluate the proposed deep task-based ADC in two case studies: the first considers symbol detection in multi-antenna digital receivers, where multiple analog signals are simultaneously acquired in order to recover a set of discrete information symbols. The second application is the beamforming of analog channel data acquired in ultrasound imaging. Our numerical results demonstrate that the proposed approach achieves performance which is comparable to operating with high sampling rates and fine resolution quantization, while operating with reduced overall bit rate.
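The two conversion stages described above can be sketched for the generic uniform case, i.e., the task-agnostic baseline rather than the learned mapping the paper proposes. The function names, the mid-point reconstruction rule, and the default amplitude range of [-1, 1] are assumptions made for this illustration.

```python
import math
from typing import Callable, List


def sample(signal: Callable[[float], float], duration: float, rate: float) -> List[float]:
    """Sampling: evaluate a continuous-time signal at uniformly spaced instants."""
    n_samples = int(duration * rate)
    return [signal(i / rate) for i in range(n_samples)]


def uniform_quantize(x: float, bits: int, lo: float = -1.0, hi: float = 1.0) -> float:
    """Quantization: map an amplitude to the centre of one of 2**bits equal cells."""
    levels = 2 ** bits
    step = (hi - lo) / levels
    clipped = min(max(x, lo), hi)  # values outside [lo, hi] saturate
    index = min(int((clipped - lo) / step), levels - 1)
    return lo + (index + 0.5) * step


# A 1 Hz sine sampled at 8 Hz for one second, then quantized with 3 bits.
samples = sample(lambda t: math.sin(2 * math.pi * t), duration=1.0, rate=8.0)
digital = [uniform_quantize(s, bits=3) for s in samples]
print(digital)
```

The overall bit rate of such a converter is the sampling rate times the number of bits per sample (here 8 × 3 = 24 bits per second), which is the quantity a task-based design tries to reduce without hurting task performance.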

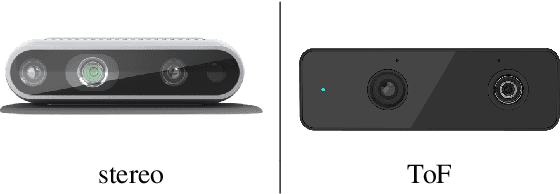

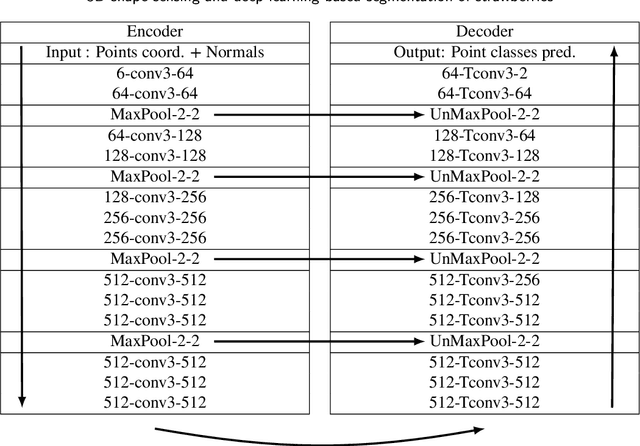

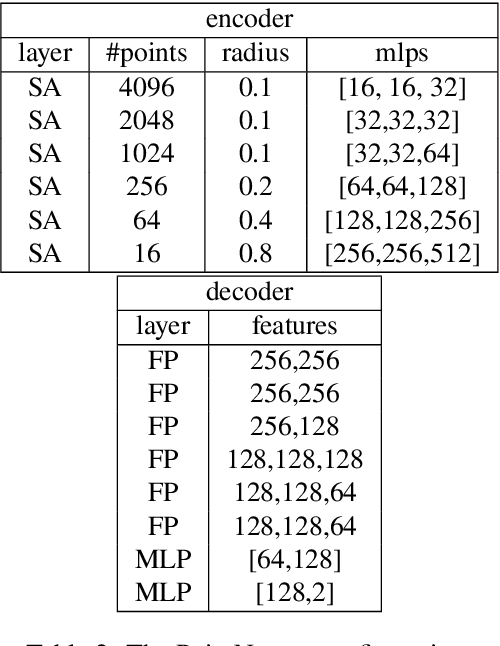

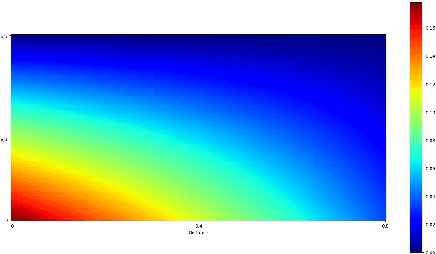

3D shape sensing and deep learning-based segmentation of strawberries

Nov 26, 2021

Automation and robotisation of the agricultural sector are seen as a viable solution to socio-economic challenges faced by this industry. This technology often relies on intelligent perception systems providing information about crops, plants and the entire environment. The challenges faced by traditional 2D vision systems can be addressed by modern 3D vision systems which enable straightforward localisation of objects, size and shape estimation, or handling of occlusions. So far, the use of 3D sensing was mainly limited to indoor or structured environments. In this paper, we evaluate modern sensing technologies including stereo and time-of-flight cameras for 3D perception of shape in agriculture and study their usability for segmenting out soft fruit from background based on their shape. To that end, we propose a novel 3D deep neural network which exploits the organised nature of information originating from the camera-based 3D sensors. We demonstrate the superior performance and efficiency of the proposed architecture compared to the state-of-the-art 3D networks. Through a simulated study, we also show the potential of the 3D sensing paradigm for object segmentation in agriculture and provide insights and analysis of what shape quality is needed and expected for further analysis of crops. The results of this work should encourage researchers and companies to develop more accurate and robust 3D sensing technologies to assure their wider adoption in practical agricultural applications.

* 14 pages, 13 figures, accepted to Computers and Electronics in Agriculture

Measuring Attribution in Natural Language Generation Models

Dec 23, 2021

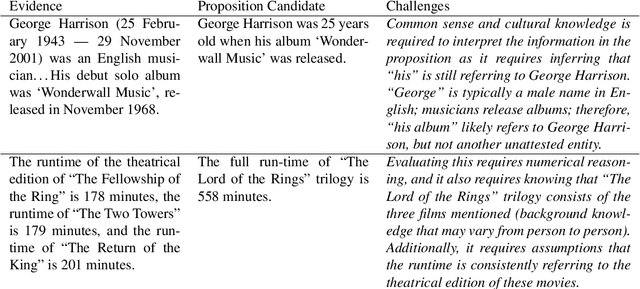

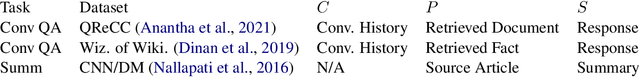

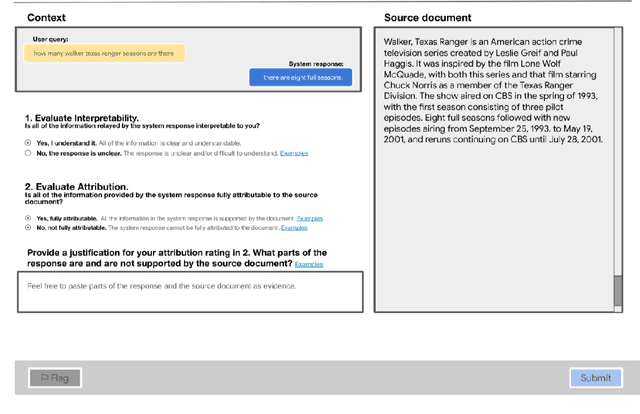

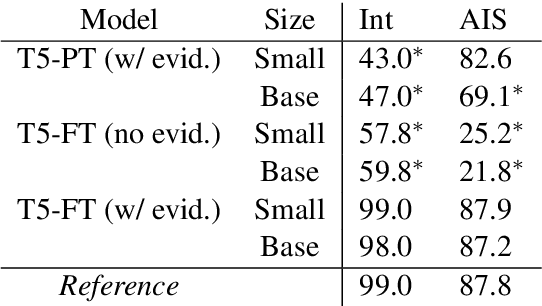

With recent improvements in natural language generation (NLG) models for various applications, it has become imperative to have the means to identify and evaluate whether NLG output is only sharing verifiable information about the external world. In this work, we present a new evaluation framework entitled Attributable to Identified Sources (AIS) for assessing the output of natural language generation models, when such output pertains to the external world. We first define AIS and introduce a two-stage annotation pipeline for allowing annotators to appropriately evaluate model output according to AIS guidelines. We empirically validate this approach on three generation datasets (two in the conversational QA domain and one in summarization) via human evaluation studies that suggest that AIS could serve as a common framework for measuring whether model-generated statements are supported by underlying sources. We release guidelines for the human evaluation studies.
