"Information": models, code, and papers

SciBERTSUM: Extractive Summarization for Scientific Documents

Jan 21, 2022

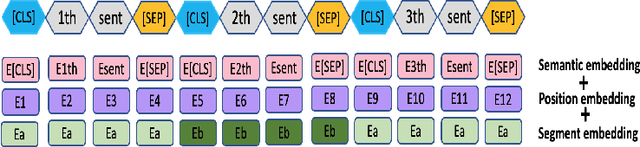

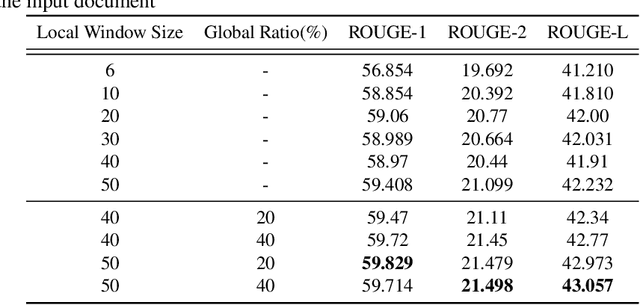

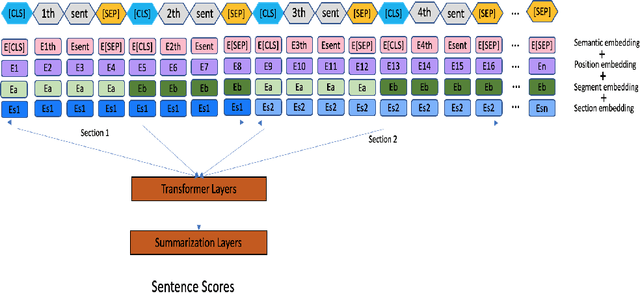

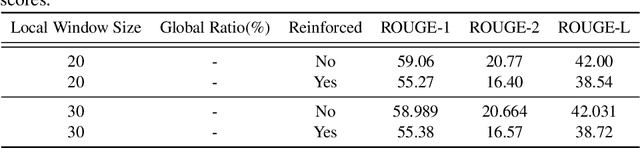

The summarization literature focuses on news articles. The news articles in the CNN-DailyMail dataset are relatively short, with about 30 sentences per document on average. We introduce SciBERTSUM, our summarization framework designed for long documents such as scientific papers with more than 500 sentences. SciBERTSUM extends BERTSUM to long documents by 1) adding a section embedding layer to include section information in the sentence vector and 2) applying a sparse attention mechanism in which each sentence attends locally to nearby sentences and only a small number of sentences attend globally to all other sentences. We used slides generated by the authors of scientific papers as reference summaries since they contain the technical details from the paper. The results show the superiority of our model in terms of ROUGE scores.
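The local-plus-global attention pattern described above can be pictured as a boolean mask over sentence pairs. The following is a minimal sketch, not the SciBERTSUM implementation: the function name, the `window` parameter, and the choice to make global sentences visible to every other sentence (in addition to attending to all of them) are assumptions made for illustration.

```python
def sparse_attention_mask(num_sentences, window, global_indices):
    """Build mask[i][j] == True when sentence i may attend to sentence j.

    Hypothetical helper: local attention within `window` positions, plus a
    few global sentences. Treating global sentences as visible to everyone
    is an assumption, not something stated in the abstract.
    """
    global_set = set(global_indices)
    mask = [[False] * num_sentences for _ in range(num_sentences)]
    for i in range(num_sentences):
        for j in range(num_sentences):
            is_local = abs(i - j) <= window
            is_global = i in global_set or j in global_set
            mask[i][j] = is_local or is_global
    return mask


mask = sparse_attention_mask(num_sentences=8, window=1, global_indices=[0])
allowed_pairs = sum(sum(row) for row in mask)
dense_pairs = 8 * 8
```

With a fixed window and a fixed number of global sentences, the number of allowed pairs grows linearly with document length rather than quadratically, which is the motivation for sparse attention on long documents.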

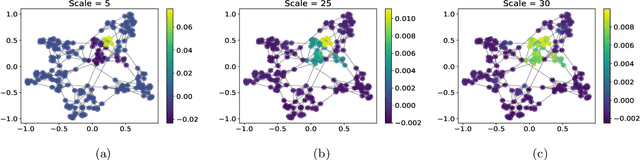

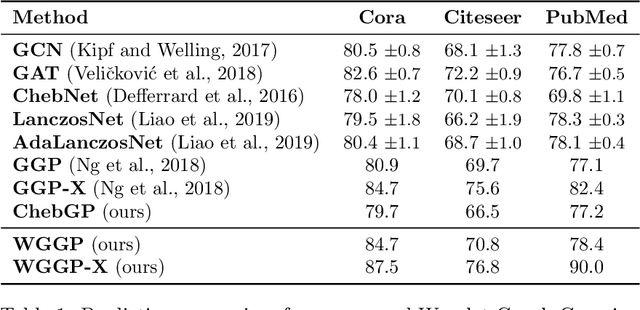

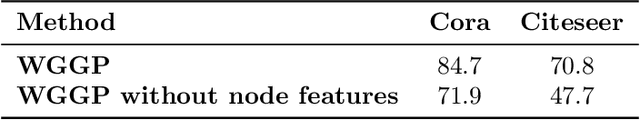

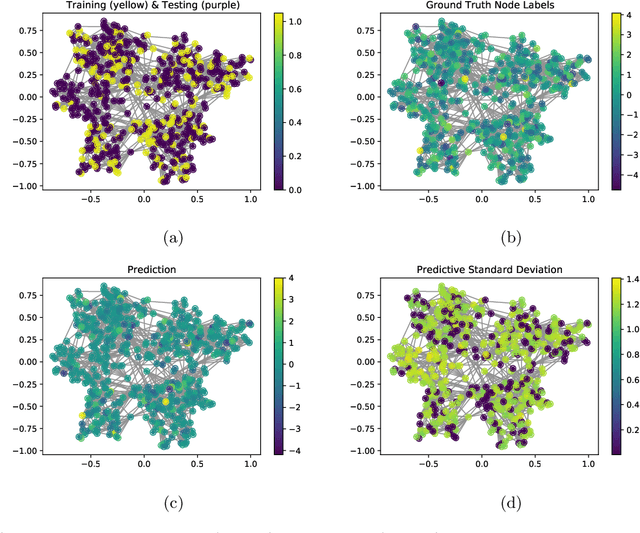

Adaptive Gaussian Processes on Graphs via Spectral Graph Wavelets

Oct 25, 2021

Graph-based models require aggregating information in the graph from neighbourhoods of different sizes. In particular, when the data exhibit varying levels of smoothness on the graph, a multi-scale approach is required to capture the relevant information. In this work, we propose a Gaussian process model using spectral graph wavelets, which can naturally aggregate neighbourhood information at different scales. Through maximum likelihood optimisation of the model hyperparameters, the wavelets automatically adapt to the different frequencies in the data, and as a result our model goes beyond capturing low frequency information. We achieve scalability to larger graphs by using a spectrum-adaptive polynomial approximation of the filter function, which is designed to yield a low approximation error in dense areas of the graph spectrum. Synthetic and real-world experiments demonstrate the ability of our model to infer scales accurately and produce competitive performances against state-of-the-art models in graph-based learning tasks.
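To illustrate why a polynomial approximation of the filter function scales to larger graphs, the sketch below applies a polynomial in the graph Laplacian using only matrix-vector products, with no eigendecomposition. It is a sketch under stated assumptions: the path graph, the helper names, and the truncated Taylor coefficients of exp(-s·λ) are illustrative stand-ins, and the spectrum-adaptive fitting of the coefficients described in the abstract is not reproduced here.

```python
import math


def path_graph_laplacian(n):
    """Combinatorial Laplacian L = D - A of an unweighted path graph (illustrative)."""
    lap = [[0.0] * n for _ in range(n)]
    for i in range(n - 1):
        lap[i][i] += 1.0
        lap[i + 1][i + 1] += 1.0
        lap[i][i + 1] -= 1.0
        lap[i + 1][i] -= 1.0
    return lap


def matvec(matrix, vector):
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def apply_polynomial_filter(lap, signal, coefficients):
    """Compute sum_k coefficients[k] * L^k * signal with repeated matvecs."""
    result = [0.0] * len(signal)
    power = list(signal)  # holds L^k * signal, starting at k = 0
    for c in coefficients:
        result = [r + c * p for r, p in zip(result, power)]
        power = matvec(lap, power)
    return result


scale = 0.5
# Degree-2 Taylor coefficients of exp(-scale * lambda); an assumed stand-in
# for the paper's spectrum-adaptive polynomial.
coefficients = [(-scale) ** k / math.factorial(k) for k in range(3)]
laplacian = path_graph_laplacian(5)
impulse = [1.0, 0.0, 0.0, 0.0, 0.0]
filtered = apply_polynomial_filter(laplacian, impulse, coefficients)
```

A degree-K polynomial of the Laplacian only mixes information within K hops, so an impulse at the first node leaves nodes more than two hops away untouched in this example. Varying the scale and degree changes the neighbourhood size over which information is aggregated.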

Visual attention analysis of pathologists examining whole slide images of Prostate cancer

Feb 17, 2022

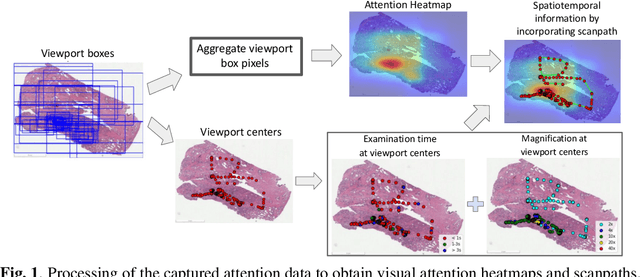

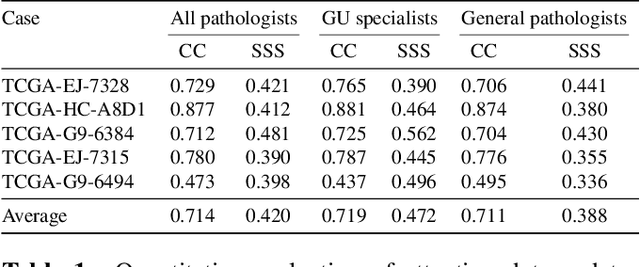

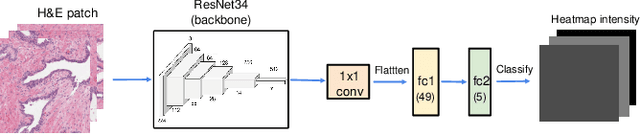

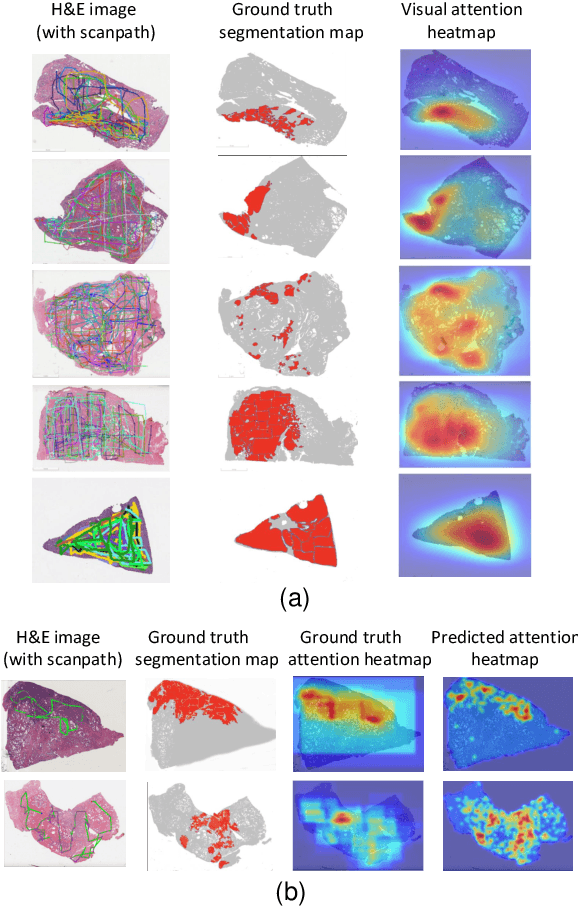

We study the attention of pathologists as they examine whole-slide images (WSIs) of prostate cancer tissue using a digital microscope. To the best of our knowledge, our study is the first to report in detail how pathologists navigate WSIs of prostate cancer as they accumulate information for their diagnoses. We collected slide navigation data (i.e., viewport location, magnification level, and time) from 13 pathologists in 2 groups (5 genitourinary (GU) specialists and 8 general pathologists) and generated visual attention heatmaps and scanpaths. Each pathologist examined five WSIs from the TCGA PRAD dataset, which were selected by a GU pathology specialist. We examined and analyzed the distributions of visual attention for each group of pathologists after each WSI was examined. To quantify the relationship between a pathologist's attention and evidence for cancer in the WSI, we obtained tumor annotations from a genitourinary specialist. We used these annotations to compute the overlap between the distribution of visual attention and annotated tumor region to identify strong correlations. Motivated by this analysis, we trained a deep learning model to predict visual attention on unseen WSIs. We find that the attention heatmaps predicted by our model correlate quite well with the ground truth attention heatmap and tumor annotations on a test set of 17 WSIs by using various spatial and temporal evaluation metrics.

Pattern Recognition and Event Detection on IoT Data-streams

Mar 02, 2022

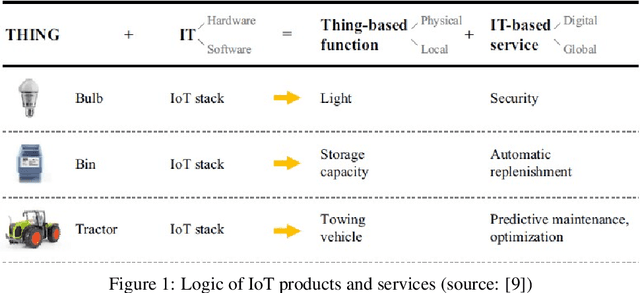

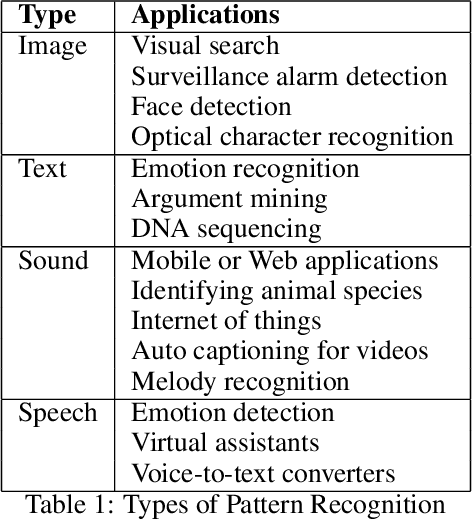

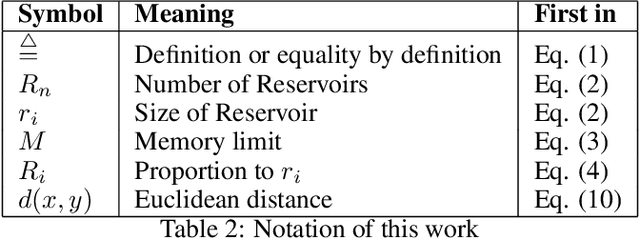

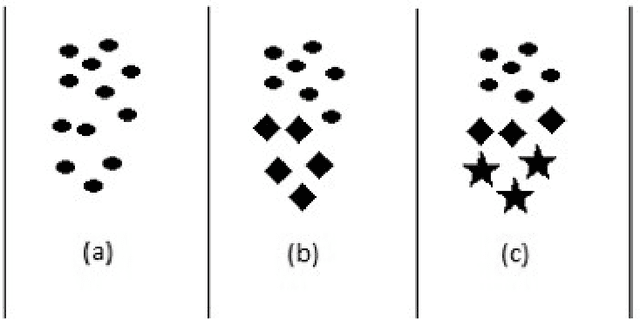

Big data streams are possibly one of the most essential underlying notions. However, data streams are often challenging to handle owing to their rapid pace and limited information lifetime. It is difficult to collect and communicate stream samples while storing, transmitting and computing a function across the whole stream or even a large segment of it. In answer to this research issue, many streaming-specific solutions have been developed. Stream techniques assume a limited capacity of one or more resources, such as computing power and memory, as well as time or accuracy limits. Reservoir sampling algorithms choose and store results that are probabilistically significant. The key research goal of this work is a weighted random sampling approach that uses a generalised sampling algorithmic framework to detect unique events. Briefly, a gradually developed estimate of the joint stream distribution across all feasible components keeps k stream elements judged representative of the full stream. Once estimate confidence is high, k samples are chosen evenly. The complexity is O(min(k,n-k)), where n is the number of items inspected. Because events are usually considered outliers, it is sufficient to extract element patterns and push them to an alternate version of k-means as proposed here. The suggested technique calculates the sum of squared errors (SSE) for each cluster, and this is used not only as a measure of convergence, but also as a quantification and an indirect assessment of the approximation accuracy of the element distribution. This clustering enables the detection of outliers in the stream based on their distance from the usual event centroids. The findings reveal that weighted sampling and res-means outperform typical approaches for stream event identification. Detected events are shown as knowledge graphs, along with typical clusters of events.
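For readers unfamiliar with reservoir sampling, here is a minimal sketch of the classic uniform variant (Algorithm R), followed by an SSE computation and a distance-based outlier check on one-dimensional values. It is not the weighted sampling scheme or the res-means clustering from the abstract: the function names, the synthetic stream, and the fixed distance threshold are all assumptions made for illustration.

```python
import random


def reservoir_sample(stream, k, rng):
    """Keep a uniform random sample of k items from a stream of unknown length."""
    reservoir = []
    for n, item in enumerate(stream):
        if n < k:
            reservoir.append(item)
        else:
            j = rng.randint(0, n)  # inclusive on both ends
            if j < k:
                reservoir[j] = item
    return reservoir


def sum_squared_error(values, centroid):
    """SSE of one cluster of scalar values around its centroid."""
    return sum((v - centroid) ** 2 for v in values)


def flag_outliers(values, centroid, threshold):
    """Return values whose distance from the centroid exceeds the threshold."""
    return [v for v in values if abs(v - centroid) > threshold]


rng = random.Random(0)
# Synthetic stream (assumption): readings near 10 with one injected event.
stream = [10.0 + 0.1 * (i % 5) for i in range(1000)]
stream[500] = 50.0

sample = reservoir_sample(stream, k=20, rng=rng)
centroid = sum(sample) / len(sample)
sse = sum_squared_error(sample, centroid)
events = flag_outliers(stream, centroid, threshold=5.0)
```

The reservoir uses memory proportional to k regardless of stream length, and the centroid estimated from the sample is then enough to flag the injected event by its distance from typical values.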

vol2Brain: A new online Pipeline for whole Brain MRI analysis

Feb 08, 2022

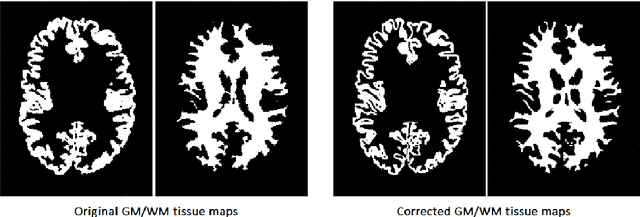

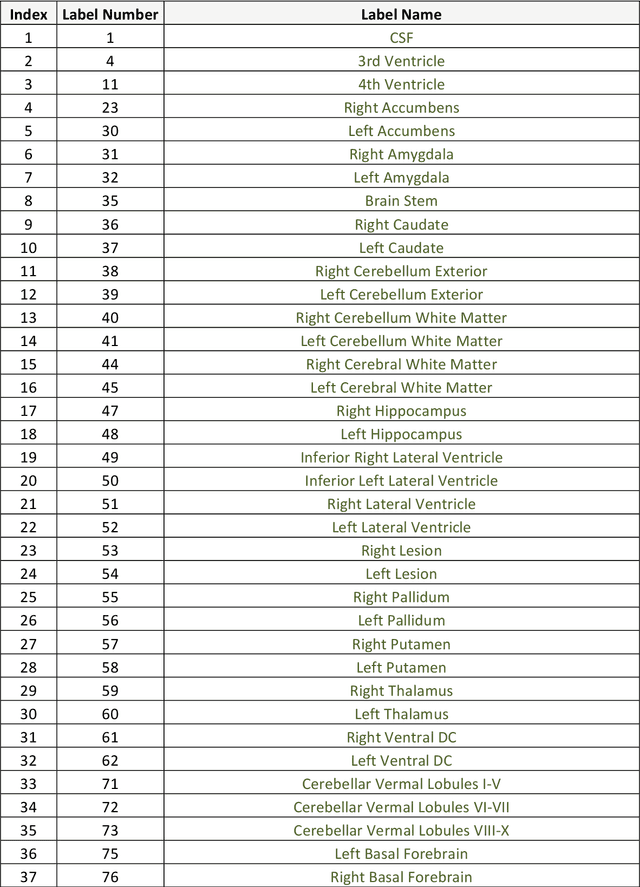

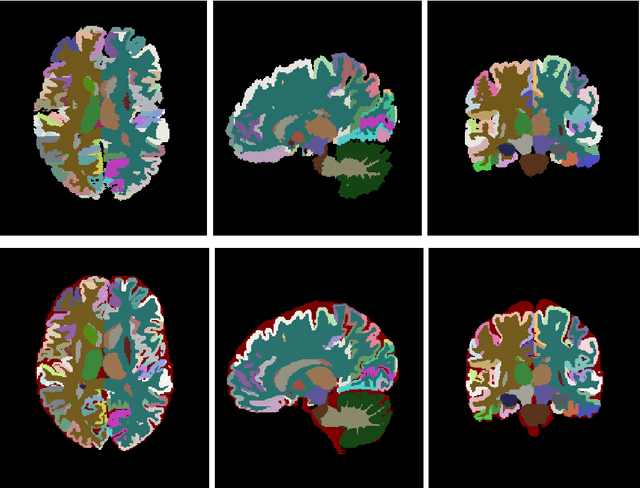

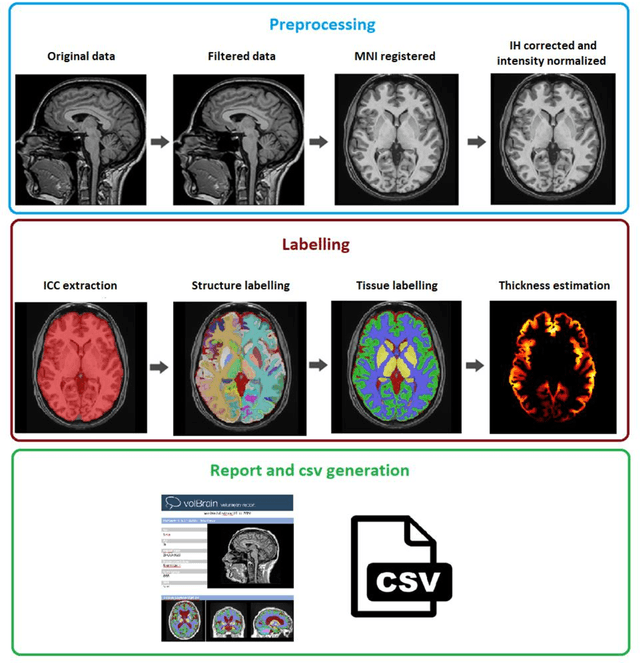

Automatic and reliable quantitative tools for MR brain image analysis are very valuable resources for both clinical and research environments. In recent years, this field has experienced many advances, with successful techniques based on label fusion and, more recently, deep learning. However, few of them have been specifically designed to provide a dense anatomical labelling at the multiscale level and to deal with brain anatomical alterations such as white matter lesions. In this work, we present a fully automatic pipeline (vol2Brain) for whole brain segmentation and analysis which densely labels (N>100) the brain while being robust to the presence of white matter lesions. This new pipeline is an evolution of our previous volBrain pipeline that significantly extends the number of regions that can be analyzed. Our proposed method is based on a fast multiscale multi-atlas label fusion technology with systematic error correction, able to provide accurate volumetric information in a few minutes. We have deployed our new pipeline within our platform volBrain (www.volbrain.upv.es), which has already been demonstrated to be an efficient and effective way to share our technology with users worldwide.

Unsupervised Time-Series Representation Learning with Iterative Bilinear Temporal-Spectral Fusion

Feb 08, 2022

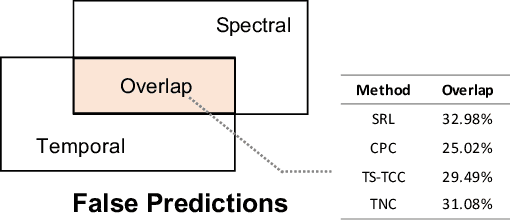

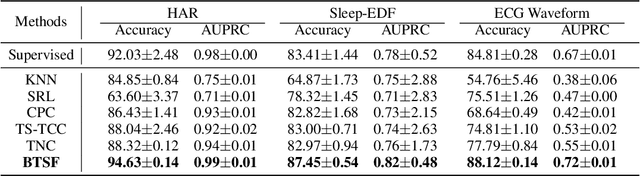

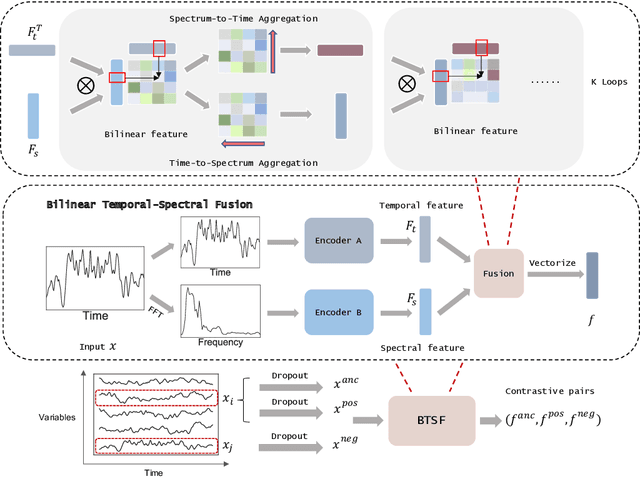

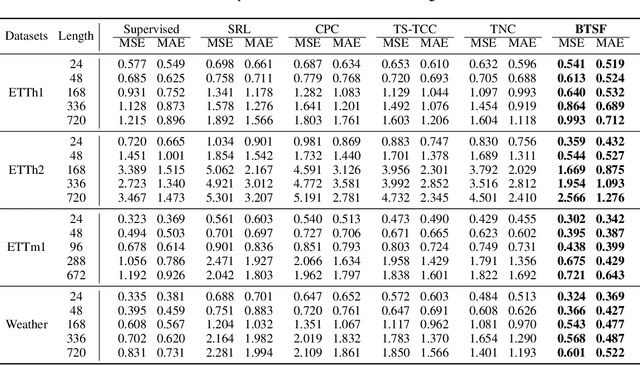

Unsupervised/self-supervised time series representation learning is a challenging problem because of the complex dynamics and sparse annotations of time series. Existing works mainly adopt the framework of contrastive learning with time-based augmentation techniques to sample positives and negatives for contrastive training. Nevertheless, they mostly use segment-level augmentation derived from time slicing, which may bring about sampling bias and incorrect optimization with false negatives due to the loss of global context. Besides, none of them incorporates spectral information in the feature representation. In this paper, we propose a unified framework, namely Bilinear Temporal-Spectral Fusion (BTSF). Specifically, we first utilize instance-level augmentation with a simple dropout on the entire time series to maximally capture long-term dependencies. We devise a novel iterative bilinear temporal-spectral fusion to explicitly encode the affinities of abundant time-frequency pairs, and iteratively refine representations in a fusion-and-squeeze manner with Spectrum-to-Time (S2T) and Time-to-Spectrum (T2S) Aggregation modules. We conduct downstream evaluations on three major tasks for time series: classification, forecasting and anomaly detection. Experimental results show that our BTSF consistently and significantly outperforms the state-of-the-art methods.
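The ingredients named above (dropout over the whole series, a spectral view, and a bilinear combination of temporal and spectral features) can be sketched in a few lines. This is an illustrative sketch only: BTSF uses learned encoders and iterative S2T/T2S modules, whereas here raw samples and DFT magnitudes stand in for the features, dropout is a plain unscaled mask, and all function names are hypothetical.

```python
import cmath
import math
import random


def dropout_view(series, rate, rng):
    """Instance-level augmentation: zero each sample of the whole series with probability `rate`."""
    return [0.0 if rng.random() < rate else x for x in series]


def dft_magnitudes(series):
    """Magnitudes of the non-negative-frequency DFT bins (naive O(n^2) transform)."""
    n = len(series)
    return [
        abs(sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(series)))
        for k in range(n // 2 + 1)
    ]


def bilinear_fusion(temporal, spectral):
    """Outer product: one interaction term per (time feature, frequency feature) pair."""
    return [[t * s for s in spectral] for t in temporal]


# A cosine completing two cycles over eight samples (synthetic input).
series = [math.cos(2 * math.pi * 2 * t / 8) for t in range(8)]
rng = random.Random(0)
view_a = dropout_view(series, rate=0.1, rng=rng)
view_b = dropout_view(series, rate=0.1, rng=rng)

spectrum = dft_magnitudes(series)
fused = bilinear_fusion(series, spectrum)
```

Because both augmented views are derived from the entire series rather than from a time slice, each retains the global context; the outer product then exposes every time-frequency pairing to later layers.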

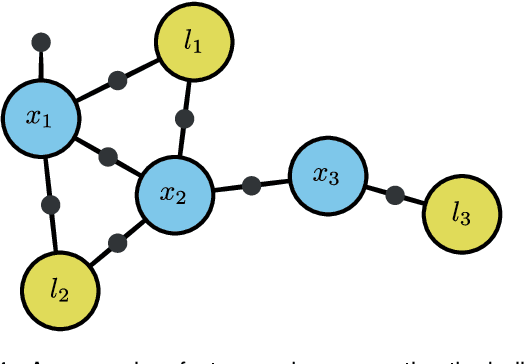

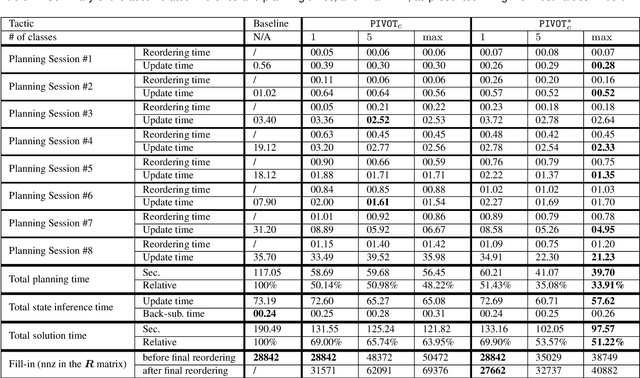

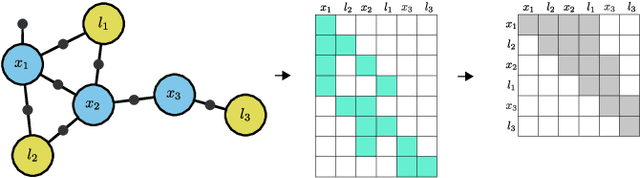

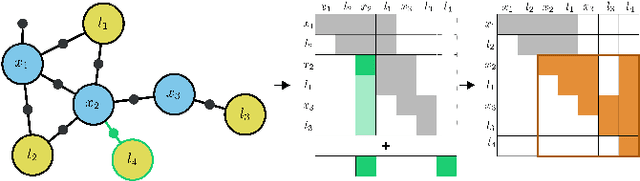

Efficient Belief Space Planning in High-Dimensional State Spaces using PIVOT: Predictive Incremental Variable Ordering Tactic

Dec 29, 2021

In this work, we examine the problem of online decision making under uncertainty, which we formulate as planning in the belief space. Maintaining beliefs (i.e., distributions) over high-dimensional states (e.g., entire trajectories) has not only been shown to significantly improve accuracy, but also allows planning with information-theoretic objectives, as required for the tasks of active SLAM and information gathering. Nonetheless, planning under this "smoothing" paradigm has a high computational complexity, which makes it challenging to solve online. Thus, we suggest the following idea: before planning, perform a standalone state variable reordering procedure on the initial belief, and "push forward" all the predicted loop closing variables. Since the initial variable order determines which subset of the variables would be affected by incoming updates, such reordering allows us to minimize the total number of affected variables and reduce the computational complexity of candidate evaluation during planning. We call this approach PIVOT: Predictive Incremental Variable Ordering Tactic. Applying this tactic can also improve the state inference efficiency; if we maintain the PIVOT order after the planning session, then we should similarly reduce the cost of loop closures when they actually occur. To demonstrate its effectiveness, we applied PIVOT in a realistic active SLAM simulation, where we managed to significantly reduce the computation time of both the planning and inference sessions. The approach is applicable to general distributions, and induces no loss in accuracy.

Interpolation-based Contrastive Learning for Few-Label Semi-Supervised Learning

Feb 24, 2022

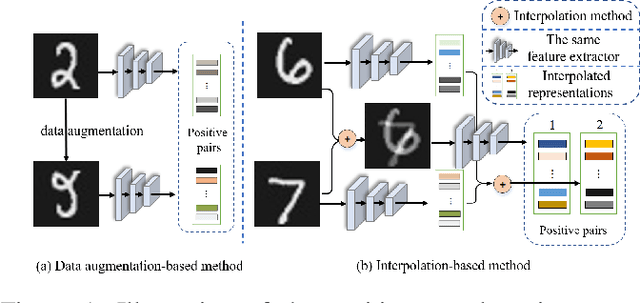

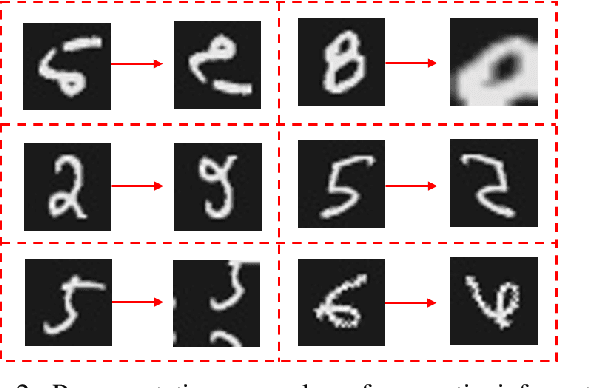

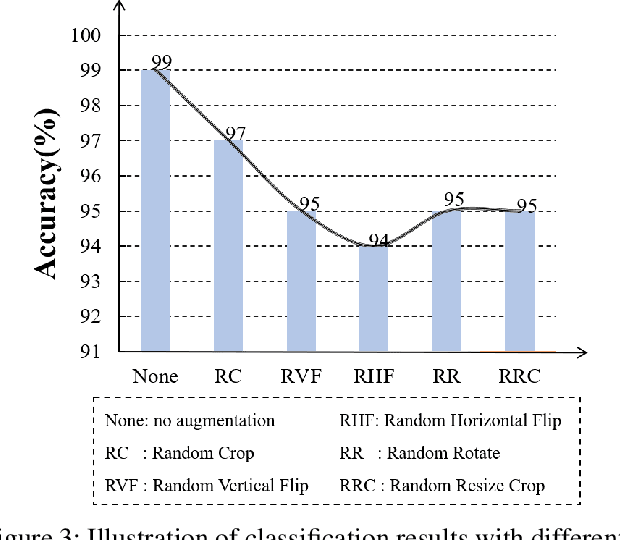

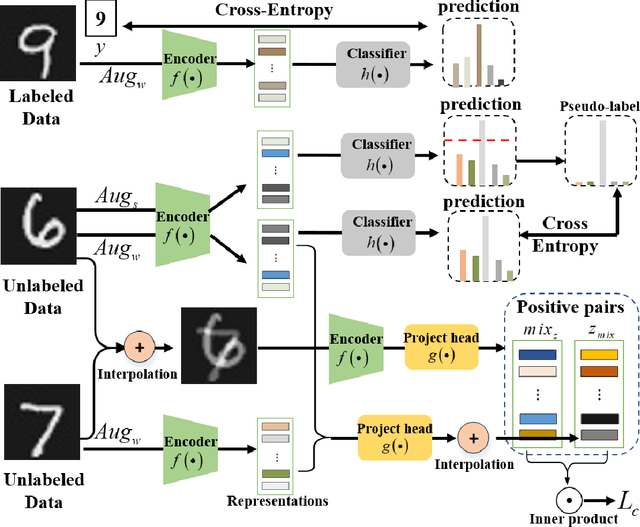

Semi-supervised learning (SSL) has long been proved to be an effective technique for constructing powerful models with limited labels. In the existing literature, consistency regularization-based methods, which force the perturbed samples to have predictions similar to those of the original ones, have attracted much attention for their promising accuracy. However, we observe that the performance of such methods decreases drastically when the labels become extremely limited, e.g., 2 or 3 labels for each category. Our empirical study finds that the main problem lies in the drifting of semantic information during data augmentation. The problem can be alleviated when enough supervision is provided. However, when little guidance is available, the incorrect regularization would mislead the network and undermine the performance of the algorithm. To tackle the problem, we (1) propose an interpolation-based method to construct more reliable positive sample pairs; (2) design a novel contrastive loss to guide the embedding of the learned network to change linearly between samples so as to improve the discriminative capability of the network by enlarging the margin of the decision boundaries. Since no destructive regularization is introduced, the performance of our proposed algorithm is largely improved. Specifically, the proposed algorithm outperforms the second-best algorithm (Comatch) by 5.3%, achieving 88.73% classification accuracy when only two labels are available for each class on the CIFAR-10 dataset. Moreover, we further demonstrate the generality of the proposed method by considerably improving the performance of existing state-of-the-art algorithms with our proposed strategy.
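The idea of encouraging embeddings to "change linearly between samples" can be made concrete with a small sketch. It measures how far the embedding of an interpolated input is from the interpolation of the two embeddings. This is an assumed, simplified squared-error proxy, not the contrastive loss proposed in the paper, and the toy embedding functions and names are hypothetical.

```python
def interpolate(a, b, lam):
    """Convex combination lam * a + (1 - lam) * b of two equal-length vectors."""
    return [lam * x + (1.0 - lam) * y for x, y in zip(a, b)]


def linearity_gap(embed, x1, x2, lam):
    """Squared distance between embed(mix(x1, x2)) and mix(embed(x1), embed(x2))."""
    mixed_embedding = embed(interpolate(x1, x2, lam))
    target = interpolate(embed(x1), embed(x2), lam)
    return sum((m - t) ** 2 for m, t in zip(mixed_embedding, target))


def linear_embed(v):
    # Toy embedding that is exactly linear, so the gap should vanish.
    return [2.0 * v[0] + v[1], v[0] - v[1]]


def squared_embed(v):
    # Toy nonlinear embedding, so the gap is positive.
    return [v[0] ** 2, v[1] ** 2]


x1, x2 = [1.0, 0.0], [0.0, 1.0]
gap_linear = linearity_gap(linear_embed, x1, x2, lam=0.5)
gap_nonlinear = linearity_gap(squared_embed, x1, x2, lam=0.5)
```

A training objective built on this quantity would push the gap towards zero, so that the interpolated sample forms a positive pair with a target that needs no label and involves no strong augmentation.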

Predictive Beamforming for Integrated Sensing and Communication in Vehicular Networks: A Deep Learning Approach

Feb 08, 2022

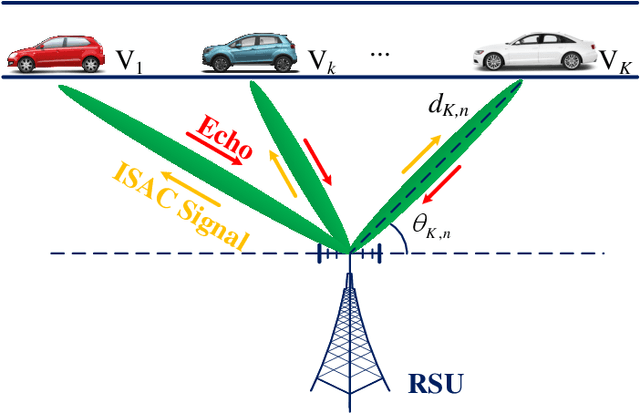

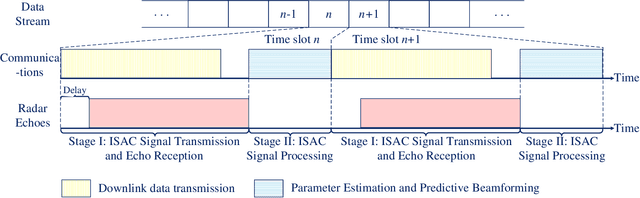

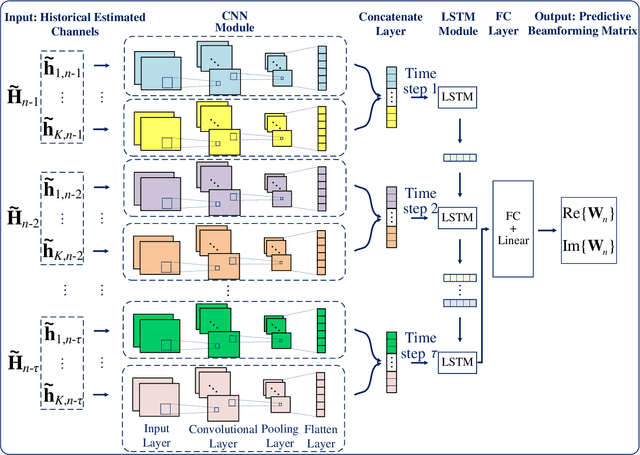

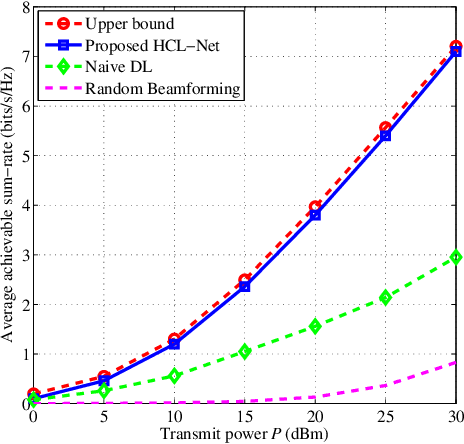

The implementation of integrated sensing and communication (ISAC) depends heavily on effective beamforming design that exploits accurate instantaneous channel state information (ICSI). However, channel tracking in ISAC requires a large amount of training overhead and prohibitively large computational complexity. To address this problem, in this paper, we focus on ISAC-assisted vehicular networks and exploit a deep learning approach to implicitly learn the features of historical channels and directly predict the beamforming matrix for the next time slot to maximize the average achievable sum-rate of the system, thus bypassing the need for explicit channel tracking and reducing the system signaling overhead. To this end, a general sum-rate maximization problem with Cramer-Rao lower bounds-based sensing constraints is first formulated for the considered ISAC system. Then, a historical channels-based convolutional long short-term memory network is designed for predictive beamforming that can exploit the spatial and temporal dependencies of communication channels to further improve the learning performance. Finally, simulation results show that the proposed method can satisfy the requirement of sensing performance, while its achievable sum-rate can approach the upper bound obtained by a genie-aided scheme with perfect ICSI available.

Towards Coherent and Consistent Use of Entities in Narrative Generation

Feb 03, 2022

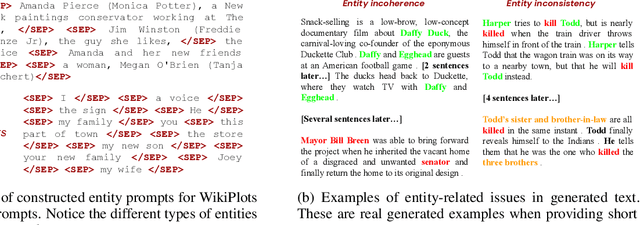

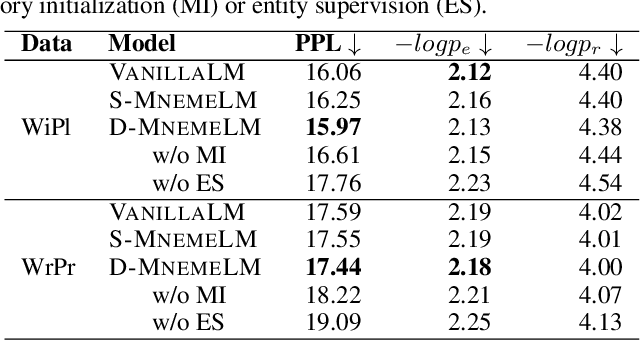

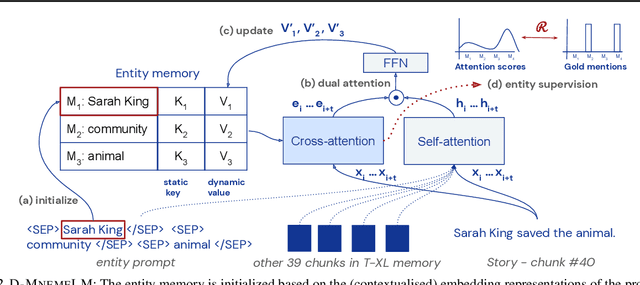

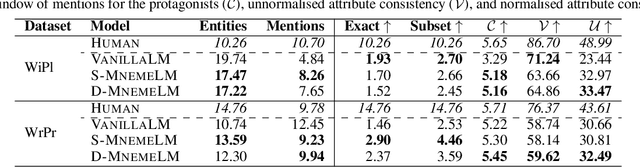

Large pre-trained language models (LMs) have demonstrated impressive capabilities in generating long, fluent text; however, there is little to no analysis of their ability to maintain entity coherence and consistency. In this work, we focus on the end task of narrative generation and systematically analyse the long-range entity coherence and consistency in generated stories. First, we propose a set of automatic metrics for measuring model performance in terms of entity usage. Given these metrics, we quantify the limitations of current LMs. Next, we propose augmenting a pre-trained LM with a dynamic entity memory in an end-to-end manner by using an auxiliary entity-related loss for guiding the reads and writes to the memory. We demonstrate that the dynamic entity memory increases entity coherence according to both automatic and human judgment and helps preserve entity-related information, especially in settings with a limited context window. Finally, we also validate that our automatic metrics are correlated with human ratings and serve as a good indicator of the quality of generated stories.
