"Information": models, code, and papers

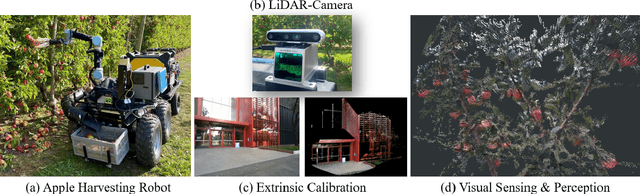

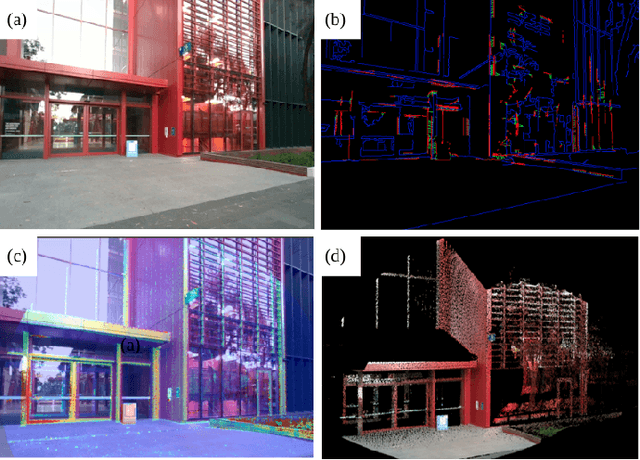

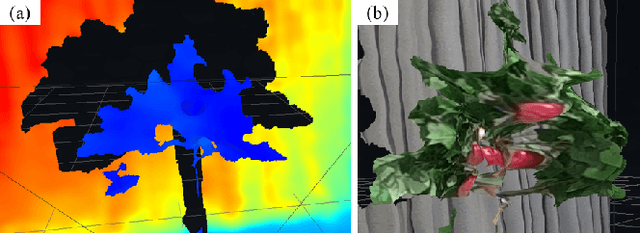

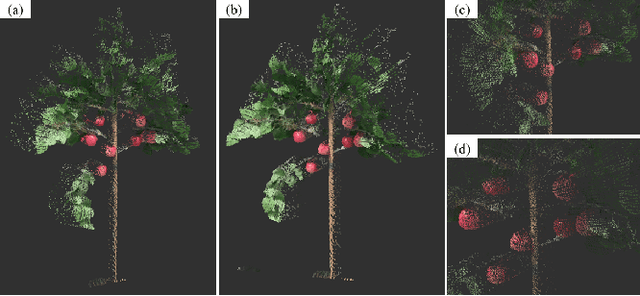

Accurate Fruit Localisation for Robotic Harvesting using High Resolution LiDAR-Camera Fusion

May 01, 2022

Accurate depth-sensing plays a crucial role in securing a high success rate of robotic harvesting in natural orchard environments. Solid-state LiDAR (SSL), a recently introduced LiDAR technique, can perceive high-resolution geometric information of the scenes, which can potentially be utilised to obtain accurate depth information. Meanwhile, the fusion of the sensory information from LiDAR and camera can significantly enhance the sensing ability of the harvesting robots. This work introduces a LiDAR-camera fusion-based visual sensing and perception strategy to perform accurate fruit localisation for a harvesting robot in apple orchards. Two SOTA extrinsic calibration methods, target-based and targetless, are applied and evaluated to obtain an accurate extrinsic matrix between the LiDAR and camera. With the extrinsic calibration, the point clouds and color images are fused to perform fruit localisation using a one-stage instance segmentation network. Experiments show that LiDAR-camera fusion achieves better quality on visual sensing in natural environments. Meanwhile, introducing the LiDAR-camera fusion largely improves the accuracy and robustness of the fruit localisation. Specifically, the standard deviations of fruit localisation using LiDAR-camera fusion at 0.5 m, 1.2 m, and 1.8 m are 0.245, 0.227, and 0.275 cm respectively. This measurement error is only one fifth of that from the Realsense D455. Lastly, we have attached our visualised point cloud to demonstrate the highly accurate sensing method.
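To make the role of the extrinsic matrix concrete, here is a minimal sketch of how LiDAR points could be projected into a camera image once calibration is available. It is not the authors' pipeline: the function name `project_lidar_to_image`, the pinhole intrinsics `K`, and the example values are all assumptions chosen for illustration, and lens distortion is ignored.

```python
import numpy as np

def project_lidar_to_image(points_lidar, T_cam_lidar, K):
    """Project N x 3 LiDAR points into pixel coordinates (hypothetical helper).

    T_cam_lidar: 4 x 4 extrinsic matrix mapping LiDAR frame to camera frame.
    K:           3 x 3 pinhole intrinsic matrix (no distortion modelled).
    Returns pixel coordinates and depths for points in front of the camera,
    plus the boolean mask that selects those points from the input.
    """
    n = points_lidar.shape[0]
    homog = np.hstack([points_lidar, np.ones((n, 1))])   # N x 4 homogeneous
    points_cam = (T_cam_lidar @ homog.T).T[:, :3]        # into camera frame
    in_front = points_cam[:, 2] > 0                      # drop points behind camera
    points_cam = points_cam[in_front]
    uvw = (K @ points_cam.T).T                           # perspective projection
    pixels = uvw[:, :2] / uvw[:, 2:3]
    return pixels, points_cam[:, 2], in_front

# Illustrative values only: identity extrinsics and a made-up 640 x 480 camera.
K = np.array([[600.0, 0.0, 320.0],
              [0.0, 600.0, 240.0],
              [0.0, 0.0, 1.0]])
T = np.eye(4)
pts = np.array([[0.0, 0.0, 2.0],
                [0.1, 0.0, 2.0],
                [0.0, 0.0, -1.0]])
pixels, depths, mask = project_lidar_to_image(pts, T, K)
print(pixels)  # [[320. 240.] [350. 240.]]
```

With the projected pixels in hand, each depth value can be looked up under an instance mask to estimate a fruit's 3D position; how the paper aggregates depths per fruit is not specified in the abstract.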

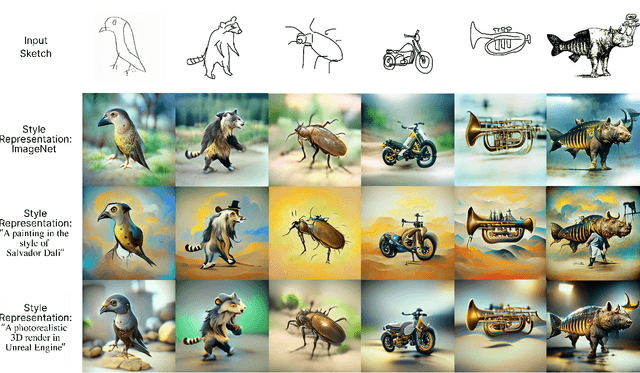

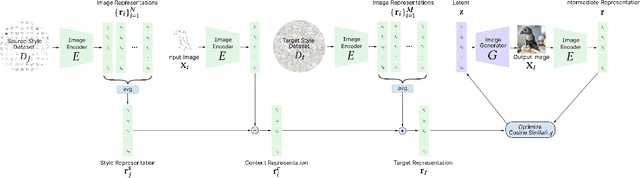

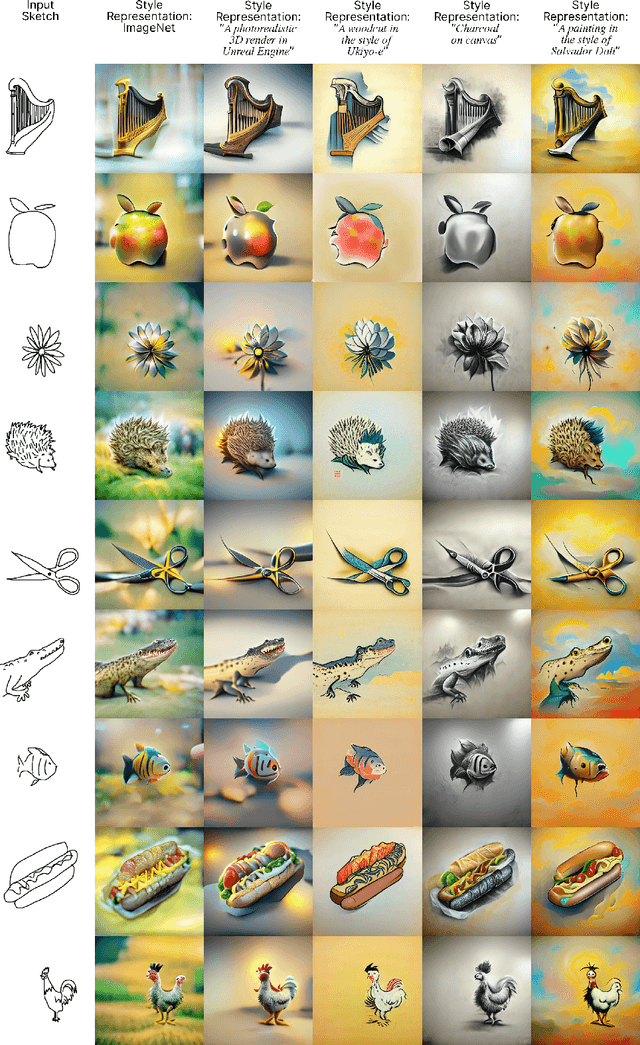

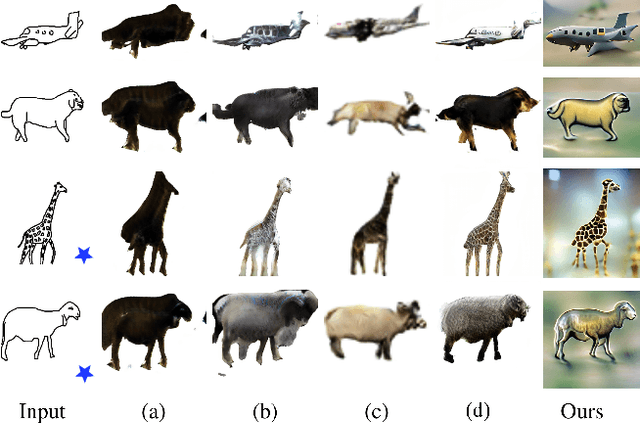

Style-Content Disentanglement in Language-Image Pretraining Representations for Zero-Shot Sketch-to-Image Synthesis

Jun 03, 2022

In this work, we propose and validate a framework to leverage language-image pretraining representations for training-free zero-shot sketch-to-image synthesis. We show that disentangled content and style representations can be utilized to guide image generators to employ them as sketch-to-image generators without (re-)training any parameters. Our approach for disentangling style and content entails a simple method consisting of elementary arithmetic assuming compositionality of information in representations of input sketches. Our results demonstrate that this approach is competitive with state-of-the-art instance-level open-domain sketch-to-image models, while only depending on pretrained off-the-shelf models and a fraction of the data.

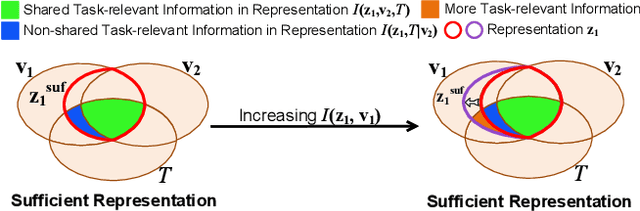

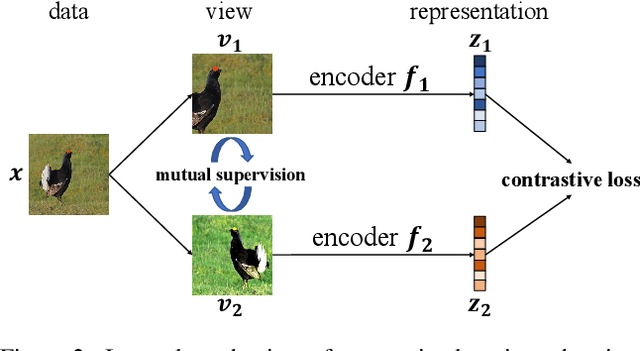

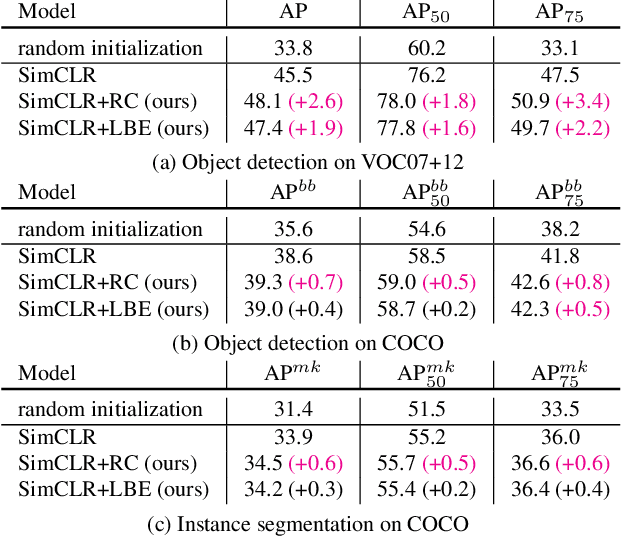

Rethinking Minimal Sufficient Representation in Contrastive Learning

Mar 14, 2022

Contrastive learning between different views of the data achieves outstanding success in the field of self-supervised representation learning and the learned representations are useful in broad downstream tasks. Since all supervision information for one view comes from the other view, contrastive learning approximately obtains the minimal sufficient representation which contains the shared information and eliminates the non-shared information between views. Considering the diversity of the downstream tasks, it cannot be guaranteed that all task-relevant information is shared between views. Therefore, we assume the non-shared task-relevant information cannot be ignored and theoretically prove that the minimal sufficient representation in contrastive learning is not sufficient for the downstream tasks, which causes performance degradation. This reveals a new problem that the contrastive learning models have the risk of over-fitting to the shared information between views. To alleviate this problem, we propose to increase the mutual information between the representation and input as regularization to approximately introduce more task-relevant information, since we cannot utilize any downstream task information during training. Extensive experiments verify the rationality of our analysis and the effectiveness of our method. It significantly improves the performance of several classic contrastive learning models in downstream tasks. Our code is available at https://github.com/Haoqing-Wang/InfoCL.
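For readers unfamiliar with the objective being analysed, the sketch below shows a generic InfoNCE-style contrastive loss between two views in NumPy. It is an illustration of the standard loss only, under the assumption that row `i` of each batch is a positive pair; it does not implement the paper's mutual-information regularizer, and the function name and temperature value are placeholders.

```python
import numpy as np

def info_nce_loss(z1, z2, temperature=0.1):
    """Generic InfoNCE loss: row i of z1 and row i of z2 form a positive pair."""
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)   # unit-normalise
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature                      # cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_prob))                    # positives on the diagonal

# Toy check: aligned views should score a lower loss than misaligned ones.
views = np.eye(4)
aligned = info_nce_loss(views, views)
misaligned = info_nce_loss(views, np.roll(views, 1, axis=0))
print(aligned < misaligned)  # True
```

Minimising this loss rewards only what the two views share, which is the behaviour the abstract argues can discard task-relevant, non-shared information.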

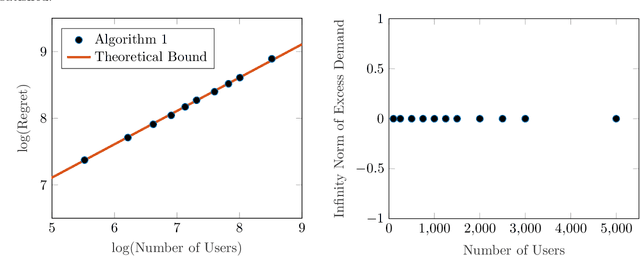

Online Learning in Fisher Markets with Unknown Agent Preferences

Apr 27, 2022

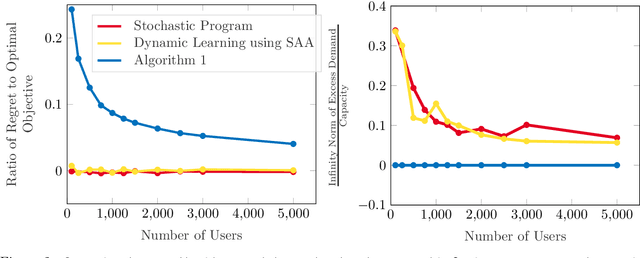

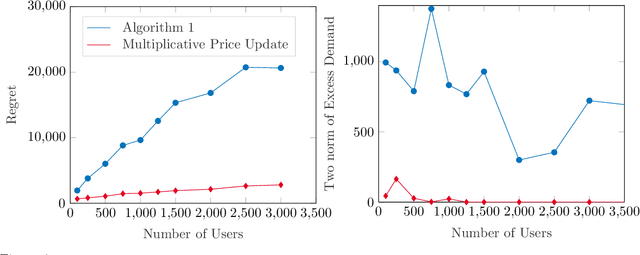

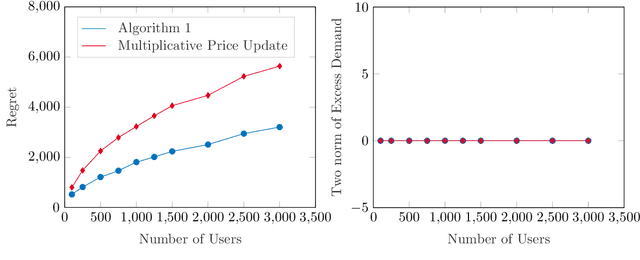

In a Fisher market, agents (users) spend a budget of (artificial) currency to buy goods that maximize their utilities, and producers set prices on capacity-constrained goods such that the market clears. The equilibrium prices in such a market are typically computed through the solution of a convex program, e.g., the Eisenberg-Gale program, that aggregates users' preferences into a centralized social welfare objective. However, the computation of equilibrium prices using convex programs assumes that all transactions happen in a static market wherein all users are present simultaneously and relies on complete information on each user's budget and utility function. Since, in practice, information on users' utilities and budgets is unknown and users tend to arrive over time in the market, we study an online variant of Fisher markets, wherein users enter the market sequentially. We focus on the setting where users have linear utilities with privately known utility and budget parameters drawn i.i.d. from a distribution $\mathcal{D}$. In this setting, we develop a simple yet effective algorithm to set prices that preserves user privacy while achieving a regret and capacity violation of $O(\sqrt{n})$, where $n$ is the number of arriving users and the capacities of the goods scale as $O(n)$. Here, our regret measure represents the optimality gap in the objective of the Eisenberg-Gale program between the online allocation policy and that of an offline oracle with complete information on users' budgets and utilities. To establish the efficacy of our approach, we show that even an algorithm that sets expected equilibrium prices with perfect information on the distribution $\mathcal{D}$ cannot achieve both a regret and constraint violation of better than $\Omega(\sqrt{n})$. Finally, we present numerical experiments to demonstrate the performance of our approach relative to several benchmarks.
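A rough simulation can illustrate the kind of pricing rule described here. The sketch below assumes a gradient-style update in which the price-setter observes only each arriving user's purchase, never the utilities or budget; the update rule, the `step` and `p_min` parameters, and all function names are assumptions for illustration and may differ from the algorithm in the paper.

```python
import numpy as np

def best_response(prices, utilities, budget):
    """A linear-utility user spends the whole budget on the good with the
    highest utility per unit price (ties broken by lowest index)."""
    j = int(np.argmax(utilities / prices))
    x = np.zeros_like(prices)
    x[j] = budget / prices[j]
    return x

def run_online_pricing(users, capacities, step, p0, p_min=1e-3):
    """Simulate sequential arrivals with a price update driven only by purchases.

    users: list of (utilities, budget) pairs, used here only to simulate each
           user's private best response.
    """
    n = len(users)
    d = capacities / n                      # per-user share of each capacity
    prices = np.asarray(p0, dtype=float).copy()
    consumed = np.zeros_like(prices)
    for utilities, budget in users:
        x = best_response(prices, utilities, budget)
        consumed += x
        # Raise prices of over-demanded goods, lower the rest; keep prices positive.
        prices = np.maximum(prices - step * (d - x), p_min)
    return prices, consumed

x = best_response(np.array([1.0, 2.0]), np.array([1.0, 3.0]), 4.0)
print(x)  # [0. 2.]
prices, consumed = run_online_pricing(
    users=[(np.array([1.0, 3.0]), 4.0)],
    capacities=np.array([1.0, 1.0]),
    step=0.5,
    p0=np.array([1.0, 2.0]),
)
print(prices)  # [0.5 2.5]
```

The privacy property in this sketch comes from the update depending on `x` alone; the step-size schedule needed for any particular regret guarantee is left open here.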

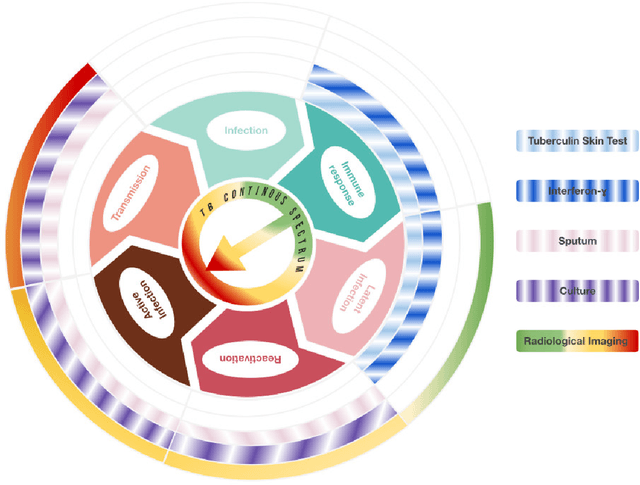

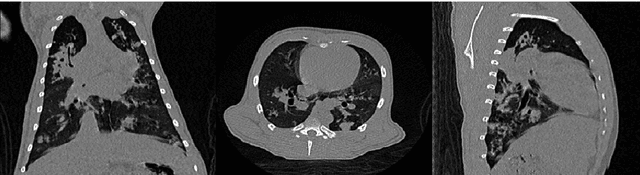

PhD Thesis. Computer-Aided Assessment of Tuberculosis with Radiological Imaging: From rule-based methods to Deep Learning

May 31, 2022

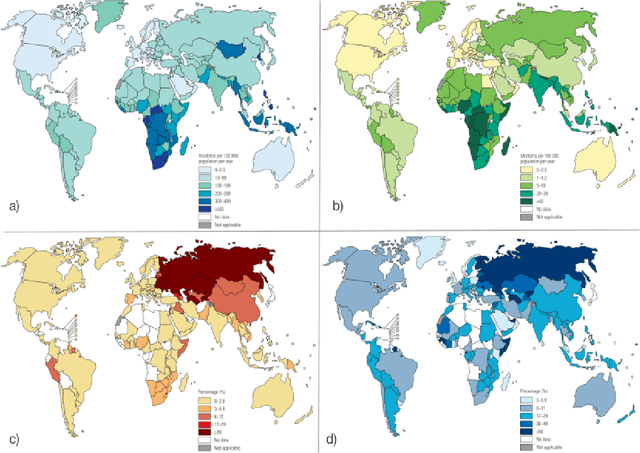

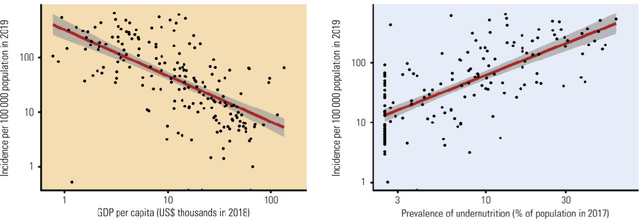

Tuberculosis (TB) is an infectious disease caused by Mycobacterium tuberculosis (Mtb.) that produces pulmonary damage due to its airborne nature. This fact facilitates the fast spread of the disease, which, according to the World Health Organization (WHO), in 2021 caused 1.2 million deaths and 9.9 million new cases. Fortunately, X-Ray Computed Tomography (CT) images enable capturing specific manifestations of TB that are undetectable using regular diagnostic tests. However, this procedure is unfeasible for processing the thousands of volume images belonging to the different TB animal models and humans required for a suitable (pre-)clinical trial. To achieve suitable results, automation of different image analysis processes is a must to quantify TB. Thus, in this thesis, we introduce a set of novel methods based on state-of-the-art Artificial Intelligence (AI) and Computer Vision (CV). Initially, we present an algorithm to assess Pathological Lung Segmentation (PLS). Next, a Gaussian Mixture Model ruled by an Expectation-Maximization (EM) algorithm is employed to automatically. Chapter 3 introduces a model to automate the identification of TB lesions and the characterization of disease progression. Chapter 4 extends the classification of TB lesions. Namely, we introduce a computational model to infer TB manifestations present in each lung lobe of CT scans by employing the associated radiologist reports as ground truth. In Chapter 5, we present a DL model capable of extracting disentangled information from images of different animal models, as well as information on the mechanisms that generate the CT volumes. To sum up, the thesis presents a collection of valuable tools to automate the quantification of pathological lungs. Chapter 6 elaborates on these conclusions.

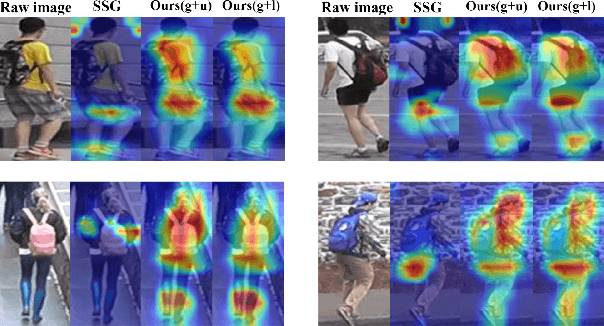

Learning Feature Fusion for Unsupervised Domain Adaptive Person Re-identification

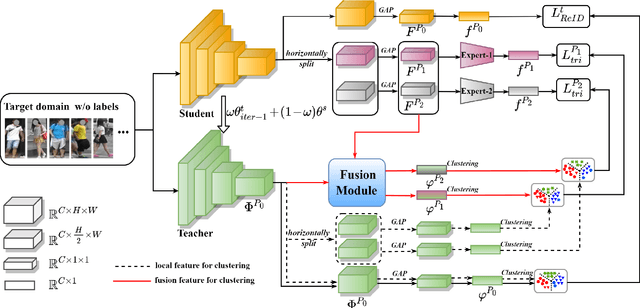

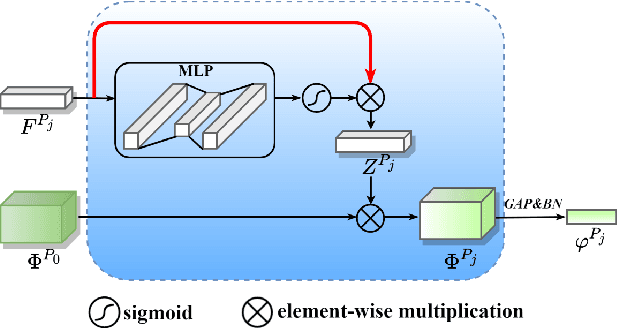

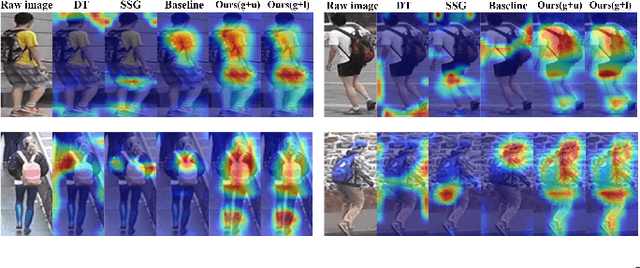

May 19, 2022

Unsupervised domain adaptive (UDA) person re-identification (ReID) has gained increasing attention for its effectiveness on the target domain without manual annotations. Most fine-tuning based UDA person ReID methods focus on encoding global features for pseudo-label generation, neglecting the local features that can provide fine-grained information. To handle this issue, we propose a Learning Feature Fusion (LF2) framework for adaptively learning to fuse global and local features to obtain a more comprehensive fusion feature representation. Specifically, we first pre-train our model within a source domain, then fine-tune the model on the unlabeled target domain based on the teacher-student training strategy. The average weighting teacher network is designed to encode global features, while the student network, updated at each iteration, is responsible for fine-grained local features. By fusing these multi-view features, multi-level clustering is adopted to generate diverse pseudo labels. In particular, a learnable Fusion Module (FM) for giving prominence to fine-grained local information within the global feature is also proposed to avoid obscure learning of multiple pseudo labels. Experiments show that our proposed LF2 framework outperforms the state-of-the-art with 73.5% mAP and 83.7% Rank1 on Market1501 to DukeMTMC-ReID, and achieves 83.2% mAP and 92.8% Rank1 on DukeMTMC-ReID to Market1501.
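The "average weighting" teacher is read here as a temporally averaged (mean-teacher style) copy of the student; that reading is an assumption, as are the function name and momentum value. Under that assumption, a single teacher update could look like this:

```python
import numpy as np

def ema_update(teacher, student, momentum=0.999):
    """Return teacher weights moved toward the student by exponential averaging.

    teacher, student: dicts mapping parameter names to NumPy arrays.
    """
    return {
        name: momentum * teacher[name] + (1.0 - momentum) * student[name]
        for name in teacher
    }

teacher = {"w": np.array([1.0, 1.0])}
student = {"w": np.array([0.0, 2.0])}
teacher = ema_update(teacher, student, momentum=0.9)
print(teacher["w"])  # [0.9 1.1]
```

The student is trained by gradient descent at every iteration, while the teacher only receives these smoothed updates, which is what makes its features more stable for clustering.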

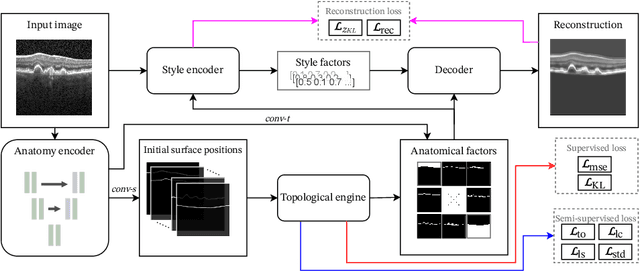

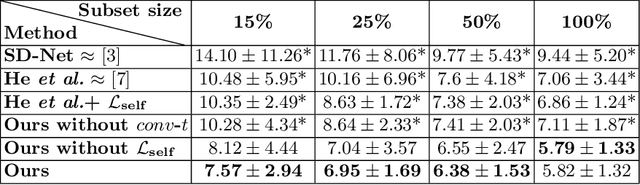

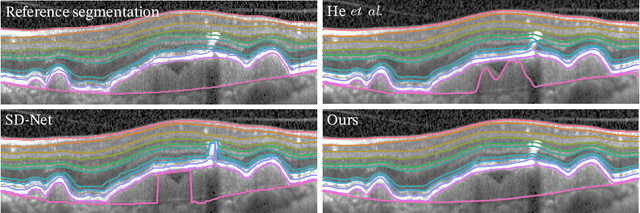

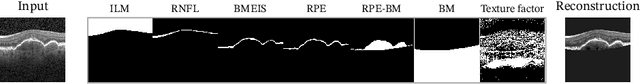

SD-LayerNet: Semi-supervised retinal layer segmentation in OCT using disentangled representation with anatomical priors

Jul 01, 2022

Optical coherence tomography (OCT) is a non-invasive 3D modality widely used in ophthalmology for imaging the retina. Achieving automated, anatomically coherent retinal layer segmentation on OCT is important for the detection and monitoring of different retinal diseases, like Age-related Macular Degeneration (AMD) or Diabetic Retinopathy. However, the majority of state-of-the-art layer segmentation methods are based on purely supervised deep-learning, requiring a large amount of pixel-level annotated data that is expensive and hard to obtain. With this in mind, we introduce a semi-supervised paradigm into the retinal layer segmentation task that makes use of the information present in large-scale unlabeled datasets as well as anatomical priors. In particular, a novel fully differentiable approach is used for converting surface position regression into a pixel-wise structured segmentation, allowing the use of both 1D surface and 2D layer representations in a coupled fashion to train the model. These 2D segmentations are then used as anatomical factors that, together with learned style factors, compose disentangled representations used for reconstructing the input image. In parallel, we propose a set of anatomical priors to improve network training when a limited amount of labeled data is available. We demonstrate on a real-world dataset of scans with intermediate and wet-AMD that our method outperforms the state-of-the-art when using our full training set, but more importantly largely exceeds the state-of-the-art when it is trained with a fraction of the labeled data.

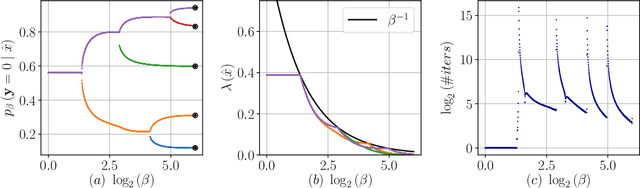

The Dual Information Bottleneck

Jun 08, 2020

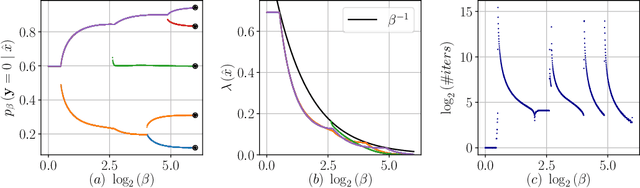

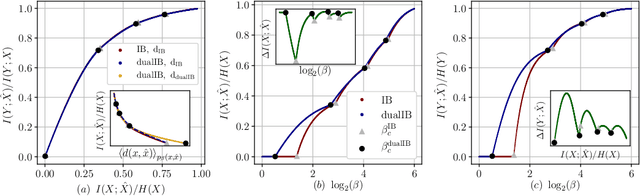

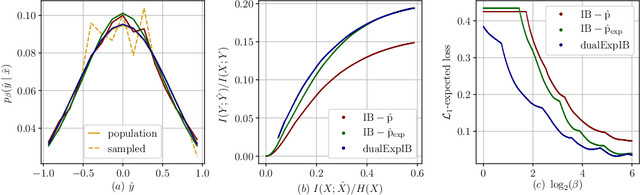

The Information Bottleneck (IB) framework is a general characterization of optimal representations obtained using a principled approach for balancing accuracy and complexity. Here we present a new framework, the Dual Information Bottleneck (dualIB), which resolves some of the known drawbacks of the IB. We provide a theoretical analysis of the dualIB framework: (i) solving for the structure of its solutions, (ii) unraveling its superiority in optimizing the mean prediction error exponent, and (iii) demonstrating its ability to preserve exponential forms of the original distribution. To approach large-scale problems, we present a novel variational formulation of the dualIB for Deep Neural Networks. In experiments on several datasets, we compare it to a variational form of the IB. This exposes superior Information Plane properties of the dualIB and its potential for improving the error.

Channel Estimation in RIS-Assisted MIMO Systems Operating Under Imperfections

Jul 06, 2022

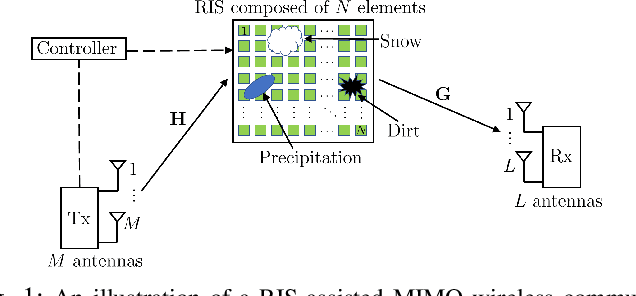

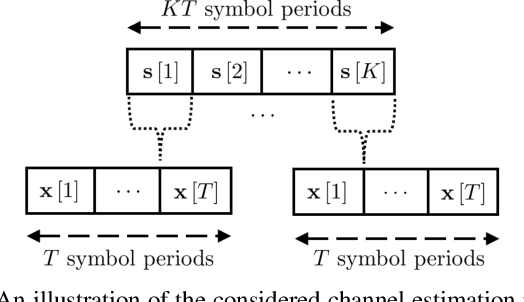

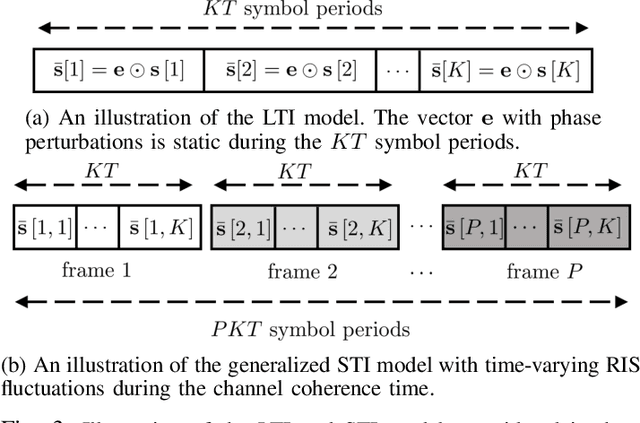

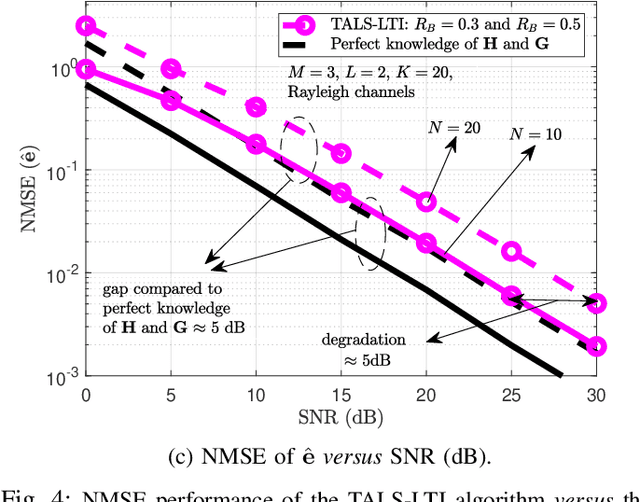

The reconfigurable intelligent surface (RIS) is a potential technology component of future wireless networks due to its capability of shaping the wireless environment. The promised gains of RIS-assisted MIMO systems in terms of extended coverage and enhanced capacity are, however, critically dependent on the accuracy of the channel state information. Traditional channel estimation schemes are not applicable in RIS-assisted MIMO networks, since passive RISs typically lack the signal processing capabilities that are assumed by channel estimation algorithms. This becomes most problematic when physical imperfections or electronic impairments affect the RIS, whether due to its exposure to different environmental effects or to hardware limitations of the circuitry. While these real-world effects are typically ignored in the literature, in this paper we propose efficient channel estimation schemes for RIS-assisted MIMO systems taking different imperfections into account. Specifically, we propose two sets of tensor-based algorithms, based on the parallel factor analysis decomposition schemes. First, by assuming a long-term model in which the RIS imperfections, modeled as unknown phase shifts, are static within the channel coherence time, we formulate an iterative alternating least squares (ALS)-based algorithm for the joint estimation of the communication channels and the unknown phase deviations. Next, we develop the short-term imperfection model, which allows both amplitude and phase RIS imperfections to be non-static with respect to the channel coherence time. We propose two iterative ALS-based and closed-form higher order singular value decomposition-based algorithms for the joint estimation of the channels and the unknown impairments. Moreover, we analyze the identifiability and computational complexity of the proposed algorithms and study the effects of various imperfections on the channel estimation quality.

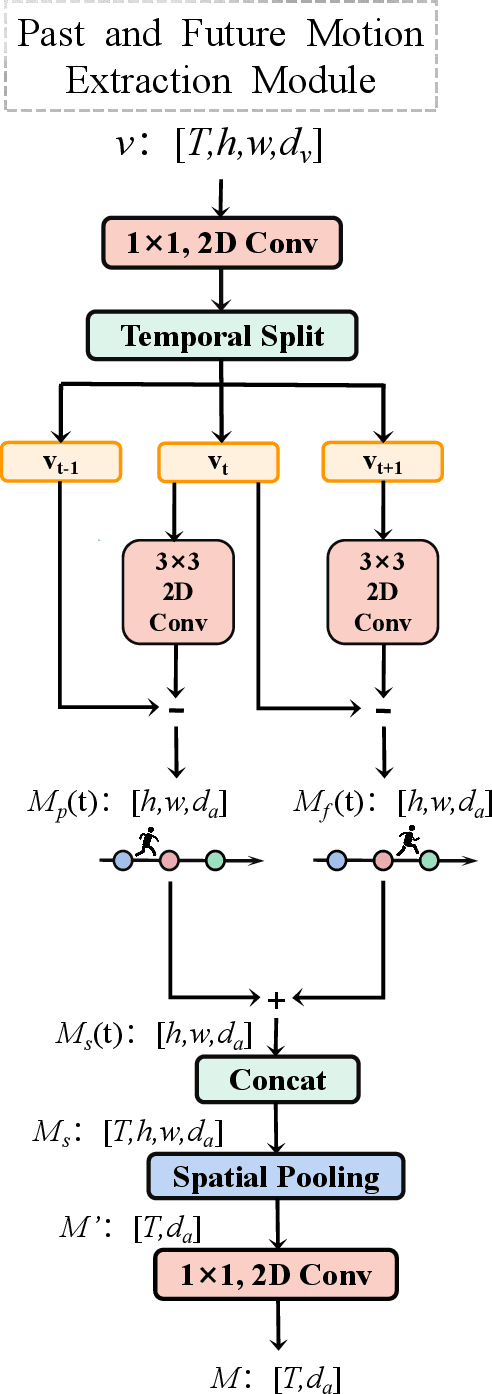

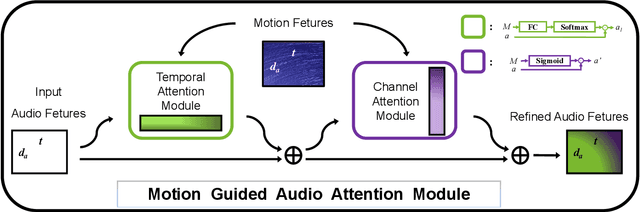

Past and Future Motion Guided Network for Audio Visual Event Localization

May 08, 2022

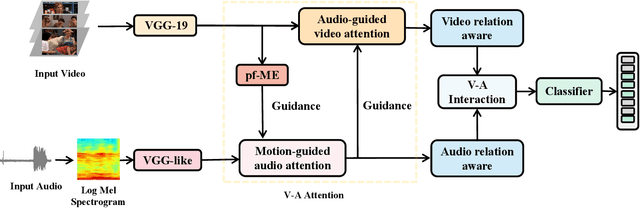

In recent years, audio-visual event localization has attracted much attention. Its purpose is to detect the segment containing audio-visual events and recognize the event category from untrimmed videos. Existing methods use audio-guided visual attention to lead the model to pay attention to the spatial area of the ongoing event, focusing on the correlation between audio and visual information but ignoring the correlation between audio and spatial motion. We propose a past and future motion extraction (pf-ME) module to mine the visual motion from videos, embedded into the past and future motion guided network (PFAGN), and a motion guided audio attention (MGAA) module that focuses on the information related to events of interest in the audio modality through the past and future visual motion. We choose AVE as the experimental verification dataset, and the experiments show that our method outperforms the state-of-the-art in both supervised and weakly-supervised settings.
