"Information": models, code, and papers

Information Extraction from Visually Rich Documents with Font Style Embeddings

Nov 07, 2021

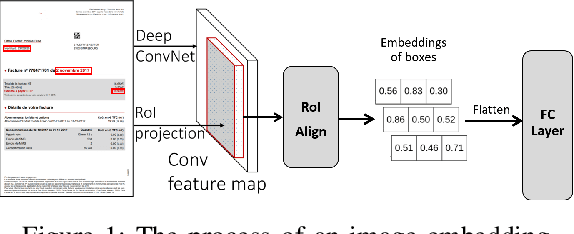

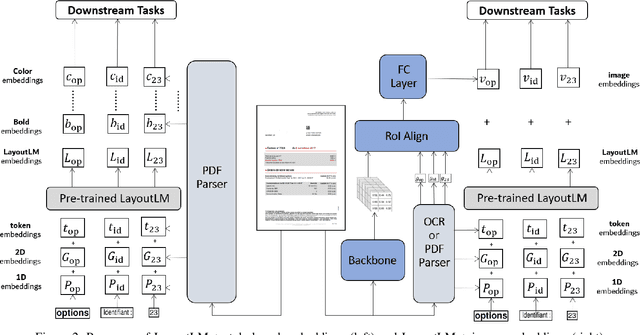

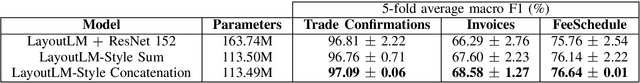

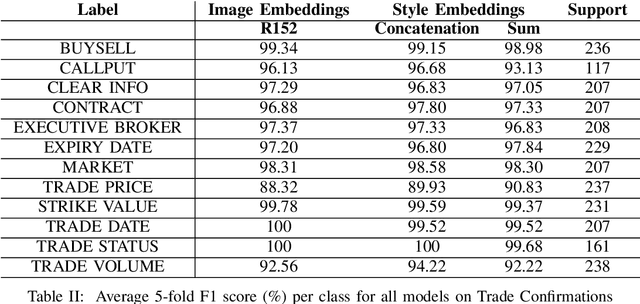

Information extraction (IE) from documents is an intensive area of research with a large set of industrial applications. Current state-of-the-art methods focus on scanned documents with approaches combining computer vision, natural language processing and layout representation. We propose to challenge the usage of computer vision in the case where both token style and visual representation are available (i.e., native PDF documents). Our experiments on three real-world complex datasets demonstrate that using an embedding based on token style attributes instead of a raw visual embedding in the LayoutLM model is beneficial. Depending on the dataset, such an embedding yields an improvement of 0.18% to 2.29% in the weighted F1-score with a decrease of 30.7% in the final number of trainable parameters of the model, leading to an improvement in both efficiency and effectiveness.

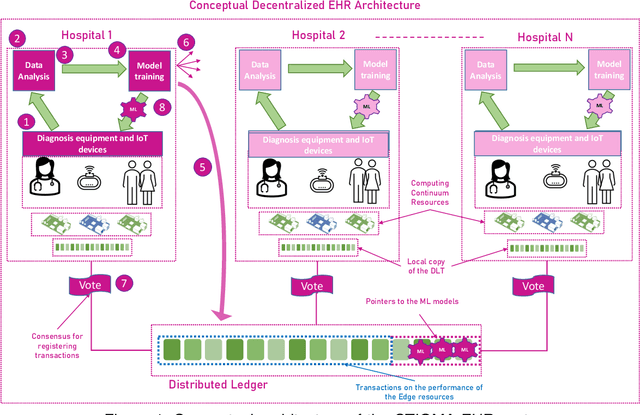

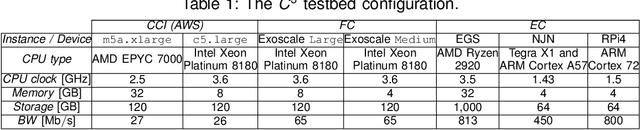

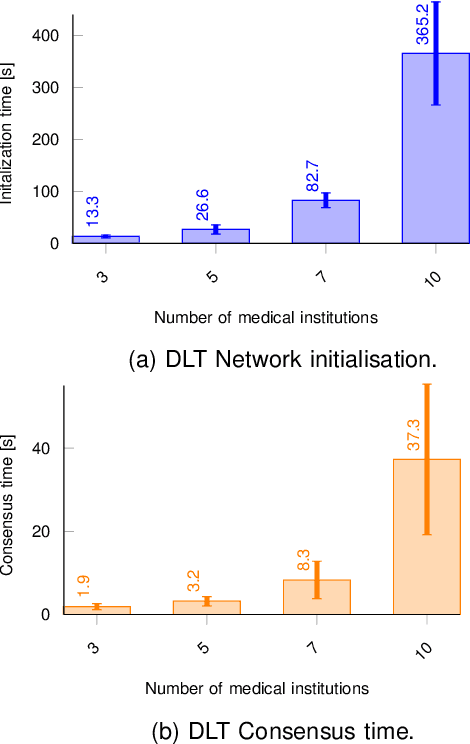

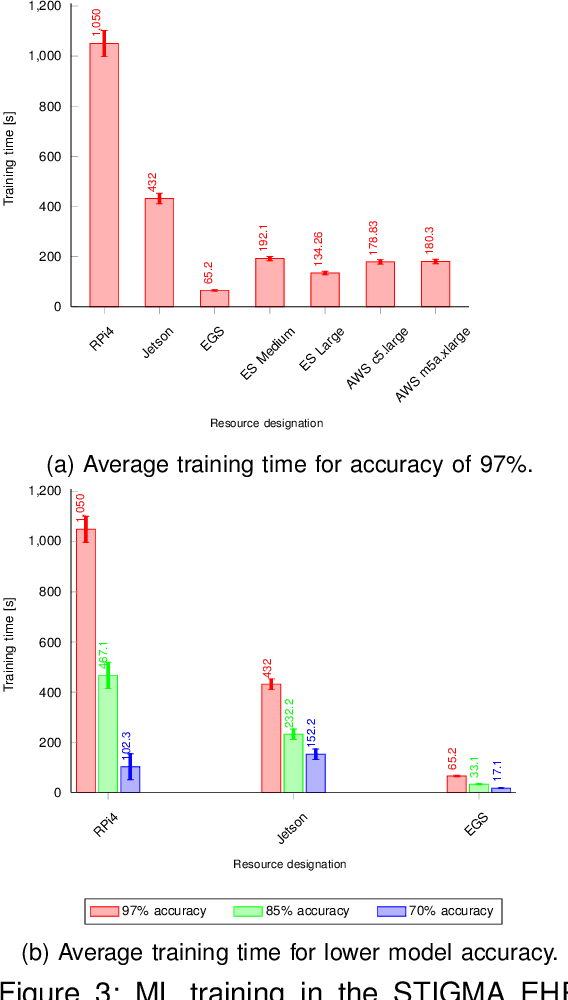

Decentralized Machine Learning for Intelligent Health Care Systems on the Computing Continuum

Jul 29, 2022

The introduction of electronic personal health records (EHR) enables nationwide information exchange and curation among different health care systems. However, current EHR systems neither provide transparent means for diagnosis support and medical research nor utilize the omnipresent data produced by personal medical devices. Besides, EHR systems are centrally orchestrated, which could potentially lead to a single point of failure. Therefore, in this article, we explore novel approaches for decentralizing machine learning over distributed ledgers to create intelligent EHR systems that can utilize information from personal medical devices for improved knowledge extraction. Consequently, we propose and evaluate a conceptual EHR to enable anonymous predictive analysis across multiple medical institutions. The evaluation results indicate that the decentralized EHR can be deployed over the computing continuum with a reduction in machine learning time of up to 60% and a consensus latency below 8 seconds.

Distributed Learning over a Wireless Network with Non-coherent Majority Vote Computation

Sep 10, 2022

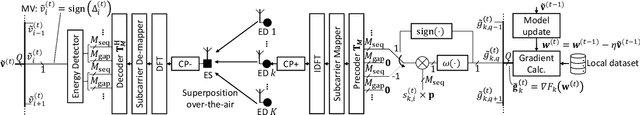

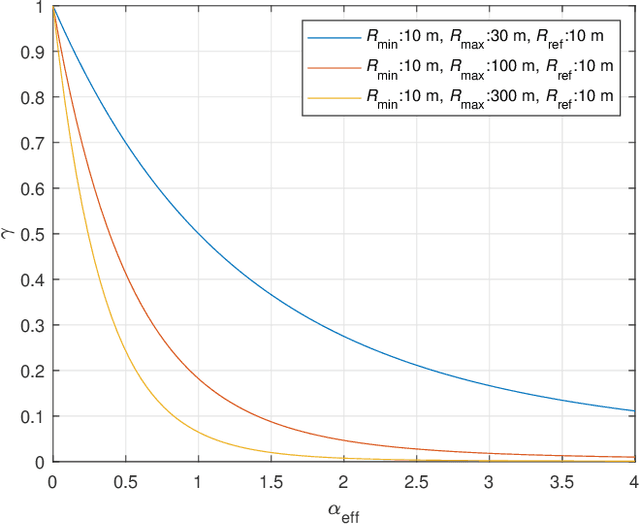

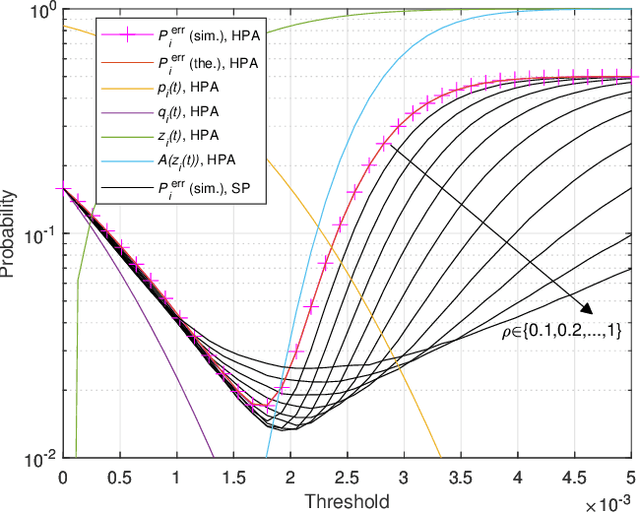

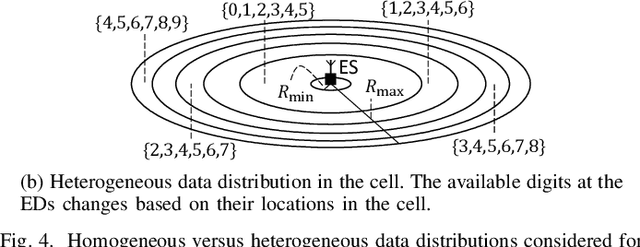

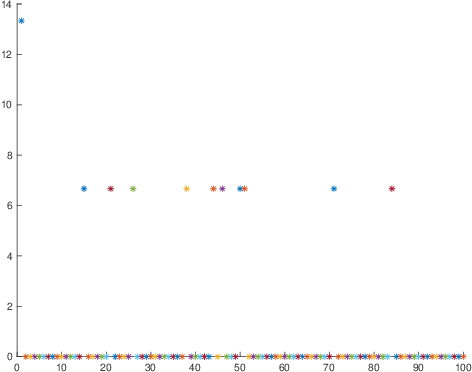

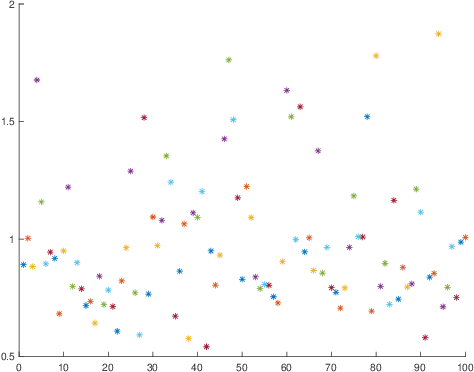

In this study, we propose an over-the-air computation (OAC) scheme to calculate the majority vote (MV) for federated edge learning (FEEL). With the proposed approach, edge devices (EDs) transmit the signs of local stochastic gradients, i.e., votes, by activating one of two orthogonal resources. The MVs at the edge server (ES) are obtained with non-coherent detectors by exploiting the accumulations on the resources. Hence, the proposed scheme eliminates the need for channel state information (CSI) at the EDs and ES. In this study, we analyze various gradient-encoding strategies through the weight functions and waveform configurations over orthogonal frequency division multiplexing (OFDM). We show that specific weight functions that enable absentee EDs (i.e., hard-coded participation with absentees (HPA)) or weighted votes (i.e., soft-coded participation (SP)) can substantially reduce the probability of detecting the incorrect MV. By taking path loss, power control, cell size, and fading channel into account, we prove the convergence of the distributed learning for a non-convex function for HPA. Through simulations, we show that the proposed scheme with HPA and SP can provide high test accuracy even when the time-synchronization and the power control are not ideal under heterogeneous data distribution scenarios.
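The voting idea in this abstract can be illustrated with a small simulation. The sketch below is a simplified toy model, not the authors' scheme: it assumes one Rayleigh-faded channel coefficient per device, a single pair of orthogonal resources for one gradient coordinate, and no path loss, power control, or OFDM waveform. The function name `noncoherent_majority_vote` and the parameter `noise_std` are hypothetical.

```python
import random

def noncoherent_majority_vote(votes, rng, noise_std=0.1):
    """Detect the majority of +1/-1 votes from the energy on two resources.

    Toy model (assumption): every device sends a unit symbol on the resource
    matching its vote, through an independent complex Gaussian channel that
    neither the devices nor the server know.
    """
    plus = 0j   # superposed signal on the resource assigned to +1 votes
    minus = 0j  # superposed signal on the resource assigned to -1 votes
    for v in votes:
        h = complex(rng.gauss(0, 1), rng.gauss(0, 1)) / 2 ** 0.5
        if v > 0:
            plus += h
        else:
            minus += h
    # Receiver noise on each resource.
    plus += complex(rng.gauss(0, noise_std), rng.gauss(0, noise_std))
    minus += complex(rng.gauss(0, noise_std), rng.gauss(0, noise_std))
    # Non-coherent detection: compare energies only, no channel estimate.
    return 1 if abs(plus) ** 2 >= abs(minus) ** 2 else -1

rng = random.Random(0)
votes = [1] * 70 + [-1] * 30          # 70 devices vote +1, 30 vote -1
trials = 2000
hits = sum(noncoherent_majority_vote(votes, rng) == 1 for _ in range(trials))
print("fraction of trials detecting +1:", hits / trials)
```

Because only energies are compared, the detector is probabilistic: a larger group of voters raises the expected energy on its resource but does not guarantee a correct decision in every trial.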

Johnson-Lindenstrauss embeddings for noisy vectors -- taking advantage of the noise

Sep 01, 2022

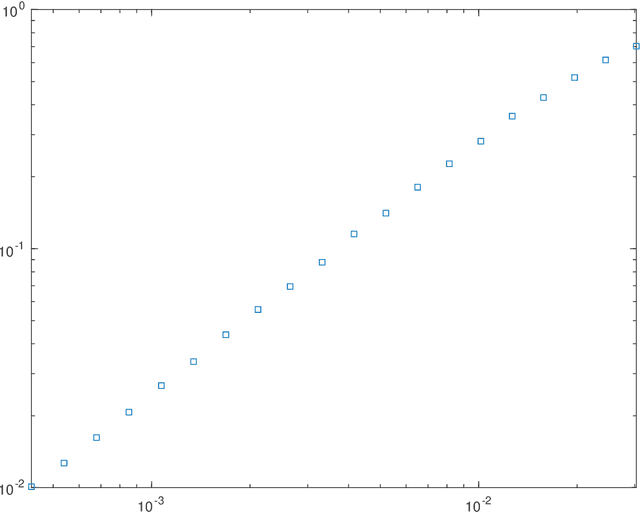

This paper investigates theoretical properties of subsampling and hashing as tools for approximate Euclidean norm-preserving embeddings for vectors with (unknown) additive Gaussian noise. Such embeddings are sometimes called Johnson-Lindenstrauss embeddings after the celebrated lemma. Previous work shows that, as sparse embeddings, the success of subsampling and hashing closely depends on the $l_\infty$ to $l_2$ ratio of the vector to be mapped. This paper shows that the presence of noise removes this constraint in high dimensions; in other words, sparse embeddings such as subsampling and hashing, with embedding dimensions comparable to dense embeddings, have similar approximate norm-preserving dimensionality-reduction properties. The key is that the noise should be treated as information to be exploited, not simply something to be removed. Theoretical bounds for subsampling and hashing to recover the approximate norm of a high-dimensional vector in the presence of noise are derived, with numerical illustrations showing that better performance is achieved in the presence of noise.
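The two sparse embeddings named in this abstract can be sketched in a few lines. This is a minimal illustration under assumptions, not the paper's construction or its bounds: `subsample_sq_norm` uses uniform sampling without replacement with an `n / m` rescaling, and `hash_sq_norm` uses a count-sketch style hash with random signs. Both function names and the demo vector are hypothetical. Note that for the noisy vector the estimate targets the squared norm of the noisy vector itself.

```python
import random

def subsample_sq_norm(x, m, rng):
    """Estimate ||x||^2 from m coordinates sampled without replacement."""
    n = len(x)
    idx = rng.sample(range(n), m)
    return (n / m) * sum(x[i] ** 2 for i in idx)

def hash_sq_norm(x, m, rng):
    """Estimate ||x||^2 by hashing coordinates into m buckets with random signs."""
    buckets = [0.0] * m
    for xi in x:
        buckets[rng.randrange(m)] += rng.choice((-1.0, 1.0)) * xi
    return sum(b * b for b in buckets)

rng = random.Random(0)
n, m = 10_000, 500
spiky = [0.0] * n
spiky[0] = 10.0                                    # all energy in one coordinate
noisy = [v + rng.gauss(0.0, 1.0) for v in spiky]   # same vector plus Gaussian noise

for name, vec in (("clean", spiky), ("noisy", noisy)):
    true_sq = sum(v * v for v in vec)
    for label, estimator in (("subsampling", subsample_sq_norm), ("hashing", hash_sq_norm)):
        est = estimator(vec, m, rng)
        print(name, label, "relative error:", abs(est - true_sq) / true_sq)
```

The clean vector has the largest possible $l_\infty$ to $l_2$ ratio, which is the case where subsampling is expected to struggle; the noisy version spreads energy over all coordinates.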

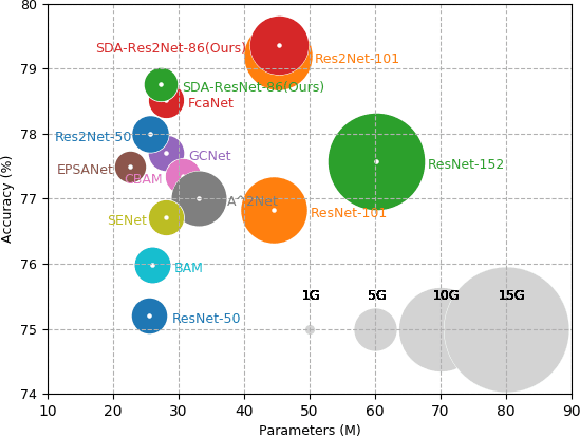

SDA-$x$Net: Selective Depth Attention Networks for Adaptive Multi-scale Feature Representation

Sep 21, 2022

Existing multi-scale solutions lead to a risk of just increasing the receptive field sizes while neglecting small receptive fields. Thus, it is a challenging problem to effectively construct adaptive neural networks for recognizing various spatial-scale objects. To tackle this issue, we first introduce a new attention dimension, i.e., depth, in addition to existing attention dimensions such as channel, spatial, and branch, and present a novel selective depth attention network to symmetrically handle multi-scale objects in various vision tasks. Specifically, the blocks within each stage of a given neural network, e.g., ResNet, output hierarchical feature maps sharing the same resolution but with different receptive field sizes. Based on this structural property, we design a stage-wise building module, namely SDA, which includes a trunk branch and an SE-like attention branch. The block outputs of the trunk branch are fused to globally guide their depth attention allocation through the attention branch. According to the proposed attention mechanism, we can dynamically select different depth features, which contributes to adaptively adjusting the receptive field sizes for the variable-sized input objects. In this way, the cross-block information interaction leads to a long-range dependency along the depth direction. Compared with other multi-scale approaches, our SDA method combines multiple receptive fields from previous blocks into the stage output, thus offering a wider and richer range of effective receptive fields. Moreover, our method can serve as a pluggable module for other multi-scale networks as well as attention networks, coined SDA-$x$Net. Their combination further extends the range of the effective receptive fields towards small receptive fields, enabling interpretable neural networks. Our source code is available at https://github.com/QingbeiGuo/SDA-xNet.git.
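The fuse, squeeze, and select steps described in this abstract can be sketched in plain Python. This is an assumed, simplified reading rather than the released SDA-xNet code: block outputs are fused by summation, pooled per channel, passed through a small bottleneck, and turned into a softmax over depth for each channel. The function `depth_attention` and the weight layouts `w_reduce` and `w_expand` are hypothetical.

```python
import math
import random

def softmax(values):
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vec):
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]

def depth_attention(block_outputs, w_reduce, w_expand):
    """block_outputs[d][c] is the flattened feature map of block d, channel c."""
    depth = len(block_outputs)
    channels = len(block_outputs[0])
    size = len(block_outputs[0][0])
    # 1. Fuse block outputs by summation, then global-average-pool each channel.
    pooled = [sum(sum(block_outputs[d][c]) for d in range(depth)) / size
              for c in range(channels)]
    # 2. SE-like bottleneck: linear reduction followed by ReLU.
    hidden = [max(0.0, h) for h in matvec(w_reduce, pooled)]
    # 3. One expansion matrix per block yields a logit per (block, channel).
    logits = [matvec(w_expand[d], hidden) for d in range(depth)]
    # 4. Softmax across depth, separately for each channel.
    attn = [softmax([logits[d][c] for d in range(depth)]) for c in range(channels)]
    # 5. Stage output: attention-weighted sum of the block outputs.
    fused = [[sum(attn[c][d] * block_outputs[d][c][i] for d in range(depth))
              for i in range(size)] for c in range(channels)]
    return fused, attn

rng = random.Random(0)
depth, channels, size, hidden_dim = 3, 4, 6, 2
blocks = [[[rng.gauss(0, 1) for _ in range(size)] for _ in range(channels)]
          for _ in range(depth)]
w_reduce = [[rng.gauss(0, 1) for _ in range(channels)] for _ in range(hidden_dim)]
w_expand = [[[rng.gauss(0, 1) for _ in range(hidden_dim)] for _ in range(channels)]
            for _ in range(depth)]
stage_output, attention = depth_attention(blocks, w_reduce, w_expand)
print("depth attention for channel 0:", attention[0])
```

Since the weights for each channel sum to one over the blocks, the stage output is a convex combination of features with different receptive field sizes.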

Extend and Explain: Interpreting Very Long Language Models

Sep 07, 2022

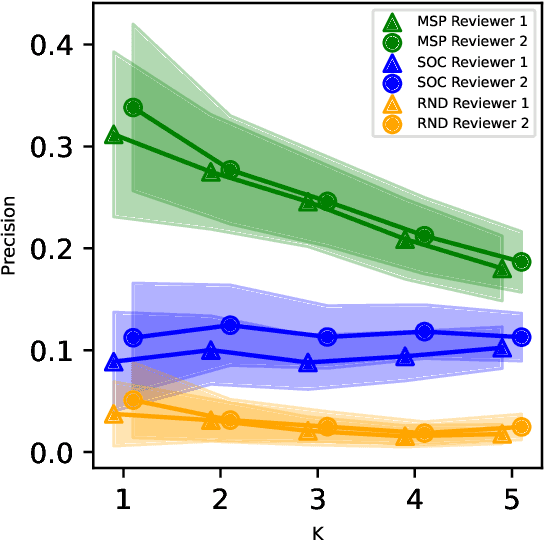

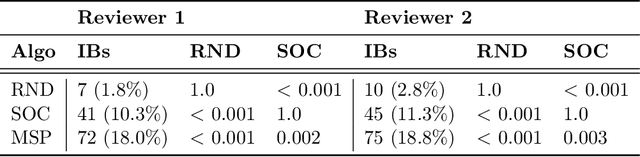

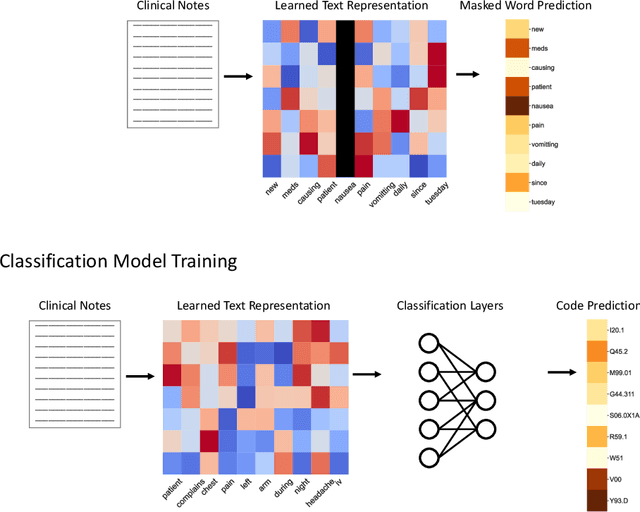

While Transformer language models (LMs) are state-of-the-art for information extraction, long text introduces computational challenges requiring suboptimal preprocessing steps or alternative model architectures. Sparse-attention LMs can represent longer sequences, overcoming performance hurdles. However, it remains unclear how to explain predictions from these models, as not all tokens attend to each other in the self-attention layers, and long sequences pose computational challenges for explainability algorithms when runtime depends on document length. These challenges are severe in the medical context where documents can be very long, and machine learning (ML) models must be auditable and trustworthy. We introduce a novel Masked Sampling Procedure (MSP) to identify the text blocks that contribute to a prediction, apply MSP in the context of predicting diagnoses from medical text, and validate our approach with a blind review by two clinicians. Our method identifies about 1.7x more clinically informative text blocks than the previous state-of-the-art, runs up to 100x faster, and is tractable for generating important phrase pairs. MSP is particularly well-suited to long LMs but can be applied to any text classifier. We provide a general implementation of MSP.
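A masking-based attribution of the kind this abstract describes can be sketched as follows. This is a loose illustration under assumptions, not the authors' MSP implementation: blocks are masked independently with probability `mask_prob`, and each masked block is credited with the resulting drop in predicted probability, averaged over iterations. The function `masked_sampling_importance`, the `[MASK]` placeholder, and the keyword classifier `toy_predict` are all hypothetical.

```python
import random

def masked_sampling_importance(blocks, predict, n_iter=500, mask_prob=0.3, seed=0):
    """Score each text block by the average prediction drop when it is masked."""
    rng = random.Random(seed)
    base = predict(blocks)                      # probability on the unmasked document
    drop_sum = [0.0] * len(blocks)
    count = [0] * len(blocks)
    for _ in range(n_iter):
        masked = [rng.random() < mask_prob for _ in blocks]
        kept = ["[MASK]" if m else b for b, m in zip(blocks, masked)]
        drop = base - predict(kept)
        for i, m in enumerate(masked):
            if m:                               # credit the drop to every masked block
                drop_sum[i] += drop
                count[i] += 1
    return [s / c if c else 0.0 for s, c in zip(drop_sum, count)]

def toy_predict(blocks):
    """Stand-in classifier: high probability if any block mentions the keyword."""
    return 0.9 if any("pneumonia" in b for b in blocks) else 0.1

document = [
    "patient reports cough",
    "chest x-ray shows pneumonia",
    "follow up in two weeks",
]
for block, score in zip(document, masked_sampling_importance(document, toy_predict)):
    print(round(score, 3), block)
```

The number of classifier calls is fixed by `n_iter` rather than by document length, which is one reason a sampling approach can stay tractable on long inputs.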

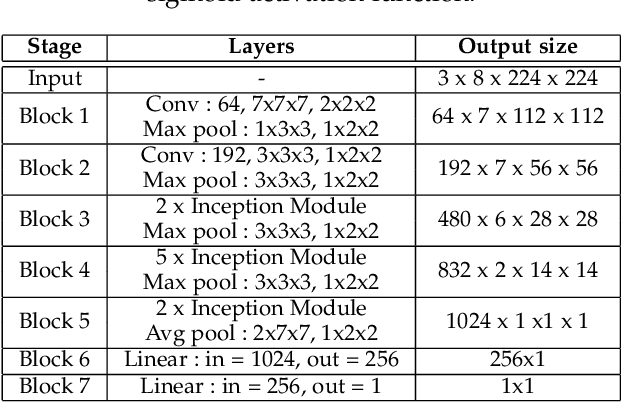

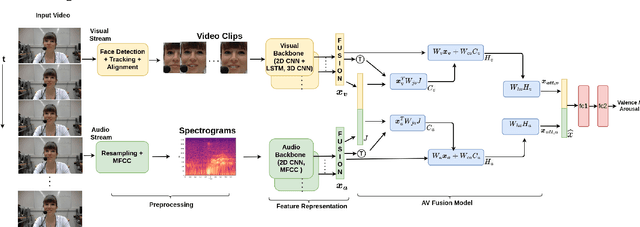

Audio-Visual Fusion for Emotion Recognition in the Valence-Arousal Space Using Joint Cross-Attention

Sep 19, 2022

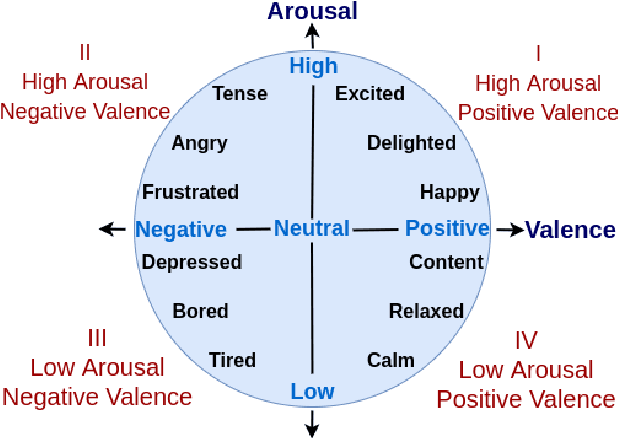

Automatic emotion recognition (ER) has recently gained a lot of interest due to its potential in many real-world applications. In this context, multimodal approaches have been shown to improve performance (over unimodal approaches) by combining diverse and complementary sources of information, providing some robustness to noisy and missing modalities. In this paper, we focus on dimensional ER based on the fusion of facial and vocal modalities extracted from videos, where complementary audio-visual (A-V) relationships are explored to predict an individual's emotional states in valence-arousal space. Most state-of-the-art fusion techniques rely on recurrent networks or conventional attention mechanisms that do not effectively leverage the complementary nature of A-V modalities. To address this problem, we introduce a joint cross-attentional model for A-V fusion that extracts the salient features across A-V modalities, effectively leveraging the inter-modal relationships while retaining the intra-modal relationships. In particular, it computes the cross-attention weights based on the correlation between the joint feature representation and that of the individual modalities. Deploying the joint A-V feature representation in the cross-attention module helps to simultaneously leverage both the intra- and inter-modal relationships, thereby significantly improving the performance of the system over the vanilla cross-attention module. The effectiveness of our proposed approach is validated experimentally on challenging videos from the RECOLA and AffWild2 datasets. Results indicate that our joint cross-attentional A-V fusion model provides a cost-effective solution that can outperform state-of-the-art approaches, even when the modalities are noisy or absent.

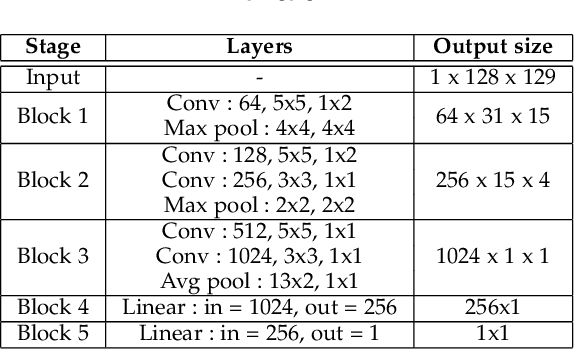

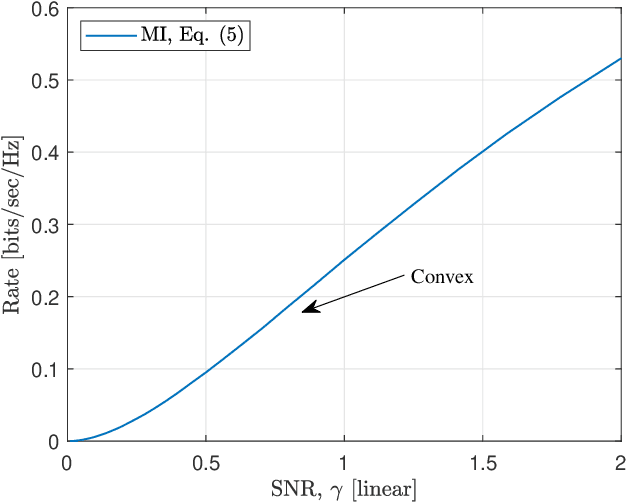

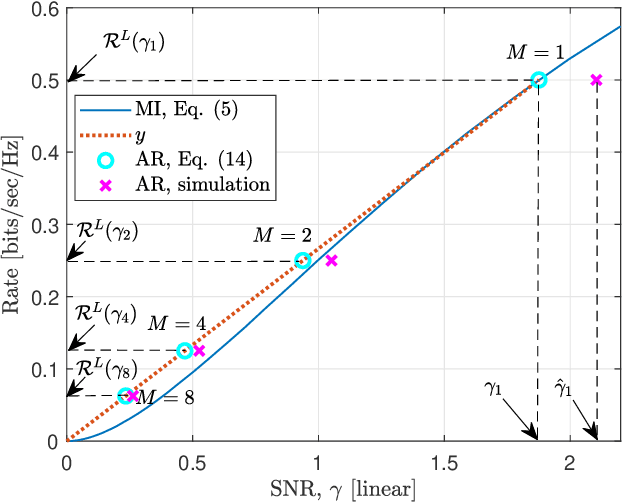

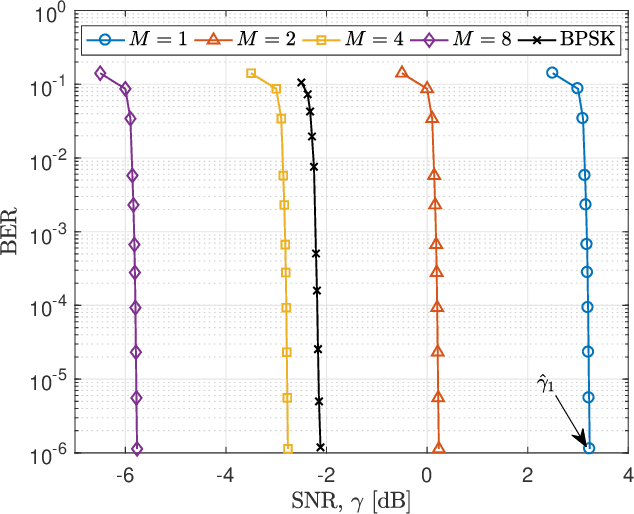

A Practical Consideration on Convex Mutual Information

May 24, 2021

In this paper, we focus on the convex mutual information, which was found at the lowest level split in multilevel coding schemes for communication over the additive white Gaussian noise (AWGN) channel. Theoretical analysis shows that communication achievable rates (ARs) are not necessarily below the mutual information in the convex region. In addition, simulation results are provided as evidence.

Multi-Modal Masked Autoencoders for Medical Vision-and-Language Pre-Training

Sep 15, 2022

Medical vision-and-language pre-training provides a feasible solution to extract effective vision-and-language representations from medical images and texts. However, few studies have been dedicated to this field to facilitate medical vision-and-language understanding. In this paper, we propose a self-supervised learning paradigm with multi-modal masked autoencoders (M$^3$AE), which learn cross-modal domain knowledge by reconstructing missing pixels and tokens from randomly masked images and texts. There are three key designs to make this simple approach work. First, considering the different information densities of vision and language, we adopt different masking ratios for the input image and text, where a considerably larger masking ratio is used for images. Second, we use visual and textual features from different layers to perform the reconstruction to deal with different levels of abstraction in vision and language. Third, we develop different designs for vision and language decoders (i.e., a Transformer for vision and a multi-layer perceptron for language). To perform a comprehensive evaluation and facilitate further research, we construct a medical vision-and-language benchmark including three tasks. Experimental results demonstrate the effectiveness of our approach, where state-of-the-art results are achieved on all downstream tasks. Besides, we conduct further analysis to better verify the effectiveness of different components of our approach and various settings of pre-training. The source code is available at https://github.com/zhjohnchan/M3AE.
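The first design choice, modality-specific masking ratios, can be illustrated with a short sketch. The ratios 0.75 for image patches and 0.15 for text tokens are illustrative assumptions, since the abstract only states that the image ratio is considerably larger; the helper `random_mask` and the `[MASK]` placeholder are hypothetical and not taken from the M3AE repository.

```python
import random

def random_mask(items, ratio, rng, mask_token="[MASK]"):
    """Replace a random fraction of items with a mask token.

    Returns the masked sequence and the sorted indices that were masked,
    which a reconstruction loss would use as its targets.
    """
    n_mask = round(len(items) * ratio)
    masked_idx = set(rng.sample(range(len(items)), n_mask))
    visible = [mask_token if i in masked_idx else x for i, x in enumerate(items)]
    return visible, sorted(masked_idx)

rng = random.Random(0)
image_patches = [f"patch_{i}" for i in range(16)]   # stand-in for a 4x4 patch grid
text_tokens = "no acute cardiopulmonary abnormality is seen".split()

IMAGE_MASK_RATIO = 0.75   # assumed value, high because pixels are redundant
TEXT_MASK_RATIO = 0.15    # assumed value, low because text is information-dense

masked_patches, patch_targets = random_mask(image_patches, IMAGE_MASK_RATIO, rng)
masked_tokens, token_targets = random_mask(text_tokens, TEXT_MASK_RATIO, rng)
print(len(patch_targets), "of", len(image_patches), "patches masked")
print(len(token_targets), "of", len(text_tokens), "tokens masked")
```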

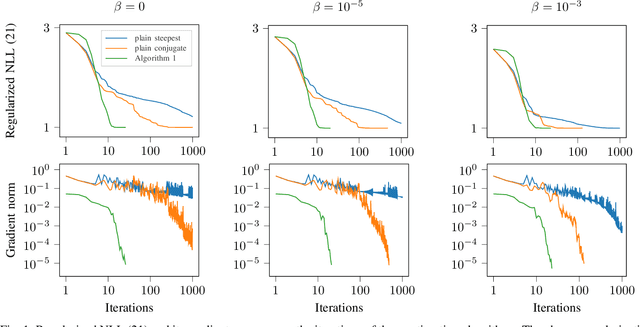

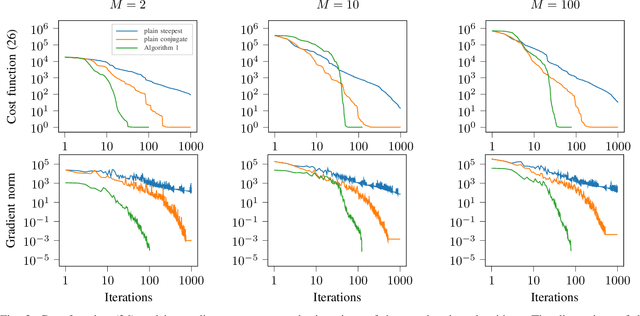

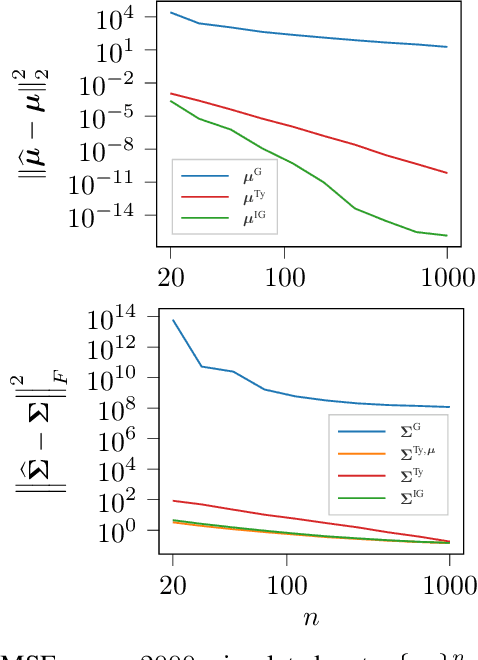

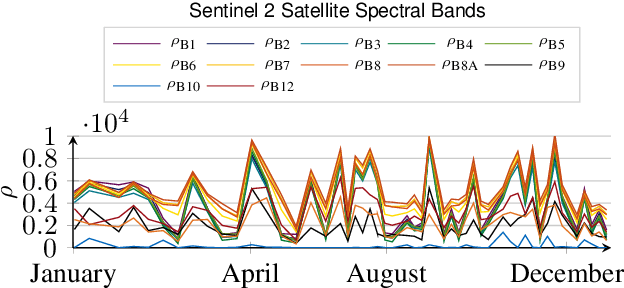

Riemannian optimization for non-centered mixture of scaled Gaussian distributions

Sep 07, 2022

This paper studies the statistical model of the non-centered mixture of scaled Gaussian distributions (NC-MSG). Using the Fisher-Rao information geometry associated with this distribution, we derive a Riemannian gradient descent algorithm. This algorithm is leveraged for two minimization problems. The first one is the minimization of a regularized negative log-likelihood (NLL). The latter makes the trade-off between a white Gaussian distribution and the NC-MSG. Conditions on the regularization are given so that the existence of a minimum to this problem is guaranteed without assumptions on the samples. Then, the Kullback-Leibler (KL) divergence between two NC-MSGs is derived. This divergence enables us to define a minimization problem to compute centers of mass of several NC-MSGs. The proposed Riemannian gradient descent algorithm is leveraged to solve this second minimization problem. Numerical experiments show the good performance and the speed of the Riemannian gradient descent on the two problems. Finally, a nearest centroid classifier is implemented leveraging the KL divergence and its associated center of mass. Applied to the large-scale dataset Breizhcrops, this classifier shows good accuracy as well as robustness to rigid transformations of the test set.
