"Information": models, code, and papers

FedToken: Tokenized Incentives for Data Contribution in Federated Learning

Sep 20, 2022

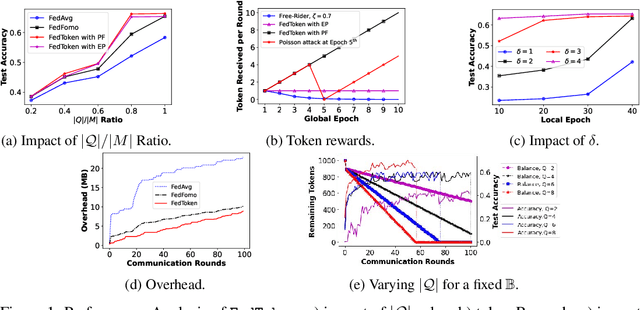

Incentives that compensate for the costs involved in the decentralized training of a Federated Learning (FL) model act as a key stimulus for clients' long-term participation. However, it is challenging to convince clients to participate with high quality in FL due to the absence of: (i) full information on the client's data quality and properties; (ii) the value of the client's data contributions; and (iii) a trusted mechanism for monetary incentive offers. This often leads to poor training and communication efficiency. While several works focus on strategic incentive designs and client selection to overcome this problem, there is a major knowledge gap regarding an overall design tailored to the foreseen digital economy, including Web 3.0, that simultaneously meets the learning objectives. To address this gap, we propose a contribution-based tokenized incentive scheme, namely FedToken, backed by blockchain technology, that ensures a fair allocation of tokens amongst the clients corresponding to the valuation of their data during model training. Leveraging an engineered Shapley-based scheme, we first approximate the contribution of local models during model aggregation, then strategically schedule clients to lower the number of communication rounds needed for convergence, and establish ways to allocate affordable tokens under a constrained monetary budget. Extensive simulations demonstrate the efficacy of our proposed method.
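
The abstract does not spell out FedToken's "engineered" Shapley approximation or its allocation rule. As a minimal sketch under stated assumptions, the Python below estimates each client's Shapley value with plain Monte Carlo permutation sampling and then splits a fixed token budget in proportion to the clipped, non-negative estimates. The `utility` callable (for example, validation accuracy of a model aggregated from a subset of clients), the function names, and the proportional split are hypothetical, not the paper's method.

```python
import random
from typing import Callable, Dict, FrozenSet, List


def monte_carlo_shapley(
    clients: List[str],
    utility: Callable[[FrozenSet[str]], float],
    num_permutations: int = 200,
    seed: int = 0,
) -> Dict[str, float]:
    """Estimate Shapley values by averaging marginal contributions
    over randomly sampled client orderings (permutation sampling)."""
    rng = random.Random(seed)
    totals = {c: 0.0 for c in clients}
    for _ in range(num_permutations):
        order = list(clients)
        rng.shuffle(order)
        coalition: FrozenSet[str] = frozenset()
        prev_value = utility(coalition)
        for client in order:
            coalition = coalition | {client}
            value = utility(coalition)
            # Marginal gain of adding this client to the clients before it.
            totals[client] += value - prev_value
            prev_value = value
    return {c: total / num_permutations for c, total in totals.items()}


def allocate_tokens(contributions: Dict[str, float], budget: float) -> Dict[str, float]:
    """Hypothetical rule: split a fixed budget in proportion to
    non-negative contributions; negative estimates receive nothing."""
    clipped = {c: max(v, 0.0) for c, v in contributions.items()}
    total = sum(clipped.values())
    if total == 0.0:
        return {c: 0.0 for c in contributions}
    return {c: budget * v / total for c, v in clipped.items()}


# Toy utility in which each client adds a fixed amount of "accuracy".
gains = {"client_a": 1.0, "client_b": 2.0, "client_c": 3.0}
values = monte_carlo_shapley(list(gains), lambda s: sum(gains[c] for c in s))
tokens = allocate_tokens(values, budget=60.0)
```

With an additive toy utility every permutation yields the same marginal gains, so the estimates equal the per-client gains and the 60-token budget splits 10/20/30. Real FL utilities are not additive, which is why the number of sampled permutations (and the cost of each `utility` evaluation) matters in practice.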

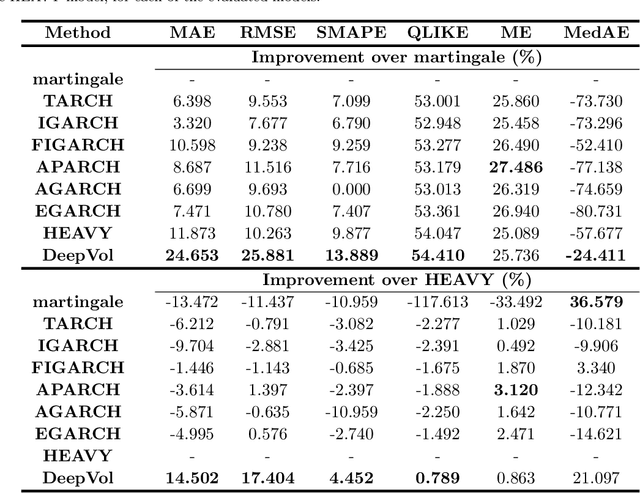

DeepVol: Volatility Forecasting from High-Frequency Data with Dilated Causal Convolutions

Sep 23, 2022

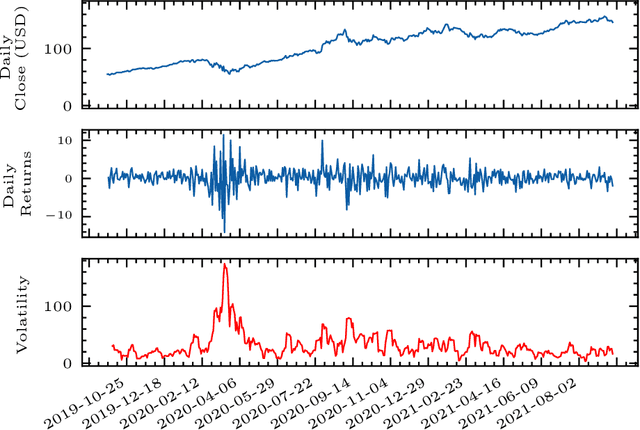

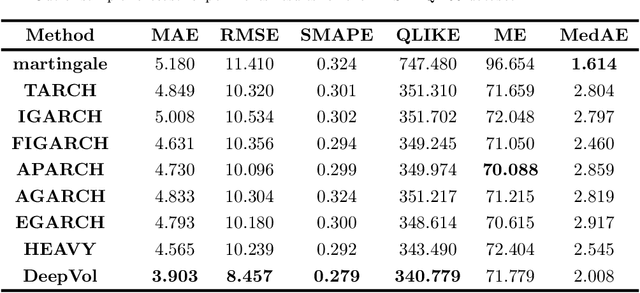

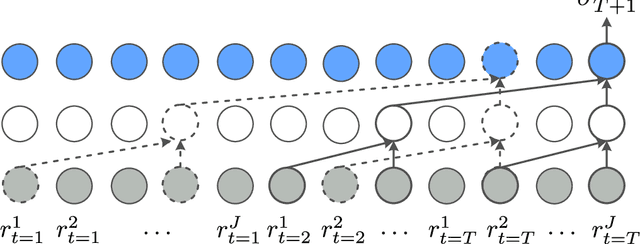

Volatility forecasts play a central role among equity risk measures. Besides traditional statistical models, modern forecasting techniques based on machine learning can readily be employed when treating volatility as a univariate, daily time series. However, econometric studies have shown that increasing the number of daily observations with high-frequency intraday data helps to improve predictions. In this work, we propose DeepVol, a model based on Dilated Causal Convolutions to forecast day-ahead volatility using high-frequency data. We show that dilated convolutional filters are ideally suited to extract relevant information from intraday financial data, thereby naturally mimicking (via a data-driven approach) the econometric models that incorporate realised measures of volatility into the forecast. This allows us to take advantage of the abundance of intraday observations and to avoid the limitations of models that use daily data, such as model misspecification or manually handcrafted features, whose design involves optimising the trade-off between accuracy and computational efficiency and makes models prone to a lack of adaptation to changing circumstances. In our analysis, we use two years of intraday data from NASDAQ-100 to evaluate DeepVol's performance. The reported empirical results suggest that the proposed deep learning-based approach learns global features from high-frequency data, achieving more accurate predictions than traditional methodologies and yielding more appropriate risk measures.
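
A dilated causal convolution computes each output from the current input and from inputs spaced `dilation` steps into the past, so stacking layers with growing dilations covers a long intraday history with few parameters while never using future observations. The pure-Python sketch below shows the single-channel operation and the receptive-field arithmetic for a stack; the kernel size, dilation schedule, and function names are illustrative assumptions, not DeepVol's published configuration.

```python
from typing import List, Sequence


def dilated_causal_conv1d(
    x: Sequence[float], weights: Sequence[float], dilation: int
) -> List[float]:
    """y[t] = sum_k weights[k] * x[t - k * dilation], treating x[i] = 0 for i < 0.

    weights[0] multiplies the current sample, weights[1] the sample
    `dilation` steps earlier, and so on; no future sample is ever used.
    """
    out: List[float] = []
    for t in range(len(x)):
        acc = 0.0
        for k, w in enumerate(weights):
            idx = t - k * dilation
            if idx >= 0:  # implicit left zero-padding keeps the output causal
                acc += w * x[idx]
        out.append(acc)
    return out


def receptive_field(kernel_size: int, dilations: Sequence[int]) -> int:
    """Number of input steps (including the current one) that can
    influence one output of a stack of dilated causal convolutions."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


# Doubling dilations: 10 layers with kernel size 2 see 1024 steps.
doubling = [2 ** i for i in range(10)]
print(receptive_field(2, doubling))  # 1024
```

Exponentially growing dilations are what let the receptive field grow geometrically with depth while the parameter count grows only linearly, which is the property that makes long intraday windows tractable.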

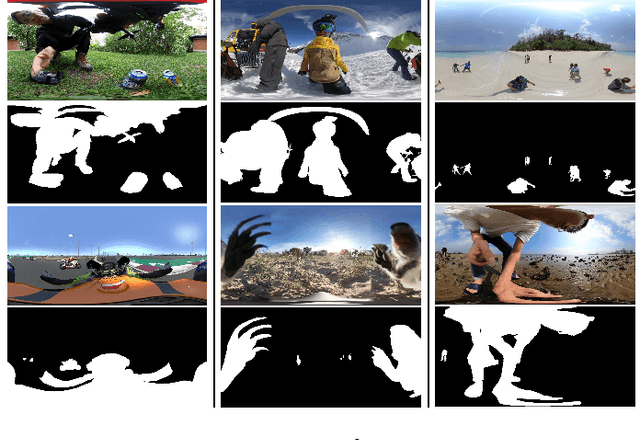

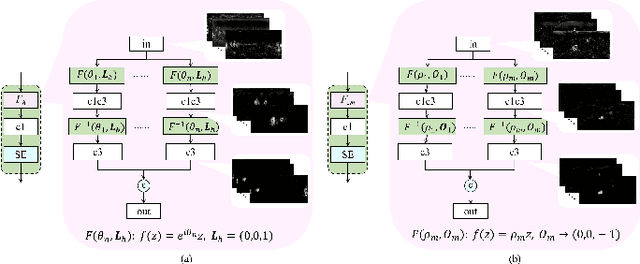

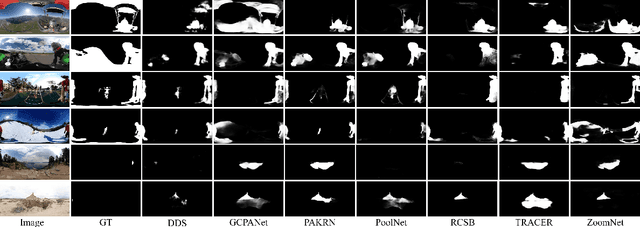

View-aware Salient Object Detection for 360° Omnidirectional Image

Sep 27, 2022

Image-based salient object detection (ISOD) in 360° scenarios is significant for understanding and applying panoramic information. However, research on 360° ISOD has not been widely explored due to the lack of large, complex, high-resolution, and well-labeled datasets. To this end, we construct a large-scale 360° ISOD dataset with object-level pixel-wise annotation on equirectangular projection (ERP), which contains rich panoramic scenes with at least 2K resolution and is, to the best of our knowledge, the largest dataset for 360° ISOD to date. By observing the data, we find that current methods face three significant challenges in panoramic scenarios: diverse distortion degrees, discontinuous edge effects, and changeable object scales. Inspired by the human observation process, we propose a view-aware salient object detection method based on a Sample Adaptive View Transformer (SAVT) module with two sub-modules to mitigate these issues. Specifically, the View Transformer (VT) sub-module contains three transform branches based on different kinds of transformations to learn various features under different views and heighten the model's tolerance of distortion, edge effects, and object scale variation. Moreover, the Sample Adaptive Fusion (SAF) sub-module adjusts the weights of the different transform branches based on the features of each sample, so that the transformed, enhanced features are fused more appropriately. Benchmark results for 20 state-of-the-art ISOD methods reveal that the constructed dataset is very challenging. Moreover, exhaustive experiments verify that the proposed approach is practical and outperforms state-of-the-art methods.
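
The abstract says SAF re-weights the VT branches per sample but does not give the weighting function. A common way to realise that idea, assumed here purely for illustration, is a softmax gate: score each branch from a descriptor of the current sample, normalise the scores, and take the weighted sum of the branch features. In the sketch below, the descriptor (the element-wise mean of the branch features) and the `gate_params` scoring vectors are hypothetical stand-ins for learned layers.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def sample_adaptive_fusion(
    branch_features: Sequence[Sequence[float]],
    gate_params: Sequence[Sequence[float]],
) -> Tuple[List[float], List[float]]:
    """Fuse one feature vector per transform branch with sample-dependent weights.

    Returns (fused_feature, branch_weights).
    """
    num_branches = len(branch_features)
    dim = len(branch_features[0])
    # Hypothetical sample descriptor: element-wise mean over branches.
    descriptor = [
        sum(f[d] for f in branch_features) / num_branches for d in range(dim)
    ]
    # One score per branch: dot product of its (hypothetical) gate vector with the descriptor.
    scores = [sum(g * v for g, v in zip(gate, descriptor)) for gate in gate_params]
    weights = softmax(scores)
    fused = [
        sum(w * f[d] for w, f in zip(weights, branch_features)) for d in range(dim)
    ]
    return fused, weights
```

With all-zero gate vectors every branch gets weight 1/3 and the fusion reduces to a plain average; a strongly positive gate on one branch pushes the fused feature toward that branch alone, which is the per-sample adaptivity the abstract describes.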

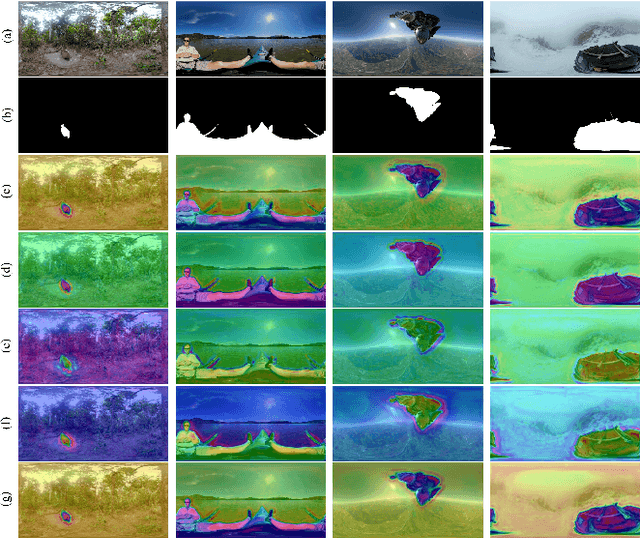

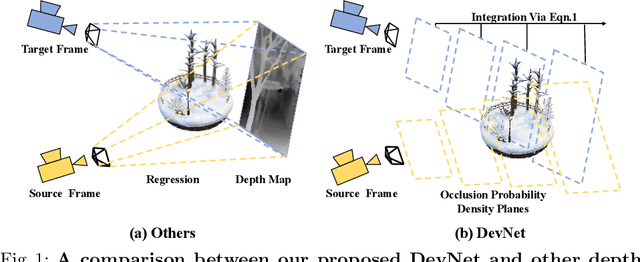

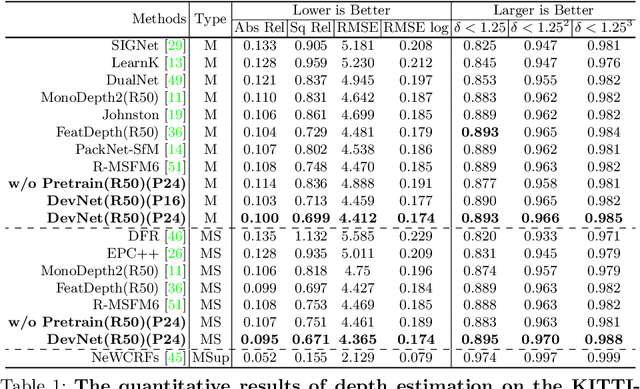

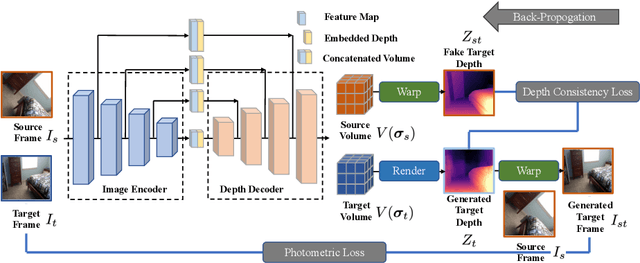

DevNet: Self-supervised Monocular Depth Learning via Density Volume Construction

Sep 20, 2022

Self-supervised depth learning from monocular images normally relies on the 2D pixel-wise photometric relation between temporally adjacent image frames. However, such methods neither fully exploit the 3D point-wise geometric correspondences nor effectively tackle the ambiguities in photometric warping caused by occlusions or illumination inconsistency. To address these problems, this work proposes the Density Volume Construction Network (DevNet), a novel self-supervised monocular depth learning framework that can consider 3D spatial information and exploit stronger geometric constraints among adjacent camera frustums. Instead of directly regressing the pixel value from a single image, our DevNet divides the camera frustum into multiple parallel planes and predicts the pointwise occlusion probability density on each plane. The final depth map is generated by integrating the density along corresponding rays. During training, novel regularization strategies and loss functions are introduced to mitigate photometric ambiguities and overfitting. Without noticeably increasing model size or running time, DevNet outperforms several representative baselines on both the KITTI-2015 outdoor dataset and the NYU-V2 indoor dataset. In particular, the root-mean-square deviation is reduced by around 4% with DevNet on both KITTI-2015 and NYU-V2 in the task of depth estimation. Code is available at https://github.com/gitkaichenzhou/DevNet.
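
The step "integrating the density along corresponding rays" can be read as the discrete volume-rendering quadrature familiar from NeRF: each plane's density becomes an opacity, the transmittance tracks how much of the ray survives to reach that plane, and the expected depth is the weighted sum of plane depths. The sketch below assumes that reading; DevNet's exact formulation, plane spacing, and handling of the last plane may differ, and the function name and `far_delta` parameter are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def expected_depth(
    plane_depths: Sequence[float],
    densities: Sequence[float],
    far_delta: float = 1e10,
) -> Tuple[float, List[float]]:
    """Render depth along one ray from per-plane densities (NeRF-style quadrature).

    plane_depths must be sorted front to back. Returns (depth, per-plane weights).
    """
    n = len(plane_depths)
    weights: List[float] = []
    transmittance = 1.0  # probability the ray is not yet occluded
    for i in range(n):
        # Spacing to the next plane; the last plane uses a large assumed value.
        delta = plane_depths[i + 1] - plane_depths[i] if i + 1 < n else far_delta
        alpha = 1.0 - math.exp(-densities[i] * delta)  # opacity of this slab
        weights.append(transmittance * alpha)
        transmittance *= 1.0 - alpha
    depth = sum(w * d for w, d in zip(weights, plane_depths))
    return depth, weights
```

A single very dense plane at depth 3 puts all the weight there and renders depth 3. Because the weights sum to at most one, the result is a proper expectation only when the ray is fully occluded; some implementations normalise by the weight sum instead.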

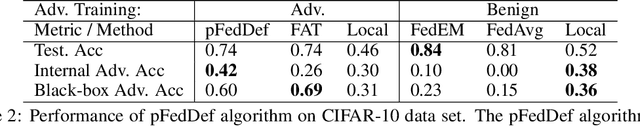

pFedDef: Defending Grey-Box Attacks for Personalized Federated Learning

Sep 17, 2022

Personalized federated learning allows clients in a distributed system to train a neural network tailored to their unique local data while leveraging information at other clients. However, clients' models are vulnerable to attacks during both the training and testing phases. In this paper, we address the issue of adversarial clients crafting evasion attacks at test time to deceive other clients. For example, adversaries may aim to deceive spam filters and recommendation systems trained with personalized federated learning for monetary gain. The adversarial clients have varying degrees of personalization based on the method of distributed learning, leading to a "grey-box" situation. We are the first to characterize the transferability of such internal evasion attacks for different learning methods and to analyze the trade-off between model accuracy and robustness depending on the degree of personalization and the similarities in client data. We introduce a defense mechanism, pFedDef, that performs personalized federated adversarial training while respecting the resource limitations at clients that inhibit adversarial training. Overall, pFedDef increases relative grey-box adversarial robustness by 62% compared to federated adversarial training and performs well even under limited system resources.
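
To make the "grey-box" setting concrete: an internal adversary crafts an evasion example against its own personalized model and hopes it transfers to a victim whose model shares much of the same federated training. The sketch below uses the fast gradient sign method (FGSM) on two hypothetical logistic-regression models with similar weights; FGSM and the weights are stand-ins chosen for illustration, not the attacks or models evaluated for pFedDef. Adversarial training, the family of defenses pFedDef builds on, would add such perturbed inputs (with their true labels) to the training data.

```python
import math
from typing import List, Sequence


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> float:
    """Logistic model: probability of class 1."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))


def _sign(g: float) -> int:
    return (g > 0) - (g < 0)


def fgsm(w: Sequence[float], b: float, x: Sequence[float], y: int, eps: float) -> List[float]:
    """One FGSM step: x + eps * sign(d loss / d x) for binary cross-entropy.

    For a logistic model, d loss / d x = (p - y) * w.
    """
    p = predict(w, b, x)
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * _sign(g) for xi, g in zip(x, grad)]


# Hypothetical personalized models that share a federated backbone.
w_adversary, w_victim, bias = [1.0, 1.0], [0.8, 1.2], -1.0
x_clean, y_true = [1.0, 1.0], 1

# The adversary only uses its own model to craft the perturbation.
x_adv = fgsm(w_adversary, bias, x_clean, y_true, eps=0.8)
print(predict(w_victim, bias, x_clean))  # about 0.73 -> classified as 1
print(predict(w_victim, bias, x_adv))    # about 0.35 -> flipped to 0
```

The more the two models' weights differ (that is, the stronger the personalization), the less reliably a perturbation computed on one transfers to the other, which is the accuracy/robustness trade-off the abstract refers to.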

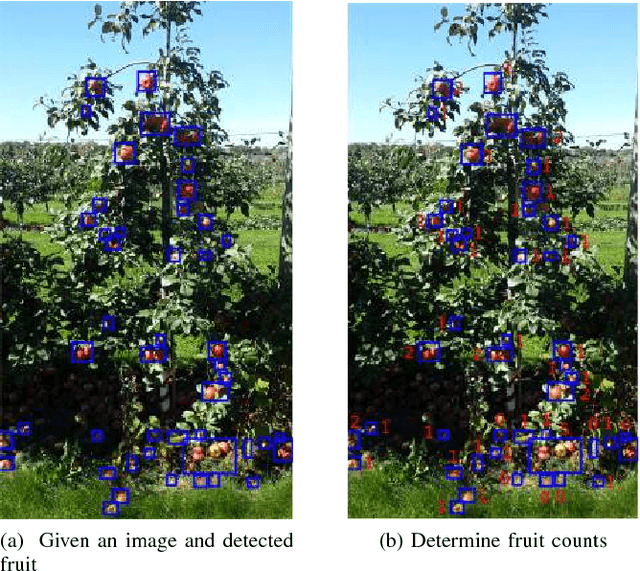

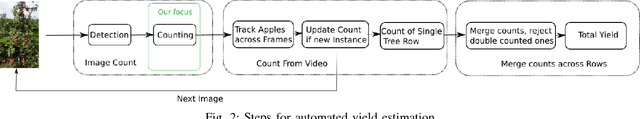

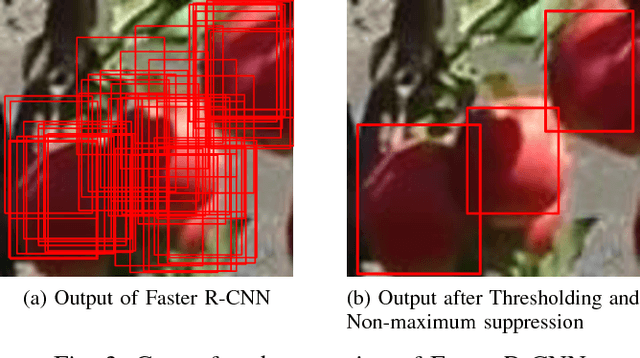

Apple Counting using Convolutional Neural Networks

Aug 24, 2022

Estimating accurate and reliable fruit and vegetable counts from images in real-world settings, such as orchards, is a challenging problem that has received significant recent attention. Estimating fruit counts before harvest provides useful information for logistics planning. While considerable progress has been made toward fruit detection, estimating the actual counts remains challenging. In practice, fruits are often clustered together. Therefore, methods that only detect fruits fail to offer general solutions for estimating accurate fruit counts. Furthermore, in horticultural studies, rather than a single yield estimate, finer information such as the distribution of the number of apples per cluster is desirable. In this work, we formulate fruit counting from images as a multi-class classification problem and solve it by training a Convolutional Neural Network. We first evaluate the per-image accuracy of our method and compare it with a state-of-the-art method based on Gaussian Mixture Models over four test datasets. Even though the parameters of the Gaussian Mixture Model-based method are specifically tuned for each dataset, our network outperforms it on three of the four datasets, with a maximum accuracy of 94%. Next, we use the method to estimate the yield for two datasets for which we have ground truth. Our method achieved 96-97% accuracy. For additional details, please see our video here: https://www.youtube.com/watch?v=Le0mb5P-SYc.
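
Framing counting as classification means the network's output classes are the possible counts themselves: class k reads as "k apples in this image patch". A minimal sketch, assuming per-patch class probabilities are already available from a trained CNN (the probabilities and the class range below are hypothetical), shows how per-image accuracy, a yield estimate, and the per-cluster count distribution mentioned in the abstract all follow from the same predictions.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple


def predicted_count(class_probs: Sequence[float]) -> int:
    """Class index k is interpreted as 'k fruits in the patch'."""
    return max(range(len(class_probs)), key=lambda k: class_probs[k])


def summarize(
    patch_probs: Sequence[Sequence[float]], true_counts: Sequence[int]
) -> Tuple[float, int, Dict[int, int]]:
    """Return (per-patch accuracy, estimated total yield, histogram of counts)."""
    preds: List[int] = [predicted_count(p) for p in patch_probs]
    accuracy = sum(p == t for p, t in zip(preds, true_counts)) / len(true_counts)
    total_yield = sum(preds)
    per_cluster_hist = dict(Counter(preds))
    return accuracy, total_yield, per_cluster_hist


# Hypothetical softmax outputs for three cluster patches (classes: 0, 1, 2 apples).
probs = [[0.1, 0.7, 0.2], [0.05, 0.15, 0.8], [0.6, 0.3, 0.1]]
acc, yield_est, hist = summarize(probs, true_counts=[1, 2, 1])
```

One consequence of this framing is that the largest countable cluster size is fixed in advance by the number of output classes.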

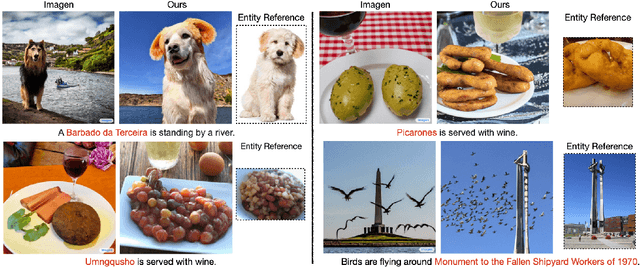

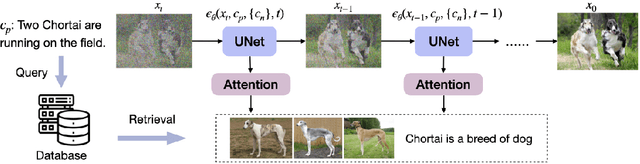

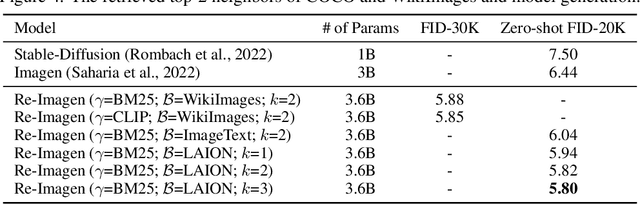

Re-Imagen: Retrieval-Augmented Text-to-Image Generator

Oct 01, 2022

Research on text-to-image generation has witnessed significant progress in generating diverse and photo-realistic images, driven by diffusion and auto-regressive models trained on large-scale image-text data. Though state-of-the-art models can generate high-quality images of common entities, they often have difficulty generating images of uncommon entities, such as 'Chortai (dog)' or 'Picarones (food)'. To tackle this issue, we present the Retrieval-Augmented Text-to-Image Generator (Re-Imagen), a generative model that uses retrieved information to produce high-fidelity and faithful images, even for rare or unseen entities. Given a text prompt, Re-Imagen accesses an external multi-modal knowledge base to retrieve relevant (image, text) pairs and uses them as references to generate the image. With this retrieval step, Re-Imagen is augmented with knowledge of the high-level semantics and low-level visual details of the mentioned entities, and thus improves its accuracy in generating the entities' visual appearances. We train Re-Imagen on a constructed dataset containing (image, text, retrieval) triples to teach the model to ground on both the text prompt and the retrieval. Furthermore, we develop a new sampling strategy that interleaves the classifier-free guidance for the text and retrieval conditions to balance text and retrieval alignment. Re-Imagen achieves new SoTA FID results on two image generation benchmarks, COCO (FID = 5.25) and WikiImage (FID = 5.82), without fine-tuning. To further evaluate the capabilities of the model, we introduce EntityDrawBench, a new benchmark that evaluates image generation for diverse entities, from frequent to rare, across multiple visual domains. Human evaluation on EntityDrawBench shows that Re-Imagen performs on par with the best prior models in photo-realism, but with significantly better faithfulness, especially on less frequent entities.
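
Classifier-free guidance combines a conditional and a less-conditioned noise prediction as eps_base + w * (eps_cond - eps_base). One way to read "interleaving" the text and retrieval guidance, assumed here for illustration, is to alternate per denoising step between a text-guided combination (base: the retrieval-only prediction) and a retrieval-guided one (base: the text-only prediction), with a ratio controlling how often each is used. The noise predictions below are plain lists standing in for a diffusion model's outputs; the schedule, the `text_ratio` parameter, the weights, and the function names are hypothetical, not Re-Imagen's published settings.

```python
import math
from typing import List, Sequence


def cfg(eps_base: Sequence[float], eps_cond: Sequence[float], weight: float) -> List[float]:
    """Classifier-free guidance: eps_base + weight * (eps_cond - eps_base)."""
    return [b + weight * (c - b) for b, c in zip(eps_base, eps_cond)]


def is_text_step(step: int, text_ratio: float) -> bool:
    """Spread text-guided steps evenly so a fraction `text_ratio` of steps use them."""
    return math.floor((step + 1) * text_ratio) > math.floor(step * text_ratio)


def interleaved_guidance(
    step: int,
    eps_joint: Sequence[float],           # conditioned on text and retrieval
    eps_text_only: Sequence[float],       # conditioned on text only
    eps_retrieval_only: Sequence[float],  # conditioned on retrieval only
    w_text: float,
    w_retrieval: float,
    text_ratio: float = 0.5,
) -> List[float]:
    if is_text_step(step, text_ratio):
        # Strengthen text alignment: move away from the retrieval-only prediction.
        return cfg(eps_retrieval_only, eps_joint, w_text)
    # Strengthen retrieval alignment: move away from the text-only prediction.
    return cfg(eps_text_only, eps_joint, w_retrieval)
```

Alternating rather than summing both guidance terms at every step keeps each update a standard single-condition CFG step, while the ratio trades text alignment against retrieval alignment over the whole sampling trajectory.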

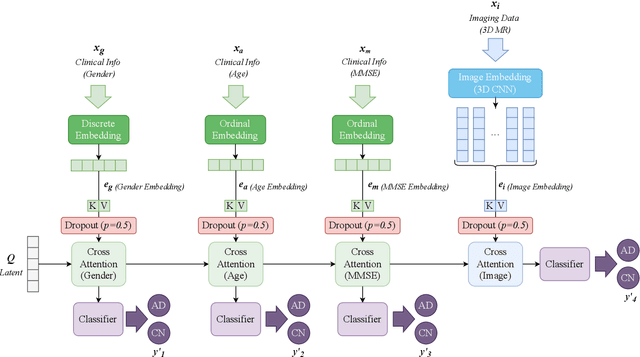

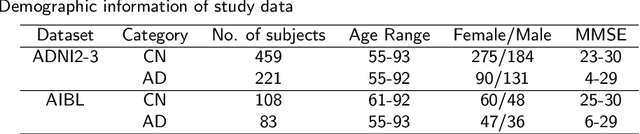

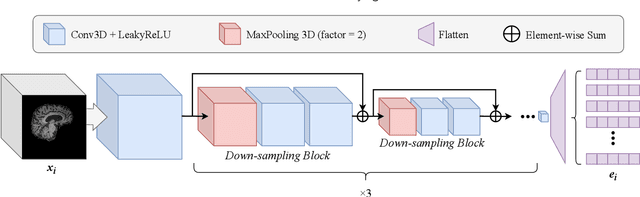

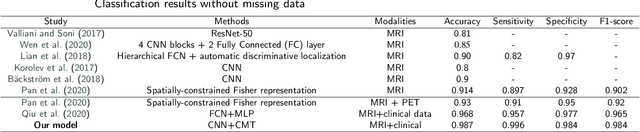

Cascaded Multi-Modal Mixing Transformers for Alzheimer's Disease Classification with Incomplete Data

Oct 01, 2022

Accurate medical classification requires a large amount of multi-modal data, in many cases in different formats. Previous studies have shown promising results when using multi-modal data, outperforming single-modality models when classifying diseases such as Alzheimer's disease (AD). However, those models are usually not flexible enough to handle missing modalities. Currently, the most common workaround is to exclude samples with missing modalities, which leads to considerable data under-utilisation. Given that labelled medical images are already scarce, the performance of data-driven methods like deep learning is severely hampered. Therefore, a multi-modal method that can gracefully handle missing data in various clinical settings is highly desirable. In this paper, we present the Multi-Modal Mixing Transformer (3MT), a novel Transformer for disease classification based on multi-modal data. In this work, we test it for AD versus cognitively normal (CN) classification using neuroimaging data, gender, age, and MMSE scores. The model uses a novel Cascaded Modality Transformers architecture with cross-attention to incorporate multi-modal information for more informed predictions. Auxiliary outputs and a novel modality dropout mechanism were incorporated to ensure an unprecedented level of modality independence and robustness. The result is a versatile network that enables the mixing of an unlimited number of modalities with different formats and full data utilisation. 3MT was first tested on the ADNI dataset and achieved a state-of-the-art test accuracy of 0.987 ± 0.0006. To test its generalisability, 3MT was directly applied to the AIBL dataset after training on the ADNI dataset, and achieved a test accuracy of 0.925 ± 0.0004 without fine-tuning. Finally, we show that Grad-CAM visualizations are also possible with our model for explainable results.
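
Modality dropout can be pictured as a training-time augmentation that hides whole inputs so the network learns not to depend on any single one. The sketch below is one simple version, assumed for illustration: each present modality is dropped independently with probability `p_drop`, at least one is always kept, and `None` marks a missing modality exactly as it would at test time. The drop rule, the keep-at-least-one guarantee, and the record values are hypothetical, and 3MT's actual mechanism may differ; the modality names follow the inputs listed in the abstract.

```python
import random
from typing import Dict, Optional, Sequence

Sample = Dict[str, Optional[Sequence[float]]]


def modality_dropout(sample: Sample, p_drop: float, rng: random.Random) -> Sample:
    """Randomly hide available modalities during training (None = missing).

    Each available modality is dropped independently with probability p_drop,
    but at least one is always kept so the sample still carries information.
    Modalities that are already missing stay missing.
    """
    available = [name for name, value in sample.items() if value is not None]
    kept = {name for name in available if rng.random() >= p_drop}
    if not kept and available:
        kept = {rng.choice(available)}
    return {name: (value if name in kept else None) for name, value in sample.items()}


# Hypothetical subject record; the MMSE score is missing, as often happens in practice.
subject = {"mri": [0.12, 0.40], "age": [72.0], "sex": [1.0], "mmse": None}
augmented = modality_dropout(subject, p_drop=0.3, rng=random.Random(0))
```

Because training already shows the model samples with arbitrary subsets of modalities hidden, a test-time record with a genuinely missing modality looks like just another training condition rather than an out-of-distribution input.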

(Non)-Coherent MU-MIMO Block Fading Channels with Finite Blocklength and Linear Processing

Oct 01, 2022

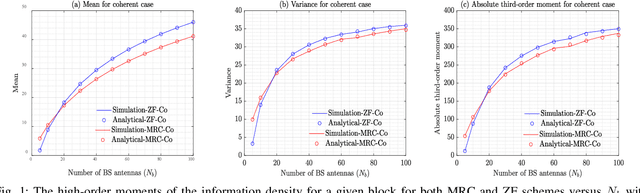

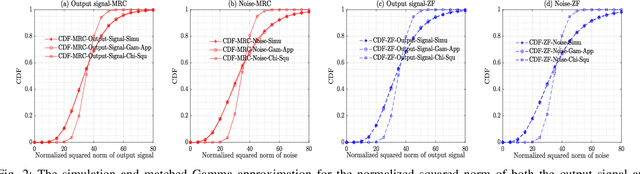

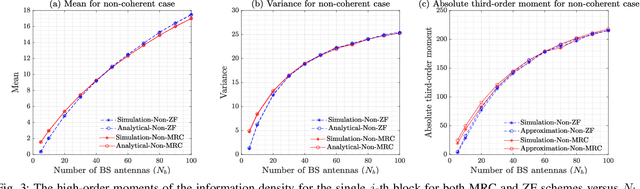

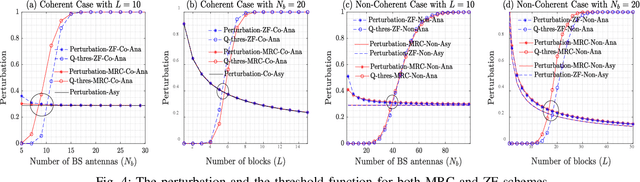

This paper studies the coherent and non-coherent multiuser multiple-input multiple-output (MU-MIMO) uplink system in the finite blocklength regime. An i.i.d. Gaussian codebook is assumed for each user. Specifically, the base station (BS) first uses two popular linear processing schemes, namely maximum-ratio combining (MRC) and zero-forcing (ZF), to combine the signals transmitted from all users. Then, the matched maximum-likelihood (ML) and mismatched nearest-neighbour (NN) decoding metrics are employed at the BS for the coherent and non-coherent cases, respectively. Under these conditions, the refined third-order achievable coding rate, expressed as a function of the blocklength, the average error probability, and the third-order term of the information density (called the channel perturbation), is derived. With this result in hand, a detailed performance analysis is then pursued, through which we derive the asymptotic results of the channel perturbation, achievable coding rate, channel capacity, and channel dispersion. These theoretical results yield a number of interesting insights into the impact of the finite blocklength: i) in our system setting, massive MIMO helps to reduce the channel perturbation of the achievable coding rate, which can even be discarded without affecting performance with just a small-to-moderate number of BS antennas and blocks; ii) in the non-coherent case, even with massive MIMO, the channel estimation errors cannot be eliminated unless the transmit powers in both the channel estimation and data transmission phases for each user are made inversely proportional to the square root of the number of BS antennas; iii) in the non-coherent case and for a fixed total blocklength, scenarios with longer coherence intervals and a smaller number of blocks offer a higher achievable coding rate.
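
MRC and ZF are the two linear combiners named above. Assuming single-antenna users, an M x K channel matrix H (M BS antennas, K users), and a received vector y = Hx + n, MRC forms H^H y, while ZF forms (H^H H)^{-1} H^H y, which removes inter-user interference when M >= K at the cost of noise amplification. The pure-Python sketch below implements both for a small noiseless example; the channel values are arbitrary, and the sketch covers only the combining step, not the paper's coding-rate analysis.

```python
from typing import List

Matrix = List[List[complex]]
Vector = List[complex]


def hermitian(a: Matrix) -> Matrix:
    """Conjugate transpose."""
    return [[a[r][c].conjugate() for r in range(len(a))] for c in range(len(a[0]))]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def matvec(a: Matrix, x: Vector) -> Vector:
    return [sum(aij * xj for aij, xj in zip(row, x)) for row in a]


def solve(a: Matrix, b: Vector) -> Vector:
    """Solve a z = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [vr - factor * vc for vr, vc in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def mrc(h: Matrix, y: Vector) -> Vector:
    """Maximum-ratio combining: H^H y."""
    return matvec(hermitian(h), y)


def zf(h: Matrix, y: Vector) -> Vector:
    """Zero-forcing: (H^H H)^{-1} H^H y; requires M >= K and full column rank."""
    h_herm = hermitian(h)
    return solve(matmul(h_herm, h), matvec(h_herm, y))


# 3 BS antennas (rows) x 2 users (columns); arbitrary example channel.
H = [[1 + 0j, 0.5j], [0.2 + 0j, 1 + 0j], [1j, 0.3 + 0j]]
x = [1 + 1j, -1 + 0j]  # symbols sent by the two users
y = matvec(H, x)       # noiseless received signal
x_zf = zf(H, y)        # recovers x up to rounding
x_mrc = mrc(H, y)      # still mixes the two users' symbols
```

With noise added to y, the same code exposes the trade-off: ZF cancels interference but amplifies noise when H^H H is ill-conditioned, whereas MRC maximizes each user's received SNR but leaves inter-user interference in place.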

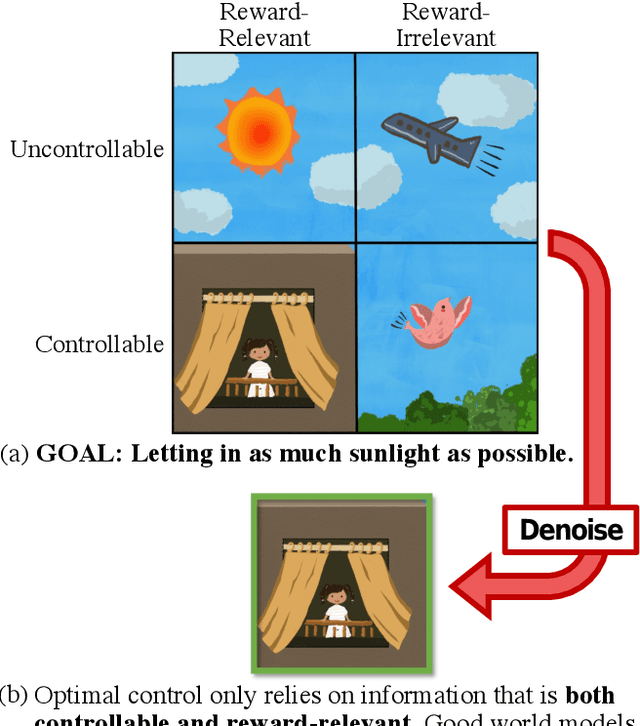

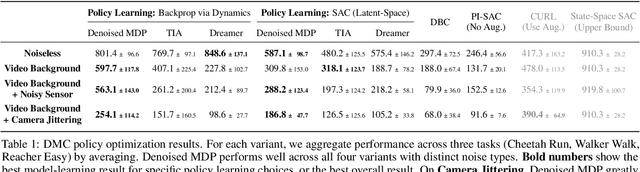

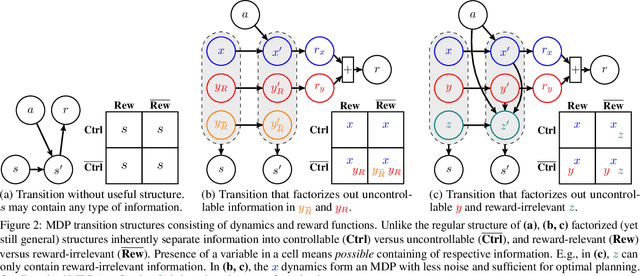

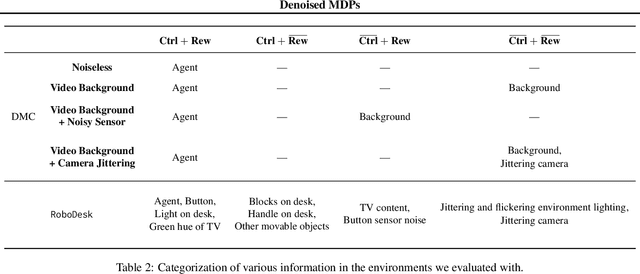

Denoised MDPs: Learning World Models Better Than the World Itself

Jul 18, 2022

The ability to separate signal from noise, and reason with clean abstractions, is critical to intelligence. With this ability, humans can efficiently perform real-world tasks without considering all possible nuisance factors. How can artificial agents do the same? What kind of information can agents safely discard as noise? In this work, we categorize information out in the wild into four types based on controllability and relation with reward, and formulate useful information as that which is both controllable and reward-relevant. This framework clarifies the kinds of information removed by various prior works on representation learning in reinforcement learning (RL), and leads to our proposed approach of learning a Denoised MDP that explicitly factors out certain noise distractors. Extensive experiments on variants of DeepMind Control Suite and RoboDesk demonstrate superior performance of our denoised world model over using raw observations alone, and over prior works, across policy optimization control tasks as well as the non-control task of joint position regression.
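
The four-way split is a 2 x 2 table over two yes/no questions: can the agent's actions affect this factor, and does it matter for reward? The small sketch below encodes that table; only the controllable, reward-relevant cell counts as useful information in the sense of the abstract. The example factors are hypothetical illustrations, not categories assigned in the paper's experiments.

```python
from enum import Enum
from typing import Dict, List, Tuple


class InfoType(Enum):
    """Four kinds of information, keyed by (controllable, reward_relevant)."""
    CTRL_REWARD = (True, True)
    CTRL_NO_REWARD = (True, False)
    NO_CTRL_REWARD = (False, True)
    NO_CTRL_NO_REWARD = (False, False)


def categorize(controllable: bool, reward_relevant: bool) -> InfoType:
    return InfoType((controllable, reward_relevant))


def is_useful(info: InfoType) -> bool:
    """Useful information is both controllable and reward-relevant."""
    return info is InfoType.CTRL_REWARD


# Hypothetical factors in a robot task: (controllable, reward-relevant).
factors: Dict[str, Tuple[bool, bool]] = {
    "gripper position": (True, True),
    "agent's shadow on the floor": (True, False),
    "target that moves on its own": (False, True),
    "flickering background video": (False, False),
}
kept: List[str] = [name for name, flags in factors.items() if is_useful(categorize(*flags))]
print(kept)  # ['gripper position']
```

Enum values are distinct tuples on purpose: reusing one value for several members would silently turn them into aliases of a single member.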
