"Information": models, code, and papers

Proton: Probing Schema Linking Information from Pre-trained Language Models for Text-to-SQL Parsing

Jun 28, 2022

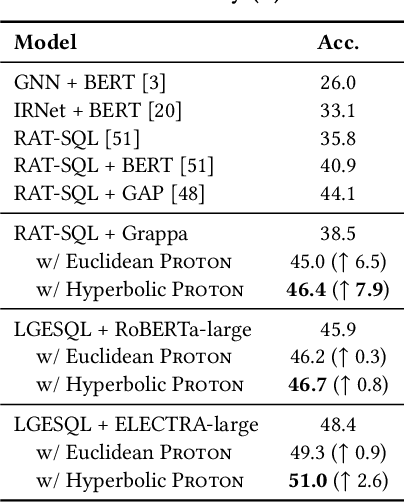

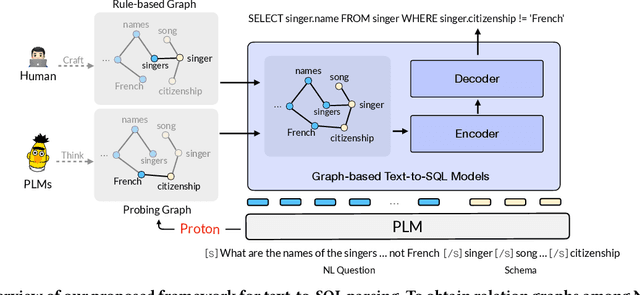

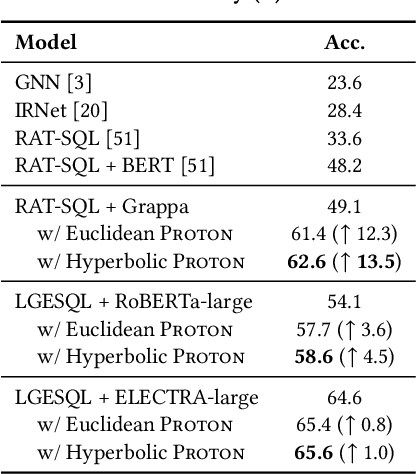

The importance of building text-to-SQL parsers which can be applied to new databases has long been acknowledged, and a critical step to achieve this goal is schema linking, i.e., properly recognizing mentions of unseen columns or tables when generating SQL queries. In this work, we propose a novel framework to elicit relational structures from large-scale pre-trained language models (PLMs) via a probing procedure based on the Poincaré distance metric, and use the induced relations to augment current graph-based parsers for better schema linking. Compared with commonly-used rule-based methods for schema linking, we found that probing relations can robustly capture semantic correspondences, even when surface forms of mentions and entities differ. Moreover, our probing procedure is entirely unsupervised and requires no additional parameters. Extensive experiments show that our framework sets new state-of-the-art performance on three benchmarks. We empirically verify that our probing procedure can indeed find desired relational structures through qualitative analysis.
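For readers unfamiliar with the Poincaré distance mentioned in the abstract above, here is a minimal sketch of the standard formula for two points inside the unit ball. The abstract does not describe the probing procedure itself, so this only illustrates the metric; the function name `poincare_distance` is a hypothetical choice made for this example.

```python
import math

def poincare_distance(u, v):
    """Standard Poincaré-ball distance between two points with norm < 1.

    Illustrative only: this is the textbook metric, not the paper's
    probing procedure.
    """
    sq_norm_u = sum(x * x for x in u)
    sq_norm_v = sum(x * x for x in v)
    if sq_norm_u >= 1.0 or sq_norm_v >= 1.0:
        raise ValueError("points must lie strictly inside the unit ball")
    sq_diff = sum((a - b) ** 2 for a, b in zip(u, v))
    # d(u, v) = arcosh(1 + 2 * ||u - v||^2 / ((1 - ||u||^2) * (1 - ||v||^2)))
    arg = 1.0 + 2.0 * sq_diff / ((1.0 - sq_norm_u) * (1.0 - sq_norm_v))
    return math.acosh(arg)
```

Distances grow rapidly as points approach the boundary of the ball, which is why this geometry is often used to embed hierarchical structure.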

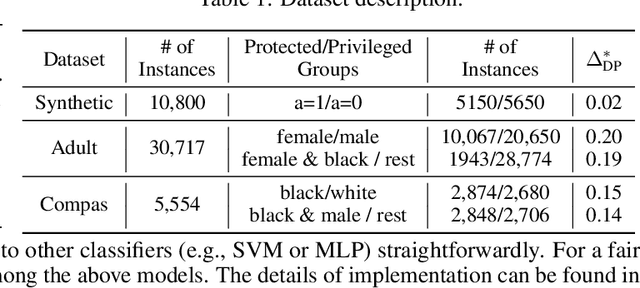

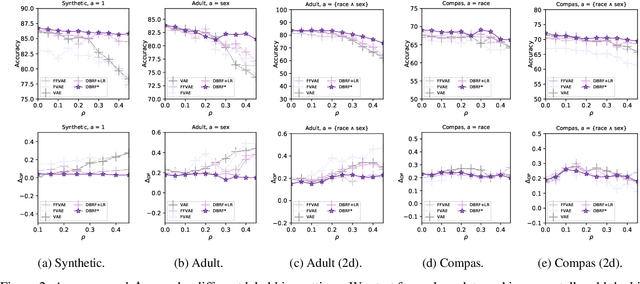

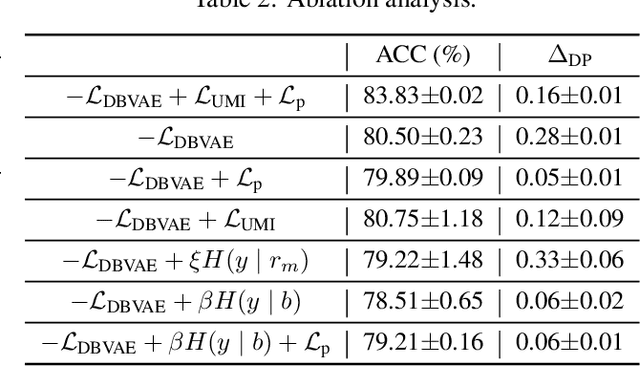

De-biased Representation Learning for Fairness with Unreliable Labels

Aug 01, 2022

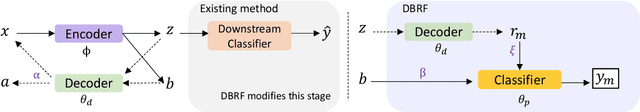

Removing bias while keeping all task-relevant information is challenging for fair representation learning methods since they would yield random or degenerate representations w.r.t. labels when the sensitive attributes correlate with labels. Existing works proposed to inject the label information into the learning procedure to overcome such issues. However, the assumption that the observed labels are clean is not always met. In fact, label bias is acknowledged as the primary source inducing discrimination. In other words, the fair pre-processing methods ignore the discrimination encoded in the labels either during the learning procedure or the evaluation stage. This contradiction puts a question mark on the fairness of the learned representations. To circumvent this issue, we explore the following question: *Can we learn fair representations predictable to latent ideal fair labels given only access to unreliable labels?* In this work, we propose a De-Biased Representation Learning for Fairness (DBRF) framework which disentangles the sensitive information from non-sensitive attributes whilst keeping the learned representations predictable to ideal fair labels rather than observed biased ones. We formulate the de-biased learning framework through information-theoretic concepts such as mutual information and information bottleneck. The core concept is that DBRF advocates not to use unreliable labels for supervision when sensitive information benefits the prediction of unreliable labels. Experiment results over both synthetic and real-world data demonstrate that DBRF effectively learns de-biased representations towards ideal labels.

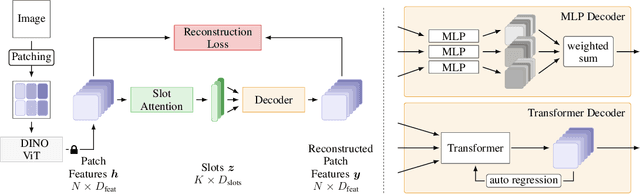

Bridging the Gap to Real-World Object-Centric Learning

Sep 29, 2022

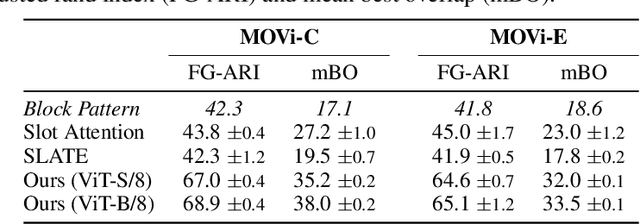

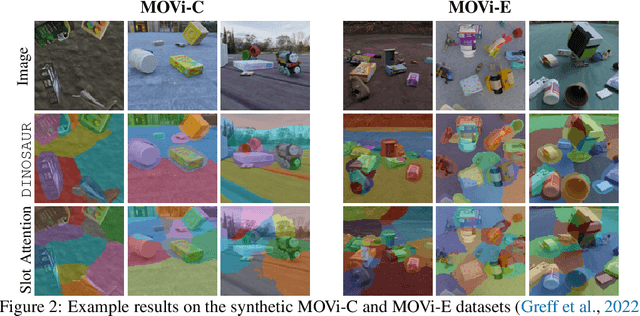

Humans naturally decompose their environment into entities at the appropriate level of abstraction to act in the world. Allowing machine learning algorithms to derive this decomposition in an unsupervised way has become an important line of research. However, current methods are restricted to simulated data or require additional information in the form of motion or depth in order to successfully discover objects. In this work, we overcome this limitation by showing that reconstructing features from models trained in a self-supervised manner is a sufficient training signal for object-centric representations to arise in a fully unsupervised way. Our approach, DINOSAUR, significantly out-performs existing object-centric learning models on simulated data and is the first unsupervised object-centric model that scales to real world-datasets such as COCO and PASCAL VOC. DINOSAUR is conceptually simple and shows competitive performance compared to more involved pipelines from the computer vision literature.

oViT: An Accurate Second-Order Pruning Framework for Vision Transformers

Oct 14, 2022

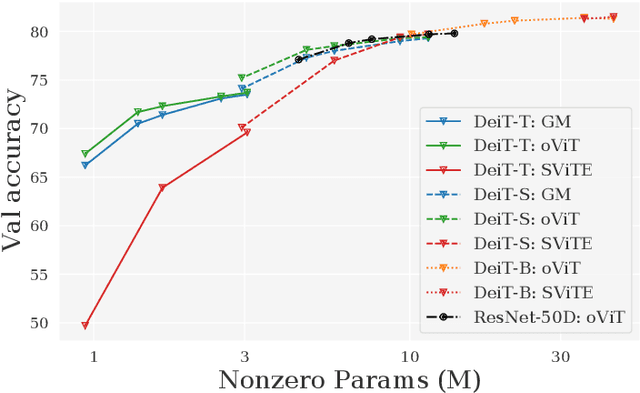

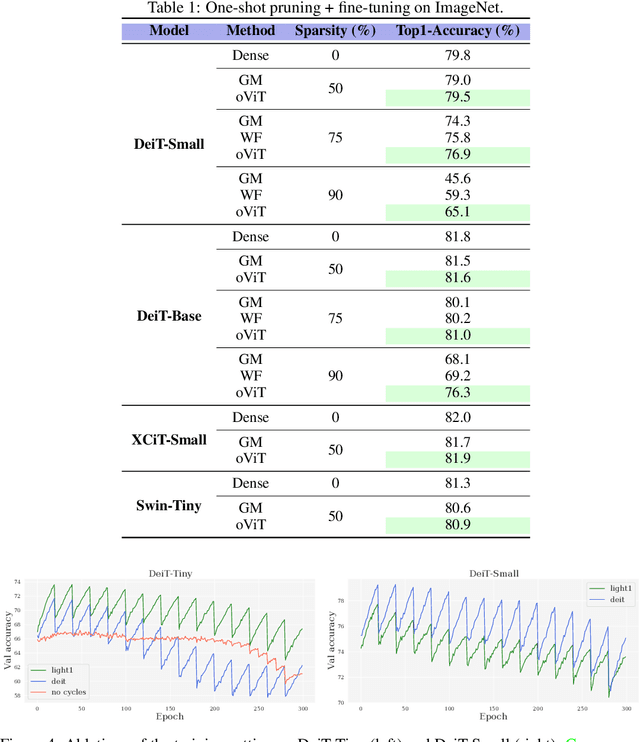

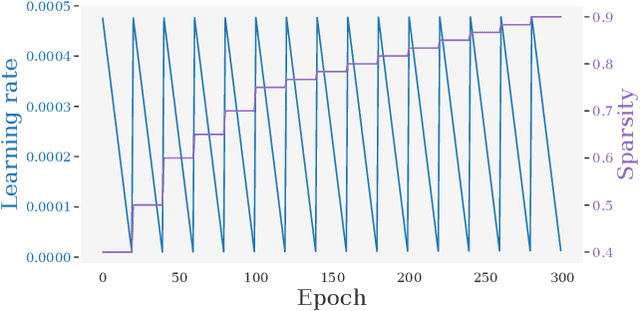

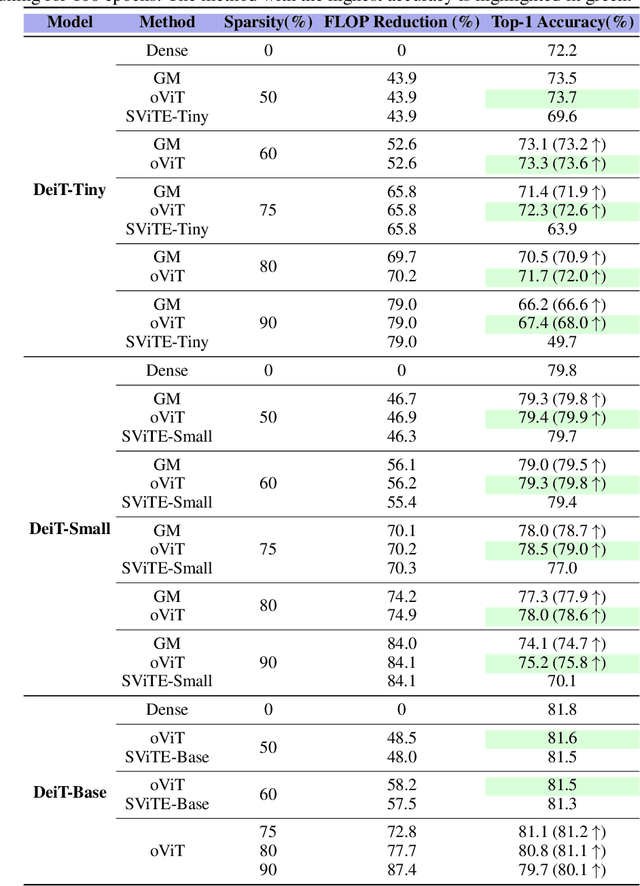

Models from the Vision Transformer (ViT) family have recently provided breakthrough results across image classification tasks such as ImageNet. Yet, they still face barriers to deployment, notably the fact that their accuracy can be severely impacted by compression techniques such as pruning. In this paper, we take a step towards addressing this issue by introducing Optimal ViT Surgeon (oViT), a new state-of-the-art method for the weight sparsification of Vision Transformer (ViT) models. At the technical level, oViT introduces a new weight pruning algorithm which leverages second-order information, specifically adapted to be both highly-accurate and efficient in the context of ViTs. We complement this accurate one-shot pruner with an in-depth investigation of gradual pruning, augmentation, and recovery schedules for ViTs, which we show to be critical for successful ViT compression. We validate our method via extensive experiments on classical ViT and DeiT models, as well as on newer variants, such as XCiT, EfficientFormer and Swin. Moreover, our results are even relevant to recently-proposed highly-accurate ResNets. Our results show for the first time that ViT-family models can in fact be pruned to high sparsity levels (e.g. ≥ 75%) with low impact on accuracy (≤ 1% relative drop), and that our approach outperforms prior methods by significant margins at high sparsities. In addition, we show that our method is compatible with structured pruning methods and quantization, and that it can lead to significant speedups on a sparsity-aware inference engine.
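To make "second-order information" concrete, the sketch below shows one step of the classic Optimal Brain Surgeon rule, which scores each weight by w_q² / (2 [H⁻¹]_qq) and compensates the remaining weights after removal. The abstract does not spell out oViT's own algorithm, so this is only the textbook technique it builds on; the function name `obs_prune_one` and the assumption that a dense inverse Hessian is already available are choices made for illustration.

```python
def obs_prune_one(weights, hessian_inv):
    """Remove the weight with the lowest Optimal Brain Surgeon saliency.

    weights:     list of floats
    hessian_inv: inverse Hessian of the loss as a list of rows
                 (assumed to be given; estimating it is the hard part)
    Returns (pruned_index, updated_weights).
    """
    n = len(weights)
    # Saliency: estimated loss increase if weight i is set to zero.
    saliency = [weights[i] ** 2 / (2.0 * hessian_inv[i][i]) for i in range(n)]
    q = min(range(n), key=saliency.__getitem__)
    # Compensating update: w <- w - (w_q / [H^-1]_qq) * H^-1[:, q]
    scale = weights[q] / hessian_inv[q][q]
    updated = [weights[j] - scale * hessian_inv[j][q] for j in range(n)]
    updated[q] = 0.0  # force an exact zero despite floating-point rounding
    return q, updated
```

With a diagonal inverse Hessian the update leaves the other weights untouched and the rule ranks weights by squared magnitude scaled by curvature; off-diagonal terms are what let the surviving weights absorb part of the removed weight's contribution.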

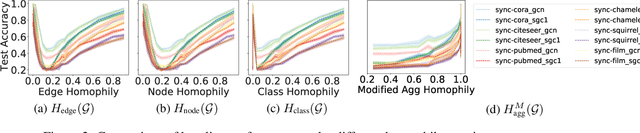

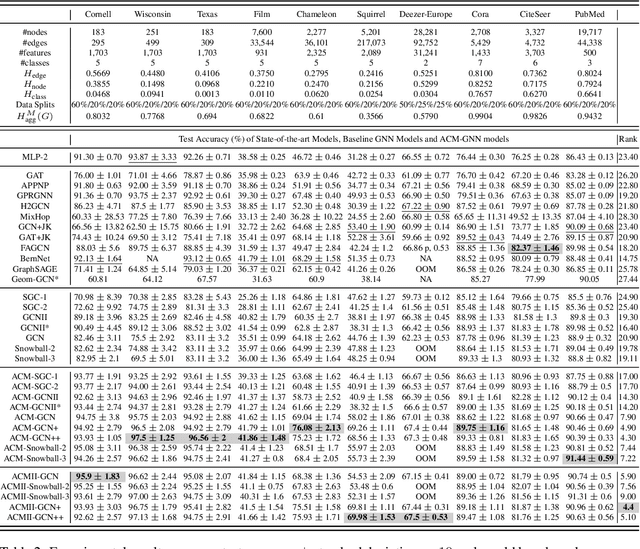

Revisiting Heterophily For Graph Neural Networks

Oct 14, 2022

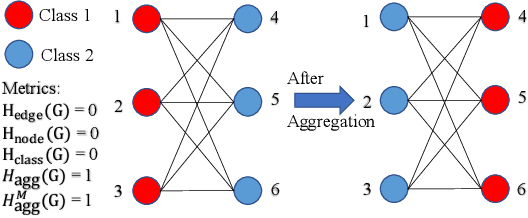

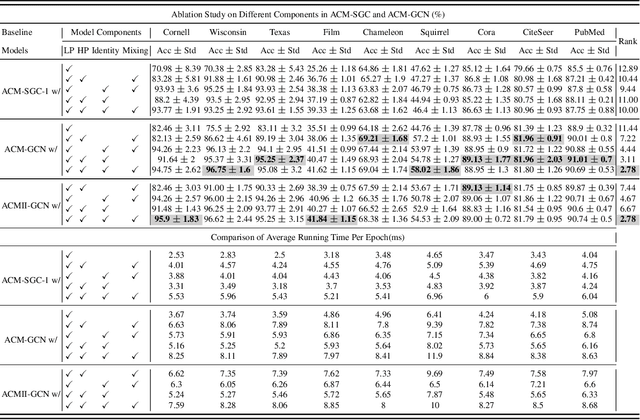

Graph Neural Networks (GNNs) extend basic Neural Networks (NNs) by using graph structures based on the relational inductive bias (homophily assumption). While GNNs have been commonly believed to outperform NNs in real-world tasks, recent work has identified a non-trivial set of datasets where their performance compared to NNs is not satisfactory. Heterophily has been considered the main cause of this empirical observation and numerous works have been put forward to address it. In this paper, we first revisit the widely used homophily metrics and point out that their consideration of only graph-label consistency is a shortcoming. Then, we study heterophily from the perspective of post-aggregation node similarity and define new homophily metrics, which are potentially advantageous compared to existing ones. Based on this investigation, we prove that some harmful cases of heterophily can be effectively addressed by a local diversification operation. Then, we propose Adaptive Channel Mixing (ACM), a framework to adaptively exploit aggregation, diversification and identity channels node-wise to extract richer localized information for diverse node heterophily situations. ACM is more powerful than the commonly used uni-channel framework for node classification tasks on heterophilic graphs and is easy to implement in baseline GNN layers. When evaluated on 10 benchmark node classification tasks, ACM-augmented baselines consistently achieve significant performance gains, exceeding state-of-the-art GNNs on most tasks without incurring significant computational burden.
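As a point of reference for the "widely used homophily metrics" the abstract revisits, a minimal sketch of edge homophily follows: the fraction of edges whose endpoints share a label. This is one of the existing graph-label consistency metrics, not the new post-aggregation metric the paper defines; the function name and the edge-list representation are assumptions for this example.

```python
def edge_homophily(edges, labels):
    """Fraction of edges that connect two nodes with the same label.

    edges:  iterable of (u, v) node-index pairs
    labels: sequence where labels[i] is the class of node i
    """
    edges = list(edges)
    if not edges:
        raise ValueError("graph has no edges")
    same = sum(1 for u, v in edges if labels[u] == labels[v])
    return same / len(edges)
```

Values near 1 indicate a homophilic graph and values near 0 a heterophilic one. Because the score depends only on labels and edges, it cannot tell apart graphs that behave differently after neighborhood aggregation, which is the shortcoming the abstract points to.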

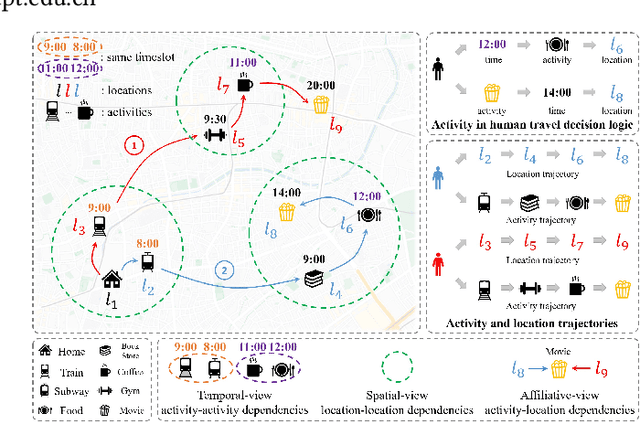

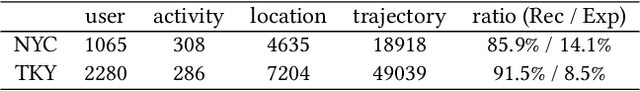

HGARN: Hierarchical Graph Attention Recurrent Network for Human Mobility Prediction

Oct 14, 2022

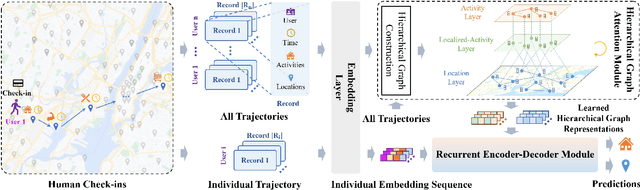

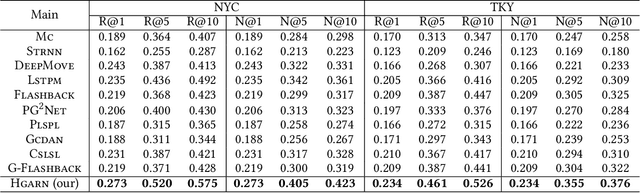

Human mobility prediction is a fundamental task essential for various applications, including urban planning, transportation services, and location recommendation. Existing approaches often ignore activity information crucial for reasoning about human preferences and routines, or adopt a simplified representation of the dependencies between time, activities and locations. To address these issues, we present Hierarchical Graph Attention Recurrent Network (HGARN) for human mobility prediction. Specifically, we construct a hierarchical graph based on all users' historical mobility records and employ a Hierarchical Graph Attention Module to capture complex time-activity-location dependencies. This way, HGARN can learn representations with rich contextual semantics to model user preferences at the global level. We also propose a model-agnostic history-enhanced confidence (MaHec) label to focus our model on each user's individual-level preferences. Finally, we introduce a Recurrent Encoder-Decoder Module, which employs recurrent structures to jointly predict users' next activities (as an auxiliary task) and locations. For model evaluation, we test the performance of HGARN against existing SOTAs in recurring and explorative settings. The recurring setting focuses more on assessing models' capabilities to capture users' individual-level preferences. In contrast, the results in the explorative setting tend to reflect the power of different models to learn users' global-level preferences. Overall, our model outperforms other baselines significantly in the main, recurring, and explorative settings based on two real-world human mobility data benchmarks. Source codes of HGARN are available at https://github.com/YihongT/HGARN.

Transformer-Based Speech Synthesizer Attribution in an Open Set Scenario

Oct 14, 2022

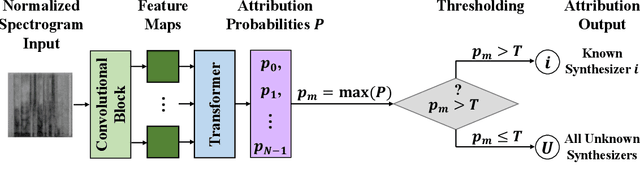

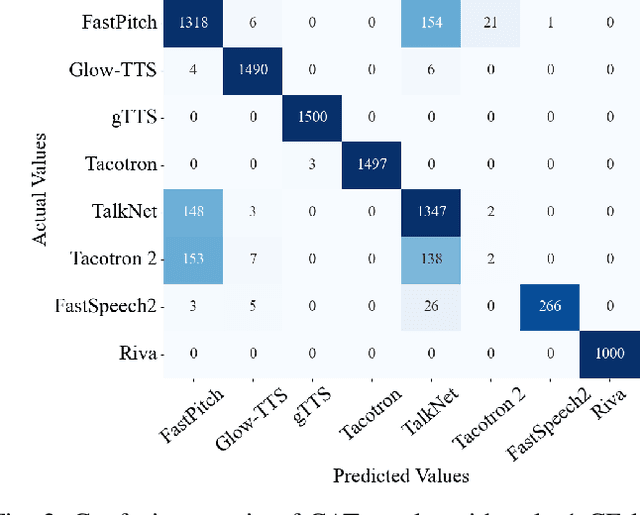

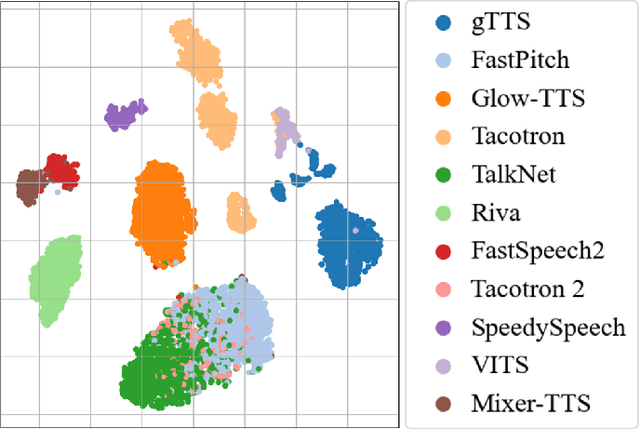

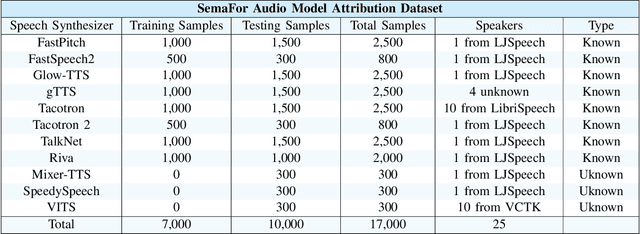

Speech synthesis methods can create realistic-sounding speech, which may be used for fraud, spoofing, and misinformation campaigns. Forensic methods that detect synthesized speech are important for protection against such attacks. Forensic attribution methods provide even more information about the nature of synthesized speech signals because they identify the specific speech synthesis method (i.e., speech synthesizer) used to create a speech signal. Due to the increasing number of realistic-sounding speech synthesizers, we propose a speech attribution method that generalizes to new synthesizers not seen during training. To do so, we investigate speech synthesizer attribution in both a closed set scenario and an open set scenario. In other words, we consider some speech synthesizers to be "known" synthesizers (i.e., part of the closed set) and others to be "unknown" synthesizers (i.e., part of the open set). We represent speech signals as spectrograms and train our proposed method, known as compact attribution transformer (CAT), on the closed set for multi-class classification. Then, we extend our analysis to the open set to attribute synthesized speech signals to both known and unknown synthesizers. We apply t-distributed stochastic neighbor embedding (t-SNE) to the latent space of the trained CAT to differentiate between each unknown synthesizer. Additionally, we explore poly-1 loss formulations to improve attribution results. Our proposed approach successfully attributes synthesized speech signals to their respective speech synthesizers in both closed and open set scenarios.

* Accepted to the 2022 IEEE International Conference on Machine Learning and Applications
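The poly-1 loss mentioned in the abstract above is commonly written as cross-entropy plus a first-order polynomial term, L = -log(p_t) + ε(1 - p_t), where p_t is the predicted probability of the true class. The sketch below computes it for a single example. The abstract does not say which formulations or ε values the authors explored, so `epsilon=1.0` and the function name are assumptions for illustration only.

```python
import math

def poly1_cross_entropy(logits, target, epsilon=1.0):
    """Poly-1 loss for one example: cross-entropy + epsilon * (1 - p_t).

    logits:  list of unnormalized class scores
    target:  index of the true class
    epsilon: weight of the extra polynomial term (assumed value)
    """
    # Numerically stable softmax probability of the target class.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    p_t = exps[target] / sum(exps)
    return -math.log(p_t) + epsilon * (1.0 - p_t)
```

Setting `epsilon=0` recovers plain cross-entropy, so the extra term can be read as a tunable adjustment to how strongly low-confidence predictions are penalized.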

Robust, General, and Low Complexity Acoustic Scene Classification Systems and An Effective Visualization for Presenting a Sound Scene Context

Oct 16, 2022

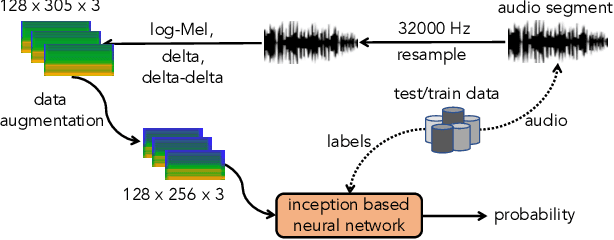

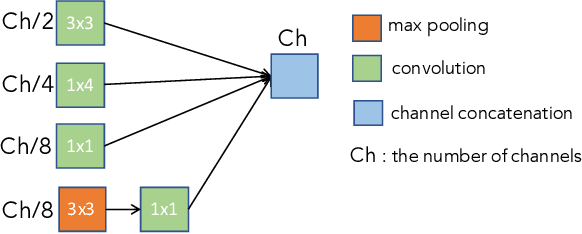

In this paper, we present a comprehensive analysis of Acoustic Scene Classification (ASC), the task of identifying the scene of an audio recording from its acoustic signature. In particular, we first propose an inception-based and low footprint ASC model, referred to as the ASC baseline. The proposed ASC baseline is then compared with benchmark and high-complexity network architectures of MobileNetV1, MobileNetV2, VGG16, VGG19, ResNet50V2, ResNet152V2, DenseNet121, DenseNet201, and Xception. Next, we improve the ASC baseline by proposing a novel deep neural network architecture which leverages residual-inception architectures and multiple kernels. Given the novel residual-inception (NRI) model, we further evaluate the trade-off between the model complexity and the model accuracy performance. Finally, we evaluate whether sound events occurring in a sound scene recording can help to improve ASC accuracy, then indicate how a sound scene context is well presented by combining both sound scene and sound event information. We conduct extensive experiments on various ASC datasets, including Crowded Scenes, IEEE AASP Challenge on Detection and Classification of Acoustic Scenes and Events (DCASE) 2018 Task 1A and 1B, 2019 Task 1A and 1B, 2020 Task 1A, 2021 Task 1A, and 2022 Task 1. The experimental results on several different ASC challenges highlight two main achievements: the first is to propose robust, general, and low complexity ASC systems which are suitable for real-life applications on a wide range of edge devices and mobile devices; the second is to propose an effective visualization method for comprehensively presenting a sound scene context.

CAIR: Fast and Lightweight Multi-Scale Color Attention Network for Instagram Filter Removal

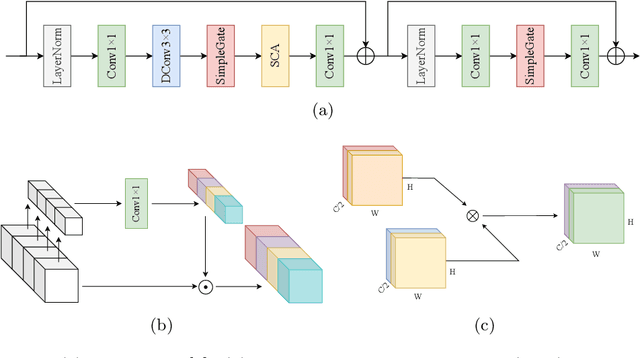

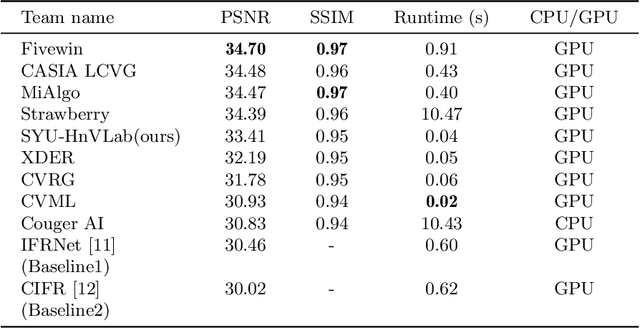

Aug 30, 2022

Image restoration is an important and challenging task in computer vision. Reverting a filtered image to its original image is helpful in various computer vision tasks. We employ a nonlinear activation function free network (NAFNet) for a fast and lightweight model and add a color attention module that extracts useful color information for better accuracy. We propose an accurate, fast, lightweight network with multi-scale and color attention for Instagram filter removal (CAIR). Experiment results show that the proposed CAIR outperforms existing Instagram filter removal networks in a fast and lightweight way, being about 11× faster and 2.4× lighter while exceeding 3.69 dB PSNR on the IFFI dataset. CAIR can successfully remove the Instagram filter with high quality and restore color information in qualitative results. The source code and pretrained weights are available at https://github.com/HnV-Lab/CAIR.
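Since the abstract above reports results in dB PSNR, a minimal sketch of how peak signal-to-noise ratio is usually computed may help: PSNR = 10 · log10(MAX² / MSE). The flat-list image representation, the 8-bit peak value of 255, and the function name are assumptions for this example; the paper's exact evaluation protocol is not given in the abstract.

```python
import math

def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized images.

    reference, restored: flat sequences of pixel intensities
    max_value:           peak intensity (255 assumed for 8-bit images)
    """
    if len(reference) != len(restored) or len(reference) == 0:
        raise ValueError("images must be non-empty and the same size")
    mse = sum((a - b) ** 2 for a, b in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Because the scale is logarithmic, a gain of a few dB corresponds to a substantial reduction in mean squared error.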

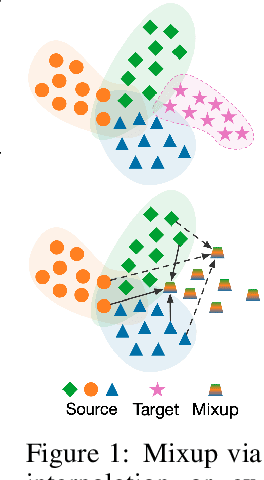

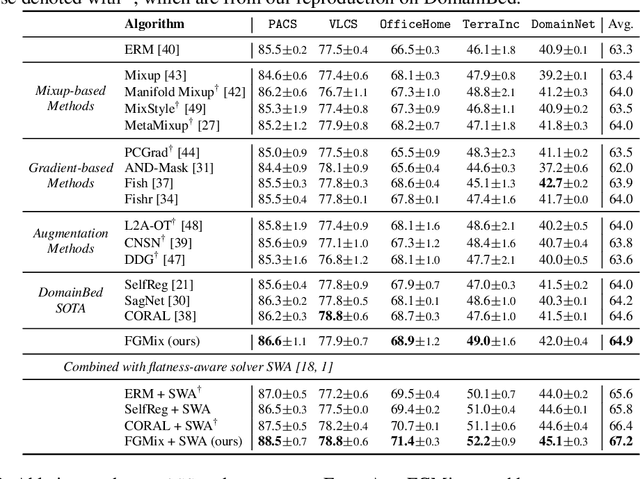

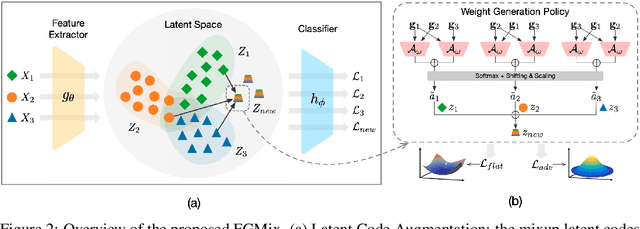

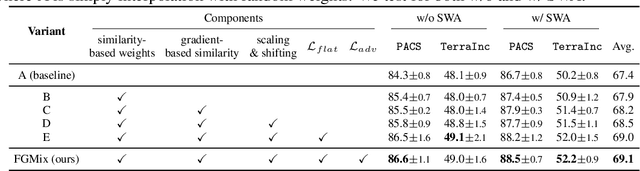

Learning Gradient-based Mixup towards Flatter Minima for Domain Generalization

Sep 29, 2022

To address the distribution shifts between training and test data, domain generalization (DG) leverages multiple source domains to learn a model that generalizes well to unseen domains. However, existing DG methods generally suffer from overfitting to the source domains, partly due to the limited coverage of the expected region in feature space. Motivated by this, we propose to perform mixup with data interpolation and extrapolation to cover the potential unseen regions. To prevent the detrimental effects of unconstrained extrapolation, we carefully design a policy to generate the instance weights, named Flatness-aware Gradient-based Mixup (FGMix). The policy employs a gradient-based similarity to assign greater weights to instances that carry more invariant information, and learns the similarity function towards flatter minima for better generalization. On the DomainBed benchmark, we validate the efficacy of various designs of FGMix and demonstrate its superiority over other DG algorithms.
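The difference between mixup by interpolation and by extrapolation, as described in the abstract above, comes down to the range of the mixing coefficient. The sketch below uses a fixed coefficient `lam` as a stand-in; FGMix's gradient-based policy for generating instance weights is not described in enough detail in the abstract to reproduce, and the function name is hypothetical.

```python
def mix_features(x_i, x_j, lam):
    """Combine two feature vectors as lam * x_i + (1 - lam) * x_j.

    0 <= lam <= 1      : interpolation (result lies between x_i and x_j)
    lam < 0 or lam > 1 : extrapolation (result lies beyond one endpoint)
    """
    if len(x_i) != len(x_j):
        raise ValueError("feature vectors must have the same length")
    return [lam * a + (1.0 - lam) * b for a, b in zip(x_i, x_j)]
```

For example, `mix_features([0.0, 0.0], [2.0, 4.0], 0.5)` gives the midpoint `[1.0, 2.0]`, while `lam=-0.5` gives `[3.0, 6.0]`, a point past `x_j` on the same line. Unconstrained extrapolation can move samples far from the data, which is the risk the abstract says the learned weighting policy is meant to control.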
