"Information": models, code, and papers

WebFormer: The Web-page Transformer for Structure Information Extraction

Feb 01, 2022

Structure information extraction refers to the task of extracting structured text fields from web pages, such as extracting a product offer from a shopping page including product title, description, brand and price. It is an important research topic which has been widely studied in document understanding and web search. Recent natural language models with sequence modeling have demonstrated state-of-the-art performance on web information extraction. However, effectively serializing tokens from unstructured web pages is challenging in practice due to a variety of web layout patterns. Limited work has focused on modeling the web layout for extracting the text fields. In this paper, we introduce WebFormer, a Web-page transFormer model for structure information extraction from web documents. First, we design HTML tokens for each DOM node in the HTML by embedding representations from their neighboring tokens through graph attention. Second, we construct rich attention patterns between HTML tokens and text tokens, which leverage the web layout for effective attention weight computation. We conduct an extensive set of experiments on SWDE and Common Crawl benchmarks. Experimental results demonstrate the superior performance of the proposed approach over several state-of-the-art methods.

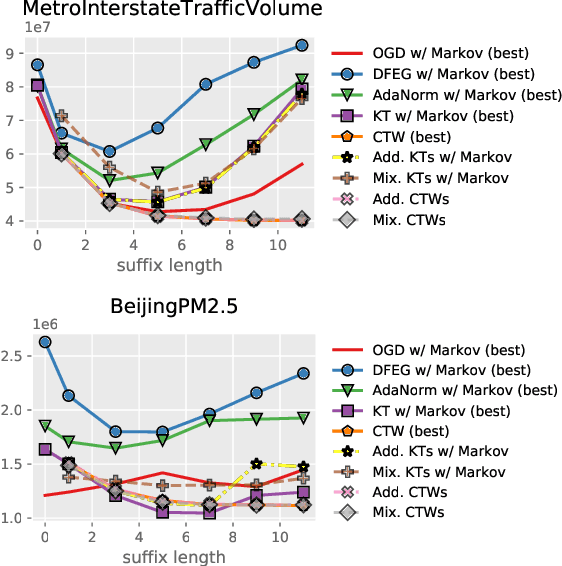

Parameter-free Online Linear Optimization with Side Information via Universal Coin Betting

Feb 04, 2022

A class of parameter-free online linear optimization algorithms is proposed that harnesses the structure of an adversarial sequence by adapting to some side information. These algorithms combine the reduction technique of Orabona and Pál (2016) for adapting coin betting algorithms for online linear optimization with universal compression techniques in information theory for incorporating sequential side information to coin betting. Concrete examples are studied in which the side information has a tree structure and consists of quantized values of the previous symbols of the adversarial sequence, including fixed-order and variable-order Markov cases. By modifying the context-tree weighting technique of Willems, Shtarkov, and Tjalkens (1995), the proposed algorithm is further refined to achieve the best performance over all adaptive algorithms with tree-structured side information of a given maximum order in a computationally efficient manner.
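As a rough illustration of the coin-betting reduction mentioned in this abstract, the sketch below implements a one-dimensional Krichevsky-Trofimov (KT) bettor used as an online linear optimizer. It covers only the base reduction without side information, not the paper's side-information or context-tree algorithms. The function name `kt_coin_betting`, the `initial_wealth` parameter, and the assumption that gradients lie in [-1, 1] are illustrative choices, not taken from the paper.

```python
def kt_coin_betting(gradients, initial_wealth=1.0):
    """One-dimensional KT coin betting used for online linear optimization.

    Assumes every gradient g_t lies in [-1, 1]. The "coin outcome" at
    round t is c_t = -g_t, and the learner plays w_t = beta_t * wealth,
    where beta_t is the running sum of past outcomes divided by t.
    Returns the list of predictions and the final wealth.
    """
    wealth = initial_wealth
    coin_sum = 0.0          # sum of past coin outcomes c_1 .. c_{t-1}
    predictions = []
    for t, g in enumerate(gradients, start=1):
        beta = coin_sum / t  # KT betting fraction, |beta| < 1
        w = beta * wealth    # signed bet = prediction for this round
        predictions.append(w)
        c = -g               # outcome revealed after the prediction
        wealth += c * w      # wealth grows when the bet matches the outcome
        coin_sum += c
    return predictions, wealth


if __name__ == "__main__":
    preds, final_wealth = kt_coin_betting([-1.0, -1.0, -1.0])
    print(preds, final_wealth)
```

No learning rate appears anywhere in the update, which is the sense in which such algorithms are called parameter-free: the bet size is driven only by the accumulated wealth and the past outcomes.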

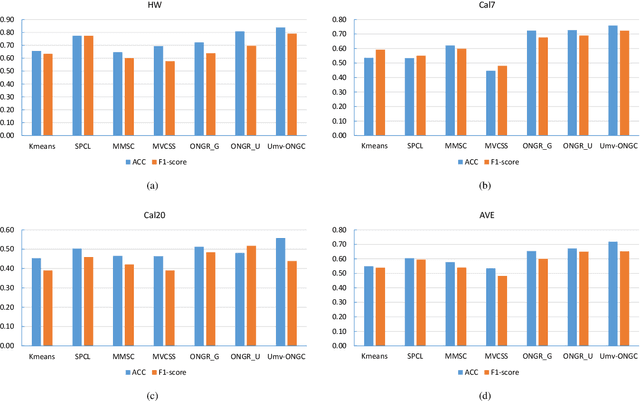

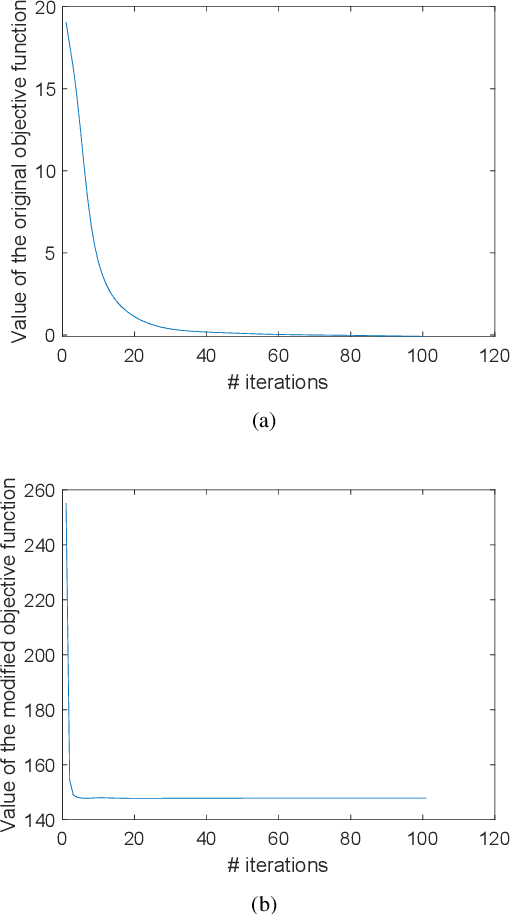

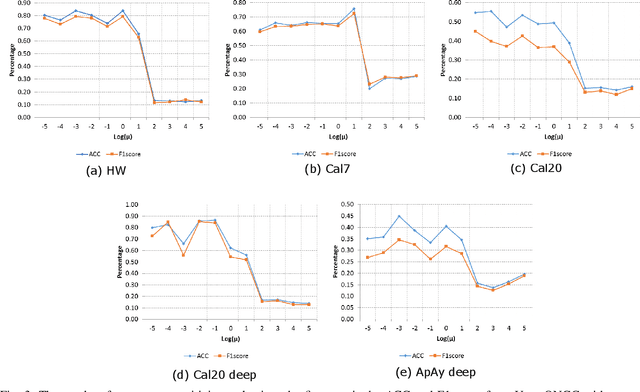

Unified Multi-View Orthonormal Non-Negative Graph Based Clustering Framework

Nov 03, 2022

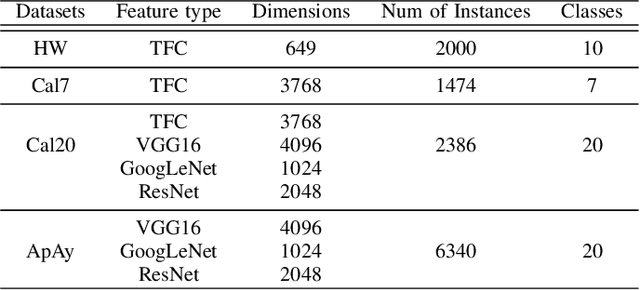

Spectral clustering is an effective methodology for unsupervised learning. Most traditional spectral clustering algorithms involve a separate two-step procedure and apply the transformed new representations for the final clustering results. Recently, much progress has been made to utilize the non-negative feature property in real-world data and to jointly learn the representation and clustering results. However, to our knowledge, no previous work considers a unified model that incorporates the important multi-view information with those properties, which severely limits the performance of existing methods. In this paper, we formulate a novel clustering model, which exploits the non-negative feature property and, more importantly, incorporates the multi-view information into a unified joint learning framework: the unified multi-view orthonormal non-negative graph based clustering framework (Umv-ONGC). Then, we derive an effective three-stage iterative solution for the proposed model and provide analytic solutions for the three sub-problems from the three stages. We also explore, for the first time, the multi-model non-negative graph-based approach to clustering data based on deep features. Extensive experiments on three benchmark data sets demonstrate the effectiveness of the proposed method.

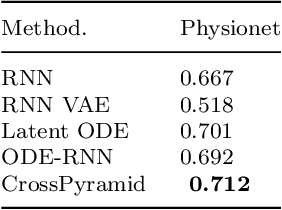

CrossPyramid: Neural Ordinary Differential Equations Architecture for Partially-observed Time-series

Dec 07, 2022

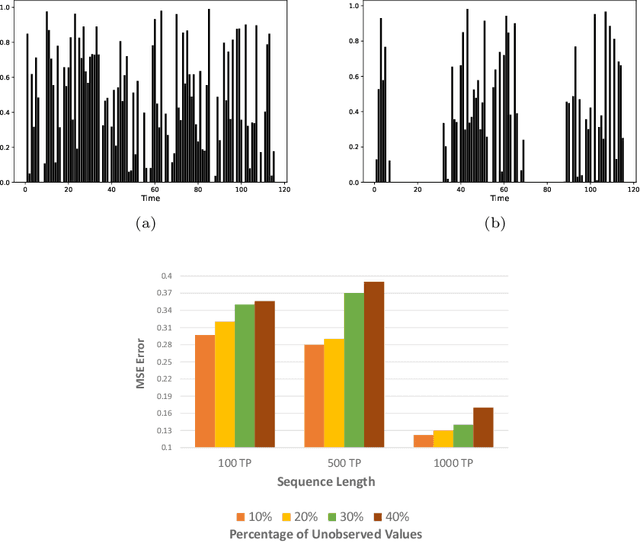

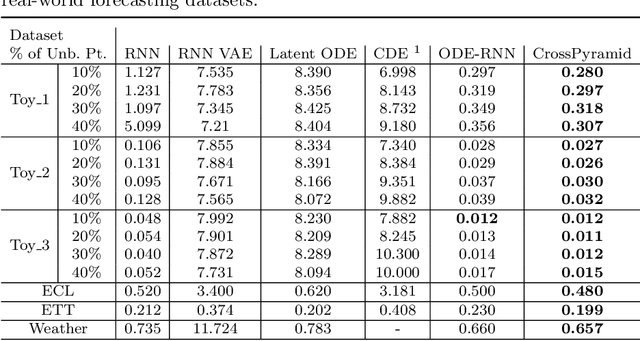

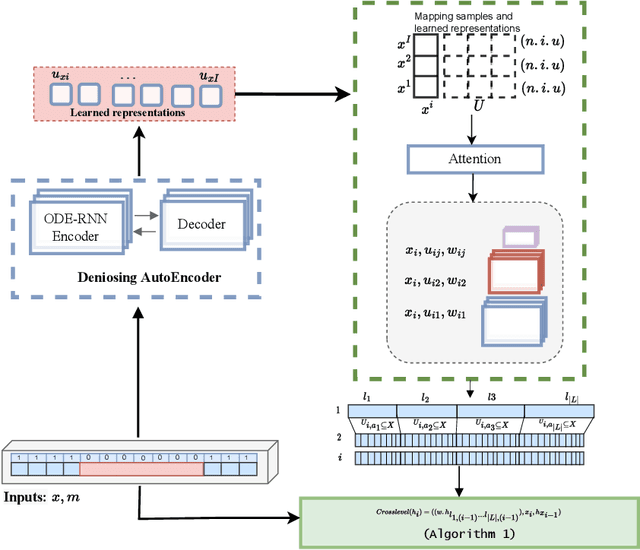

Ordinary Differential Equations (ODE)-based models have become popular foundation models to solve many time-series problems. Combining neural ODEs with traditional RNN models has provided the best representation for irregular time series. However, ODE-based models require the trajectory of hidden states to be defined based on the initial observed value or the last available observation. This fact raises questions about how long the generated hidden state remains sufficient and whether it is effective when long sequences are used instead of the typically used shorter sequences. In this article, we introduce CrossPyramid, a novel ODE-based model that aims to enhance the generalizability of sequence representations. CrossPyramid does not rely only on the hidden state from the last observed value; it also considers ODE latent representations learned from other samples. The main idea of our proposed model is to define the hidden state for the unobserved values based on the non-linear correlation between samples. Accordingly, CrossPyramid is built with three distinctive parts: (1) an ODE Auto-Encoder to learn the best data representation; (2) a pyramidal attention method to categorize the learned representations (hidden state) based on the relationship characteristics between samples; (3) a cross-level ODE-RNN to integrate the previously learned information and provide the final latent state for each sample. Through extensive experiments on partially-observed synthetic and real-world datasets, we show that the proposed architecture can effectively model the long gaps in intermittent series and outperforms state-of-the-art approaches. The results show an average improvement of 10% on univariate and multivariate datasets for both forecasting and classification tasks.

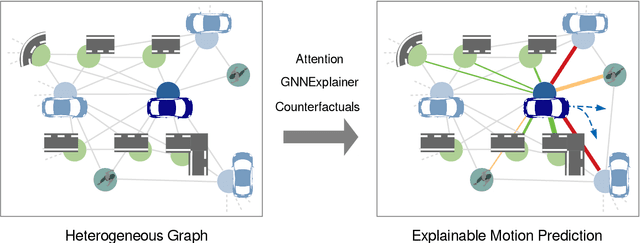

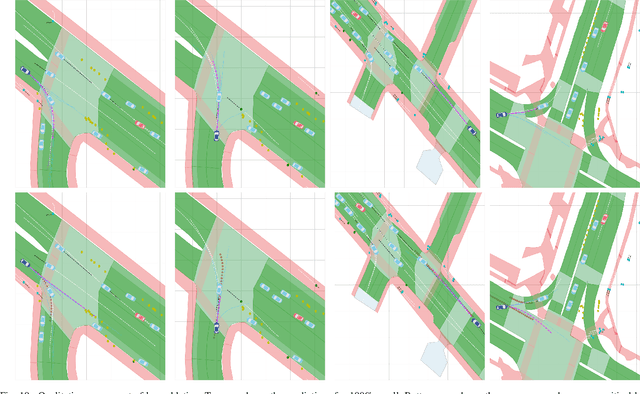

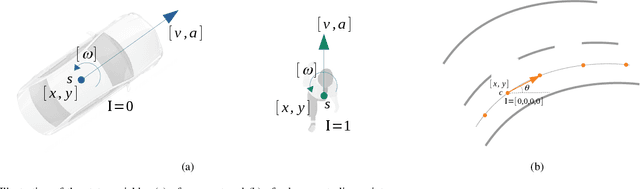

Towards Explainable Motion Prediction using Heterogeneous Graph Representations

Dec 07, 2022

Motion prediction systems aim to capture the future behavior of traffic scenarios, enabling autonomous vehicles to perform safe and efficient planning. The evolution of these scenarios is highly uncertain and depends on the interactions of agents with static and dynamic objects in the scene. GNN-based approaches have recently gained attention as they are well suited to naturally model these interactions. However, one of the main challenges that remains unexplored is how to address the complexity and opacity of these models in order to deal with the transparency requirements for autonomous driving systems, which include aspects such as interpretability and explainability. In this work, we aim to improve the explainability of motion prediction systems by using different approaches. First, we propose a new Explainable Heterogeneous Graph-based Policy (XHGP) model based on a heterograph representation of the traffic scene and lane-graph traversals, which learns interaction behaviors using object-level and type-level attention. This learned attention provides information about the most important agents and interactions in the scene. Second, we explore this same idea with the explanations provided by GNNExplainer. Third, we apply counterfactual reasoning to provide explanations of selected individual scenarios by exploring the sensitivity of the trained model to changes made to the input data, i.e., masking some elements of the scene, modifying trajectories, and adding or removing dynamic agents. The explainability analysis provided in this paper is a first step towards more transparent and reliable motion prediction systems, important from the perspective of users, developers, and regulatory agencies. The code to reproduce this work is publicly available at https://github.com/sancarlim/Explainable-MP/tree/v1.1.

Imitation Learning-based Implicit Semantic-aware Communication Networks: Multi-layer Representation and Collaborative Reasoning

Oct 28, 2022

Semantic communication has recently attracted significant interest from both industry and academia due to its potential to transform the existing data-focused communication architecture towards a more generally intelligent and goal-oriented semantic-aware networking system. Despite its promising potential, semantic communications and semantic-aware networking are still in their infancy. Most existing works focus on transporting and delivering the explicit semantic information, e.g., labels or features of objects, that can be directly identified from the source signal. The original definition of semantics as well as recent results in cognitive neuroscience suggest that it is the implicit semantic information, in particular the hidden relations connecting different concepts and feature items, that plays the fundamental role in recognizing, communicating, and delivering the real semantic meanings of messages. Motivated by this observation, we propose a novel reasoning-based implicit semantic-aware communication network architecture that allows multiple tiers of CDC and edge servers to collaborate and support efficient semantic encoding, decoding, and interpretation for end-users. We introduce a new multi-layer representation of semantic information taking into consideration both the hierarchical structure of implicit semantics as well as the personalized inference preference of individual users. We model the semantic reasoning process as a reinforcement learning process and then propose an imitation-based semantic reasoning mechanism learning (iRML) solution for the edge servers to learn a reasoning policy that imitates the inference behavior of the source user. A federated GCN-based collaborative reasoning solution is proposed to allow multiple edge servers to jointly construct a shared semantic interpretation model based on decentralized knowledge datasets.

MMGA: Multimodal Learning with Graph Alignment

Oct 18, 2022

Multimodal pre-training breaks down the modality barriers and allows the individual modalities to be mutually augmented with information, resulting in significant advances in representation learning. However, the graph modality, as a very general and important form of data, cannot easily interact with other modalities because of its non-regular nature. In this paper, we propose MMGA (Multimodal learning with Graph Alignment), a novel multimodal pre-training framework to incorporate information from graph (social network), image and text modalities on social media to enhance user representation learning. In MMGA, a multi-step graph alignment mechanism is proposed to add the self-supervision from the graph modality to optimize the image and text encoders, while using the information from the image and text modalities to guide the graph encoder learning. We conduct experiments on a dataset crawled from Instagram. The experimental results show that MMGA works well on the dataset and improves performance on the fans prediction task. We release our dataset, the first social media multimodal dataset with graph, of 60,000 users labeled with specific topics based on 2 million posts to facilitate future research.

PHO-LID: A Unified Model Incorporating Acoustic-Phonetic and Phonotactic Information for Language Identification

Mar 31, 2022

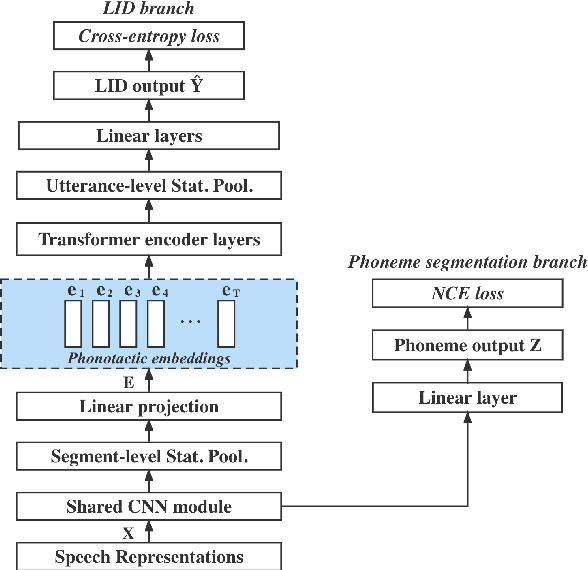

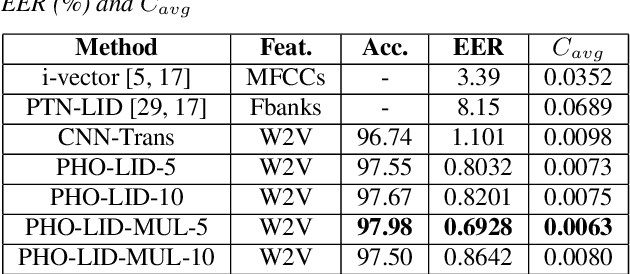

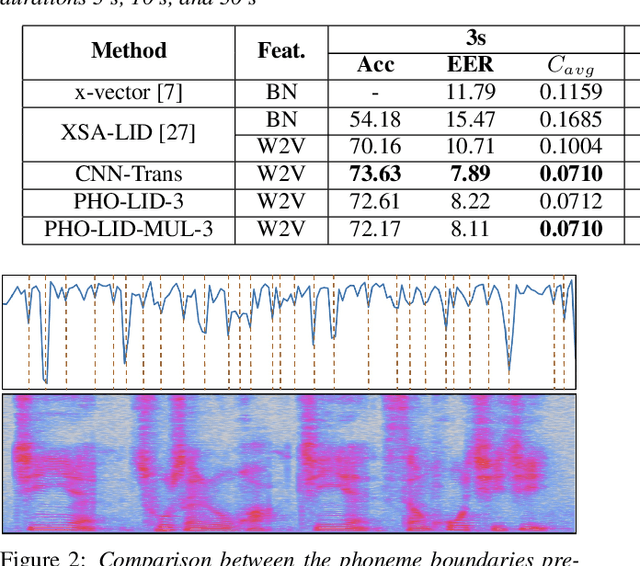

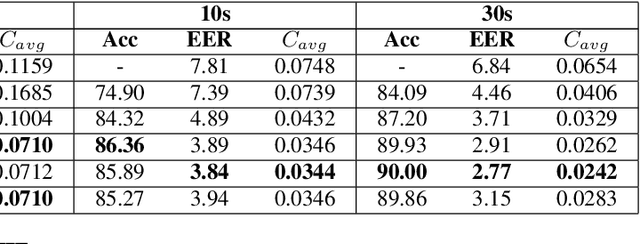

We propose a novel model to hierarchically incorporate phoneme and phonotactic information for language identification (LID) without requiring phoneme annotations for training. In this model, named PHO-LID, a self-supervised phoneme segmentation task and a LID task share a convolutional neural network (CNN) module, which encodes both language identity and sequential phonemic information in the input speech to generate an intermediate sequence of phonotactic embeddings. These embeddings are then fed into transformer encoder layers for utterance-level LID. We call this architecture CNN-Trans. We evaluate it on AP17-OLR data and the MLS14 set of NIST LRE 2017, and show that the PHO-LID model with multi-task optimization exhibits the highest LID performance among all models, achieving over 40% relative improvement in terms of average cost on AP17-OLR data compared to a CNN-Trans model optimized only for LID. The visualized confusion matrices imply that our proposed method achieves higher performance on languages of the same cluster in NIST LRE 2017 data than the CNN-Trans model. A comparison between predicted phoneme boundaries and corresponding audio spectrograms illustrates the leveraging of phoneme information for LID.

Sketching for First Order Method: Efficient Algorithm for Low-Bandwidth Channel and Vulnerability

Oct 15, 2022

Sketching is one of the most fundamental tools in large-scale machine learning. It enables runtime and memory savings by randomly compressing the original large problem onto lower dimensions. In this paper, we propose a novel sketching scheme for the first order method in the large-scale distributed learning setting, such that the communication costs between distributed agents are reduced while the convergence of the algorithms is still guaranteed. Given gradient information in a high dimension $d$, the agent passes the compressed information processed by a sketching matrix $R\in \mathbb{R}^{s\times d}$ with $s\ll d$, and the receiver decompresses it via the de-sketching matrix $R^\top$ to "recover" the information in the original dimension. Using such a framework, we develop algorithms for federated learning with lower communication costs. However, such random sketching does not protect the privacy of local data directly. We show that the gradient leakage problem still exists after applying the sketching technique by showing a specific gradient attack method. As a remedy, we prove rigorously that the algorithm will be differentially private by adding additional random noise to the gradient information, which results in a first order approach for federated learning tasks that is both communication-efficient and differentially private. Our sketching scheme can be further generalized to other learning settings and might be of independent interest itself.
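The sketch and de-sketch steps described in this abstract can be illustrated with a minimal pure-Python example. It assumes a Gaussian sketching matrix with i.i.d. entries of variance 1/s, which is one common choice and not necessarily the matrix used in the paper. The helper names `make_sketch_matrix`, `sketch`, and `desketch` are hypothetical.

```python
import random


def make_sketch_matrix(s, d, seed=0):
    """Return an s x d matrix R with i.i.d. N(0, 1/s) entries (assumed choice)."""
    rng = random.Random(seed)
    std = (1.0 / s) ** 0.5
    return [[rng.gauss(0.0, std) for _ in range(d)] for _ in range(s)]


def sketch(R, g):
    """Compress a d-dimensional gradient g to s dimensions: y = R g."""
    return [sum(r * x for r, x in zip(row, g)) for row in R]


def desketch(R, y):
    """Map an s-dimensional message back to d dimensions: g_hat = R^T y."""
    d = len(R[0])
    return [sum(R[i][j] * y[i] for i in range(len(R))) for j in range(d)]


if __name__ == "__main__":
    d, s = 50, 10
    g = [float(j % 5) - 2.0 for j in range(d)]   # toy "gradient"
    R = make_sketch_matrix(s, d, seed=1)
    y = sketch(R, g)          # what the agent would transmit (s numbers)
    g_hat = desketch(R, y)    # what the receiver would reconstruct (d numbers)
    print(len(y), len(g_hat))
```

With this scaling the expectation of $R^\top R$ is the identity, so the reconstruction is unbiased over the random draw of $R$, although any single reconstruction is noisy. The differentially private variant mentioned in the abstract additionally adds random noise to the gradient information, which this sketch does not attempt to reproduce.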

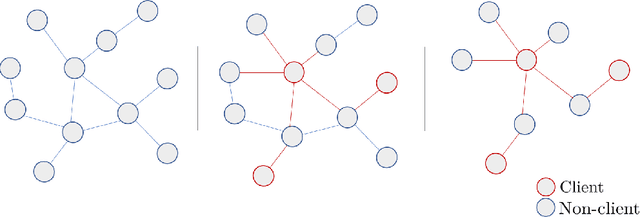

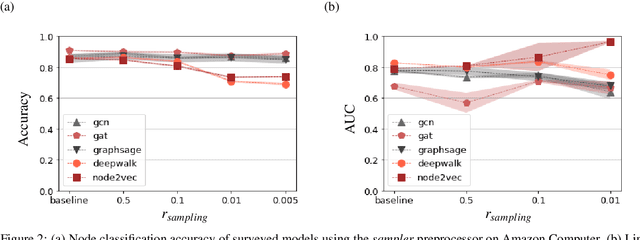

Are Graph Representation Learning Methods Robust to Graph Sparsity and Asymmetric Node Information?

May 19, 2022

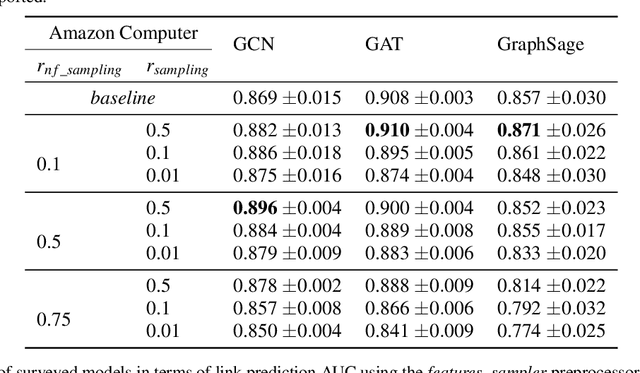

The growing popularity of Graph Representation Learning (GRL) methods has resulted in the development of a large number of models applied to a miscellany of domains. Behind this diversity of domains, there is a strong heterogeneity of graphs, making it difficult to estimate the expected performance of a model on a new graph, especially when the graph has distinctive characteristics that have not been encountered in the benchmark yet. To address this, we have developed an experimental pipeline to assess the impact of a given property on the models' performance. In this paper, we use this pipeline to study the effect of two specificities encountered in banks' transactional graphs, resulting from the partial view a bank has of all the individuals and transactions carried out on the market. These specific features are graph sparsity and asymmetric node information. This study demonstrates the robustness of GRL methods to these distinctive characteristics. We believe that this work can ease the evaluation of GRL methods with respect to specific characteristics and foster the development of such methods on transactional graphs.
