"Information": models, code, and papers

Uniform Passive Fault-Tolerant Control of a Quadcopter with One, Two, or Three Rotor Failure

Nov 23, 2022

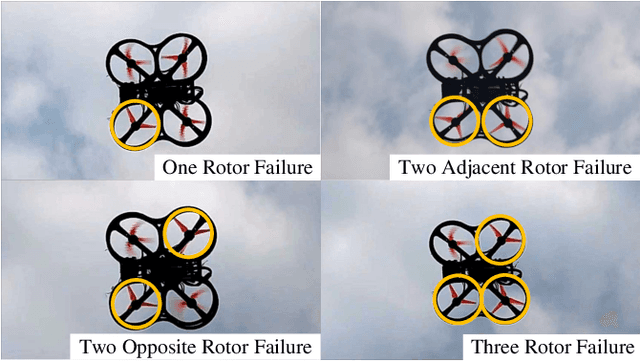

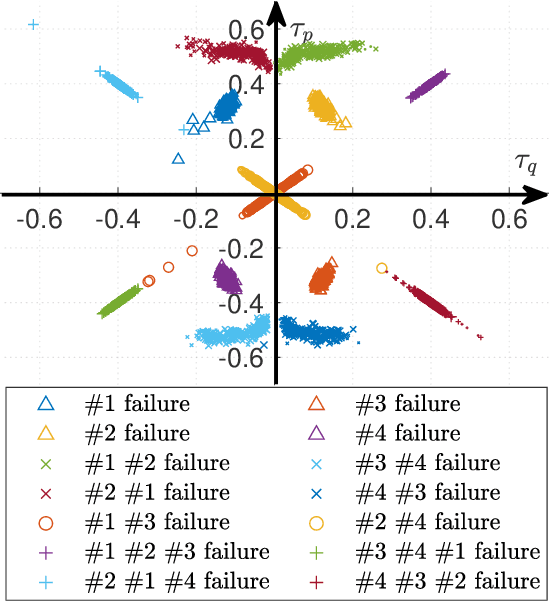

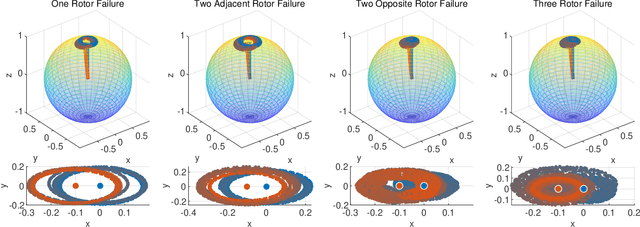

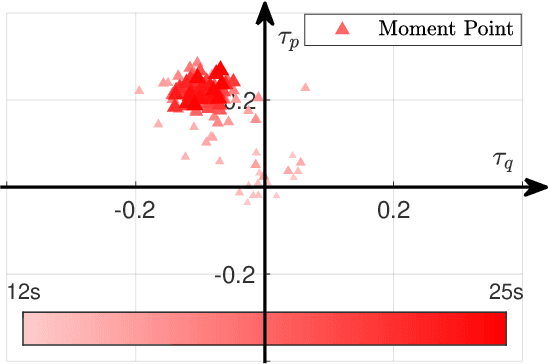

This study proposes a uniform passive fault-tolerant control (FTC) method for a quadcopter that does not rely on fault information subject to one, two adjacent, two opposite, or three rotors failure. The uniform control implies that the passive FTC is able to cover the condition from quadcopter fault-free to rotor failure without controller switching. To achieve the purpose of the passive FTC, the rotors' fault is modeled as a disturbance acting on the virtual control of the quadcopter system. The disturbance estimate is used directly for the passive FTC with rotor failure. To avoid controller switching between normal control and FTC, a dynamic control allocation is used. In addition, the closed-loop stability has been analyzed and a virtual control feedback is adopted to achieve the passive FTC for the quadcopter with two and three rotor failure. To validate the proposed uniform passive FTC method, outdoor experiments are performed for the first time, which have demonstrated that the hovering quadcopter is able to recover from one rotor failure by the proposed controller and continue to fly even if two adjacent, two opposite, or three rotors fail, without any rotor fault information and controller switching.

Incentive-Aware Recommender Systems in Two-Sided Markets

Nov 23, 2022

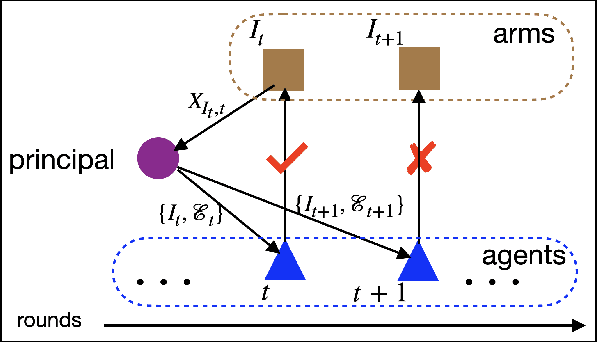

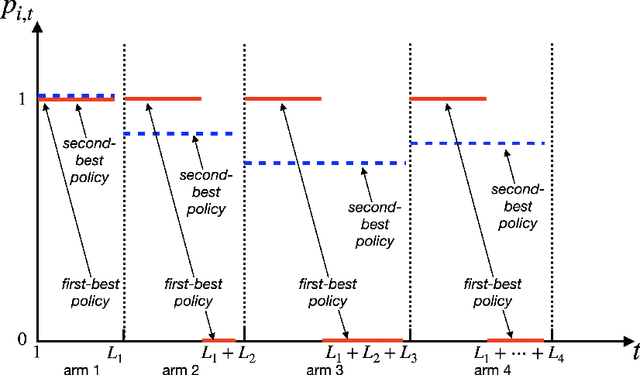

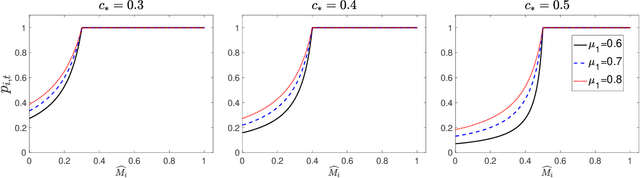

Online platforms in the Internet Economy commonly incorporate recommender systems that recommend arms (e.g., products) to agents (e.g., users). In such platforms, a myopic agent has a natural incentive to exploit, by choosing the best product given the current information rather than to explore various alternatives to collect information that will be used for other agents. We propose a novel recommender system that respects agents' incentives and enjoys asymptotically optimal performances expressed by the regret in repeated games. We model such an incentive-aware recommender system as a multi-agent bandit problem in a two-sided market which is equipped with an incentive constraint induced by agents' opportunity costs. If the opportunity costs are known to the principal, we show that there exists an incentive-compatible recommendation policy, which pools recommendations across a genuinely good arm and an unknown arm via a randomized and adaptive approach. On the other hand, if the opportunity costs are unknown to the principal, we propose a policy that randomly pools recommendations across all arms and uses each arm's cumulative loss as feedback for exploration. We show that both policies also satisfy an ex-post fairness criterion, which protects agents from over-exploitation.

Discovering Influencers in Opinion Formation over Social Graphs

Nov 23, 2022

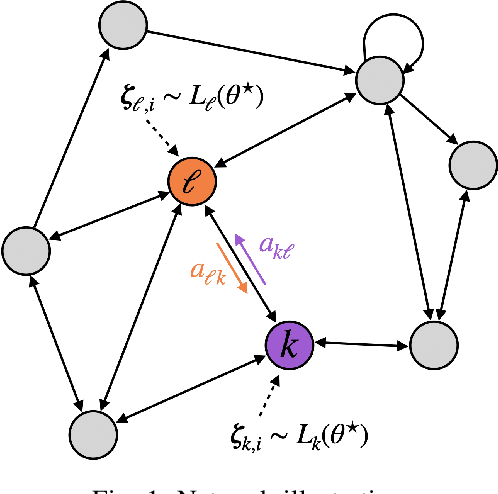

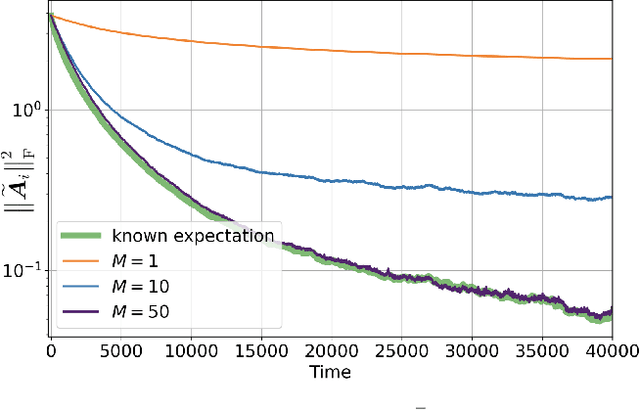

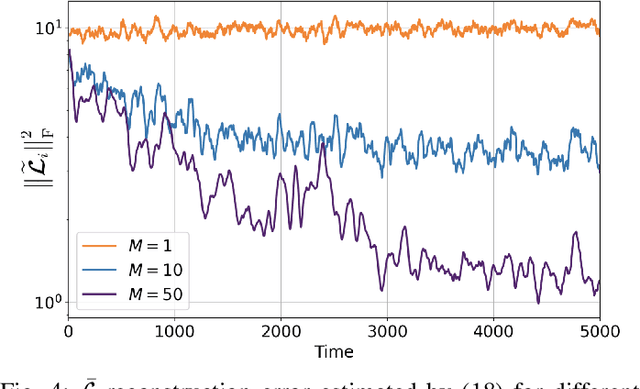

The adaptive social learning paradigm helps model how networked agents are able to form opinions on a state of nature and track its drifts in a changing environment. In this framework, the agents repeatedly update their beliefs based on private observations and exchange the beliefs with their neighbors. In this work, it is shown how the sequence of publicly exchanged beliefs over time allows users to discover rich information about the underlying network topology and about the flow of information over graph. In particular, it is shown that it is possible (i) to identify the influence of each individual agent to the objective of truth learning, (ii) to discover how well informed each agent is, (iii) to quantify the pairwise influences between agents, and (iv) to learn the underlying network topology. The algorithm derived herein is also able to work under non-stationary environments where either the true state of nature or the network topology are allowed to drift over time. We apply the proposed algorithm to different subnetworks of Twitter users, and identify the most influential and central agents merely by using their public tweets (posts).

LG-Hand: Advancing 3D Hand Pose Estimation with Locally and Globally Kinematic Knowledge

Nov 06, 2022

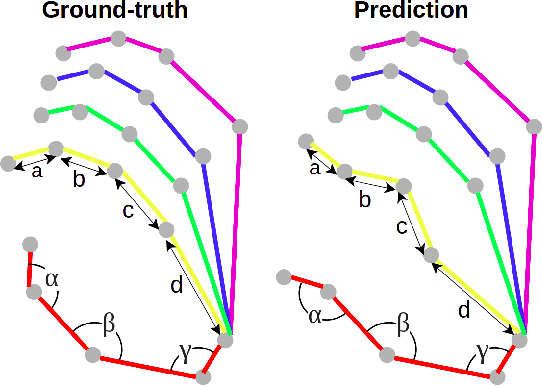

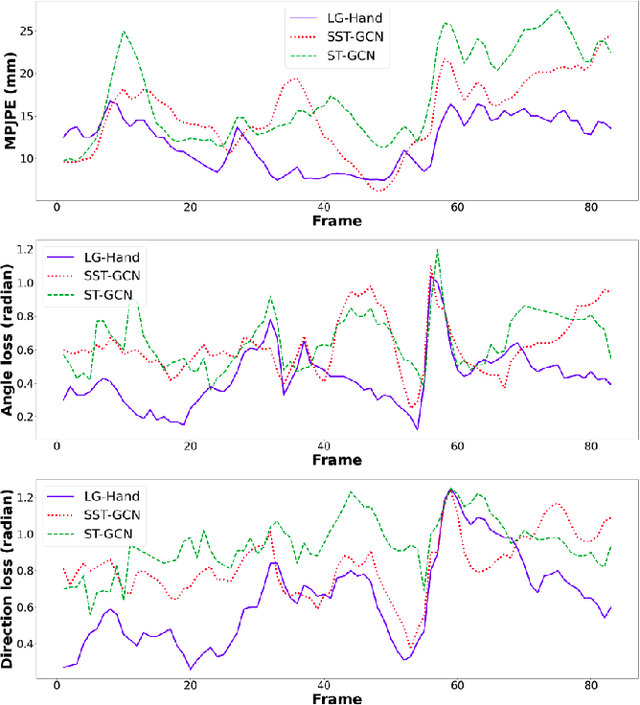

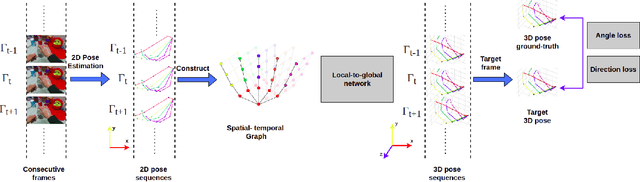

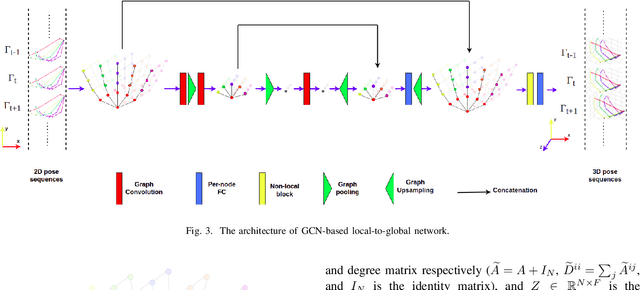

3D hand pose estimation from RGB images suffers from the difficulty of obtaining the depth information. Therefore, a great deal of attention has been spent on estimating 3D hand pose from 2D hand joints. In this paper, we leverage the advantage of spatial-temporal Graph Convolutional Neural Networks and propose LG-Hand, a powerful method for 3D hand pose estimation. Our method incorporates both spatial and temporal dependencies into a single process. We argue that kinematic information plays an important role, contributing to the performance of 3D hand pose estimation. We thereby introduce two new objective functions, Angle and Direction loss, to take the hand structure into account. While Angle loss covers locally kinematic information, Direction loss handles globally kinematic one. Our LG-Hand achieves promising results on the First-Person Hand Action Benchmark (FPHAB) dataset. We also perform an ablation study to show the efficacy of the two proposed objective functions.

Dynamic Feature Pruning and Consolidation for Occluded Person Re-Identification

Nov 27, 2022

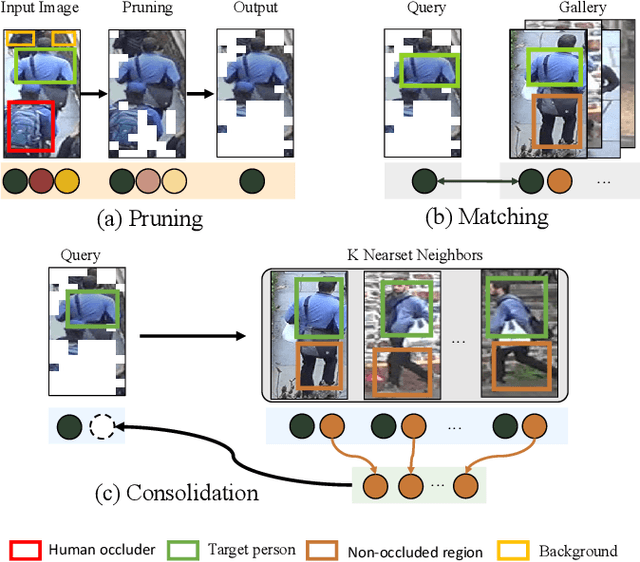

Occluded person re-identification (ReID) is a challenging problem due to contamination from occluders, and existing approaches address the issue with prior knowledge cues, eg human body key points, semantic segmentations and etc, which easily fails in the presents of heavy occlusion and other humans as occluders. In this paper, we propose a feature pruning and consolidation (FPC) framework to circumvent explicit human structure parse, which mainly consists of a sparse encoder, a global and local feature ranking module, and a feature consolidation decoder. Specifically, the sparse encoder drops less important image tokens (mostly related to background noise and occluders) solely according to correlation within the class token attention instead of relying on prior human shape information. Subsequently, the ranking stage relies on the preserved tokens produced by the sparse encoder to identify k-nearest neighbors from a pre-trained gallery memory by measuring the image and patch-level combined similarity. Finally, we use the feature consolidation module to compensate pruned features using identified neighbors for recovering essential information while disregarding disturbance from noise and occlusion. Experimental results demonstrate the effectiveness of our proposed framework on occluded, partial and holistic Re-ID datasets. In particular, our method outperforms state-of-the-art results by at least 8.6% mAP and 6.0% Rank-1 accuracy on the challenging Occluded-Duke dataset.

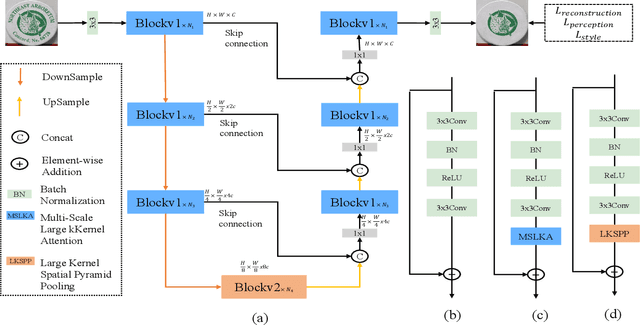

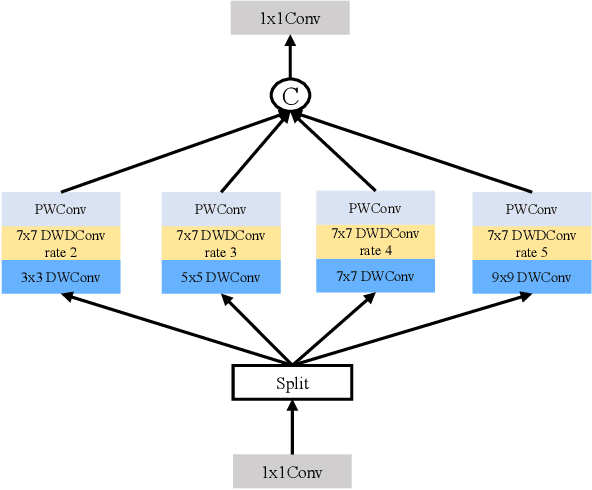

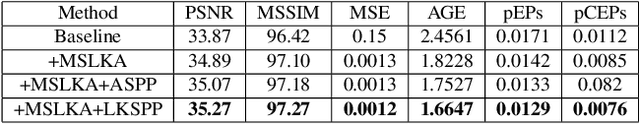

MSLKANet: A Multi-Scale Large Kernel Attention Network for Scene Text Removal

Nov 12, 2022

Scene text removal aims to remove the text and fill the regions with perceptually plausible background information in natural images. It has attracted increasing attention due to its various applications in privacy protection, scene text retrieval, and text editing. With the development of deep learning, the previous methods have achieved significant improvements. However, most of the existing methods seem to ignore the large perceptive fields and global information. The pioneer method can get significant improvements by only changing training data from the cropped image to the full image. In this paper, we present a single-stage multi-scale network MSLKANet for scene text removal in full images. For obtaining large perceptive fields and global information, we propose multi-scale large kernel attention (MSLKA) to obtain long-range dependencies between the text regions and the backgrounds at various granularity levels. Furthermore, we combine the large kernel decomposition mechanism and atrous spatial pyramid pooling to build a large kernel spatial pyramid pooling (LKSPP), which can perceive more valid pixels in the spatial dimension while maintaining large receptive fields and low cost of computation. Extensive experimental results indicate that the proposed method achieves state-of-the-art performance on both synthetic and real-world datasets and the effectiveness of the proposed components MSLKA and LKSPP.

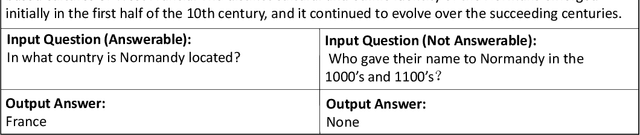

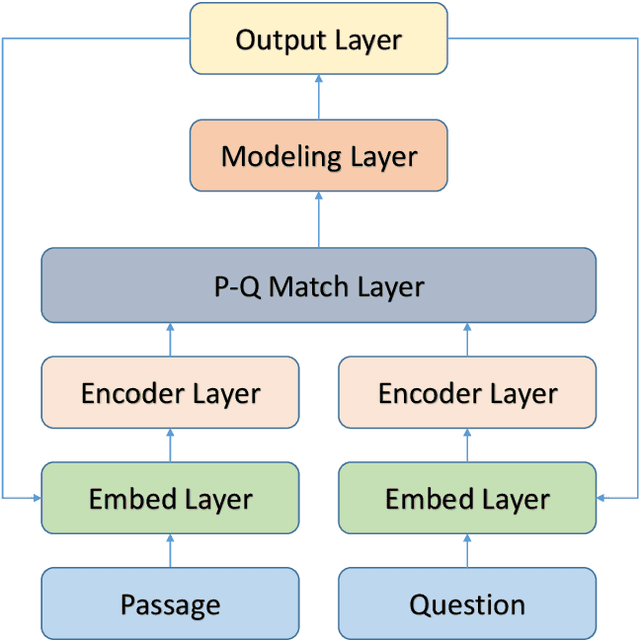

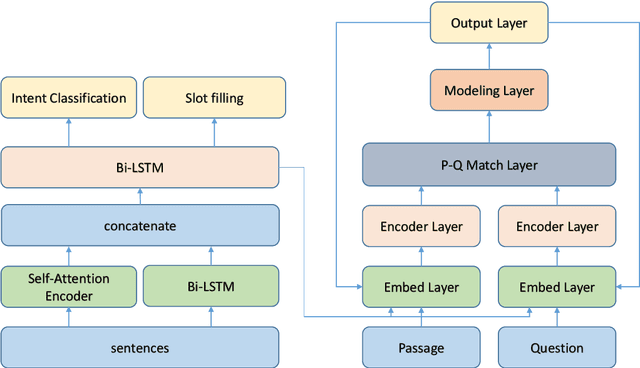

Feature-augmented Machine Reading Comprehension with Auxiliary Tasks

Nov 17, 2022

While most successful approaches for machine reading comprehension rely on single training objective, it is assumed that the encoder layer can learn great representation through the loss function we define in the predict layer, which is cross entropy in most of time, in the case that we first use neural networks to encode the question and paragraph, then directly fuse the encoding result of them. However, due to the distantly loss backpropagating in reading comprehension, the encoder layer cannot learn effectively and be directly supervised. Thus, the encoder layer can not learn the representation well at any time. Base on this, we propose to inject multi granularity information to the encoding layer. Experiments demonstrate the effect of adding multi granularity information to the encoding layer can boost the performance of machine reading comprehension system. Finally, empirical study shows that our approach can be applied to many existing MRC models.

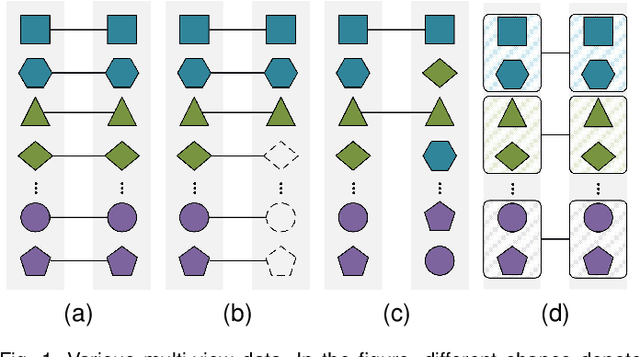

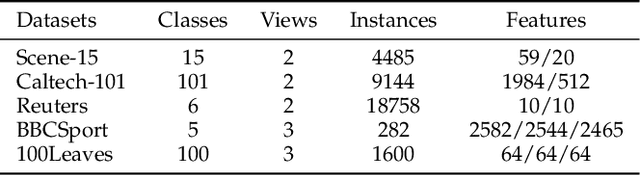

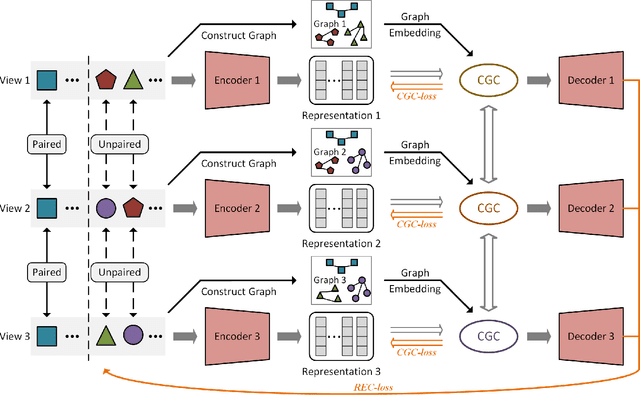

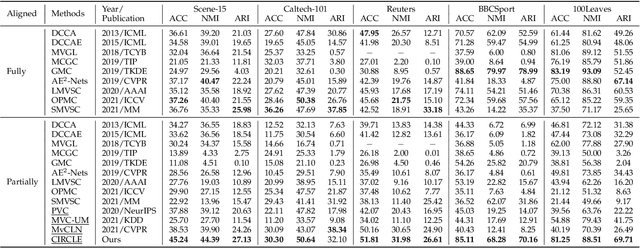

Cross-view Graph Contrastive Representation Learning on Partially Aligned Multi-view Data

Nov 08, 2022

Multi-view representation learning has developed rapidly over the past decades and has been applied in many fields. However, most previous works assumed that each view is complete and aligned. This leads to an inevitable deterioration in their performance when encountering practical problems such as missing or unaligned views. To address the challenge of representation learning on partially aligned multi-view data, we propose a new cross-view graph contrastive learning framework, which integrates multi-view information to align data and learn latent representations. Compared with current approaches, the proposed method has the following merits: (1) our model is an end-to-end framework that simultaneously performs view-specific representation learning via view-specific autoencoders and cluster-level data aligning by combining multi-view information with the cross-view graph contrastive learning; (2) it is easy to apply our model to explore information from three or more modalities/sources as the cross-view graph contrastive learning is devised. Extensive experiments conducted on several real datasets demonstrate the effectiveness of the proposed method on the clustering and classification tasks.

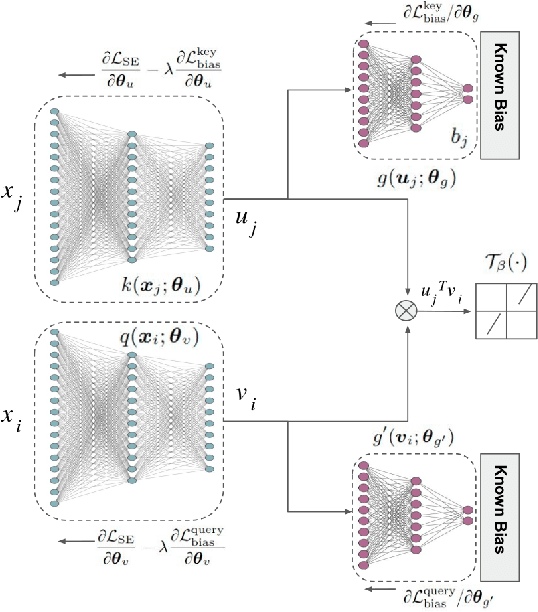

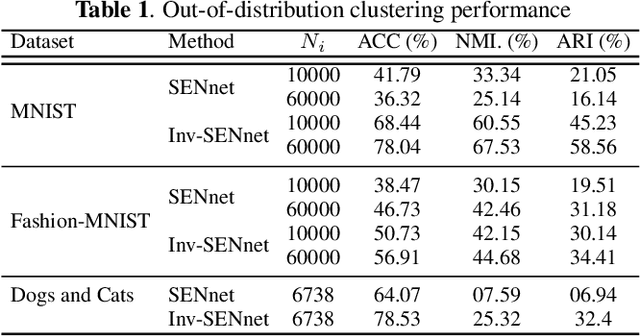

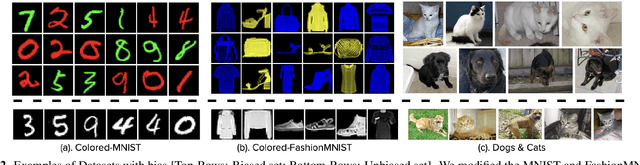

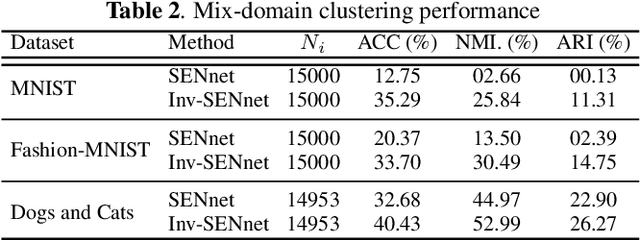

Inv-SENnet: Invariant Self Expression Network for clustering under biased data

Nov 13, 2022

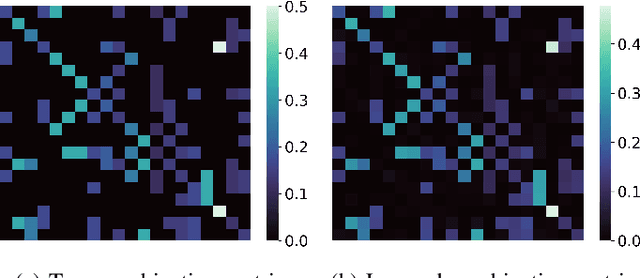

Subspace clustering algorithms are used for understanding the cluster structure that explains the dataset well. These methods are extensively used for data-exploration tasks in various areas of Natural Sciences. However, most of these methods fail to handle unwanted biases in datasets. For datasets where a data sample represents multiple attributes, naively applying any clustering approach can result in undesired output. To this end, we propose a novel framework for jointly removing unwanted attributes (biases) while learning to cluster data points in individual subspaces. Assuming we have information about the bias, we regularize the clustering method by adversarially learning to minimize the mutual information between the data and the unwanted attributes. Our experimental result on synthetic and real-world datasets demonstrate the effectiveness of our approach.

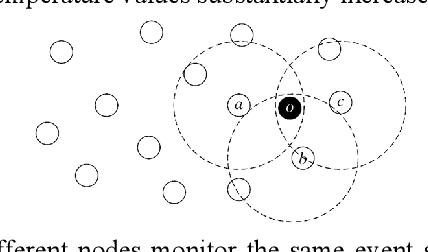

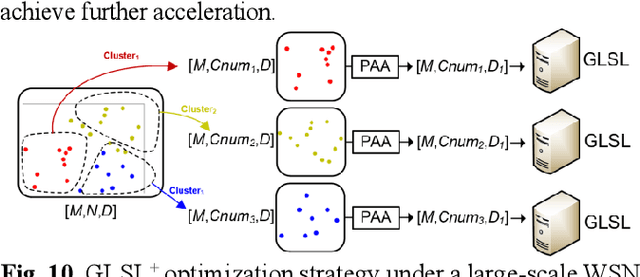

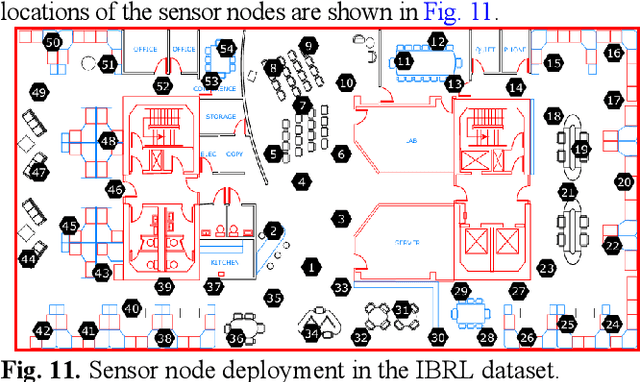

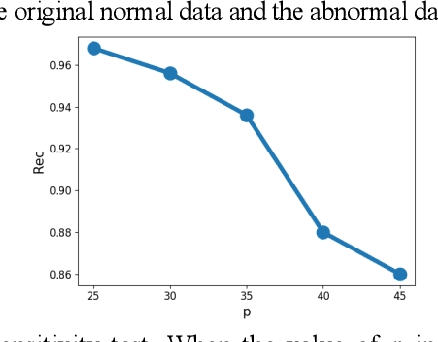

A Novel Self-Supervised Learning-Based Anomaly Node Detection Method Based on an Autoencoder in Wireless Sensor Networks

Dec 26, 2022

Due to the issue that existing wireless sensor network (WSN)-based anomaly detection methods only consider and analyze temporal features, in this paper, a self-supervised learning-based anomaly node detection method based on an autoencoder is designed. This method integrates temporal WSN data flow feature extraction, spatial position feature extraction and intermodal WSN correlation feature extraction into the design of the autoencoder to make full use of the spatial and temporal information of the WSN for anomaly detection. First, a fully connected network is used to extract the temporal features of nodes by considering a single mode from a local spatial perspective. Second, a graph neural network (GNN) is used to introduce the WSN topology from a global spatial perspective for anomaly detection and extract the spatial and temporal features of the data flows of nodes and their neighbors by considering a single mode. Then, the adaptive fusion method involving weighted summation is used to extract the relevant features between different models. In addition, this paper introduces a gated recurrent unit (GRU) to solve the long-term dependence problem of the time dimension. Eventually, the reconstructed output of the decoder and the hidden layer representation of the autoencoder are fed into a fully connected network to calculate the anomaly probability of the current system. Since the spatial feature extraction operation is advanced, the designed method can be applied to the task of large-scale network anomaly detection by adding a clustering operation. Experiments show that the designed method outperforms the baselines, and the F1 score reaches 90.6%, which is 5.2% higher than those of the existing anomaly detection methods based on unsupervised reconstruction and prediction. Code and model are available at https://github.com/GuetYe/anomaly_detection/GLSL

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge