"Information": models, code, and papers

Domain Generalization Emerges from Dreaming

Feb 02, 2023

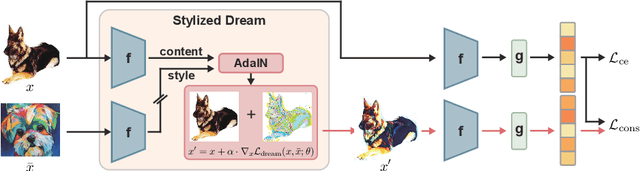

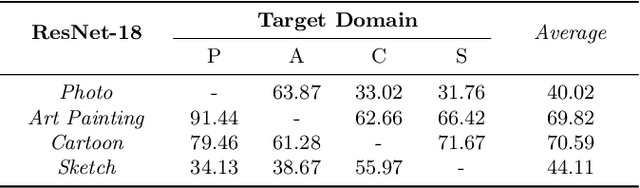

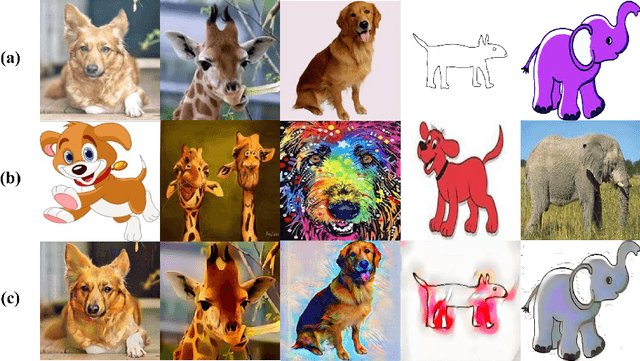

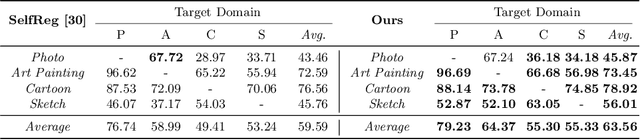

Recent studies have shown that deep neural networks (DNNs), unlike human vision, tend to rely on texture rather than shape information. This texture bias is one of the factors behind the poor generalization performance of DNNs. We observe that texture bias harms not only in-domain generalization but also out-of-distribution generalization, i.e., Domain Generalization. Motivated by this observation, we propose a new framework that reduces a model's texture bias through a novel optimization-based data augmentation, dubbed Stylized Dream. Our framework uses adaptive instance normalization (AdaIN) to change the style of an original image while preserving its content. We then adopt a regularization loss that enforces consistent predictions between Stylized Dream and original images, which encourages the model to learn shape-based representations. Extensive experiments show that the proposed method achieves state-of-the-art performance in out-of-distribution settings on the public benchmark datasets PACS, VLCS, OfficeHome, TerraIncognita, and DomainNet.
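AdaIN, as commonly defined, re-normalizes each channel of a content feature map to the channel-wise mean and standard deviation of a style feature map: AdaIN(x, y) = σ(y)·(x − μ(x))/σ(x) + μ(y). The minimal sketch below applies that formula to plain Python lists, treating each channel as a flat list of activations. The function names and the small `eps` term are assumptions for illustration; it does not reproduce the Stylized Dream optimization or its consistency loss.

```python
import math
from typing import List, Tuple

Channel = List[float]  # one channel of a feature map, flattened to a list


def _mean_std(values: Channel, eps: float) -> Tuple[float, float]:
    """Return the mean and the (population) standard deviation of one channel."""
    mu = sum(values) / len(values)
    var = sum((v - mu) ** 2 for v in values) / len(values)
    return mu, math.sqrt(var + eps)  # eps avoids division by zero for flat channels


def adain(content: List[Channel], style: List[Channel], eps: float = 1e-5) -> List[Channel]:
    """AdaIN(x, y) = sigma(y) * (x - mu(x)) / sigma(x) + mu(y), applied per channel."""
    if len(content) != len(style):
        raise ValueError("content and style must have the same number of channels")
    out = []
    for c_chan, s_chan in zip(content, style):
        c_mu, c_sigma = _mean_std(c_chan, eps)
        s_mu, s_sigma = _mean_std(s_chan, eps)
        # Strip the content statistics, then impose the style statistics.
        out.append([s_sigma * (v - c_mu) / c_sigma + s_mu for v in c_chan])
    return out


if __name__ == "__main__":
    # One-channel toy example: a content ramp and a style with mean 15, std 5.
    print(adain([[1.0, 2.0, 3.0, 4.0]], [[10.0, 10.0, 20.0, 20.0]]))
```

Because only per-channel statistics change and the rescaling factor is positive, the ordering of activations within each content channel is kept, which is one way to read "change the style while preserving the content".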

Advances and Challenges in Multimodal Remote Sensing Image Registration

Feb 02, 2023

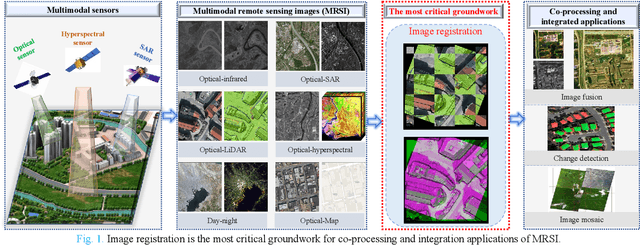

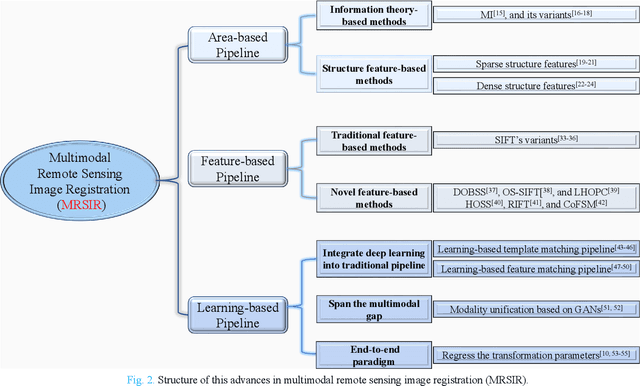

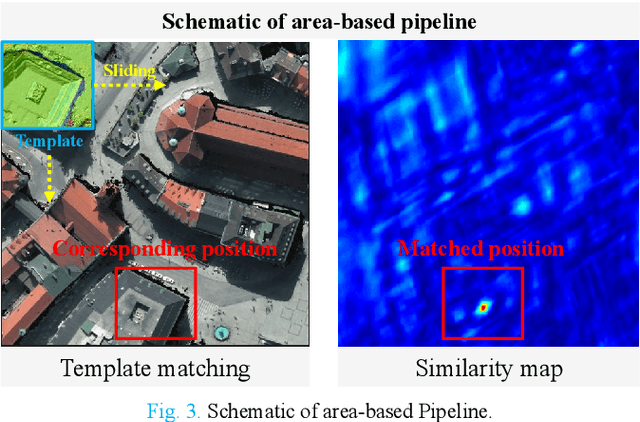

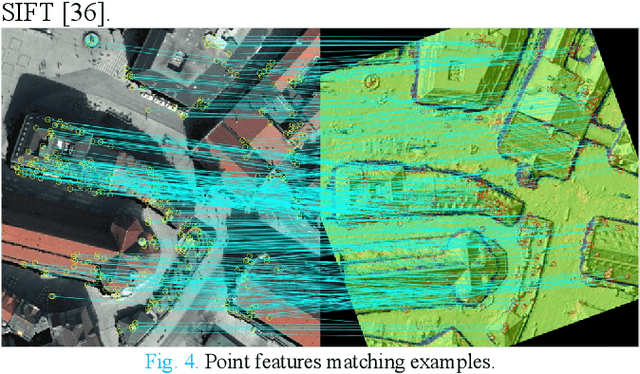

Over the past few decades, with the rapid development of global aerospace and aerial remote sensing technology, sensors have evolved from traditional monomodal sensors (e.g., optical sensors) to a new generation of multimodal sensors [e.g., multispectral, hyperspectral, light detection and ranging (LiDAR), and synthetic aperture radar (SAR) sensors]. These advanced devices can dynamically provide diverse and abundant multimodal remote sensing images with different spatial, temporal, and spectral resolutions, depending on application requirements. Research on multimodal remote sensing image registration is therefore of great scientific significance, since registration is a crucial step for integrating the complementary information in multimodal data and for comprehensive observation and analysis of the Earth's surface. In this work, we present our own contributions to the field of multimodal image registration, summarize the advantages and limitations of existing multimodal image registration methods, and then discuss the remaining challenges and offer a forward-looking perspective on the future development of the field.

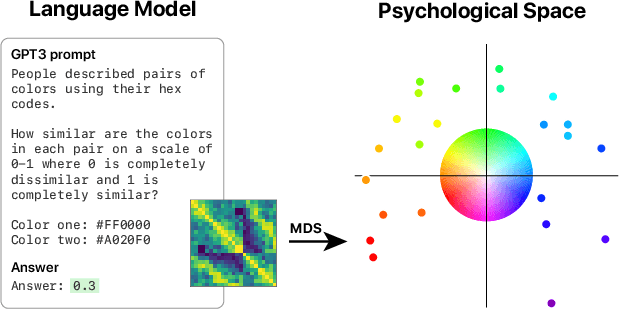

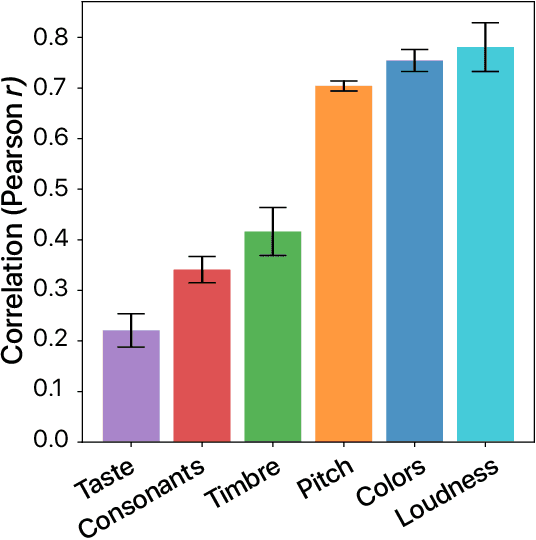

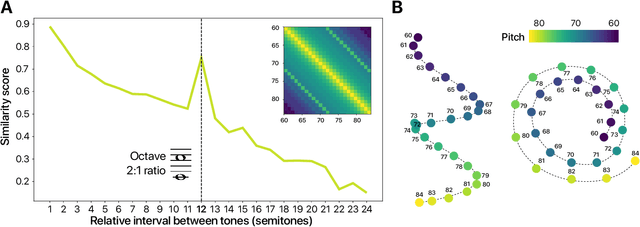

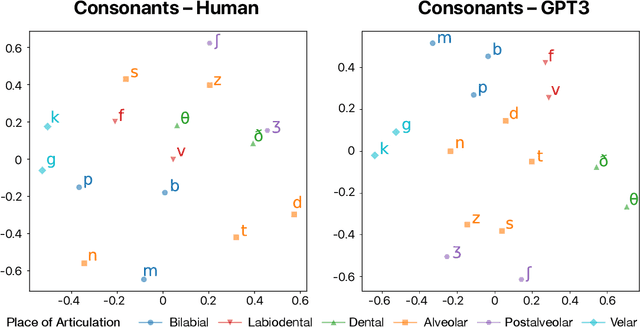

What Language Reveals about Perception: Distilling Psychophysical Knowledge from Large Language Models

Feb 02, 2023

Understanding the extent to which the perceptual world can be recovered from language is a fundamental problem in cognitive science. We reformulate this problem as that of distilling psychophysical information from text and show how this can be done by combining large language models (LLMs) with a classic psychophysical method based on similarity judgments. Specifically, we use the prompt auto-completion functionality of GPT3, a state-of-the-art LLM, to elicit similarity scores between stimuli and then apply multidimensional scaling to uncover their underlying psychological space. We test our approach on six perceptual domains and show that the elicited judgments strongly correlate with human data and successfully recover well-known psychophysical structures such as the color wheel and pitch spiral. We also explore meaningful divergences between LLM and human representations. Our work showcases how combining state-of-the-art machine models with well-known cognitive paradigms can shed new light on fundamental questions in perception and language research.
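Multidimensional scaling turns a matrix of pairwise similarities into coordinates whose distances approximate the corresponding dissimilarities. The sketch below uses classical (Torgerson) MDS: it assumes similarities in [0, 1], converts them to dissimilarities d = 1 − s, double-centers the squared dissimilarities, and keeps the top eigenvectors found by a small Jacobi eigen-solver. The conversion rule, the solver, and all names are assumptions; the authors may use a different MDS variant.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def jacobi_eigen(a: Matrix, sweeps: int = 100, tol: float = 1e-22) -> Tuple[List[float], Matrix]:
    """Eigen-decompose a symmetric matrix with cyclic Jacobi rotations.

    Returns (eigenvalues, eigenvectors) sorted by decreasing eigenvalue;
    eigenvectors[k] is the eigenvector for eigenvalues[k].
    """
    n = len(a)
    a = [row[:] for row in a]                                   # work on a copy
    v = [[float(i == j) for j in range(n)] for i in range(n)]   # accumulated rotations
    for _ in range(sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if abs(a[p][q]) < 1e-15:
                    continue
                # Rotation angle chosen so that the new a[p][q] becomes zero.
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                for k in range(n):  # A <- A J (columns p, q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # A <- J^T A (rows p, q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(n):  # V <- V J
                    vkp, vkq = v[k][p], v[k][q]
                    v[k][p], v[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    order = sorted(range(n), key=lambda i: a[i][i], reverse=True)
    return [a[i][i] for i in order], [[v[k][i] for k in range(n)] for i in order]


def classical_mds(similarity: Matrix, dims: int = 2) -> Matrix:
    """Embed n items in `dims` dimensions from an n x n similarity matrix."""
    n = len(similarity)
    # Assumed conversion: dissimilarity d = 1 - s, then squared.
    d2 = [[(1.0 - similarity[i][j]) ** 2 for j in range(n)] for i in range(n)]
    row_mean = [sum(r) / n for r in d2]
    grand_mean = sum(row_mean) / n
    # Double centering: B = -1/2 * J D^2 J with J = I - (1/n) * ones.
    b = [[-0.5 * (d2[i][j] - row_mean[i] - row_mean[j] + grand_mean) for j in range(n)]
         for i in range(n)]
    vals, vecs = jacobi_eigen(b)
    # Coordinates: top eigenvectors scaled by the square roots of their eigenvalues.
    return [[math.sqrt(max(vals[k], 0.0)) * vecs[k][i] for k in range(dims)] for i in range(n)]
```

In the setting above, `similarity` would hold the LLM-elicited scores rescaled to [0, 1]; for color stimuli, a roughly circular arrangement of the 2D output is what a recovered color wheel would look like.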

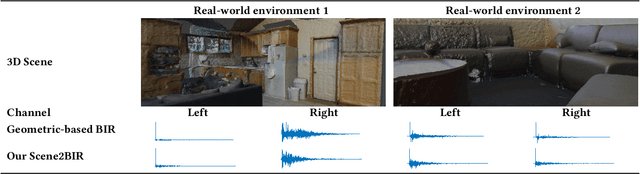

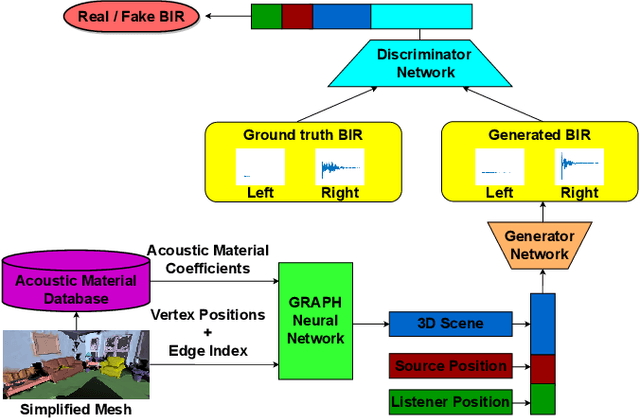

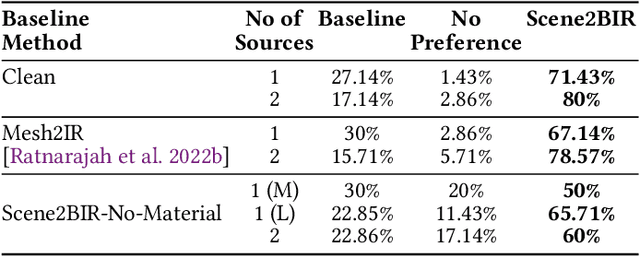

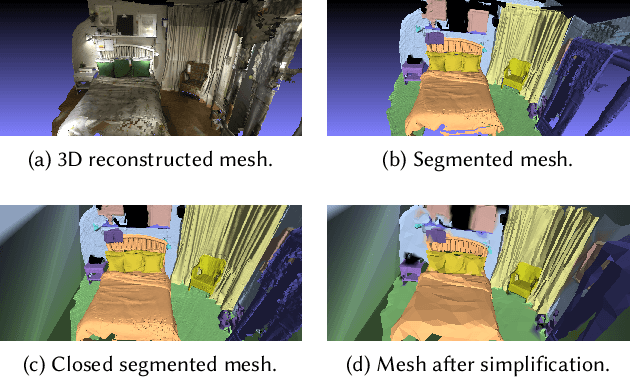

Scene2BIR: Material-aware learning-based binaural impulse response generator for reconstructed real-world 3D scenes

Feb 02, 2023

We present an end-to-end binaural impulse response (BIR) generator that produces plausible sounds in real time for reconstructed real-world 3D models. Our approach uses a novel neural-network-based BIR generator (Scene2BIR) for the reconstructed 3D model. We propose a graph neural network that uses both the material and the topology information of the 3D scene to generate a scene latent vector. We then use a conditional generative adversarial network (CGAN) to generate BIRs from the scene latent vector. Our network can handle holes and other artifacts in the reconstructed 3D mesh model. We also design an efficient cost function for the generator network that incorporates spatial audio effects. Given the source and listener positions, our approach can generate a BIR in 0.1 milliseconds on an NVIDIA GeForce RTX 2080 Ti GPU and can easily handle multiple sources. We evaluated the accuracy of our approach against real-world captured BIRs and an interactive geometric sound propagation algorithm.
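A BIR is a pair of impulse responses, one per ear; once one has been generated for a given source and listener position, the usual way to render sound is to convolve a dry (anechoic) source signal with each ear's response. The minimal sketch below shows only that rendering step on toy data with direct-form convolution. It does not implement Scene2BIR's graph network or CGAN, and the function names and sample values are hypothetical.

```python
from typing import List, Tuple

Signal = List[float]


def convolve(signal: Signal, ir: Signal) -> Signal:
    """Full linear convolution: output length is len(signal) + len(ir) - 1."""
    out = [0.0] * (len(signal) + len(ir) - 1)
    for n, x in enumerate(signal):
        for k, h in enumerate(ir):
            out[n + k] += x * h
    return out


def render_binaural(dry: Signal, bir: Tuple[Signal, Signal]) -> Tuple[Signal, Signal]:
    """Apply a (left, right) impulse-response pair to a mono source signal."""
    left_ir, right_ir = bir
    return convolve(dry, left_ir), convolve(dry, right_ir)


def mix(tracks: List[Signal]) -> Signal:
    """Sum rendered tracks sample by sample; shorter tracks are zero-padded."""
    length = max(len(t) for t in tracks)
    return [sum(t[i] for t in tracks if i < len(t)) for i in range(length)]


if __name__ == "__main__":
    # Toy BIR: the right ear hears the source one sample later and louder.
    left, right = render_binaural([1.0, 0.0, 0.0], ([0.5, 0.25], [0.0, 0.8]))
    print(left, right)
```

For several sources, each source would be rendered with its own BIR and the per-ear outputs summed with `mix`. Direct convolution costs O(N·M) per ear; real-time renderers typically use FFT-based (often partitioned) convolution instead.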

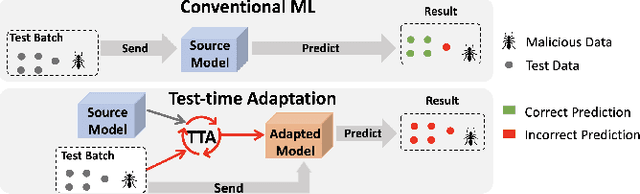

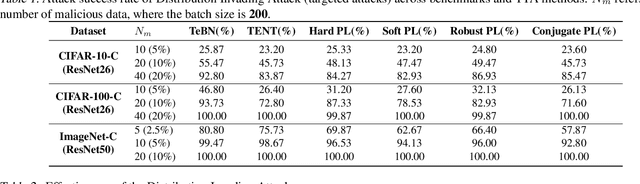

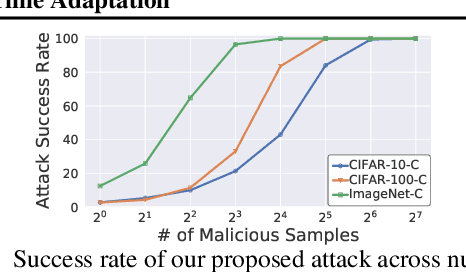

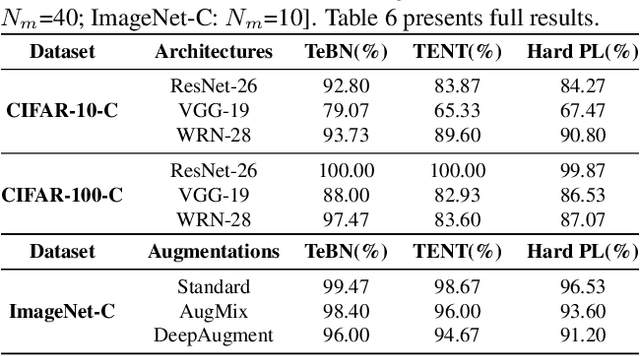

Uncovering Adversarial Risks of Test-Time Adaptation

Feb 04, 2023

Recently, test-time adaptation (TTA) has been proposed as a promising solution for addressing distribution shifts. It allows a base model to adapt to an unforeseen distribution during inference by leveraging information from a batch of (unlabeled) test data. However, we uncover a novel security vulnerability of TTA, based on the insight that predictions on benign samples can be affected by malicious samples in the same batch. To exploit this vulnerability, we propose the Distribution Invading Attack (DIA), which injects a small fraction of malicious data into the test batch. DIA causes models using TTA to misclassify benign, unperturbed test data, giving adversaries an entirely new capability that is infeasible in canonical machine learning pipelines. Through comprehensive evaluations, we demonstrate the high effectiveness of our attack on multiple benchmarks across six TTA methods. In response, we investigate two countermeasures to robustify existing insecure TTA implementations, following the principle of "security by design". We hope our findings make the community aware of the utility-security tradeoffs in deploying TTA and provide valuable insights for developing robust TTA approaches.
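The vulnerability rests on TTA pooling information across the whole test batch. One common instance, assumed here because the abstract does not say which mechanism each of the six TTA methods uses, is re-estimating batch-normalization statistics from the test batch, so every sample's normalized feature depends on every other sample. The toy sketch below, with hypothetical names and hand-picked values, shows two injected samples changing the predictions for benign samples that were never modified; it is not the DIA attack itself.

```python
from typing import List


def batch_norm(batch: List[float], eps: float = 1e-5) -> List[float]:
    """Normalize a scalar feature with mean/variance computed from the batch itself."""
    mu = sum(batch) / len(batch)
    var = sum((x - mu) ** 2 for x in batch) / len(batch)
    return [(x - mu) / (var + eps) ** 0.5 for x in batch]


def classify(z: float) -> int:
    """Toy decision rule on the normalized feature: class 1 if positive, else 0."""
    return 1 if z > 0 else 0


benign = [0.2, 0.5, 0.9, 1.4, 0.3, 0.6, 1.1, 1.2]
clean_preds = [classify(z) for z in batch_norm(benign)]

# Two large-valued malicious samples (20% of the batch) drag the batch mean upward.
malicious = [8.0, 8.0]
poisoned = batch_norm(benign + malicious)
poisoned_preds = [classify(z) for z in poisoned[: len(benign)]]

print(clean_preds)     # → [0, 0, 1, 1, 0, 0, 1, 1]
print(poisoned_preds)  # → [0, 0, 0, 0, 0, 0, 0, 0]
```

Real TTA methods may also update model parameters during inference; the sketch isolates only the shared-statistics path through which samples in one batch influence each other.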

State Machine-based Waveforms for Channels With 1-Bit Quantization and Oversampling With Time-Instance Zero-Crossing Modulation

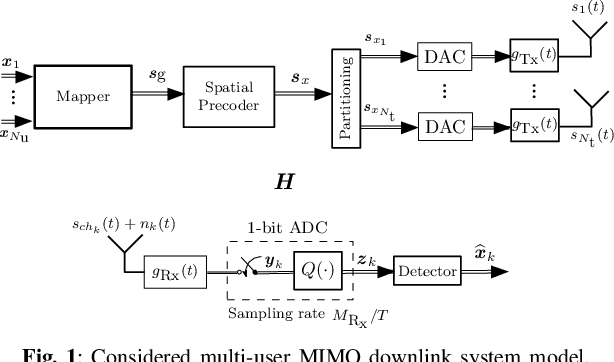

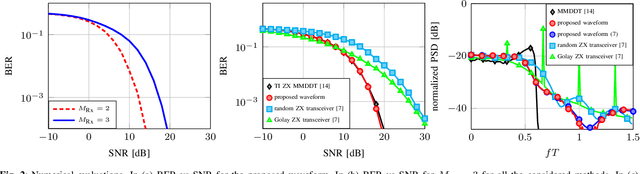

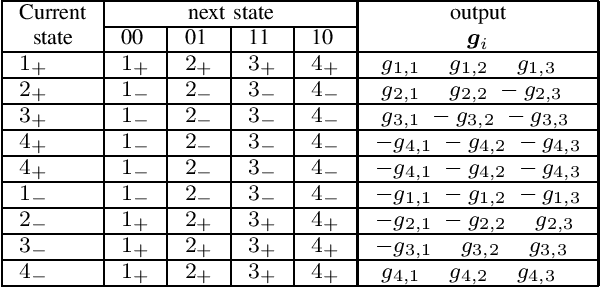

Feb 04, 2023

Systems with 1-bit quantization and oversampling are promising for Internet of Things (IoT) devices because they reduce the power consumption of the analog-to-digital converters. The novel time-instance zero-crossing (TI ZX) modulation is a promising approach for such channels, but existing studies rely on optimization problems with high computational complexity and delay. In this work, we propose a practical waveform design based on the established TI ZX modulation for a multiuser multiple-input multiple-output (MIMO) downlink scenario with 1-bit quantization and temporal oversampling at the receivers. The proposed temporal transmit signals are constructed by concatenating segments of coefficients that convey the information in the time instances of the zero-crossings, according to the TI ZX mapping rules. The proposed waveform design is compared with other methods from the literature in terms of bit error rate and normalized power spectral density. Numerical results show that the proposed technique is suitable for multiuser MIMO systems with 1-bit quantization while tolerating a small amount of out-of-band radiation.
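With 1-bit quantization the receiver only sees the sign of each oversampled sample, so the information has to sit in when the signal crosses zero. The sketch below assumes an oversampling factor of 4 and a deliberately simple, hypothetical rule: each symbol places exactly one zero-crossing at one of the 4 sub-sample positions of its interval. It shows how a receiver could recover those positions from sign samples alone; it is single-user and far simpler than the TI ZX mapping rules and MIMO waveform construction in the paper.

```python
from typing import List

OVERSAMPLING = 4  # assumed number of 1-bit samples per symbol interval


def modulate(symbols: List[int], m: int = OVERSAMPLING, amp: float = 0.7) -> List[float]:
    """Toy waveform with exactly one zero-crossing per symbol interval.

    Symbol s in {0, ..., m-1} places the crossing before sub-sample s of its
    interval. A leading +amp reference sample fixes the starting polarity.
    """
    samples = [amp]
    polarity = 1.0
    for s in symbols:
        if not 0 <= s < m:
            raise ValueError(f"symbol {s} outside 0..{m - 1}")
        samples += [polarity * amp] * s         # samples before the crossing
        polarity = -polarity                    # the crossing position carries the symbol
        samples += [polarity * amp] * (m - s)   # samples after the crossing
    return samples


def quantize_1bit(samples: List[float]) -> List[int]:
    """1-bit ADC: keep only the sign of each sample."""
    return [1 if x >= 0 else -1 for x in samples]


def demodulate(bits: List[int], m: int = OVERSAMPLING) -> List[int]:
    """Recover symbols from where the sign changes in the 1-bit sample stream."""
    crossings = [i for i in range(1, len(bits)) if bits[i] != bits[i - 1]]
    # Sample i (i >= 1) lies in symbol interval (i - 1) // m at sub-sample (i - 1) % m.
    return [(i - 1) % m for i in crossings]


if __name__ == "__main__":
    tx = [0, 3, 1, 2]  # 2 bits per symbol when m = 4
    print(demodulate(quantize_1bit(modulate(tx))))  # → [0, 3, 1, 2]
```

The abrupt sign flips in this toy waveform have a wide spectrum; limiting that spread is the kind of trade-off reflected in the paper's comparison of normalized power spectral density and out-of-band radiation.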

Design of a Multi-User Wireless Powered Communication System Employing Either Active IRS or AF Relay

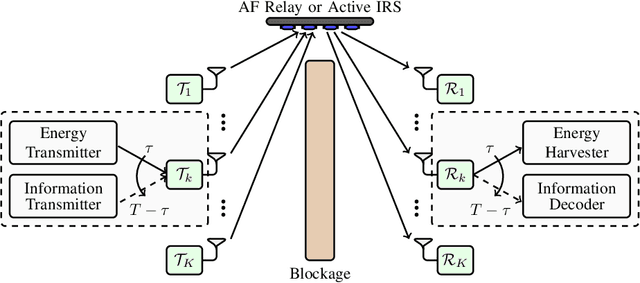

Dec 31, 2022

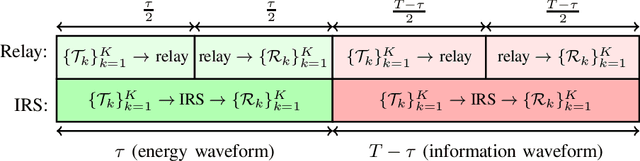

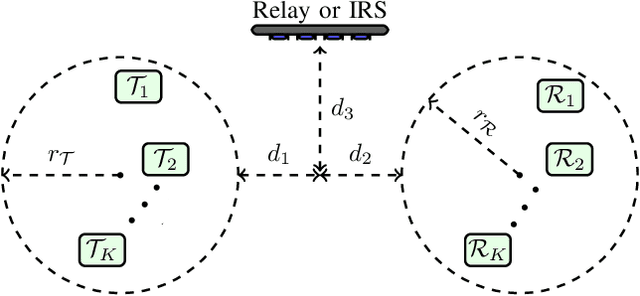

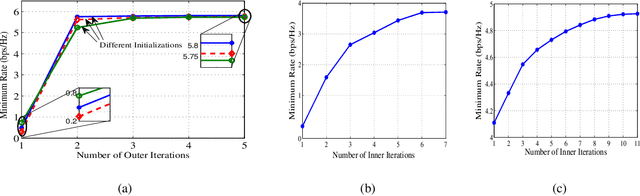

In this paper, we optimize a Wireless Powered Communication (WPC) system with multiple pairs of users, in which single-antenna transmitters send information and power to their receivers with the help of either a multi-antenna Amplify-and-Forward (AF) relay or an active Intelligent Reflecting Surface (IRS). We propose a joint Time Switching (TS) scheme in which transmitters, receivers, and the relay/IRS are in either energy or information transmission/reception modes. Multi-carrier unmodulated and modulated waveforms are used for the Energy Harvesting (EH) and Information Decoding (ID) modes, respectively. To design an optimally fair system, we maximize the minimum rate of all pairs for both the relay and IRS systems through a unified framework. This framework allows us to jointly design energy waveforms, find optimal relay/IRS amplification/reflection matrices, allocate powers for information waveforms, and allocate time durations for the various phases. We also take into account the non-linearity of the EH circuits. The resulting problem is non-convex, so we propose an iterative algorithm based on the Minorization-Maximization (MM) technique, which quickly converges to the optimal solution. Numerical examples show that the proposed method improves the performance of the multi-pair WPC relay/IRS system under various setups.
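The MM technique replaces a hard objective with a sequence of easier surrogate problems. In generic notation (assumed here, not the paper's), to maximize an objective f(x) one builds at each iterate x_k a surrogate g(x | x_k) that lower-bounds f everywhere and touches it at x_k; maximizing the surrogate then cannot decrease f:

```latex
\begin{aligned}
g(x \mid x_k) &\le f(x) \quad \forall x, \qquad g(x_k \mid x_k) = f(x_k),\\
x_{k+1} &= \arg\max_{x} \; g(x \mid x_k),\\
f(x_{k+1}) &\ge g(x_{k+1} \mid x_k) \ge g(x_k \mid x_k) = f(x_k).
\end{aligned}
```

For a max-min rate objective, f would be the minimum rate over all pairs; taking the minimum of per-pair lower bounds that are tight at x_k gives a valid surrogate. Since the objective values increase monotonically and rates are bounded under finite power, the sequence of objective values converges.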

Denoising and Prompt-Tuning for Multi-Behavior Recommendation

Feb 12, 2023

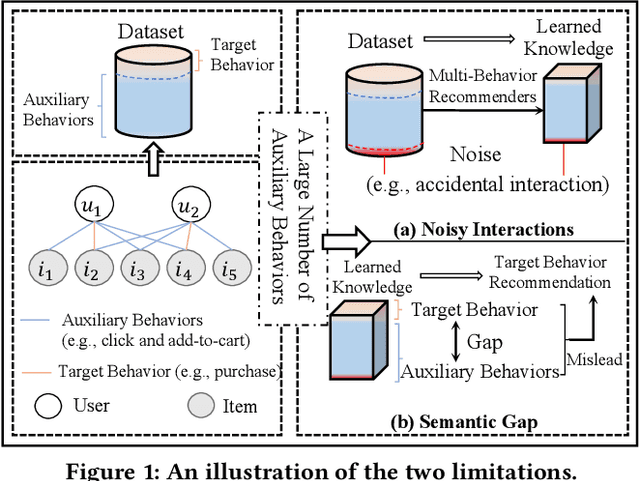

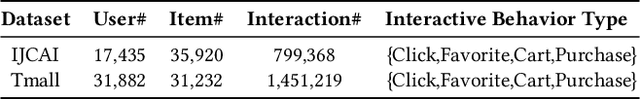

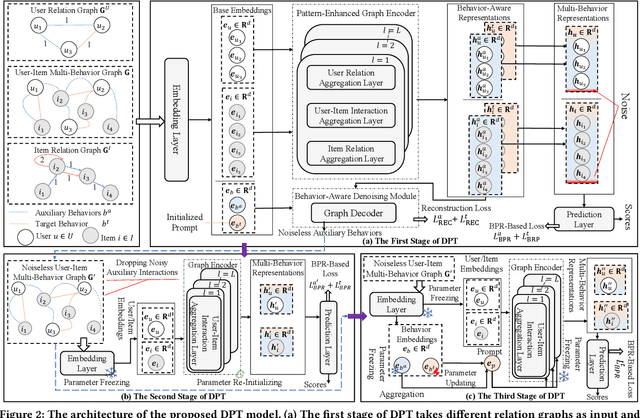

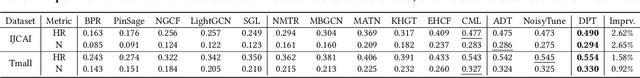

In practical recommendation scenarios, users often interact with items through multiple types of behavior (e.g., click, add-to-cart, and purchase). Traditional collaborative filtering techniques typically assume that users have only a single type of behavior with items, which prevents them from exploiting complex collaborative signals to learn informative representations and infer actual user preferences. Consequently, some pioneering studies model multi-behavior heterogeneity to learn better representations and boost recommendation performance for a target behavior. However, a large number of auxiliary behaviors (i.e., click and add-to-cart) can introduce irrelevant information into recommenders and mislead the recommendation of the target behavior (i.e., purchase), raising two critical challenges: (i) denoising auxiliary behaviors and (ii) bridging the semantic gap between auxiliary and target behaviors. Motivated by this observation, we propose a novel framework, Denoising and Prompt-Tuning (DPT), with a three-stage learning paradigm to address these challenges. In particular, in the first stage DPT uses a pattern-enhanced graph encoder to learn complex patterns as prior knowledge in a data-driven manner, which guides the learning of informative representations and pinpoints reliable noise for the subsequent stages. In the following stages, we adopt different effective and efficient lightweight tuning approaches to further attenuate the influence of noise and alleviate the semantic gap among multi-typed behaviors. Extensive experiments on two real-world datasets demonstrate the superiority of DPT over a wide range of state-of-the-art methods. The implementation code is available online at https://github.com/zc-97/DPT.

ChemVise: Maximizing Out-of-Distribution Chemical Detection with the Novel Application of Zero-Shot Learning

Feb 09, 2023

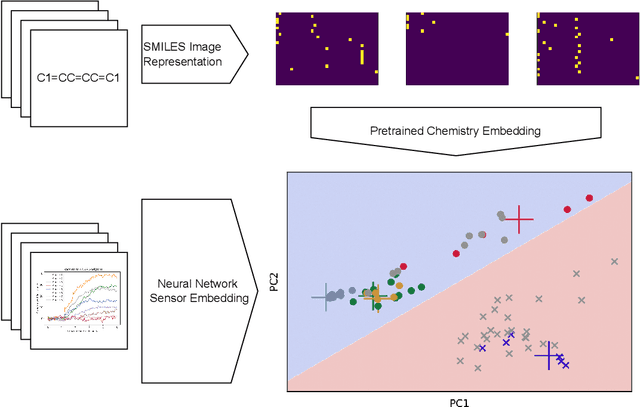

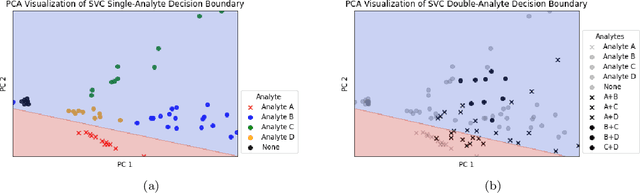

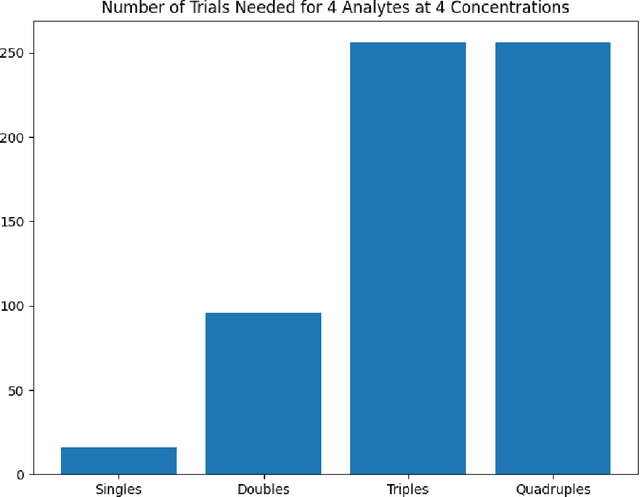

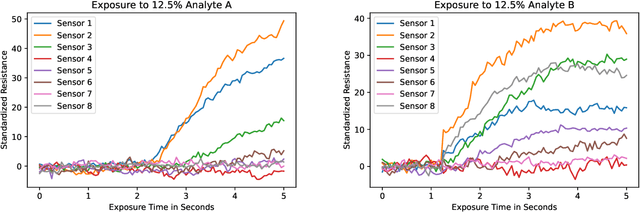

Accurate chemical sensors are vital in medical, military, and home safety applications. Training machine learning models to be accurate on real-world chemical sensor data requires performing many diverse, costly experiments in controlled laboratory settings to create a data set. In practice, even large, expensive data sets may be insufficient for a trained model to generalize to a real-world testing distribution. Rather than performing ever more experiments with exhaustive mixtures of chemical analytes, this research proposes learning approximations of complex exposures from training sets of simple ones, using single-analyte exposure signals as building blocks of a multiple-analyte space. We demonstrate that this approach to synthesizing sensor responses surprisingly improves the detection of out-of-distribution obscured chemical analytes. Further, we pair these synthetic signals with targets in an information-dense representation space that leverages a large corpus of chemistry knowledge. By using semantically meaningful analyte representation spaces together with synthetic targets, we achieve rapid analyte classification in the presence of obscurants without corresponding obscured-analyte training data. Transfer learning for supervised learning with molecular representations makes assumptions about the input data. Instead, we borrow from the natural language and natural image processing literature for a novel approach to chemical sensor signal classification that uses molecular semantics for arbitrary chemical sensor hardware designs.
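The two ideas above, building multiple-analyte signals from single-analyte "building blocks" and labeling signals by their position in a semantic representation space, can be sketched in a few lines. The sketch assumes, purely for illustration, that a mixture's response is a weighted sum of single-analyte responses, and that each label (including an obscured-analyte label with no training signals) has a fixed embedding vector. The analyte names, vectors, and the linear-mixing rule are hypothetical; the paper learns its approximations and uses chemistry-derived representations.

```python
import math
from typing import Dict, List

Vector = List[float]


def synthesize_mixture(responses: Dict[str, Vector], weights: Dict[str, float]) -> Vector:
    """Approximate a multi-analyte response as a weighted sum of single-analyte ones.

    Linear superposition is an illustrative assumption, not a property of real sensors.
    """
    length = len(next(iter(responses.values())))
    out = [0.0] * length
    for name, w in weights.items():
        for i, v in enumerate(responses[name]):
            out[i] += w * v
    return out


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def zero_shot_label(predicted: Vector, label_embeddings: Dict[str, Vector]) -> str:
    """Return the label whose embedding is most similar to a predicted embedding.

    A label only needs an embedding, not training examples of its own.
    """
    return max(label_embeddings, key=lambda name: cosine(predicted, label_embeddings[name]))
```

In a full pipeline, a trained model would map a (synthetic or measured) sensor signal to `predicted`; that model is omitted here.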

A Lite Fireworks Algorithm for Optimization

Jan 07, 2023

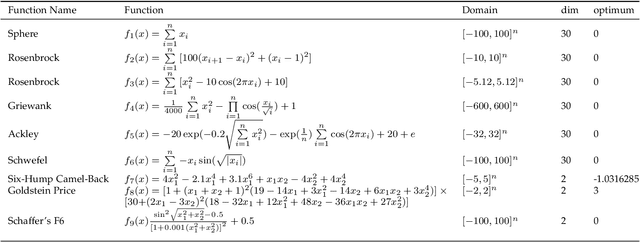

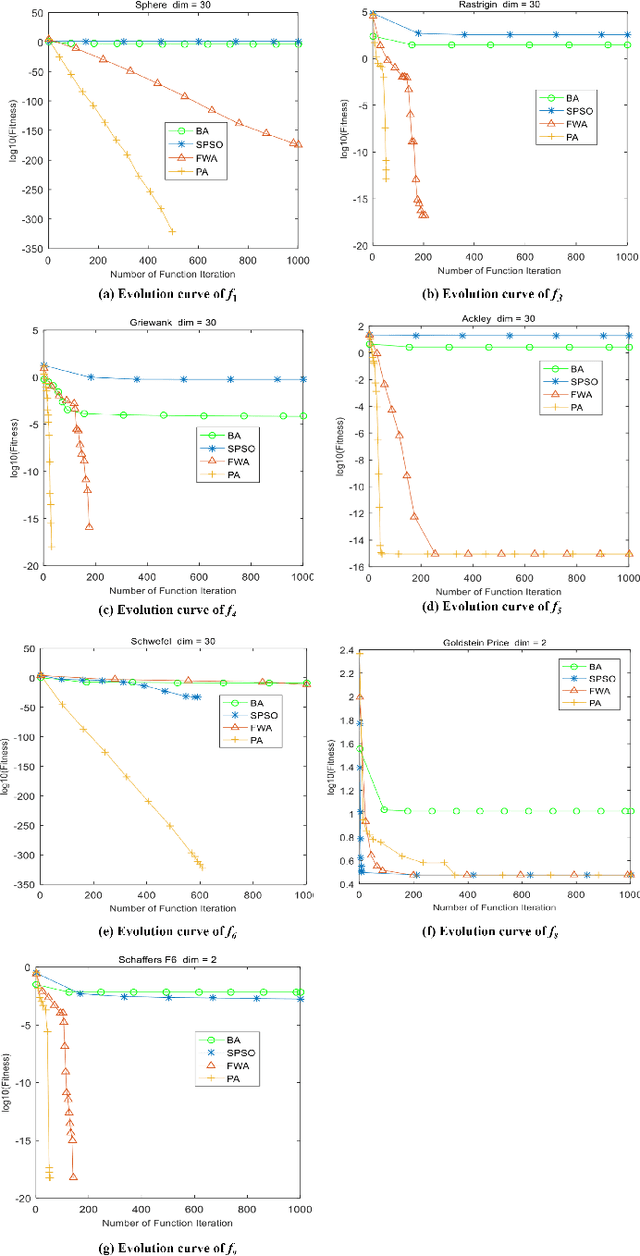

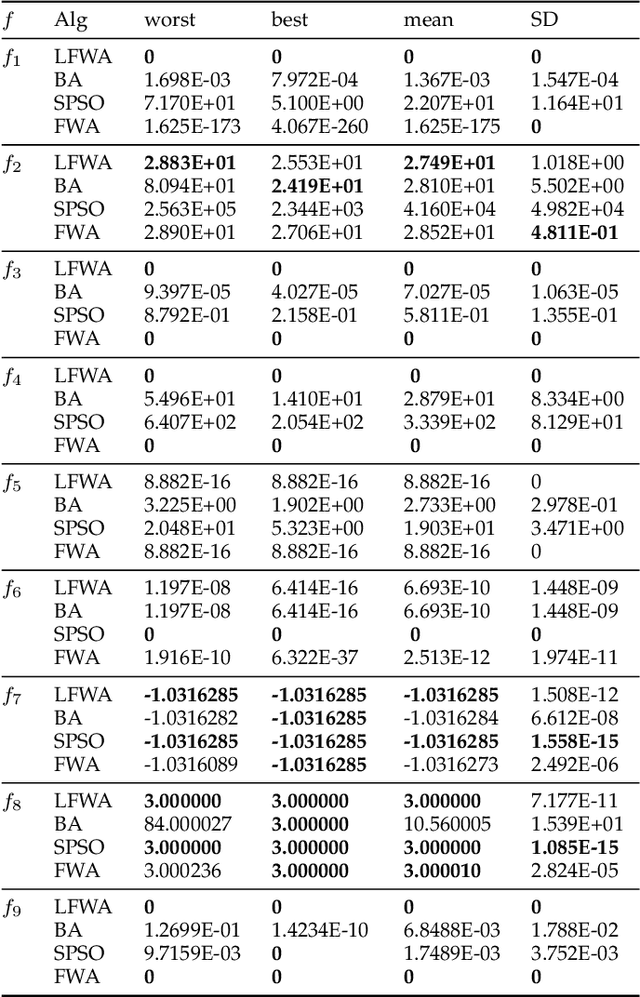

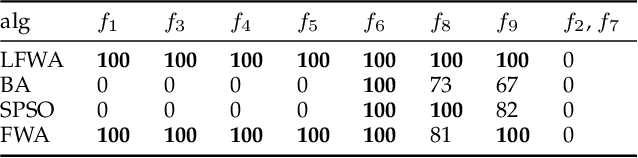

The fireworks algorithm is an optimization algorithm that simulates the explosion of fireworks. Because of its fast convergence and high precision, it is widely used in pattern recognition, optimal scheduling, and other fields. However, most existing research improves the fireworks algorithm by addressing its defects, and little attention has been paid to reducing its number of parameters. The original fireworks algorithm has too many parameters, which increases the cost of tuning the algorithm and hinders engineering applications. In addition, individuals in the fireworks population that are not selected are discarded, wasting their location information. To reduce the number of parameters of the original Fireworks Algorithm and make full use of the location information of discarded individuals, we propose a simplified version, the Lite Fireworks Algorithm (LFWA). It reduces the number of algorithm parameters by redesigning the explosion operator, and it constructs an adaptive explosion radius from historical optimal information to balance local exploitation and global exploration. Comparative experiments on function optimization show that the overall performance of LFWA is better than that of competing algorithms such as the fireworks algorithm, particle swarm optimization, and the bat algorithm.
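For orientation, the basic loop of a fireworks-style optimizer can be sketched as follows: each firework emits sparks uniformly within an explosion radius, the best points survive, and the radius adapts to the search history. This is a generic, simplified variant written for illustration. The radius rule (widen after an improvement, shrink after stagnation), the parameter values, and all names are assumptions, and it is not the LFWA explosion operator from the paper.

```python
import random
from typing import Callable, List, Tuple

Point = List[float]


def fireworks_minimize(
    f: Callable[[Point], float],
    dim: int,
    bounds: Tuple[float, float],
    n_fireworks: int = 5,
    sparks_per_firework: int = 10,
    iterations: int = 200,
    seed: int = 0,
) -> Tuple[Point, float, List[float]]:
    """Minimize f over a box with a toy fireworks-style search.

    Returns (best point, best value, best value after each iteration).
    """
    rng = random.Random(seed)
    lo, hi = bounds
    max_radius = (hi - lo) / 2

    def clip(x: float) -> float:
        return min(max(x, lo), hi)

    fireworks = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_fireworks)]
    best = min(fireworks, key=f)
    best_val = f(best)
    radius = max_radius
    history = [best_val]
    for _ in range(iterations):
        candidates = list(fireworks)
        for fw in fireworks:
            for _ in range(sparks_per_firework):
                # Explosion: a spark is a uniform perturbation within the radius.
                candidates.append([clip(x + rng.uniform(-radius, radius)) for x in fw])
        candidates.sort(key=f)
        fireworks = candidates[:n_fireworks]  # keep the best points (elitist)
        if f(fireworks[0]) < best_val:
            best, best_val = fireworks[0], f(fireworks[0])
            radius = min(radius * 1.2, max_radius)  # improving: widen (exploration)
        else:
            radius *= 0.8  # stagnating: narrow around the best (exploitation)
        history.append(best_val)
    return best, best_val, history
```

Because the previous fireworks stay in the candidate pool, the best point found so far is never dropped, so `history` is non-increasing; the two multipliers are the only tuning knobs added for the radius.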
