"Information": models, code, and papers

Time Series Representation Learning with Supervised Contrastive Temporal Transformer

Mar 16, 2024

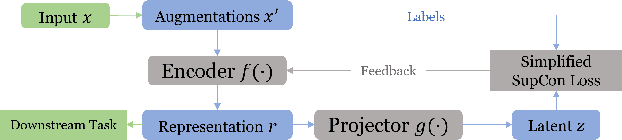

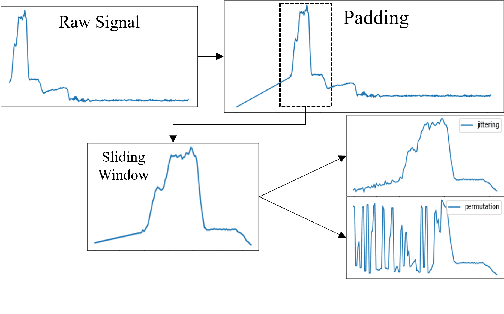

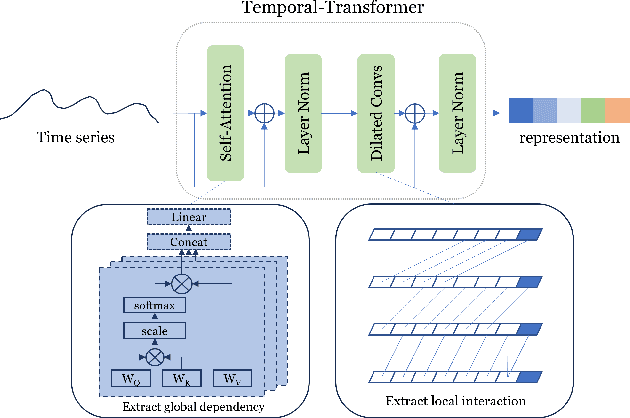

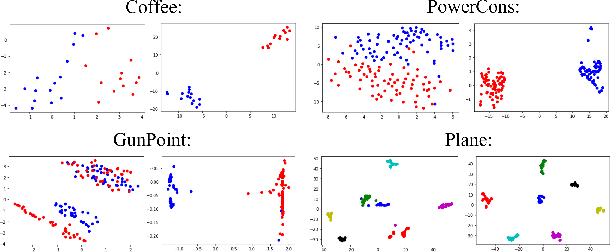

Finding effective representations for time series data is a useful but challenging task. Several works utilize self-supervised or unsupervised learning methods to address this. However, there still remains the open question of how to leverage available label information for better representations. To answer this question, we exploit pre-existing techniques in time series and representation learning domains and develop a simple, yet novel fusion model, called the Supervised COntrastive Temporal Transformer (SCOTT). We first investigate suitable augmentation methods for various types of time series data to assist with learning change-invariant representations. Secondly, we combine Transformer and Temporal Convolutional Networks in a simple way to efficiently learn both global and local features. Finally, we simplify Supervised Contrastive Loss for representation learning of labelled time series data. We preliminarily evaluate SCOTT on a downstream task, Time Series Classification, using 45 datasets from the UCR archive. The results show that with the representations learnt by SCOTT, even a weak classifier can perform similarly to or better than existing state-of-the-art models (best performance on 23/45 datasets and highest rank against 9 baseline models). Afterwards, we investigate SCOTT's ability to address a real-world task, online Change Point Detection (CPD), on two datasets: a human activity dataset and a surgical patient dataset. We show that the model performs with high reliability and efficiency on the online CPD problem (~98% and ~97% area under the precision-recall curve, respectively). Furthermore, we demonstrate the model's potential in tackling early detection and show it performs best compared to other candidates.
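As a rough illustration of the loss family this abstract builds on, here is a minimal pure-Python sketch of the standard supervised contrastive loss (samples sharing a label are pulled together, all others pushed apart). It is not SCOTT's simplified variant, which the abstract does not specify; the function name, the `temperature` default, and the toy data are assumptions made for illustration.

```python
import math


def supervised_contrastive_loss(embeddings, labels, temperature=0.1):
    """Standard supervised contrastive loss, averaged over anchors
    that have at least one positive (same-label) sample.

    Illustrative sketch only; not the simplified loss used by SCOTT.
    """
    # L2-normalise each embedding so dot products are cosine similarities.
    normed = []
    for vec in embeddings:
        norm = math.sqrt(sum(x * x for x in vec)) or 1.0
        normed.append([x / norm for x in vec])

    n = len(normed)
    total, num_anchors = 0.0, 0
    for i in range(n):
        # Temperature-scaled similarity of anchor i to every other sample.
        sims = {
            j: sum(a * b for a, b in zip(normed[i], normed[j])) / temperature
            for j in range(n)
            if j != i
        }
        positives = [j for j in sims if labels[j] == labels[i]]
        if not positives:
            continue  # an anchor without positives contributes nothing

        # Numerically stable log-sum-exp over all non-anchor samples.
        top = max(sims.values())
        log_denom = top + math.log(sum(math.exp(s - top) for s in sims.values()))

        # Average negative log-probability assigned to the positives.
        total += -sum(sims[j] - log_denom for j in positives) / len(positives)
        num_anchors += 1

    return total / num_anchors if num_anchors else 0.0


# Toy data: two tight clusters in 2-D.
points = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
loss_matching = supervised_contrastive_loss(points, [0, 0, 1, 1])
loss_mismatched = supervised_contrastive_loss(points, [0, 1, 0, 1])
```

When the labels agree with the geometric clusters the loss is close to zero; when they cut across the clusters it is much larger, which is the signal that drives same-class representations together.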

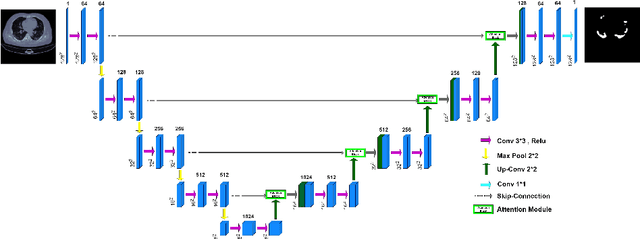

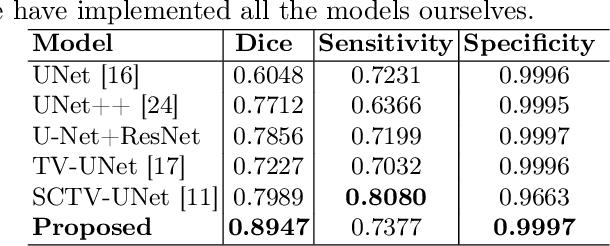

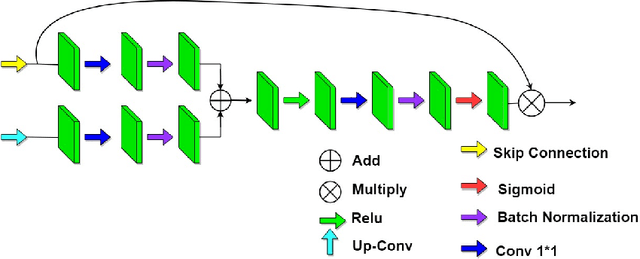

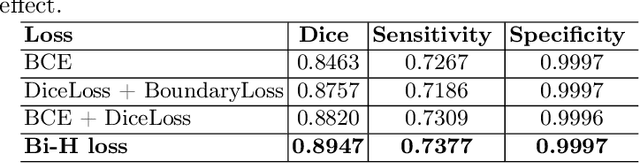

COVID-CT-H-UNet: a novel COVID-19 CT segmentation network based on attention mechanism and Bi-category Hybrid loss

Mar 16, 2024

Since 2019, the global COVID-19 outbreak has emerged as a crucial focus in healthcare research. Although RT-PCR stands as the primary method for COVID-19 detection, its extended detection time poses a significant challenge. Consequently, supplementing RT-PCR with the pathological study of COVID-19 through CT imaging has become imperative. The current segmentation approach based on TVLoss enhances the connectivity of afflicted areas. Nevertheless, it tends to misclassify normal pixels between certain adjacent diseased regions as diseased pixels. The typical binary cross-entropy (BCE) based U-shaped network attends to the entire CT image without emphasizing the affected regions, which results in hazy borders and low contrast in the predicted output. In addition, the fraction of infected pixels in CT images is small, which makes it challenging for segmentation models to make accurate predictions. In this paper, we propose COVID-CT-H-UNet, a COVID-19 CT segmentation network to solve these problems. To recognize the unaffected pixels between neighbouring diseased regions, extra visual layer information is captured by combining the attention module on the skip connections with the proposed composite function Bi-category Hybrid Loss. The issue of hazy boundaries and poor contrast brought on by the BCE Loss in conventional techniques is resolved by utilizing the composite function Bi-category Hybrid Loss, which concentrates on the pixels in the diseased area. The experiments show that, compared to previous COVID-19 segmentation networks, the segmentation performance of the proposed COVID-CT-H-UNet is greatly improved, and it may be used to identify and study clinical COVID-19.

Reliable Spatial-Temporal Voxels For Multi-Modal Test-Time Adaptation

Mar 15, 2024

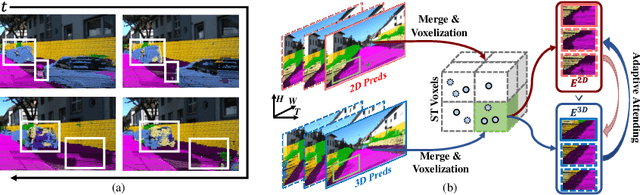

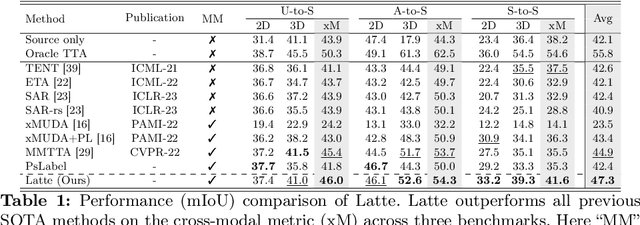

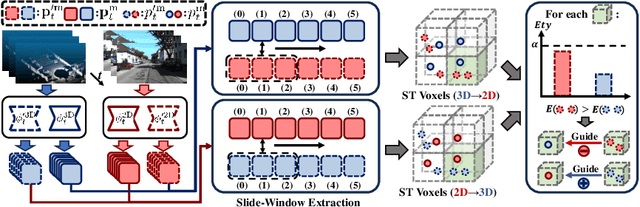

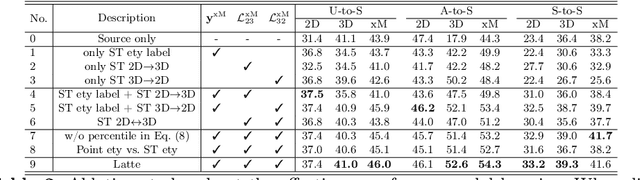

Multi-modal test-time adaptation (MM-TTA) is proposed to adapt models to an unlabeled target domain by leveraging the complementary multi-modal inputs in an online manner. Previous MM-TTA methods rely on predictions of cross-modal information in each input frame, while they ignore the fact that predictions of geometric neighborhoods within consecutive frames are highly correlated, leading to unstable predictions across time. To fill this gap, we propose ReLiable Spatial-temporal Voxels (Latte), an MM-TTA method that leverages reliable cross-modal spatial-temporal correspondences for multi-modal 3D segmentation. Motivated by the fact that reliable predictions should be consistent with their spatial-temporal correspondences, Latte aggregates consecutive frames in a sliding-window manner and constructs spatial-temporal (ST) voxels to capture temporally local prediction consistency for each modality. After filtering out ST voxels with high ST entropy, Latte conducts cross-modal learning for each point and pixel by attending to those with reliable and consistent predictions among both spatial and temporal neighborhoods. Experimental results show that Latte achieves state-of-the-art performance on three different MM-TTA benchmarks compared to previous MM-TTA or TTA methods.
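The entropy-filtering step can be pictured with a small pure-Python sketch. It assumes that a voxel's entropy is the Shannon entropy of the mean class distribution over the points that fall into it; the abstract does not give Latte's exact definition of ST entropy, so this definition, the function names, and the threshold value are all hypothetical.

```python
import math


def voxel_entropy(point_probs):
    """Shannon entropy (in nats) of the mean class distribution in one voxel.

    `point_probs` is a list of per-point class-probability vectors.
    Assumed definition for illustration; not taken from the Latte paper.
    """
    num_classes = len(point_probs[0])
    mean = [
        sum(p[c] for p in point_probs) / len(point_probs)
        for c in range(num_classes)
    ]
    return -sum(q * math.log(q) for q in mean if q > 0.0)


def filter_reliable_voxels(voxels, max_entropy):
    """Keep only voxels whose aggregated prediction is low-entropy."""
    return {
        voxel_id: probs
        for voxel_id, probs in voxels.items()
        if voxel_entropy(probs) <= max_entropy
    }


# Two voxels aggregated over a window of frames (two classes).
voxels = {
    "consistent": [[0.9, 0.1], [0.95, 0.05]],  # points agree across frames
    "conflicting": [[0.9, 0.1], [0.1, 0.9]],   # points disagree across frames
}
reliable = filter_reliable_voxels(voxels, max_entropy=0.5)
```

In this toy case the voxel whose points disagree averages to a near-uniform distribution, so its entropy is close to ln 2 and it is dropped, while the consistent voxel is kept.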

A Novel Framework for Multi-Person Temporal Gaze Following and Social Gaze Prediction

Mar 15, 2024

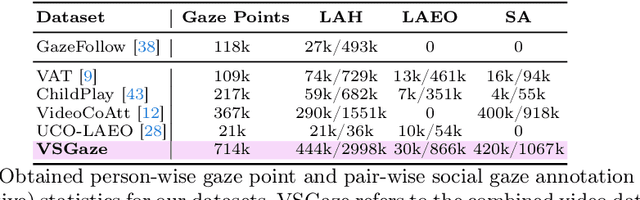

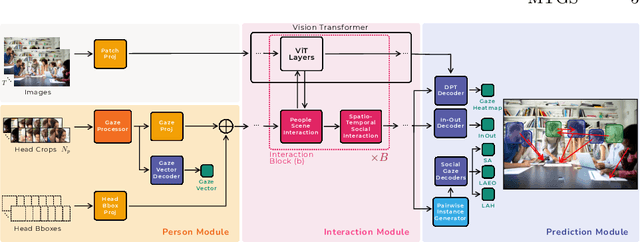

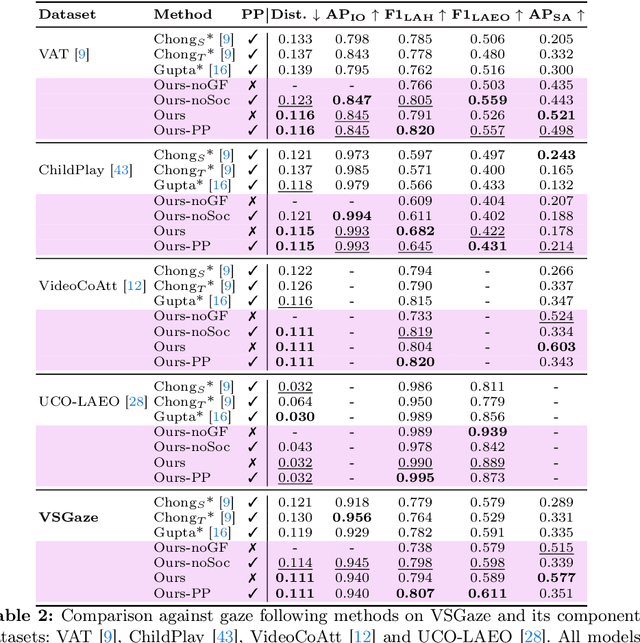

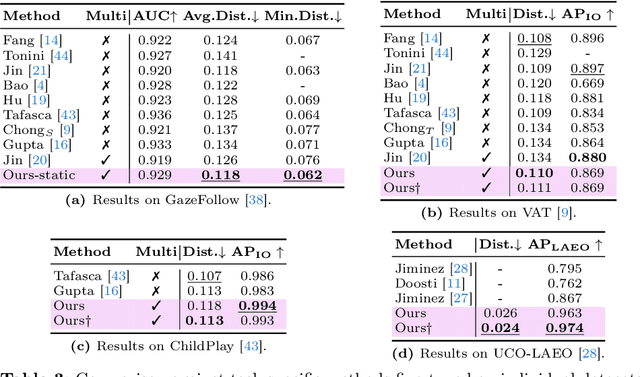

Gaze following and social gaze prediction are fundamental tasks providing insights into human communication behaviors, intent, and social interactions. Most previous approaches addressed these tasks separately, either by designing highly specialized social gaze models that do not generalize to other social gaze tasks or by considering social gaze inference as an ad-hoc post-processing of the gaze following task. Furthermore, the vast majority of gaze following approaches have proposed static models that can handle only one person at a time, therefore failing to take advantage of social interactions and temporal dynamics. In this paper, we address these limitations and introduce a novel framework to jointly predict the gaze target and social gaze label for all people in the scene. The framework comprises: (i) a temporal, transformer-based architecture that, in addition to image tokens, handles person-specific tokens capturing the gaze information related to each individual; and (ii) a new dataset, VSGaze, that unifies annotation types across multiple gaze following and social gaze datasets. We show that our model trained on VSGaze can address all tasks jointly, and achieves state-of-the-art results for multi-person gaze following and social gaze prediction.

HawkEye: Training Video-Text LLMs for Grounding Text in Videos

Mar 15, 2024

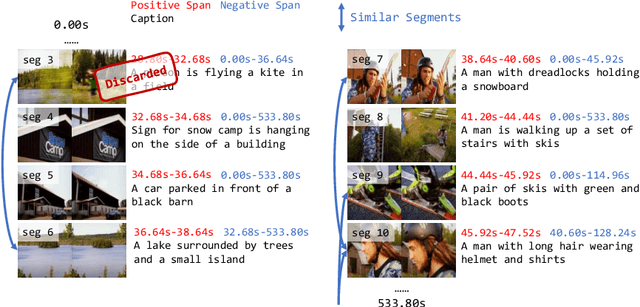

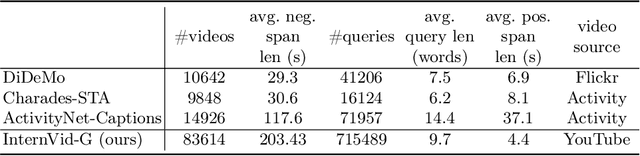

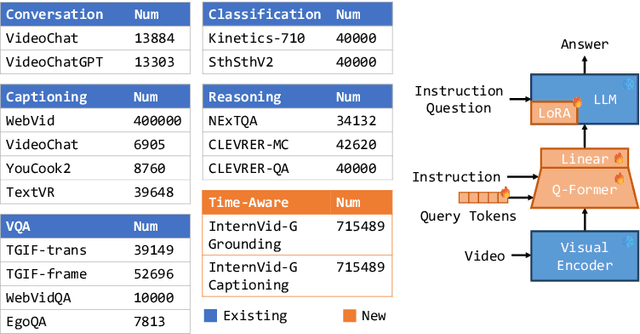

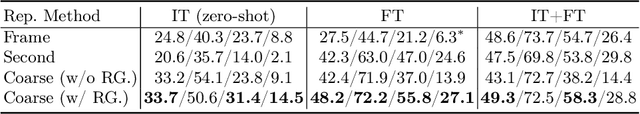

Video-text Large Language Models (video-text LLMs) have shown remarkable performance in answering questions and holding conversations on simple videos. However, they perform almost the same as random on grounding text queries in long and complicated videos, having little ability to understand and reason about temporal information, which is the most fundamental difference between videos and images. In this paper, we propose HawkEye, one of the first video-text LLMs that can perform temporal video grounding in a fully text-to-text manner. To collect training data that is applicable for temporal video grounding, we construct InternVid-G, a large-scale video-text corpus with segment-level captions and negative spans, with which we introduce two new time-aware training objectives to video-text LLMs. We also propose a coarse-grained method of representing segments in videos, which is more robust and easier for LLMs to learn and follow than the alternatives. Extensive experiments show that HawkEye is better at temporal video grounding than existing video-text LLMs and comparable to them on other video-text tasks, which verifies its superior video-text multi-modal understanding abilities.

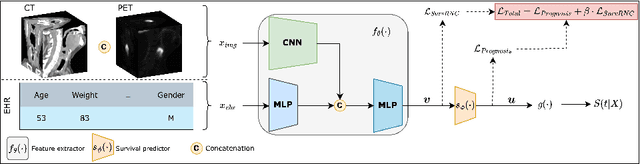

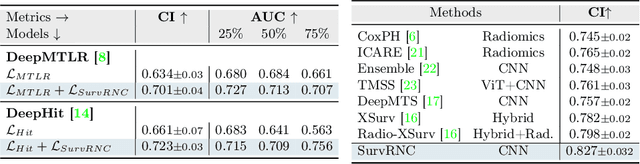

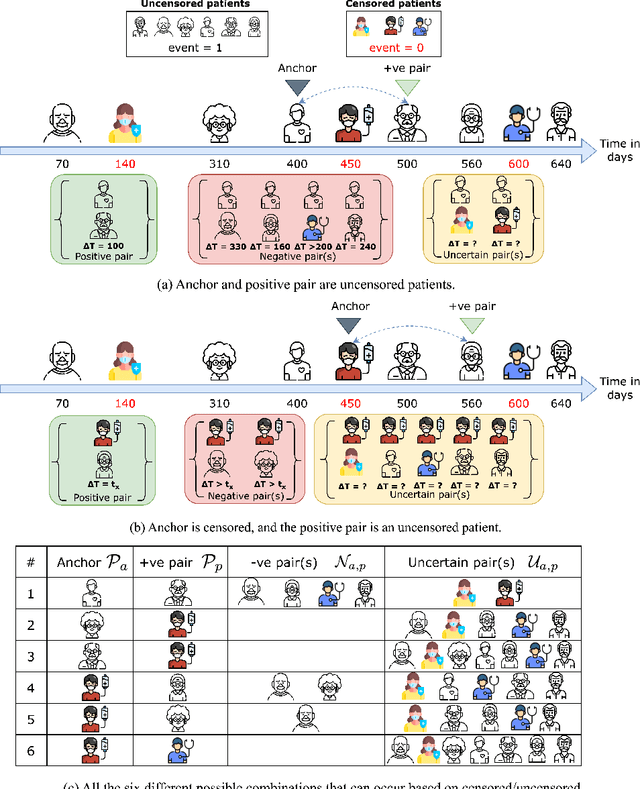

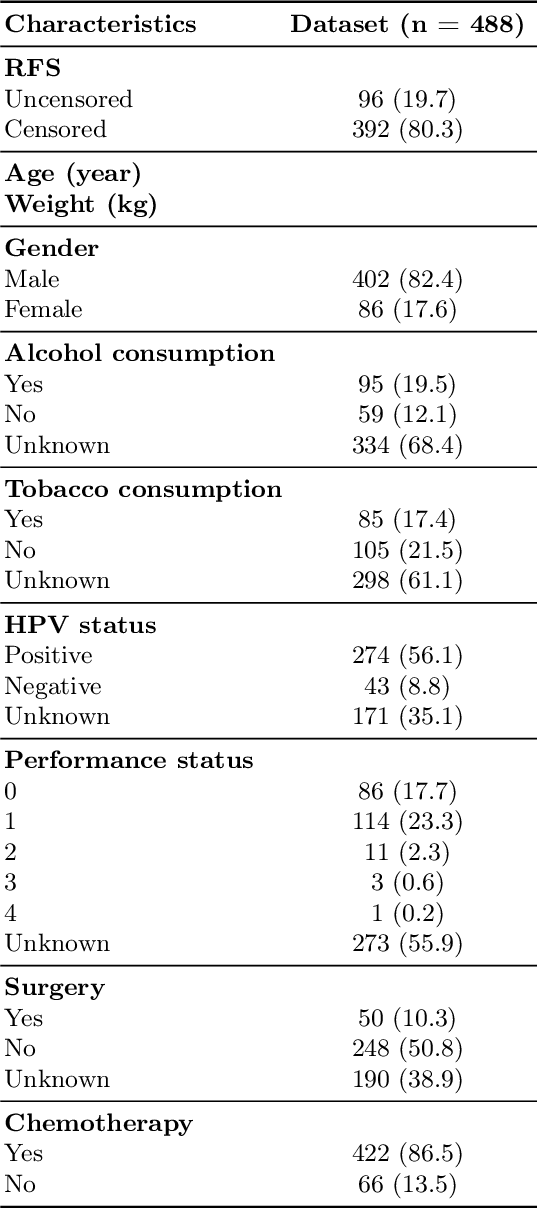

SurvRNC: Learning Ordered Representations for Survival Prediction using Rank-N-Contrast

Mar 15, 2024

Predicting the likelihood of survival is of paramount importance for individuals diagnosed with cancer as it provides invaluable information regarding prognosis at an early stage. This knowledge enables the formulation of effective treatment plans that lead to improved patient outcomes. In the past few years, deep learning models have provided a feasible solution for assessing medical images, electronic health records, and genomic data to estimate cancer risk scores. However, these models often fall short of their potential because they struggle to learn regression-aware feature representations. In this study, we propose the Survival Rank-N-Contrast (SurvRNC) method, which introduces a loss function as a regularizer to obtain an ordered representation based on the survival times. This function can handle censored data and can be incorporated into any survival model to ensure that the learned representation is ordinal. The model was extensively evaluated on the HEad & NeCK TumOR (HECKTOR) segmentation and outcome-prediction task dataset. We demonstrate that using the SurvRNC method for training can achieve higher performance on different deep survival models. Additionally, it outperforms state-of-the-art methods by 3.6% on the concordance index. The code is publicly available at https://github.com/numanai/SurvRNC
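For readers unfamiliar with the metric quoted above, the following is a minimal sketch of Harrell's concordance index with right-censoring, written in plain Python. It is a textbook formulation, not code from the SurvRNC repository; the function name and the toy cohort are illustrative assumptions.

```python
def concordance_index(times, events, risks):
    """Harrell's C-index for right-censored survival data.

    times  : observed time for each subject (event or censoring time)
    events : 1 if the event was observed, 0 if the subject was censored
    risks  : predicted risk score (higher means shorter expected survival)

    A pair (i, j) is comparable when subject i had an observed event
    strictly before subject j's observed time.
    """
    concordant, comparable = 0.0, 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a censored subject cannot be the earlier member of a pair
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0   # earlier event received the higher risk
                elif risks[i] == risks[j]:
                    concordant += 0.5   # tied risk scores count as half
    if comparable == 0:
        raise ValueError("no comparable pairs; the C-index is undefined")
    return concordant / comparable


# Toy cohort: the third subject is censored at t = 6.
times = [2, 4, 6, 8]
events = [1, 1, 0, 1]
c_perfect = concordance_index(times, events, [0.9, 0.7, 0.5, 0.1])
c_reversed = concordance_index(times, events, [0.1, 0.5, 0.7, 0.9])
```

A value of 1.0 means every comparable pair is ranked correctly, 0.5 corresponds to random ranking, and 0.0 to a fully inverted ranking.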

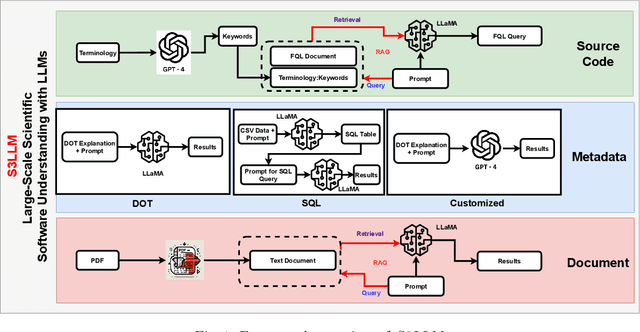

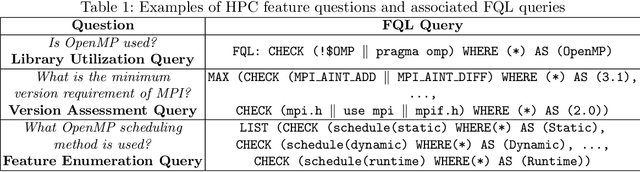

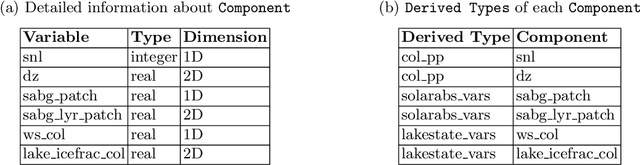

S3LLM: Large-Scale Scientific Software Understanding with LLMs using Source, Metadata, and Document

Mar 15, 2024

The understanding of large-scale scientific software poses significant challenges due to its diverse codebase, extensive code length, and target computing architectures. The emergence of generative AI, specifically large language models (LLMs), provides novel pathways for understanding such complex scientific codes. This paper presents S3LLM, an LLM-based framework designed to enable the examination of source code, code metadata, and summarized information in conjunction with textual technical reports in an interactive, conversational manner through a user-friendly interface. S3LLM leverages open-source LLaMA-2 models to enhance code analysis through the automatic transformation of natural language queries into domain-specific language (DSL) queries. Specifically, it translates these queries into Feature Query Language (FQL), enabling efficient scanning and parsing of entire code repositories. In addition, S3LLM is equipped to handle diverse metadata types, including DOT, SQL, and customized formats. Furthermore, S3LLM incorporates retrieval augmented generation (RAG) and LangChain technologies to directly query extensive documents. S3LLM demonstrates the potential of using locally deployed open-source LLMs for the rapid understanding of large-scale scientific computing software, eliminating the need for extensive coding expertise, and thereby making the process more efficient and effective. S3LLM is available at https://github.com/ResponsibleAILab/s3llm.

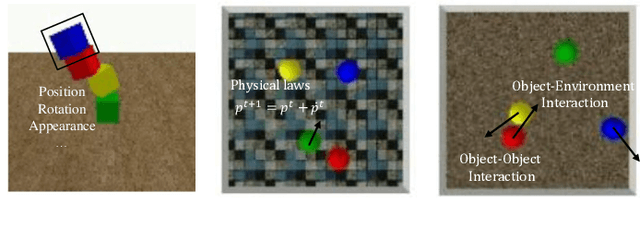

Learning Physical Dynamics for Object-centric Visual Prediction

Mar 15, 2024

The ability to model the underlying dynamics of visual scenes and reason about the future is central to human intelligence. Many attempts have been made to empower intelligent systems with such physical understanding and prediction abilities. However, most existing methods focus on pixel-to-pixel prediction, which suffers from heavy computational costs while lacking a deep understanding of the physical dynamics behind videos. Recently, object-centric prediction methods have emerged and attracted increasing interest. Inspired by them, this paper proposes an unsupervised object-centric prediction model that makes future predictions by learning visual dynamics between objects. Our model consists of two modules: a perceptual module and a dynamic module. The perceptual module is utilized to decompose images into several objects and synthesize images with a set of object-centric representations. The dynamic module fuses contextual information, takes environment-object and object-object interaction into account, and predicts the future trajectory of objects. Extensive experiments are conducted to validate the effectiveness of the proposed method. Both quantitative and qualitative experimental results demonstrate that our model generates predictions of higher visual quality and greater physical reliability than state-of-the-art methods.

CoRAL: Collaborative Retrieval-Augmented Large Language Models Improve Long-tail Recommendation

Mar 11, 2024

Long-tail recommendation is a challenging task for traditional recommender systems, due to data sparsity and data imbalance issues. The recent development of large language models (LLMs) has shown their abilities in complex reasoning, which can help to deduce users' preferences based on very few previous interactions. However, since most LLM-based systems rely on items' semantic meaning as the sole evidence for reasoning, the collaborative information of user-item interactions is neglected, which can cause the LLM's reasoning to be misaligned with task-specific collaborative information of the dataset. To further align LLMs' reasoning to task-specific user-item interaction knowledge, we introduce collaborative retrieval-augmented LLMs, CoRAL, which directly incorporate collaborative evidence into the prompts. Based on the retrieved user-item interactions, the LLM can analyze shared and distinct preferences among users, and summarize the patterns indicating which types of users would be attracted by certain items. The retrieved collaborative evidence prompts the LLM to align its reasoning with the user-item interaction patterns in the dataset. However, since the capacity of the input prompt is limited, finding the minimally-sufficient collaborative information for recommendation tasks can be challenging. We propose to find the optimal interaction set through a sequential decision-making process and develop a retrieval policy learned through a reinforcement learning (RL) framework, CoRAL. Our experimental results show that CoRAL can significantly improve LLMs' reasoning abilities on specific recommendation tasks. Our analysis also reveals that CoRAL can more efficiently explore collaborative information through reinforcement learning.

Regret Minimization in Stackelberg Games with Side Information

Feb 22, 2024

In its most basic form, a Stackelberg game is a two-player game in which a leader commits to a (mixed) strategy, and a follower best-responds. Stackelberg games are perhaps one of the biggest success stories of algorithmic game theory over the last decade, as algorithms for playing in Stackelberg games have been deployed in many real-world domains including airport security, anti-poaching efforts, and cyber-crime prevention. However, these algorithms often fail to take into consideration the additional information available to each player (e.g. traffic patterns, weather conditions, network congestion), a salient feature of reality which may significantly affect both players' optimal strategies. We formalize such settings as Stackelberg games with side information, in which both players observe an external context before playing. The leader then commits to a (possibly context-dependent) strategy, and the follower best-responds to both the leader's strategy and the context. We focus on the online setting in which a sequence of followers arrive over time, and the context may change from round to round. In sharp contrast to the non-contextual version, we show that it is impossible for the leader to achieve good performance (measured by regret) in the full adversarial setting (i.e., when both the context and the follower are chosen by an adversary). However, it turns out that a little bit of randomness goes a long way. Motivated by our impossibility result, we show that no-regret learning is possible in two natural relaxations: the setting in which the sequence of followers is chosen stochastically and the sequence of contexts is adversarial, and the setting in which the sequence of contexts is stochastic and the sequence of followers is chosen by an adversary.
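The basic interaction described above (the leader commits to a mixed strategy, the follower best-responds) can be made concrete with a small Python sketch. The payoff matrices, the function names, and the lowest-index tie-breaking rule are assumptions chosen for illustration; a context-dependent game could be modelled by selecting a different pair of payoff matrices per observed context.

```python
def follower_best_response(leader_mix, follower_payoff):
    """Index of the follower action maximising expected follower utility.

    leader_mix         : probabilities over leader actions
    follower_payoff[a][b] : follower utility when the leader plays a and
                            the follower plays b
    Ties are broken toward the lowest action index (an assumption here).
    """
    num_follower_actions = len(follower_payoff[0])
    expected = [
        sum(p * follower_payoff[a][b] for a, p in enumerate(leader_mix))
        for b in range(num_follower_actions)
    ]
    return max(range(num_follower_actions), key=expected.__getitem__)


def leader_utility(leader_mix, leader_payoff, follower_payoff):
    """Expected leader utility once the follower has best-responded."""
    b = follower_best_response(leader_mix, follower_payoff)
    return sum(p * leader_payoff[a][b] for a, p in enumerate(leader_mix))


# Toy security game: the leader defends target 0 or 1, the follower attacks one.
follower_payoff = [[-1.0, 1.0], [2.0, -1.0]]   # rows: defended target
leader_payoff = [[1.0, -1.0], [-2.0, 1.0]]

response_to_uniform = follower_best_response([0.5, 0.5], follower_payoff)
response_to_pure = follower_best_response([1.0, 0.0], follower_payoff)
utility_uniform = leader_utility([0.5, 0.5], leader_payoff, follower_payoff)
```

Under the uniform commitment the follower attacks target 0, while committing fully to defending target 0 pushes the attack to target 1, which illustrates why the leader's choice of commitment matters.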
