"Information": models, code, and papers

HAMUR: Hyper Adapter for Multi-Domain Recommendation

Sep 12, 2023

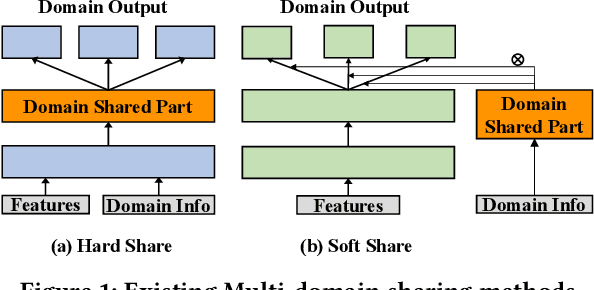

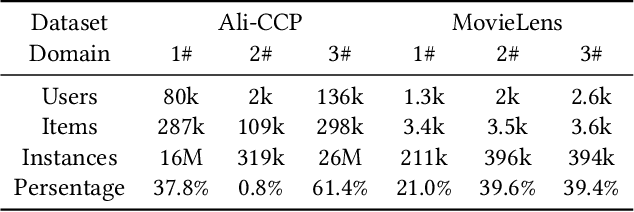

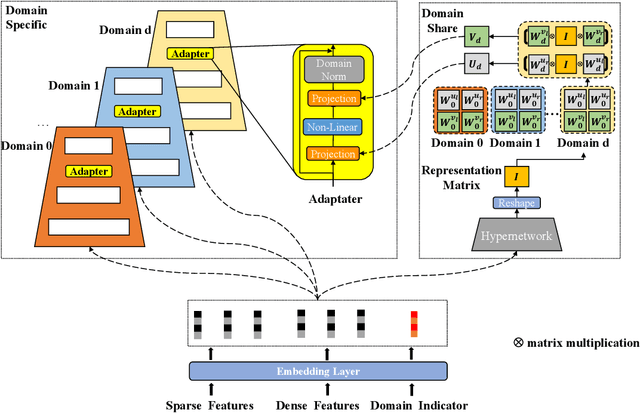

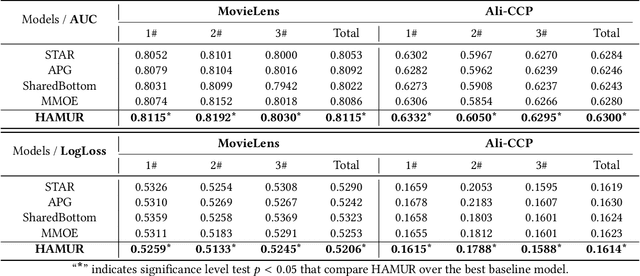

Multi-Domain Recommendation (MDR) has gained significant attention in recent years, which leverages data from multiple domains to enhance their performance concurrently.However, current MDR models are confronted with two limitations. Firstly, the majority of these models adopt an approach that explicitly shares parameters between domains, leading to mutual interference among them. Secondly, due to the distribution differences among domains, the utilization of static parameters in existing methods limits their flexibility to adapt to diverse domains. To address these challenges, we propose a novel model Hyper Adapter for Multi-Domain Recommendation (HAMUR). Specifically, HAMUR consists of two components: (1). Domain-specific adapter, designed as a pluggable module that can be seamlessly integrated into various existing multi-domain backbone models, and (2). Domain-shared hyper-network, which implicitly captures shared information among domains and dynamically generates the parameters for the adapter. We conduct extensive experiments on two public datasets using various backbone networks. The experimental results validate the effectiveness and scalability of the proposed model.

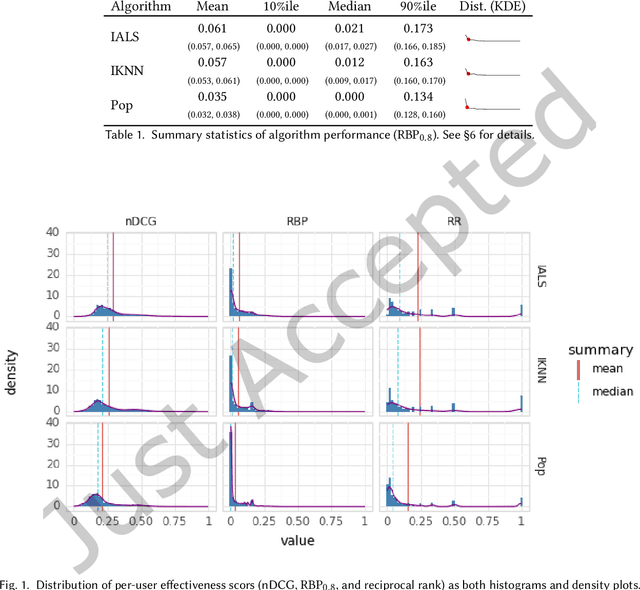

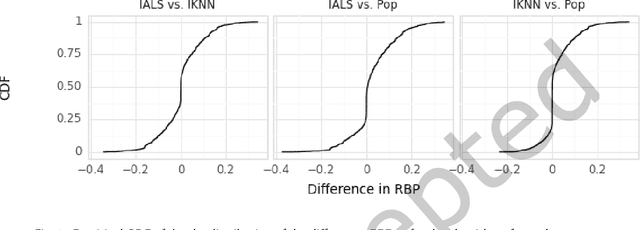

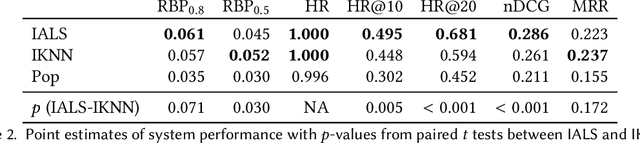

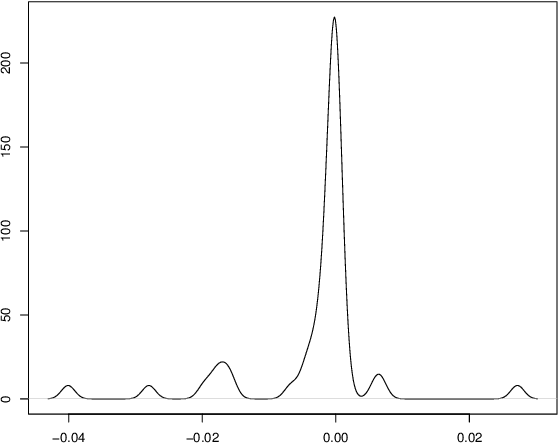

Distributionally-Informed Recommender System Evaluation

Sep 12, 2023

Current practice for evaluating recommender systems typically focuses on point estimates of user-oriented effectiveness metrics or business metrics, sometimes combined with additional metrics for considerations such as diversity and novelty. In this paper, we argue for the need for researchers and practitioners to attend more closely to various distributions that arise from a recommender system (or other information access system) and the sources of uncertainty that lead to these distributions. One immediate implication of our argument is that both researchers and practitioners must report and examine more thoroughly the distribution of utility between and within different stakeholder groups. However, distributions of various forms arise in many more aspects of the recommender systems experimental process, and distributional thinking has substantial ramifications for how we design, evaluate, and present recommender systems evaluation and research results. Leveraging and emphasizing distributions in the evaluation of recommender systems is a necessary step to ensure that the systems provide appropriate and equitably-distributed benefit to the people they affect.

FedJudge: Federated Legal Large Language Model

Sep 15, 2023

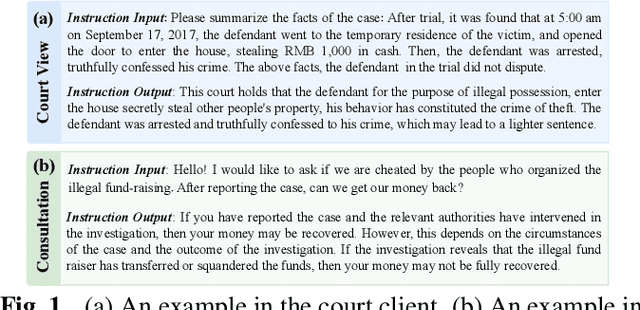

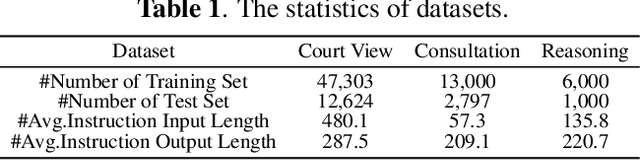

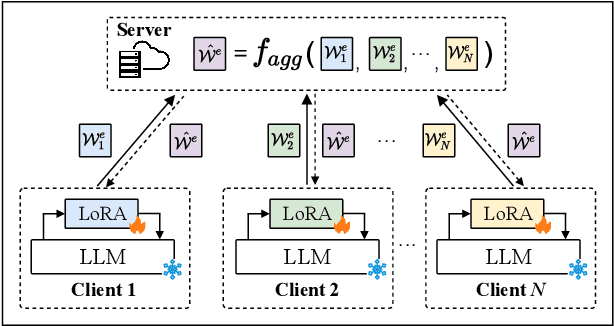

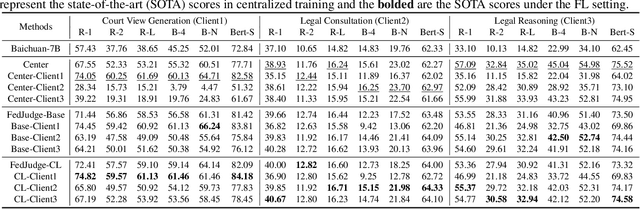

Large Language Models (LLMs) have gained prominence in the field of Legal Intelligence, offering potential applications in assisting legal professionals and laymen. However, the centralized training of these Legal LLMs raises data privacy concerns, as legal data is distributed among various institutions containing sensitive individual information. This paper addresses this challenge by exploring the integration of Legal LLMs with Federated Learning (FL) methodologies. By employing FL, Legal LLMs can be fine-tuned locally on devices or clients, and their parameters are aggregated and distributed on a central server, ensuring data privacy without directly sharing raw data. However, computation and communication overheads hinder the full fine-tuning of LLMs under the FL setting. Moreover, the distribution shift of legal data reduces the effectiveness of FL methods. To this end, in this paper, we propose the first Federated Legal Large Language Model (FedJudge) framework, which fine-tunes Legal LLMs efficiently and effectively. Specifically, FedJudge utilizes parameter-efficient fine-tuning methods to update only a few additional parameters during the FL training. Besides, we explore the continual learning methods to preserve the global model's important parameters when training local clients to mitigate the problem of data shifts. Extensive experimental results on three real-world datasets clearly validate the effectiveness of FedJudge. Code is released at https://github.com/yuelinan/FedJudge.

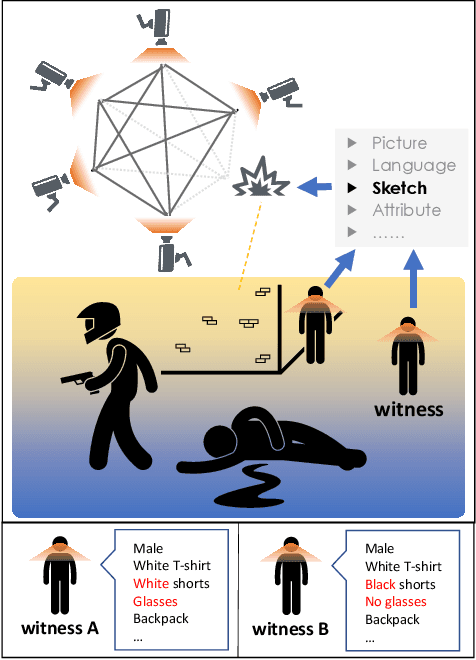

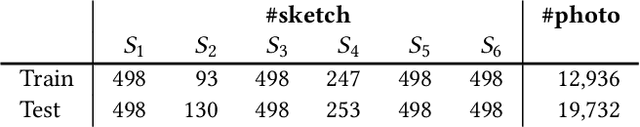

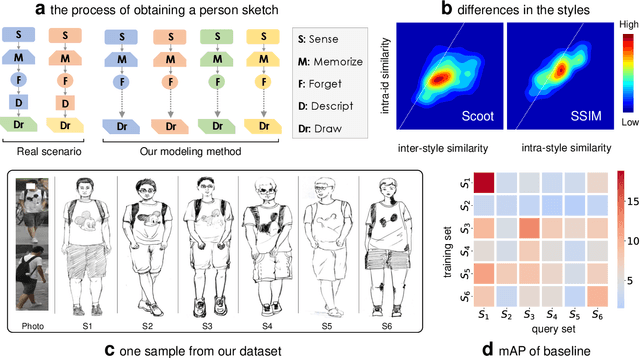

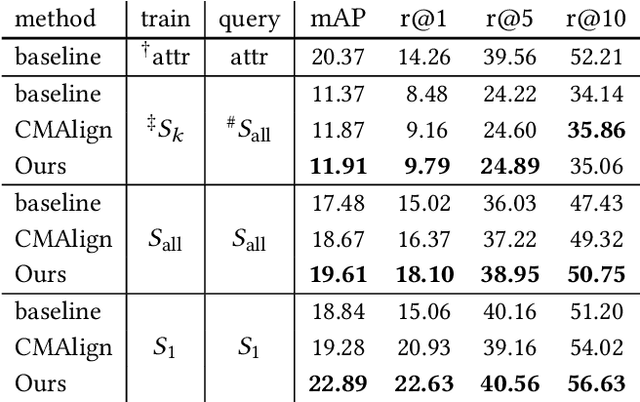

Beyond Domain Gap: Exploiting Subjectivity in Sketch-Based Person Retrieval

Sep 15, 2023

Person re-identification (re-ID) requires densely distributed cameras. In practice, the person of interest may not be captured by cameras and, therefore, needs to be retrieved using subjective information (e.g., sketches from witnesses). Previous research defines this case using the sketch as sketch re-identification (Sketch re-ID) and focuses on eliminating the domain gap. Actually, subjectivity is another significant challenge. We model and investigate it by posing a new dataset with multi-witness descriptions. It features two aspects. 1) Large-scale. It contains over 4,763 sketches and 32,668 photos, making it the largest Sketch re-ID dataset. 2) Multi-perspective and multi-style. Our dataset offers multiple sketches for each identity. Witnesses' subjective cognition provides multiple perspectives on the same individual, while different artists' drawing styles provide variation in sketch styles. We further have two novel designs to alleviate the challenge of subjectivity. 1) Fusing subjectivity. We propose a non-local (NL) fusion module that gathers sketches from different witnesses for the same identity. 2) Introducing objectivity. An AttrAlign module utilizes attributes as an implicit mask to align cross-domain features. To push forward the advance of Sketch re-ID, we set three benchmarks (large-scale, multi-style, cross-style). Extensive experiments demonstrate our leading performance in these benchmarks. Dataset and Codes are publicly available at: https://github.com/Lin-Kayla/subjectivity-sketch-reid

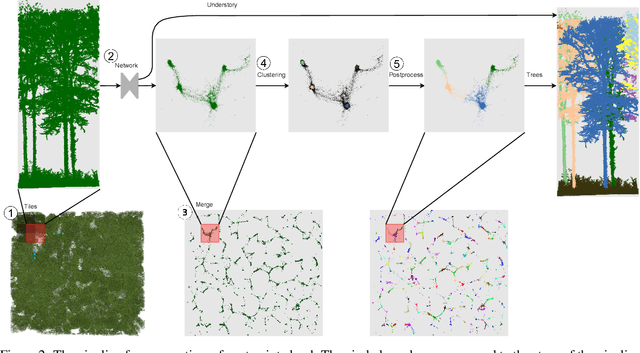

TreeLearn: A Comprehensive Deep Learning Method for Segmenting Individual Trees from Forest Point Clouds

Sep 15, 2023

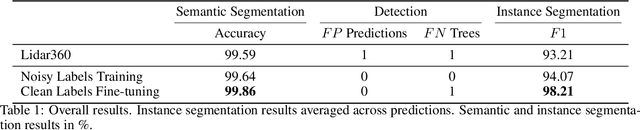

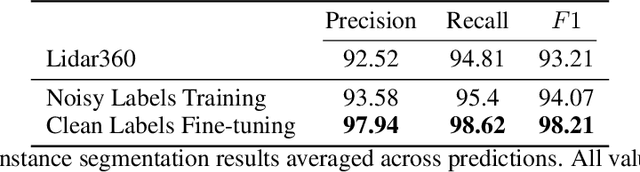

Laser-scanned point clouds of forests make it possible to extract valuable information for forest management. To consider single trees, a forest point cloud needs to be segmented into individual tree point clouds. Existing segmentation methods are usually based on hand-crafted algorithms, such as identifying trunks and growing trees from them, and face difficulties in dense forests with overlapping tree crowns. In this study, we propose \mbox{TreeLearn}, a deep learning-based approach for semantic and instance segmentation of forest point clouds. Unlike previous methods, TreeLearn is trained on already segmented point clouds in a data-driven manner, making it less reliant on predefined features and algorithms. Additionally, we introduce a new manually segmented benchmark forest dataset containing 156 full trees, and 79 partial trees, that have been cleanly segmented by hand. This enables the evaluation of instance segmentation performance going beyond just evaluating the detection of individual trees. We trained TreeLearn on forest point clouds of 6665 trees, labeled using the Lidar360 software. An evaluation on the benchmark dataset shows that TreeLearn performs equally well or better than the algorithm used to generate its training data. Furthermore, the method's performance can be vastly improved by fine-tuning on the cleanly labeled benchmark dataset. The TreeLearn code is availabe from https://github.com/ecker-lab/TreeLearn. The data as well as trained models can be found at https://doi.org/10.25625/VPMPID.

Robust IRS-Element Activation for Energy Efficiency Optimization in IRS-Assisted Communication Systems With Imperfect CSI

Sep 15, 2023In this paper, we study an intelligent reflecting surface (IRS)-aided communication system with single-antenna transmitter and receiver, under imperfect channel state information (CSI). More specifically, we deal with the robust selection of binary (on/off) states of the IRS elements in order to maximize the worst-case energy efficiency (EE), given a bounded CSI uncertainty, while satisfying a minimum signal-to-noise ratio (SNR). In addition, we consider not only continuous but also discrete IRS phase shifts. First, we derive closed-form expressions of the worst-case SNRs, and then formulate the robust (discrete) optimization problems for each case. In the case of continuous phase shifts, we design a dynamic programming (DP) algorithm that is theoretically guaranteed to achieve the global maximum with polynomial complexity $O(L\,{\log L})$, where $L$ is the number of IRS elements. In the case of discrete phase shifts, we develop a convex-relaxation-based method (CRBM) to obtain a feasible (sub-optimal) solution in polynomial time $O(L^{3.5})$, with a posteriori performance guarantee. Furthermore, numerical simulations provide useful insights and confirm the theoretical results. In particular, the proposed algorithms are several orders of magnitude faster than the exhaustive search when $L$ is large, thus being highly scalable and suitable for practical applications. Moreover, both algorithms outperform a baseline scheme, namely, the activation of all IRS elements.

Efficient Polyp Segmentation Via Integrity Learning

Sep 15, 2023

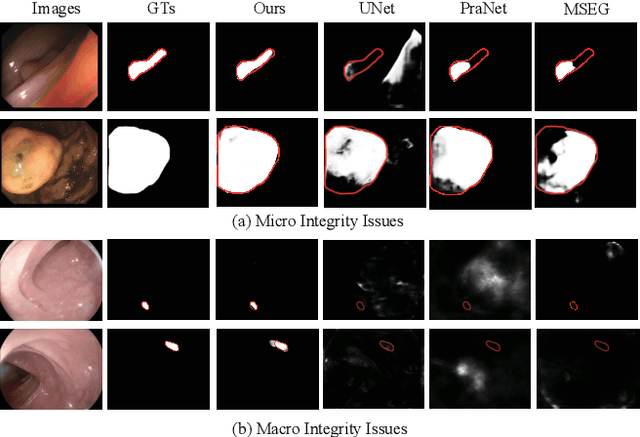

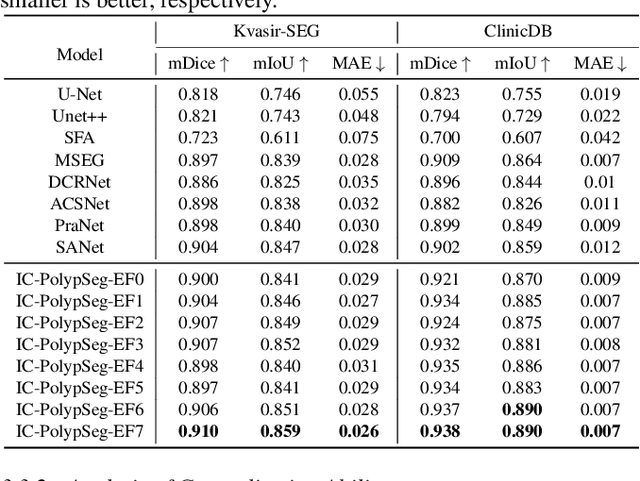

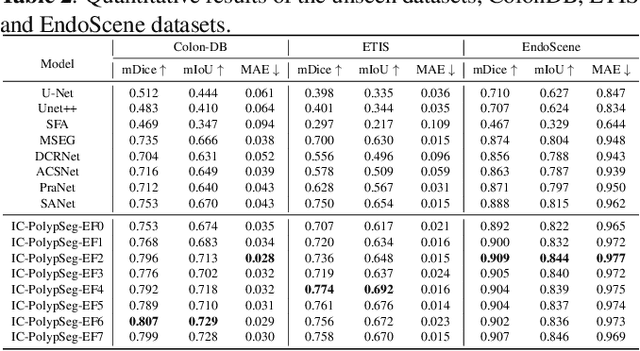

Accurate polyp delineation in colonoscopy is crucial for assisting in diagnosis, guiding interventions, and treatments. However, current deep-learning approaches fall short due to integrity deficiency, which often manifests as missing lesion parts. This paper introduces the integrity concept in polyp segmentation at both macro and micro levels, aiming to alleviate integrity deficiency. Specifically, the model should distinguish entire polyps at the macro level and identify all components within polyps at the micro level. Our Integrity Capturing Polyp Segmentation (IC-PolypSeg) network utilizes lightweight backbones and 3 key components for integrity ameliorating: 1) Pixel-wise feature redistribution (PFR) module captures global spatial correlations across channels in the final semantic-rich encoder features. 2) Cross-stage pixel-wise feature redistribution (CPFR) module dynamically fuses high-level semantics and low-level spatial features to capture contextual information. 3) Coarse-to-fine calibration module combines PFR and CPFR modules to achieve precise boundary detection. Extensive experiments on 5 public datasets demonstrate that the proposed IC-PolypSeg outperforms 8 state-of-the-art methods in terms of higher precision and significantly improved computational efficiency with lower computational consumption. IC-PolypSeg-EF0 employs 300 times fewer parameters than PraNet while achieving a real-time processing speed of 235 FPS. Importantly, IC-PolypSeg reduces the false negative ratio on five datasets, meeting clinical requirements.

Semi-Supervised Semantic Depth Estimation using Symbiotic Transformer and NearFarMix Augmentation

Aug 28, 2023In computer vision, depth estimation is crucial for domains like robotics, autonomous vehicles, augmented reality, and virtual reality. Integrating semantics with depth enhances scene understanding through reciprocal information sharing. However, the scarcity of semantic information in datasets poses challenges. Existing convolutional approaches with limited local receptive fields hinder the full utilization of the symbiotic potential between depth and semantics. This paper introduces a dataset-invariant semi-supervised strategy to address the scarcity of semantic information. It proposes the Depth Semantics Symbiosis module, leveraging the Symbiotic Transformer for achieving comprehensive mutual awareness by information exchange within both local and global contexts. Additionally, a novel augmentation, NearFarMix is introduced to combat overfitting and compensate both depth-semantic tasks by strategically merging regions from two images, generating diverse and structurally consistent samples with enhanced control. Extensive experiments on NYU-Depth-V2 and KITTI datasets demonstrate the superiority of our proposed techniques in indoor and outdoor environments.

Fine-Grained Spatiotemporal Motion Alignment for Contrastive Video Representation Learning

Sep 01, 2023

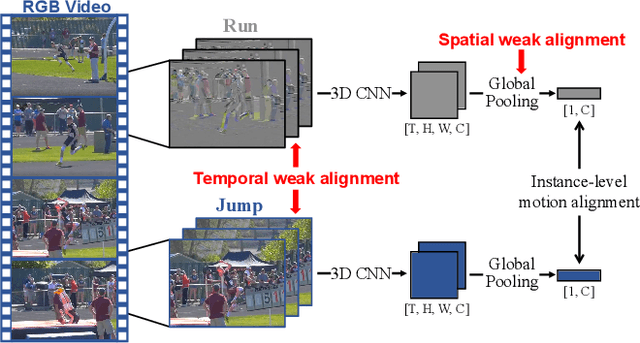

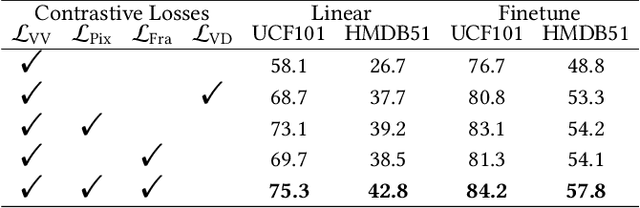

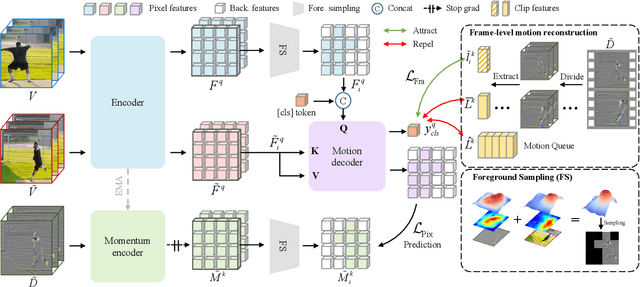

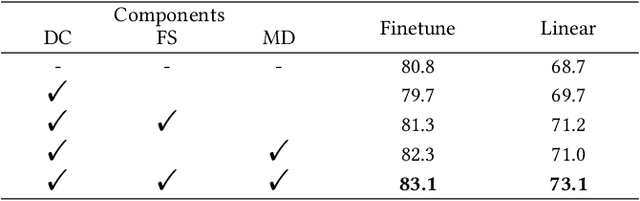

As the most essential property in a video, motion information is critical to a robust and generalized video representation. To inject motion dynamics, recent works have adopted frame difference as the source of motion information in video contrastive learning, considering the trade-off between quality and cost. However, existing works align motion features at the instance level, which suffers from spatial and temporal weak alignment across modalities. In this paper, we present a \textbf{Fi}ne-grained \textbf{M}otion \textbf{A}lignment (FIMA) framework, capable of introducing well-aligned and significant motion information. Specifically, we first develop a dense contrastive learning framework in the spatiotemporal domain to generate pixel-level motion supervision. Then, we design a motion decoder and a foreground sampling strategy to eliminate the weak alignments in terms of time and space. Moreover, a frame-level motion contrastive loss is presented to improve the temporal diversity of the motion features. Extensive experiments demonstrate that the representations learned by FIMA possess great motion-awareness capabilities and achieve state-of-the-art or competitive results on downstream tasks across UCF101, HMDB51, and Diving48 datasets. Code is available at \url{https://github.com/ZMHH-H/FIMA}.

CPSP: Learning Speech Concepts From Phoneme Supervision

Sep 01, 2023

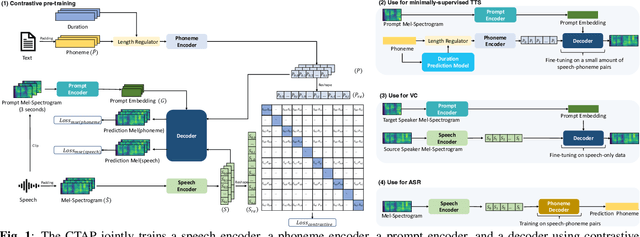

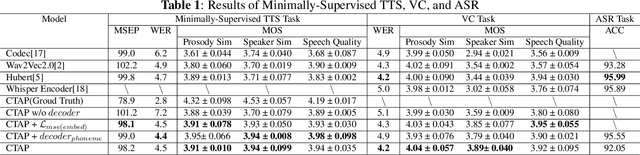

For fine-grained generation and recognition tasks such as minimally-supervised text-to-speech (TTS), voice conversion (VC), and automatic speech recognition (ASR), the intermediate representation extracted from speech should contain information that is between text coding and acoustic coding. The linguistic content is salient, while the paralinguistic information such as speaker identity and acoustic details should be removed. However, existing methods for extracting fine-grained intermediate representations from speech suffer from issues of excessive redundancy and dimension explosion. Additionally, existing contrastive learning methods in the audio field focus on extracting global descriptive information for downstream audio classification tasks, making them unsuitable for TTS, VC, and ASR tasks. To address these issues, we propose a method named Contrastive Phoneme-Speech Pretraining (CPSP), which uses three encoders, one decoder, and contrastive learning to bring phoneme and speech into a joint multimodal space, learning how to connect phoneme and speech at the frame level. The CPSP model is trained on 210k speech and phoneme text pairs, achieving minimally-supervised TTS, VC, and ASR. The proposed CPSP method offers a promising solution for fine-grained generation and recognition downstream tasks in speech processing. We provide a website with audio samples.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge