"Information Extraction": models, code, and papers

Leveraging deep active learning to identify low-resource mobility functioning information in public clinical notes

Nov 27, 2023Function is increasingly recognized as an important indicator of whole-person health, although it receives little attention in clinical natural language processing research. We introduce the first public annotated dataset specifically on the Mobility domain of the International Classification of Functioning, Disability and Health (ICF), aiming to facilitate automatic extraction and analysis of functioning information from free-text clinical notes. We utilize the National NLP Clinical Challenges (n2c2) research dataset to construct a pool of candidate sentences using keyword expansion. Our active learning approach, using query-by-committee sampling weighted by density representativeness, selects informative sentences for human annotation. We train BERT and CRF models, and use predictions from these models to guide the selection of new sentences for subsequent annotation iterations. Our final dataset consists of 4,265 sentences with a total of 11,784 entities, including 5,511 Action entities, 5,328 Mobility entities, 306 Assistance entities, and 639 Quantification entities. The inter-annotator agreement (IAA), averaged over all entity types, is 0.72 for exact matching and 0.91 for partial matching. We also train and evaluate common BERT models and state-of-the-art Nested NER models. The best F1 scores are 0.84 for Action, 0.7 for Mobility, 0.62 for Assistance, and 0.71 for Quantification. Empirical results demonstrate promising potential of NER models to accurately extract mobility functioning information from clinical text. The public availability of our annotated dataset will facilitate further research to comprehensively capture functioning information in electronic health records (EHRs).

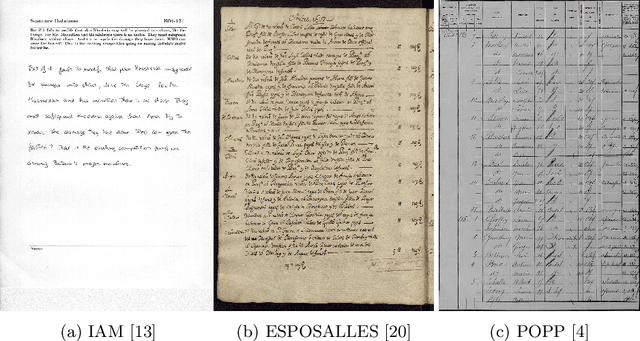

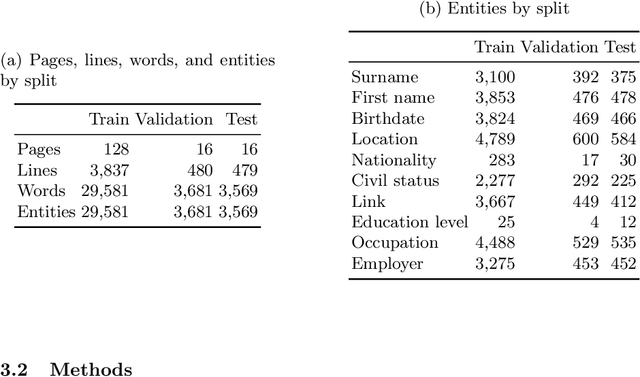

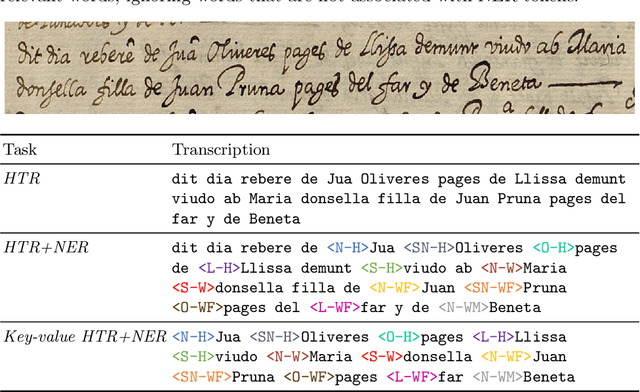

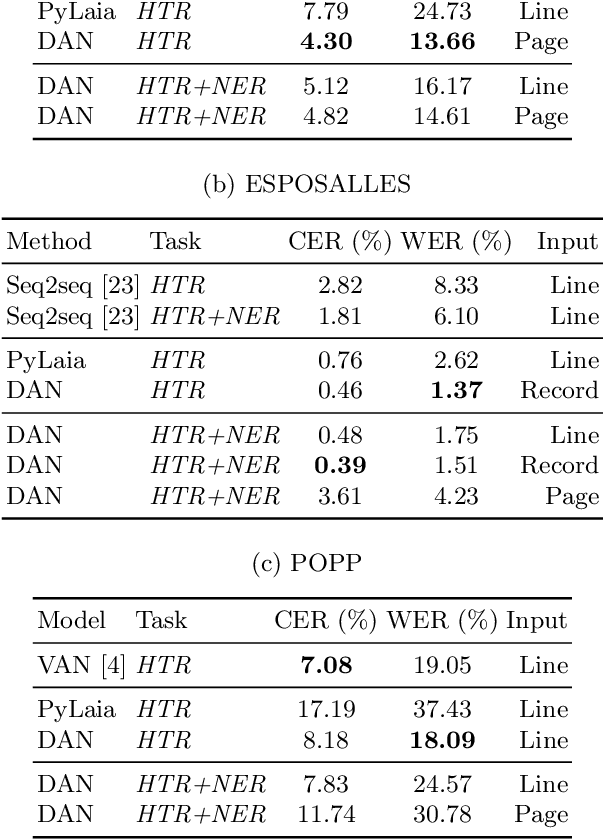

Key-value information extraction from full handwritten pages

Apr 26, 2023

We propose a Transformer-based approach for information extraction from digitized handwritten documents. Our approach combines, in a single model, the different steps that were so far performed by separate models: feature extraction, handwriting recognition and named entity recognition. We compare this integrated approach with traditional two-stage methods that perform handwriting recognition before named entity recognition, and present results at different levels: line, paragraph, and page. Our experiments show that attention-based models are especially interesting when applied on full pages, as they do not require any prior segmentation step. Finally, we show that they are able to learn from key-value annotations: a list of important words with their corresponding named entities. We compare our models to state-of-the-art methods on three public databases (IAM, ESPOSALLES, and POPP) and outperform previous performances on all three datasets.

Extracting individual variable information for their decoupling, direct mutual information and multi-feature Granger causality

Nov 22, 2023Working with multiple variables they usually contain difficult to control complex dependencies. This article proposes extraction of their individual information, e.g. $\overline{X|Y}$ as random variable containing information from $X$, but with removed information about $Y$, by using $(x,y) \leftrightarrow (\bar{x}=\textrm{CDF}_{X|Y=y}(x),y)$ reversible normalization. One application can be decoupling of individual information of variables: reversibly transform $(X_1,\ldots,X_n)\leftrightarrow(\tilde{X}_1,\ldots \tilde{X}_n)$ together containing the same information, but being independent: $\forall_{i\neq j} \tilde{X}_i\perp \tilde{X}_j, \tilde{X}_i\perp X_j$. It requires detailed models of complex conditional probability distributions - it is generally a difficult task, but here can be done through multiple dependency reducing iterations, using imperfect methods (here HCR: Hierarchical Correlation Reconstruction). It could be also used for direct mutual information - evaluating direct information transfer: without use of intermediate variables. For causality direction there is discussed multi-feature Granger causality, e.g. to trace various types of individual information transfers between such decoupled variables, including propagation time (delay).

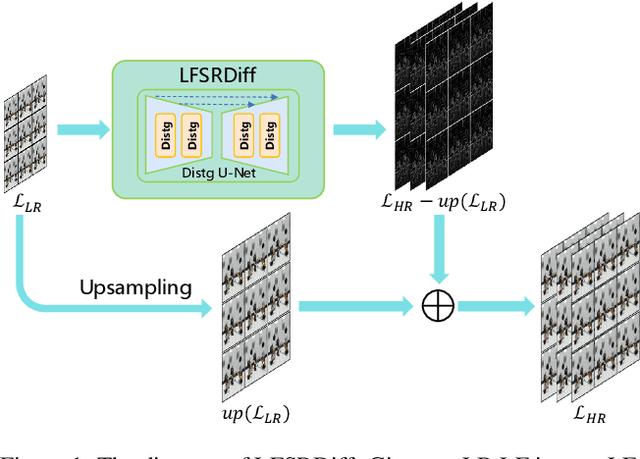

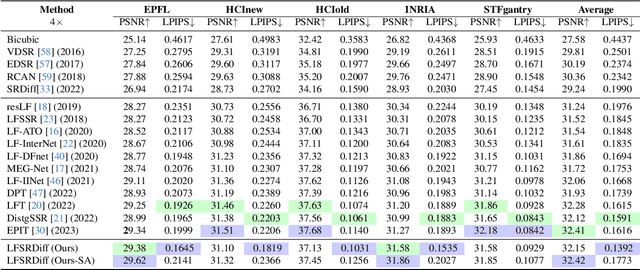

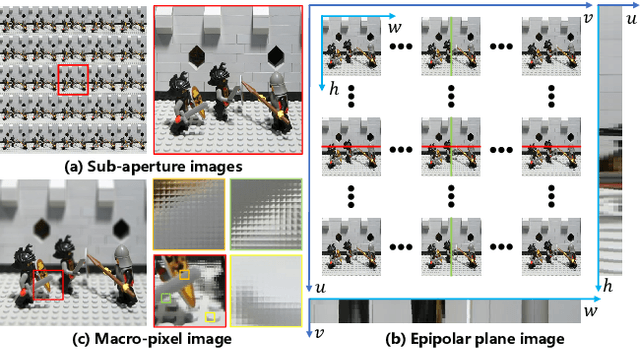

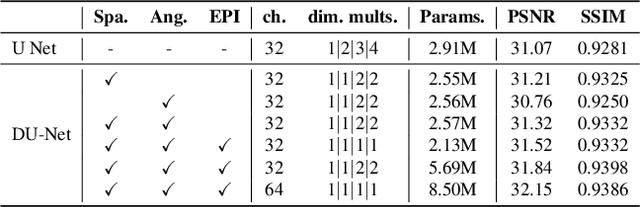

LFSRDiff: Light Field Image Super-Resolution via Diffusion Models

Nov 27, 2023

Light field (LF) image super-resolution (SR) is a challenging problem due to its inherent ill-posed nature, where a single low-resolution (LR) input LF image can correspond to multiple potential super-resolved outcomes. Despite this complexity, mainstream LF image SR methods typically adopt a deterministic approach, generating only a single output supervised by pixel-wise loss functions. This tendency often results in blurry and unrealistic results. Although diffusion models can capture the distribution of potential SR results by iteratively predicting Gaussian noise during the denoising process, they are primarily designed for general images and struggle to effectively handle the unique characteristics and information present in LF images. To address these limitations, we introduce LFSRDiff, the first diffusion-based LF image SR model, by incorporating the LF disentanglement mechanism. Our novel contribution includes the introduction of a disentangled U-Net for diffusion models, enabling more effective extraction and fusion of both spatial and angular information within LF images. Through comprehensive experimental evaluations and comparisons with the state-of-the-art LF image SR methods, the proposed approach consistently produces diverse and realistic SR results. It achieves the highest perceptual metric in terms of LPIPS. It also demonstrates the ability to effectively control the trade-off between perception and distortion. The code is available at \url{https://github.com/chaowentao/LFSRDiff}.

Multi-scale Semantic Correlation Mining for Visible-Infrared Person Re-Identification

Nov 24, 2023The main challenge in the Visible-Infrared Person Re-Identification (VI-ReID) task lies in how to extract discriminative features from different modalities for matching purposes. While the existing well works primarily focus on minimizing the modal discrepancies, the modality information can not thoroughly be leveraged. To solve this problem, a Multi-scale Semantic Correlation Mining network (MSCMNet) is proposed to comprehensively exploit semantic features at multiple scales and simultaneously reduce modality information loss as small as possible in feature extraction. The proposed network contains three novel components. Firstly, after taking into account the effective utilization of modality information, the Multi-scale Information Correlation Mining Block (MIMB) is designed to explore semantic correlations across multiple scales. Secondly, in order to enrich the semantic information that MIMB can utilize, a quadruple-stream feature extractor (QFE) with non-shared parameters is specifically designed to extract information from different dimensions of the dataset. Finally, the Quadruple Center Triplet Loss (QCT) is further proposed to address the information discrepancy in the comprehensive features. Extensive experiments on the SYSU-MM01, RegDB, and LLCM datasets demonstrate that the proposed MSCMNet achieves the greatest accuracy.

Empirical Study of Zero-Shot NER with ChatGPT

Oct 16, 2023Large language models (LLMs) exhibited powerful capability in various natural language processing tasks. This work focuses on exploring LLM performance on zero-shot information extraction, with a focus on the ChatGPT and named entity recognition (NER) task. Inspired by the remarkable reasoning capability of LLM on symbolic and arithmetic reasoning, we adapt the prevalent reasoning methods to NER and propose reasoning strategies tailored for NER. First, we explore a decomposed question-answering paradigm by breaking down the NER task into simpler subproblems by labels. Second, we propose syntactic augmentation to stimulate the model's intermediate thinking in two ways: syntactic prompting, which encourages the model to analyze the syntactic structure itself, and tool augmentation, which provides the model with the syntactic information generated by a parsing tool. Besides, we adapt self-consistency to NER by proposing a two-stage majority voting strategy, which first votes for the most consistent mentions, then the most consistent types. The proposed methods achieve remarkable improvements for zero-shot NER across seven benchmarks, including Chinese and English datasets, and on both domain-specific and general-domain scenarios. In addition, we present a comprehensive analysis of the error types with suggestions for optimization directions. We also verify the effectiveness of the proposed methods on the few-shot setting and other LLMs.

Constructing a Knowledge Graph for Vietnamese Legal Cases with Heterogeneous Graphs

Sep 16, 2023

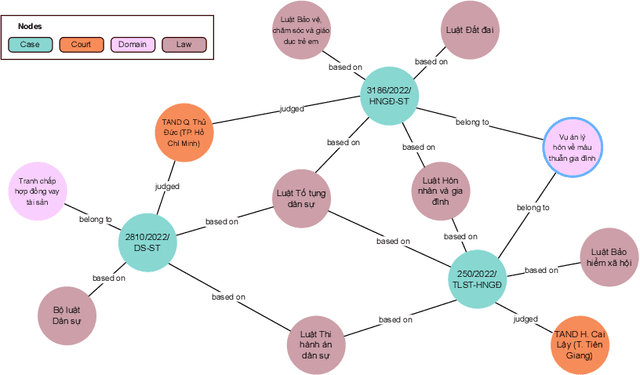

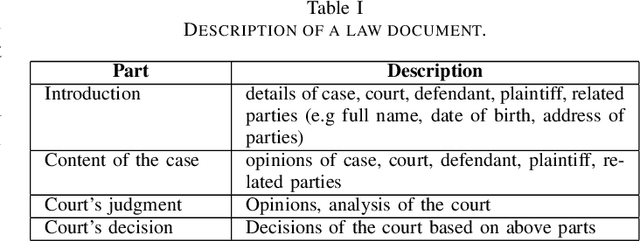

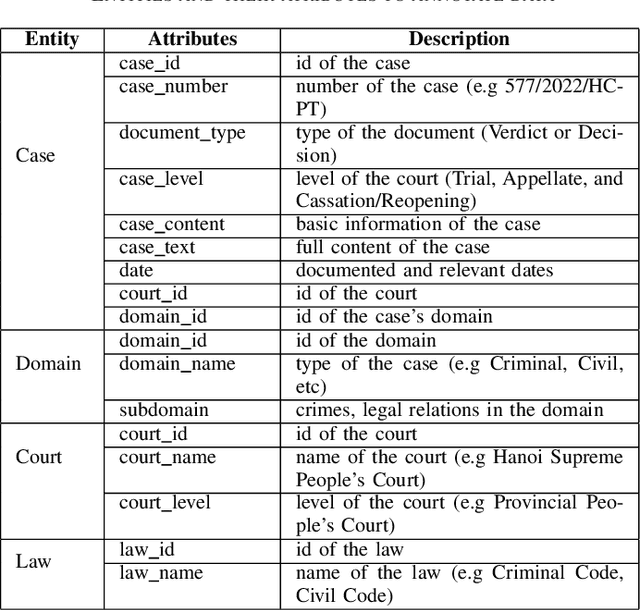

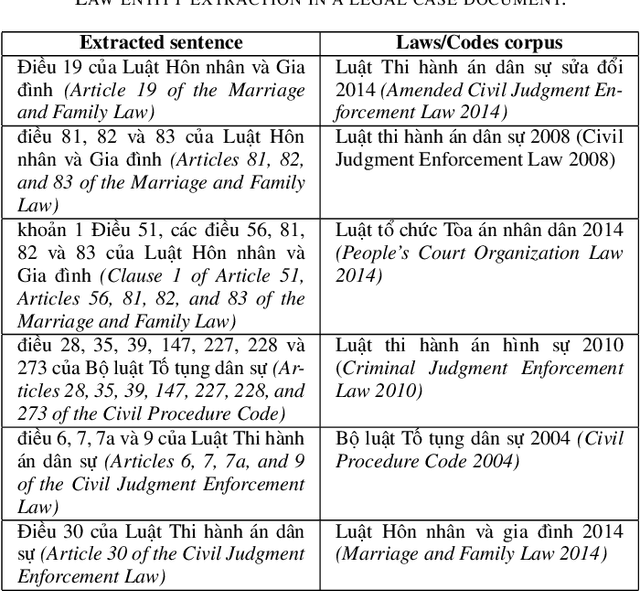

This paper presents a knowledge graph construction method for legal case documents and related laws, aiming to organize legal information efficiently and enhance various downstream tasks. Our approach consists of three main steps: data crawling, information extraction, and knowledge graph deployment. First, the data crawler collects a large corpus of legal case documents and related laws from various sources, providing a rich database for further processing. Next, the information extraction step employs natural language processing techniques to extract entities such as courts, cases, domains, and laws, as well as their relationships from the unstructured text. Finally, the knowledge graph is deployed, connecting these entities based on their extracted relationships, creating a heterogeneous graph that effectively represents legal information and caters to users such as lawyers, judges, and scholars. The established baseline model leverages unsupervised learning methods, and by incorporating the knowledge graph, it demonstrates the ability to identify relevant laws for a given legal case. This approach opens up opportunities for various applications in the legal domain, such as legal case analysis, legal recommendation, and decision support.

Information Extraction in Domain and Generic Documents: Findings from Heuristic-based and Data-driven Approaches

Jun 30, 2023Information extraction (IE) plays very important role in natural language processing (NLP) and is fundamental to many NLP applications that used to extract structured information from unstructured text data. Heuristic-based searching and data-driven learning are two main stream implementation approaches. However, no much attention has been paid to document genre and length influence on IE tasks. To fill the gap, in this study, we investigated the accuracy and generalization abilities of heuristic-based searching and data-driven to perform two IE tasks: named entity recognition (NER) and semantic role labeling (SRL) on domain-specific and generic documents with different length. We posited two hypotheses: first, short documents may yield better accuracy results compared to long documents; second, generic documents may exhibit superior extraction outcomes relative to domain-dependent documents due to training document genre limitations. Our findings reveals that no single method demonstrated overwhelming performance in both tasks. For named entity extraction, data-driven approaches outperformed symbolic methods in terms of accuracy, particularly in short texts. In the case of semantic roles extraction, we observed that heuristic-based searching method and data-driven based model with syntax representation surpassed the performance of pure data-driven approach which only consider semantic information. Additionally, we discovered that different semantic roles exhibited varying accuracy levels with the same method. This study offers valuable insights for downstream text mining tasks, such as NER and SRL, when addressing various document features and genres.

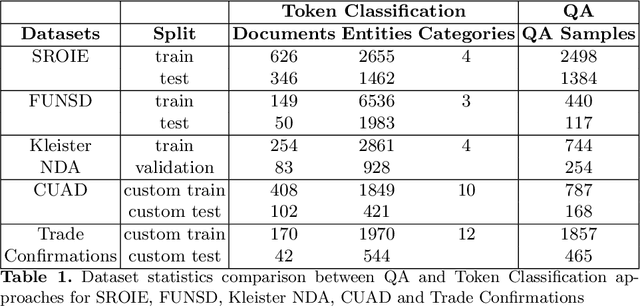

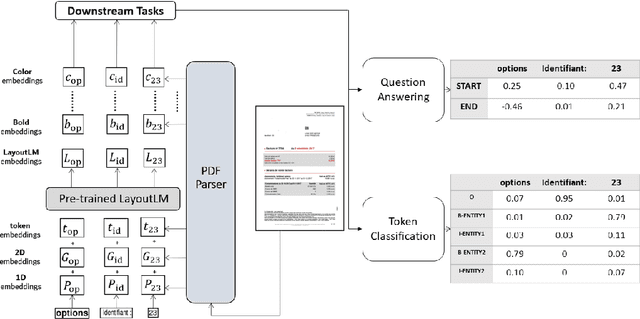

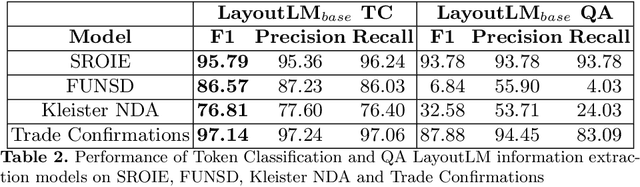

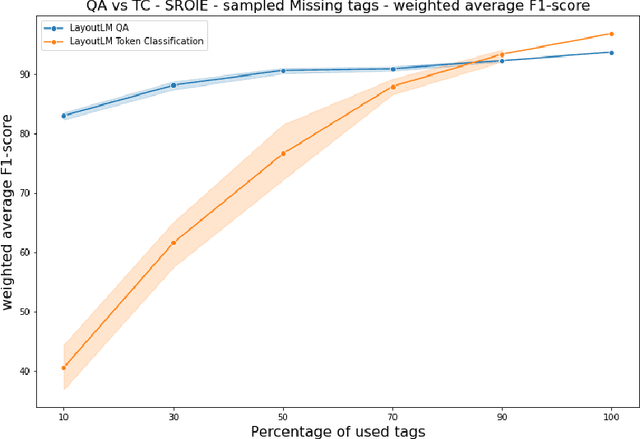

Information Extraction from Documents: Question Answering vs Token Classification in real-world setups

Apr 21, 2023

Research in Document Intelligence and especially in Document Key Information Extraction (DocKIE) has been mainly solved as Token Classification problem. Recent breakthroughs in both natural language processing (NLP) and computer vision helped building document-focused pre-training methods, leveraging a multimodal understanding of the document text, layout and image modalities. However, these breakthroughs also led to the emergence of a new DocKIE subtask of extractive document Question Answering (DocQA), as part of the Machine Reading Comprehension (MRC) research field. In this work, we compare the Question Answering approach with the classical token classification approach for document key information extraction. We designed experiments to benchmark five different experimental setups : raw performances, robustness to noisy environment, capacity to extract long entities, fine-tuning speed on Few-Shot Learning and finally Zero-Shot Learning. Our research showed that when dealing with clean and relatively short entities, it is still best to use token classification-based approach, while the QA approach could be a good alternative for noisy environment or long entities use-cases.

Graph-Guided Reasoning for Multi-Hop Question Answering in Large Language Models

Nov 16, 2023Chain-of-Thought (CoT) prompting has boosted the multi-step reasoning capabilities of Large Language Models (LLMs) by generating a series of rationales before the final answer. We analyze the reasoning paths generated by CoT and find two issues in multi-step reasoning: (i) Generating rationales irrelevant to the question, (ii) Unable to compose subquestions or queries for generating/retrieving all the relevant information. To address them, we propose a graph-guided CoT prompting method, which guides the LLMs to reach the correct answer with graph representation/verification steps. Specifically, we first leverage LLMs to construct a "question/rationale graph" by using knowledge extraction prompting given the initial question and the rationales generated in the previous steps. Then, the graph verification step diagnoses the current rationale triplet by comparing it with the existing question/rationale graph to filter out irrelevant rationales and generate follow-up questions to obtain relevant information. Additionally, we generate CoT paths that exclude the extracted graph information to represent the context information missed from the graph extraction. Our graph-guided reasoning method shows superior performance compared to previous CoT prompting and the variants on multi-hop question answering benchmark datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge