"Image": models, code, and papers

VolterraNet: A higher order convolutional network with group equivariance for homogeneous manifolds

Jun 05, 2021

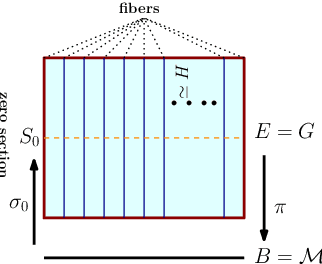

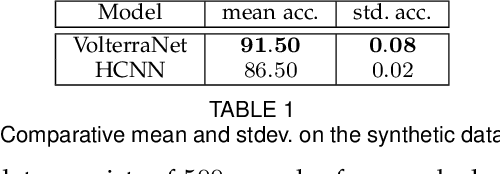

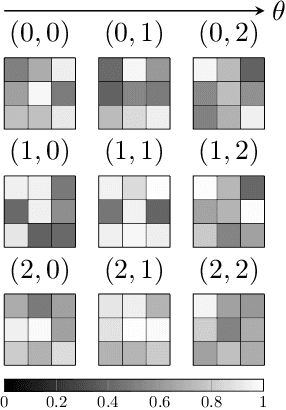

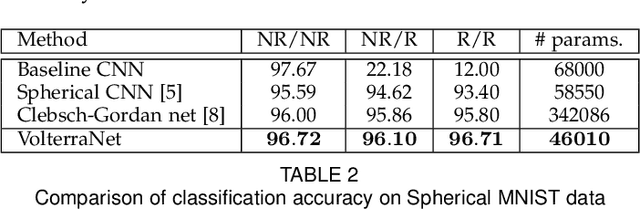

Convolutional neural networks have been highly successful in image-based learning tasks due to their translation equivariance property. Recent work has generalized the traditional convolutional layer of a convolutional neural network to non-Euclidean spaces and shown group equivariance of the generalized convolution operation. In this paper, we present a novel higher order Volterra convolutional neural network (VolterraNet) for data defined as samples of functions on Riemannian homogeneous spaces. Analagous to the result for traditional convolutions, we prove that the Volterra functional convolutions are equivariant to the action of the isometry group admitted by the Riemannian homogeneous spaces, and under some restrictions, any non-linear equivariant function can be expressed as our homogeneous space Volterra convolution, generalizing the non-linear shift equivariant characterization of Volterra expansions in Euclidean space. We also prove that second order functional convolution operations can be represented as cascaded convolutions which leads to an efficient implementation. Beyond this, we also propose a dilated VolterraNet model. These advances lead to large parameter reductions relative to baseline non-Euclidean CNNs. To demonstrate the efficacy of the VolterraNet performance, we present several real data experiments involving classification tasks on spherical-MNIST, atomic energy, Shrec17 data sets, and group testing on diffusion MRI data. Performance comparisons to the state-of-the-art are also presented.

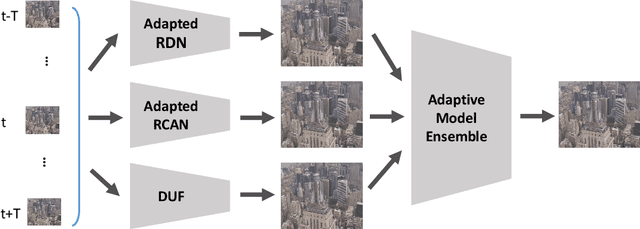

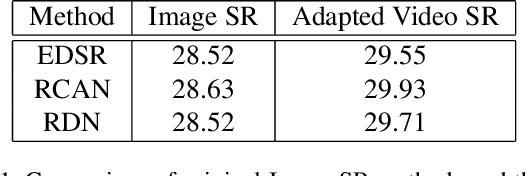

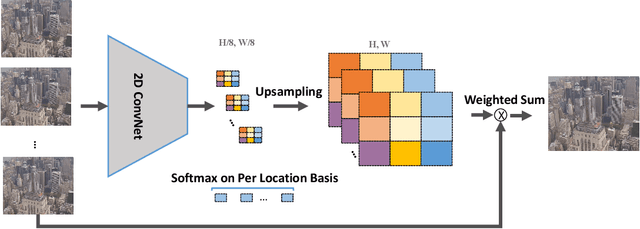

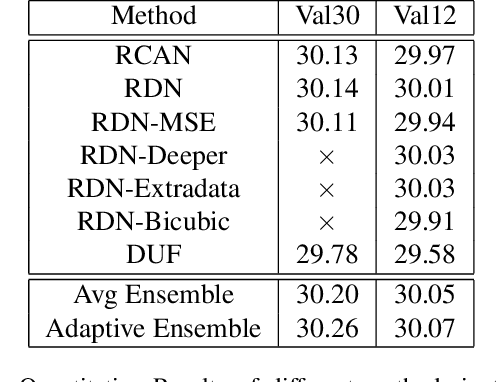

Adapting Image Super-Resolution State-of-the-arts and Learning Multi-model Ensemble for Video Super-Resolution

May 07, 2019

Recently, image super-resolution has been widely studied and achieved significant progress by leveraging the power of deep convolutional neural networks. However, there has been limited advancement in video super-resolution (VSR) due to the complex temporal patterns in videos. In this paper, we investigate how to adapt state-of-the-art methods of image super-resolution for video super-resolution. The proposed adapting method is straightforward. The information among successive frames is well exploited, while the overhead on the original image super-resolution method is negligible. Furthermore, we propose a learning-based method to ensemble the outputs from multiple super-resolution models. Our methods show superior performance and rank second in the NTIRE2019 Video Super-Resolution Challenge Track 1.

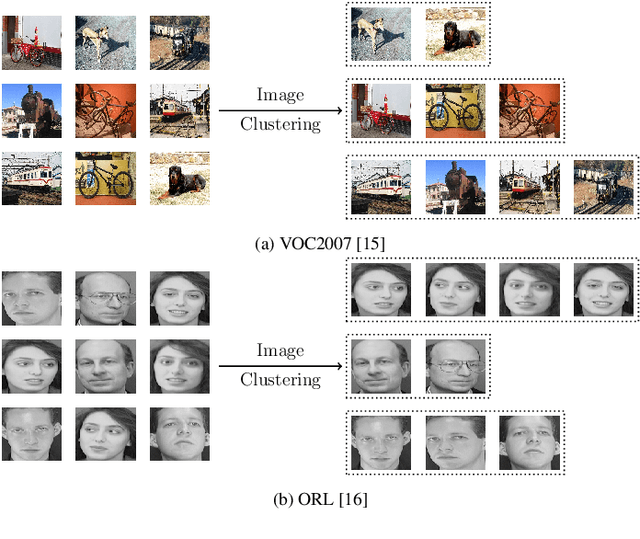

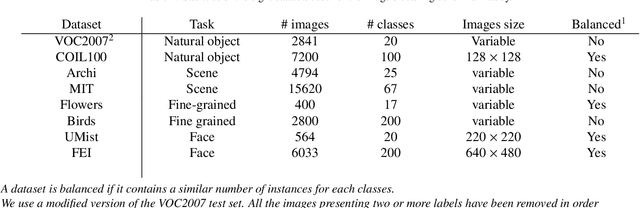

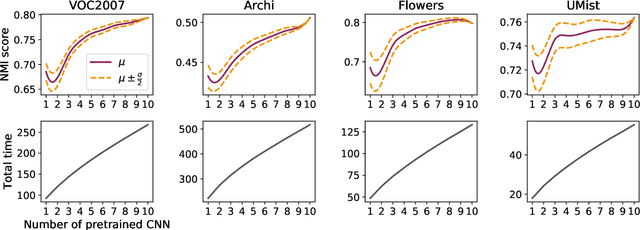

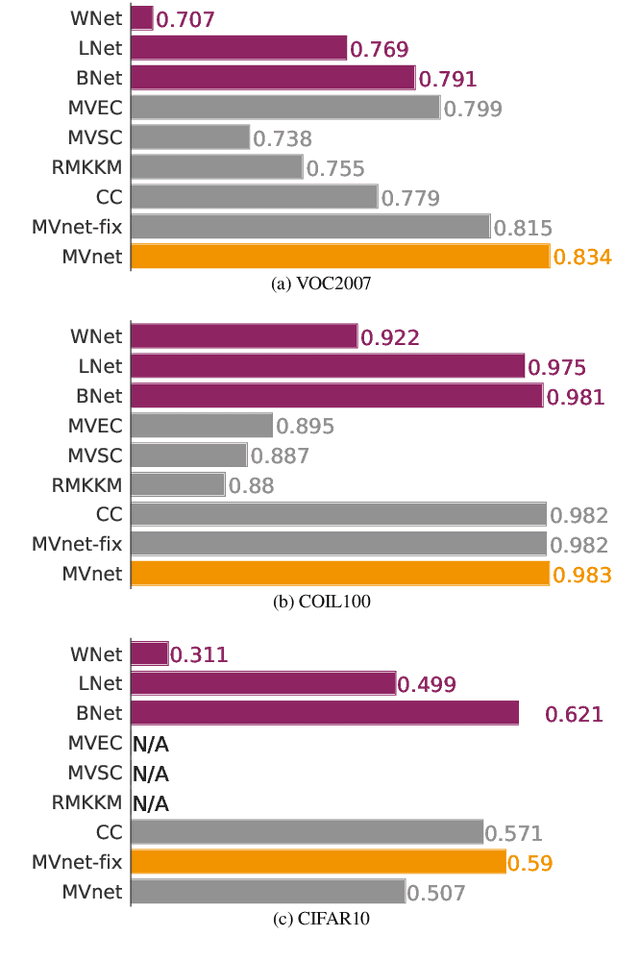

Combining pretrained CNN feature extractors to enhance clustering of complex natural images

Jan 07, 2021

Recently, a common starting point for solving complex unsupervised image classification tasks is to use generic features, extracted with deep Convolutional Neural Networks (CNN) pretrained on a large and versatile dataset (ImageNet). However, in most research, the CNN architecture for feature extraction is chosen arbitrarily, without justification. This paper aims at providing insight on the use of pretrained CNN features for image clustering (IC). First, extensive experiments are conducted and show that, for a given dataset, the choice of the CNN architecture for feature extraction has a huge impact on the final clustering. These experiments also demonstrate that proper extractor selection for a given IC task is difficult. To solve this issue, we propose to rephrase the IC problem as a multi-view clustering (MVC) problem that considers features extracted from different architectures as different "views" of the same data. This approach is based on the assumption that information contained in the different CNN may be complementary, even when pretrained on the same data. We then propose a multi-input neural network architecture that is trained end-to-end to solve the MVC problem effectively. This approach is tested on nine natural image datasets, and produces state-of-the-art results for IC.

* 21 pages, 16 figures, 10 tables, preprint of our paper published in Neurocomputing

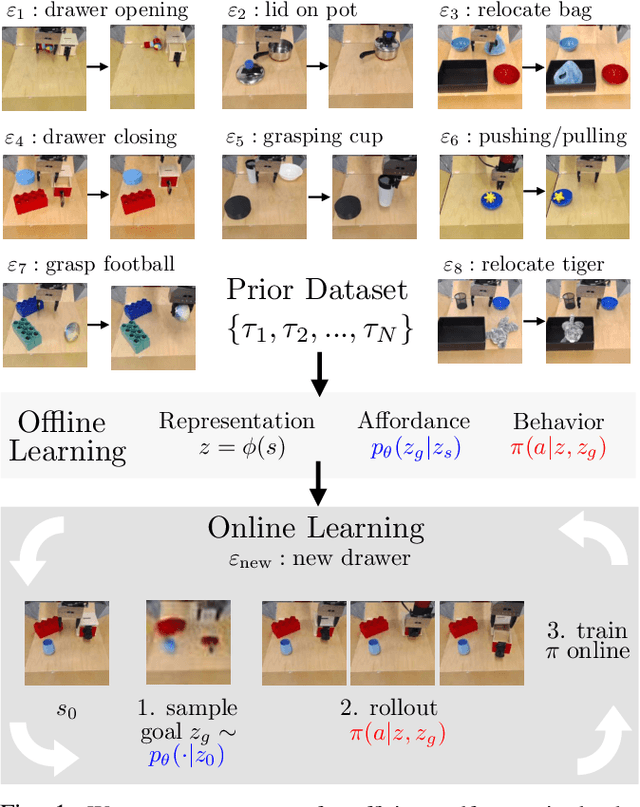

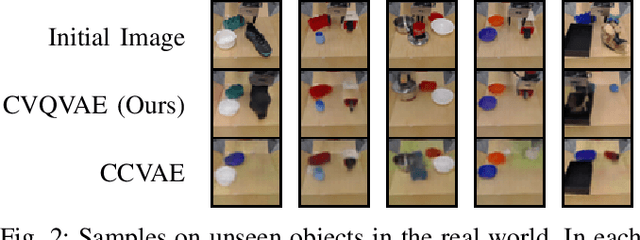

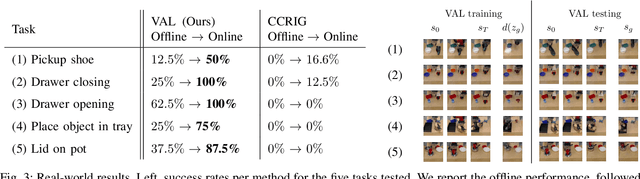

What Can I Do Here? Learning New Skills by Imagining Visual Affordances

Jun 13, 2021

A generalist robot equipped with learned skills must be able to perform many tasks in many different environments. However, zero-shot generalization to new settings is not always possible. When the robot encounters a new environment or object, it may need to finetune some of its previously learned skills to accommodate this change. But crucially, previously learned behaviors and models should still be suitable to accelerate this relearning. In this paper, we aim to study how generative models of possible outcomes can allow a robot to learn visual representations of affordances, so that the robot can sample potentially possible outcomes in new situations, and then further train its policy to achieve those outcomes. In effect, prior data is used to learn what kinds of outcomes may be possible, such that when the robot encounters an unfamiliar setting, it can sample potential outcomes from its model, attempt to reach them, and thereby update both its skills and its outcome model. This approach, visuomotor affordance learning (VAL), can be used to train goal-conditioned policies that operate on raw image inputs, and can rapidly learn to manipulate new objects via our proposed affordance-directed exploration scheme. We show that VAL can utilize prior data to solve real-world tasks such drawer opening, grasping, and placing objects in new scenes with only five minutes of online experience in the new scene.

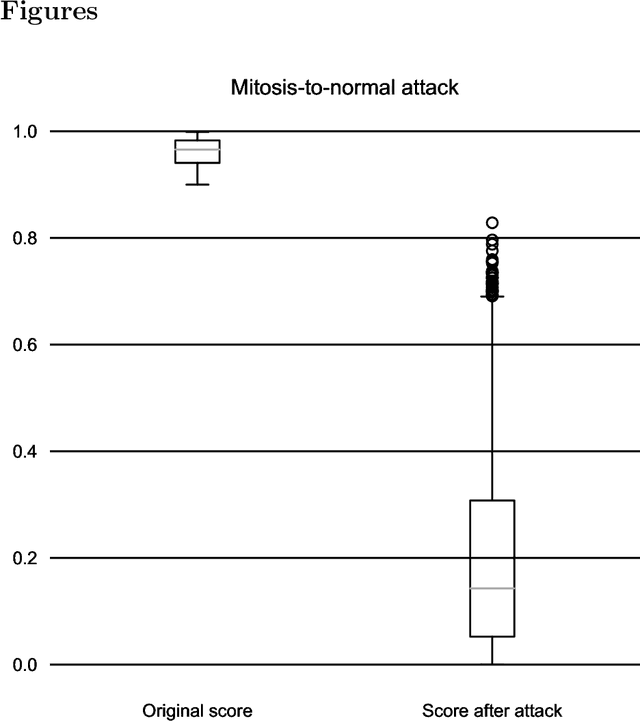

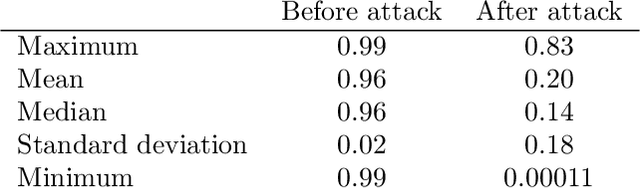

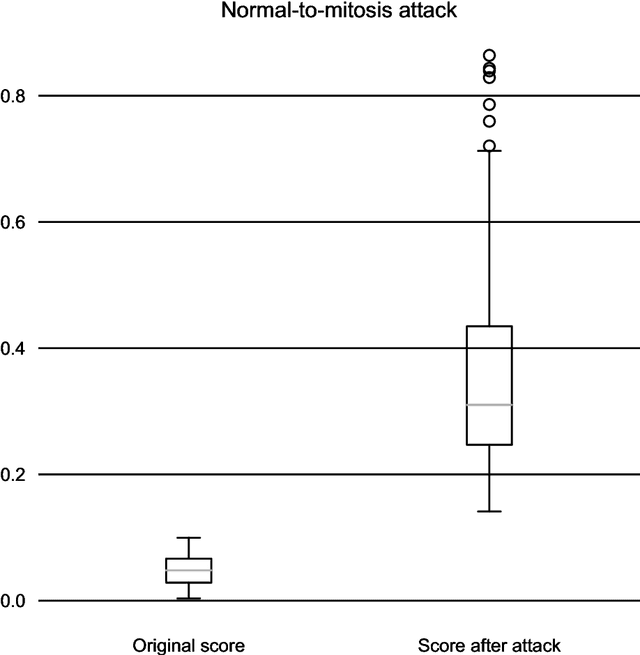

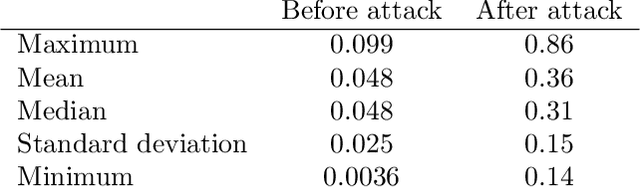

One-Pixel Attack Deceives Automatic Detection of Breast Cancer

Dec 01, 2020

In this article we demonstrate that a state-of-the-art machine learning model predicting whether a whole slide image contains mitosis can be fooled by changing just a single pixel in the input image. Computer vision and machine learning can be used to automate various tasks in cancer diagnostic and detection. If an attacker can manipulate the automated processing, the results can be devastating and in the worst case lead to wrong diagnostic and treatments. In this research one-pixel attack is demonstrated in a real-life scenario with a real tumor dataset. The results indicate that a minor one-pixel modification of a whole slide image under analysis can affect the diagnosis. The attack poses a threat from the cyber security perspective: the one-pixel method can be used as an attack vector by a motivated attacker.

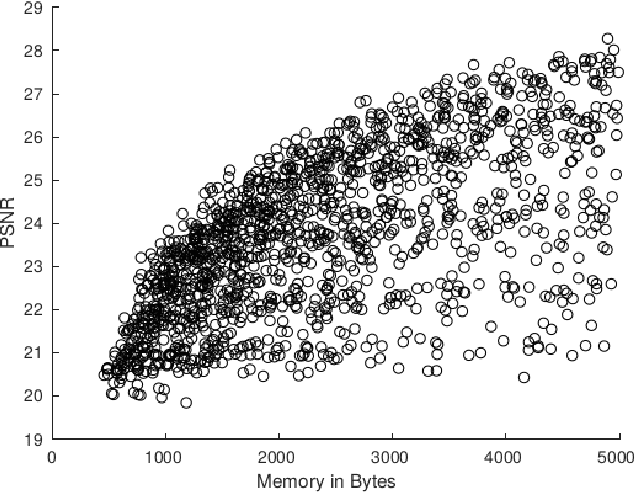

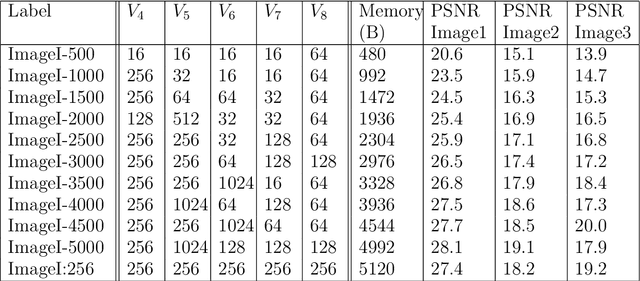

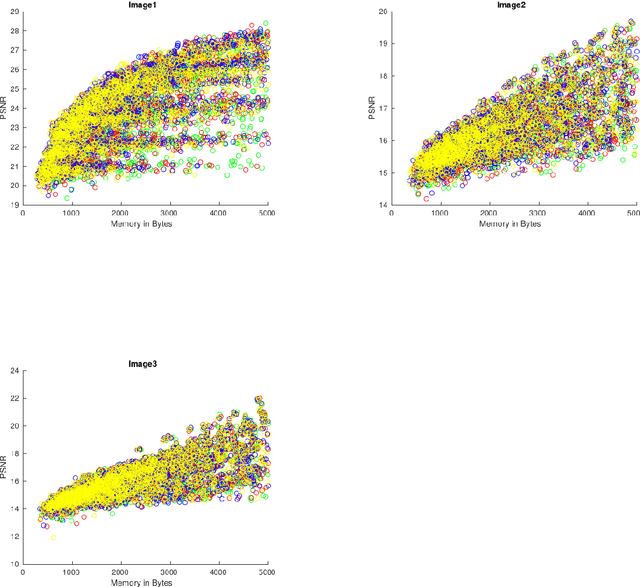

Extreme compression of grayscale images

Sep 21, 2020

Given an grayscale digital image, and a positive integer $n$, how well can we store the image at a compression ratio of $n:1$? In this paper we address the above question in extreme cases when $n>>50$ using "$\mathbf{V}$-variable image compression".

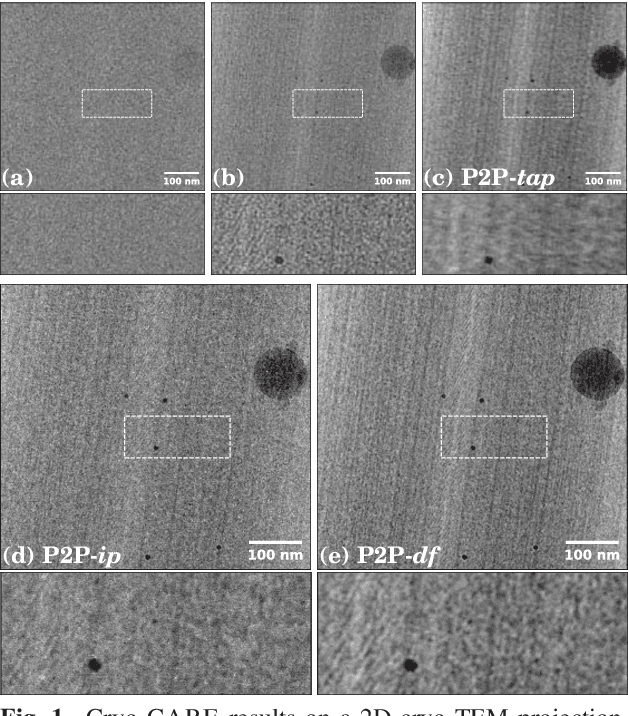

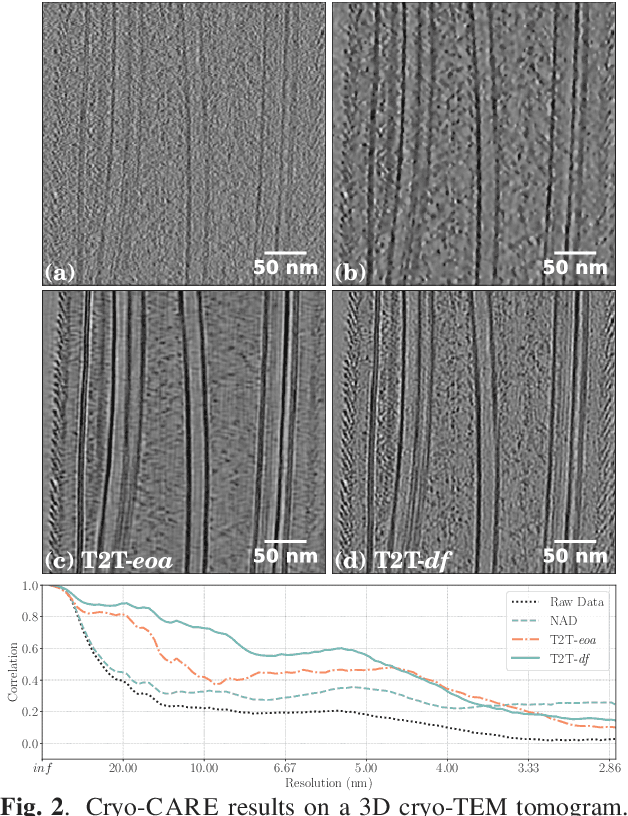

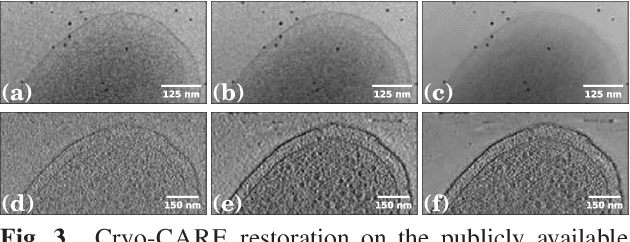

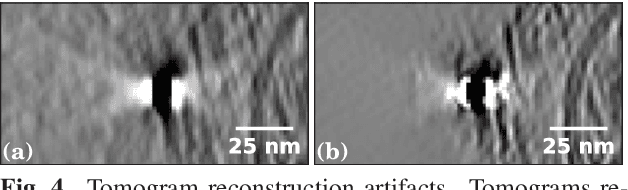

Cryo-CARE: Content-Aware Image Restoration for Cryo-Transmission Electron Microscopy Data

Oct 15, 2018

Multiple approaches to use deep learning for image restoration have recently been proposed. Training such approaches requires well registered pairs of high and low quality images. While this is easily achievable for many imaging modalities, e.g. fluorescence light microscopy, for others it is not. Cryo-transmission electron microscopy (cryo-TEM) could profoundly benefit from improved denoising methods, unfortunately it is one of the latter. Here we show how recent advances in network training for image restoration tasks, i.e. denoising, can be applied to cryo-TEM data. We describe our proposed method and show how it can be applied to single cryo-TEM projections and whole cryo-tomographic image volumes. Our proposed restoration method dramatically increases contrast in cryo-TEM images, which improves the interpretability of the acquired data. Furthermore we show that automated downstream processing on restored image data, demonstrated on a dense segmentation task, leads to improved results.

Generative Adversarial Networks (GANs) in Networking: A Comprehensive Survey & Evaluation

May 10, 2021

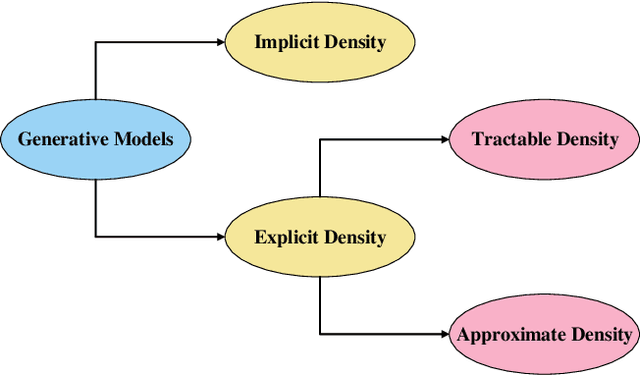

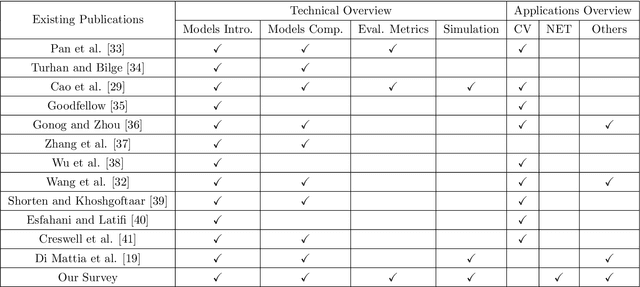

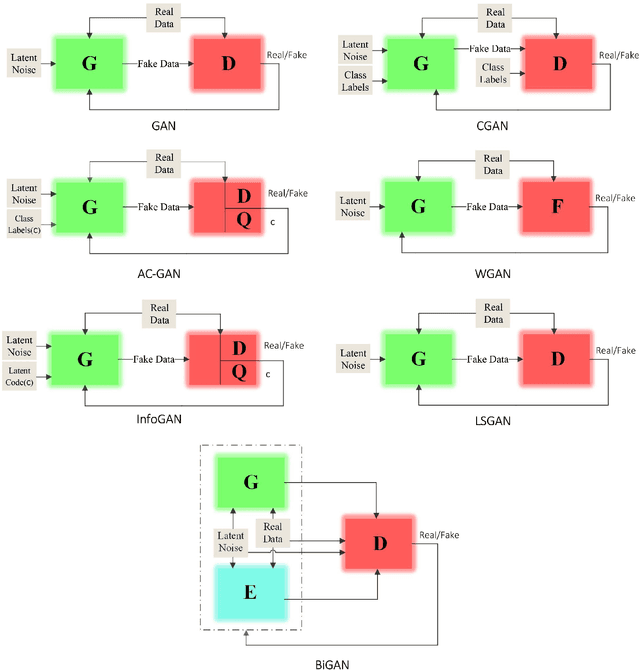

Despite the recency of their conception, Generative Adversarial Networks (GANs) constitute an extensively researched machine learning sub-field for the creation of synthetic data through deep generative modeling. GANs have consequently been applied in a number of domains, most notably computer vision, in which they are typically used to generate or transform synthetic images. Given their relative ease of use, it is therefore natural that researchers in the field of networking (which has seen extensive application of deep learning methods) should take an interest in GAN-based approaches. The need for a comprehensive survey of such activity is therefore urgent. In this paper, we demonstrate how this branch of machine learning can benefit multiple aspects of computer and communication networks, including mobile networks, network analysis, internet of things, physical layer, and cybersecurity. In doing so, we shall provide a novel evaluation framework for comparing the performance of different models in non-image applications, applying this to a number of reference network datasets.

TransFG: A Transformer Architecture for Fine-grained Recognition

Mar 28, 2021

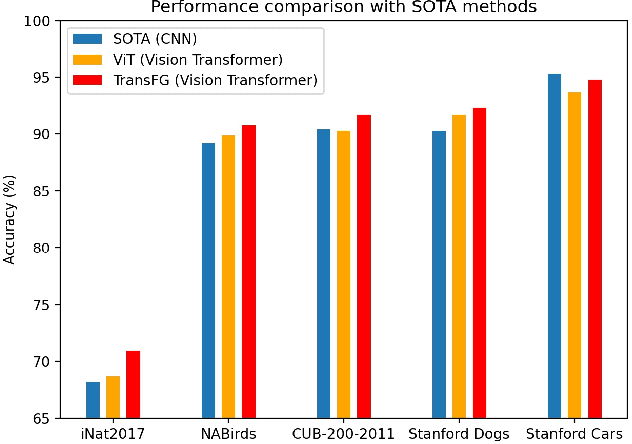

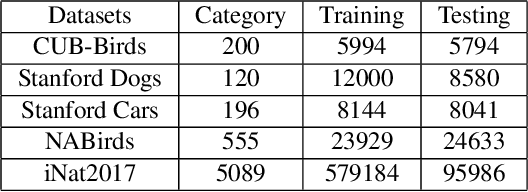

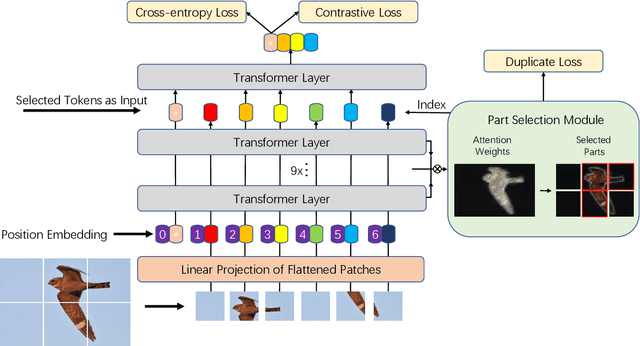

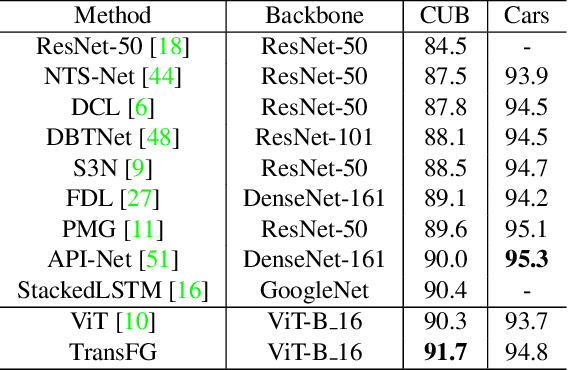

Fine-grained visual classification (FGVC) which aims at recognizing objects from subcategories is a very challenging task due to the inherently subtle inter-class differences. Recent works mainly tackle this problem by focusing on how to locate the most discriminative image regions and rely on them to improve the capability of networks to capture subtle variances. Most of these works achieve this by re-using the backbone network to extract features of selected regions. However, this strategy inevitably complicates the pipeline and pushes the proposed regions to contain most parts of the objects. Recently, vision transformer (ViT) shows its strong performance in the traditional classification task. The self-attention mechanism of the transformer links every patch token to the classification token. The strength of the attention link can be intuitively considered as an indicator of the importance of tokens. In this work, we propose a novel transformer-based framework TransFG where we integrate all raw attention weights of the transformer into an attention map for guiding the network to effectively and accurately select discriminative image patches and compute their relations. A contrastive loss is applied to further enlarge the distance between feature representations of similar sub-classes. We demonstrate the value of TransFG by conducting experiments on five popular fine-grained benchmarks: CUB-200-2011, Stanford Cars, Stanford Dogs, NABirds and iNat2017 where we achieve state-of-the-art performance. Qualitative results are presented for better understanding of our model. Code is available at https://github.com/TACJu/TransFG.

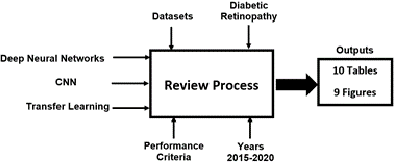

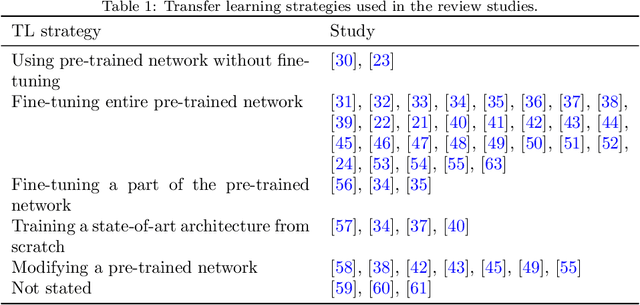

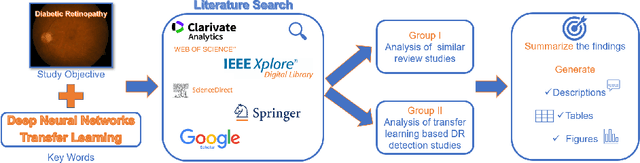

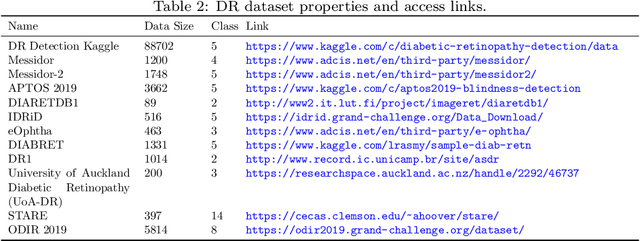

A systematic review of transfer learning based approaches for diabetic retinopathy detection

May 28, 2021

Cases of diabetes and related diabetic retinopathy (DR) have been increasing at an alarming rate in modern times. Early detection of DR is an important problem since it may cause permanent blindness in the late stages. In the last two decades, many different approaches have been applied in DR detection. Reviewing academic literature shows that deep neural networks (DNNs) have become the most preferred approach for DR detection. Among these DNN approaches, Convolutional Neural Network (CNN) models are the most used ones in the field of medical image classification. Designing a new CNN architecture is a tedious and time-consuming approach. Additionally, training an enormous number of parameters is also a difficult task. Due to this reason, instead of training CNNs from scratch, using pre-trained models has been suggested in recent years as transfer learning approach. Accordingly, the present study as a review focuses on DNN and Transfer Learning based applications of DR detection considering 38 publications between 2015 and 2020. The published papers are summarized using 9 figures and 10 tables, giving information about 22 pre-trained CNN models, 12 DR data sets and standard performance metrics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge