"Image": models, code, and papers

Few-Shot Real Image Restoration via Distortion-Relation Guided Transfer Learning

Nov 25, 2021

Collecting large sets of clean-distorted training image pairs in the real world is non-trivial, which seriously limits the practical application of supervised-learning-based image restoration (IR) methods. Previous works attempt to address this problem by leveraging unsupervised learning to reduce the dependency on paired training samples. However, these methods typically suffer from unsatisfactory texture synthesis due to the lack of clean image supervision. Compared with purely unsupervised solutions, the under-explored scheme with Few-Shot clean images (FS-IR) is a more feasible way to tackle this challenging real image restoration task. In this paper, we are the first to investigate few-shot real image restoration and propose a Distortion-Relation guided Transfer Learning (termed DRTL) framework. DRTL assigns a knowledge graph to capture the distortion relation between auxiliary tasks (i.e., synthetic distortions) and target tasks (i.e., real distortions with few images), and then adopts a gradient weighting strategy to guide the knowledge transfer from the auxiliary tasks to the target task. In this way, DRTL can quickly learn the most relevant knowledge from the prior distortions for the target distortion. We instantiate DRTL integrated with pre-training and meta-learning pipelines as an embodiment to realize a distortion-relation aware FS-IR. Extensive experiments on multiple benchmarks demonstrate the effectiveness of DRTL on few-shot real image restoration.
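The gradient weighting idea can be illustrated with a toy sketch. This is not the DRTL implementation: the softmax weighting, the `temperature` parameter, the relation scores, and the function names are all assumptions made for illustration. It only shows how auxiliary-task gradients might be combined so that distortions more related to the target contribute more.

```python
import math

def relation_weights(relation_scores, temperature=1.0):
    """Turn hypothetical distortion-relation scores into weights that sum to 1 (softmax)."""
    top = max(relation_scores)  # subtract the max for numerical stability
    exps = [math.exp((s - top) / temperature) for s in relation_scores]
    total = sum(exps)
    return [e / total for e in exps]

def weighted_gradient(aux_grads, weights):
    """Combine per-task gradient vectors as a weighted sum, one entry per parameter."""
    dim = len(aux_grads[0])
    return [sum(w * g[d] for w, g in zip(weights, aux_grads)) for d in range(dim)]

# Hypothetical relation scores between three synthetic distortions and the target.
scores = [2.0, 0.5, -1.0]
# Hypothetical gradients of each auxiliary task with respect to two shared parameters.
grads = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]

weights = relation_weights(scores)
update = weighted_gradient(grads, weights)
```

In this sketch the auxiliary task with the highest relation score dominates the combined update, which is the qualitative behavior the abstract describes.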

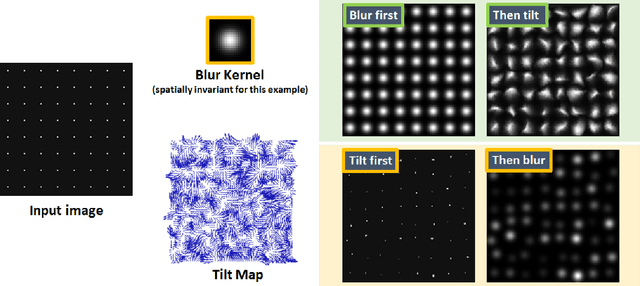

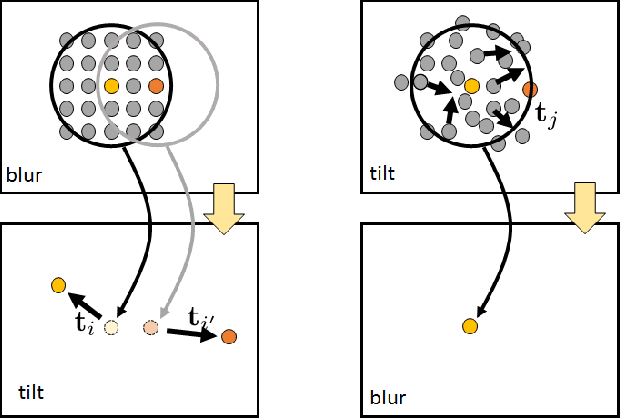

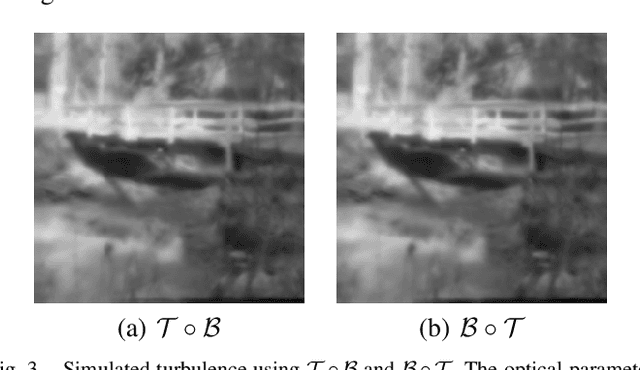

Tilt-then-Blur or Blur-then-Tilt? Clarifying the Atmospheric Turbulence Model

Jul 19, 2022

Imaging at a long distance often requires advanced image restoration algorithms to compensate for the distortions caused by atmospheric turbulence. However, unlike many standard restoration problems such as deconvolution, the forward image formation model of atmospheric turbulence does not have a simple expression. Thanks to the Zernike representation of the phase, one can show that the forward model is a combination of tilt (pixel shifting due to the linear phase terms) and blur (image smoothing due to the high-order aberrations). Confusion then arises over the ordering of the two operators. Should the model be tilt-then-blur, or blur-then-tilt? Some papers in the literature say that the model is tilt-then-blur, whereas more papers say that it is blur-then-tilt. This paper clarifies the differences between the two and discusses why tilt-then-blur is the correct model. Recommendations are given to the research community.
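Why the ordering matters can be seen in a small 1D sketch. The setup is a simplification and not the paper's model: tilt is taken to be an integer per-pixel shift with circular indexing, and blur is a fixed 3-tap box filter (the real turbulence blur is more complex). Under these assumptions the two operators commute only when the shift is the same at every pixel.

```python
def tilt(signal, shifts):
    """Per-pixel shift: output pixel i reads from position i + shifts[i] (circular)."""
    n = len(signal)
    return [signal[(i + shifts[i]) % n] for i in range(n)]

def blur(signal):
    """Spatially invariant 3-tap box blur with circular boundary handling."""
    n = len(signal)
    return [(signal[(i - 1) % n] + signal[i] + signal[(i + 1) % n]) / 3 for i in range(n)]

point = [0, 0, 0, 1, 0, 0, 0, 0]        # a single bright pixel
varying = [0, 1, 0, -1, 0, 2, 0, 0]     # shifts that differ from pixel to pixel
uniform = [1] * len(point)              # the same shift everywhere

# Spatially varying tilt: the two orderings give different images.
tilt_then_blur = blur(tilt(point, varying))
blur_then_tilt = tilt(blur(point), varying)

# Uniform tilt: a global shift commutes with the blur.
uniform_tb = blur(tilt(point, uniform))
uniform_bt = tilt(blur(point), uniform)
```

The sketch only demonstrates that the operators do not commute in general; it does not by itself show which ordering is physically correct, which is the question the paper addresses.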

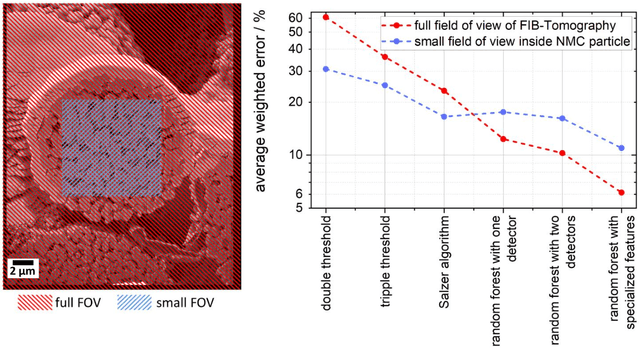

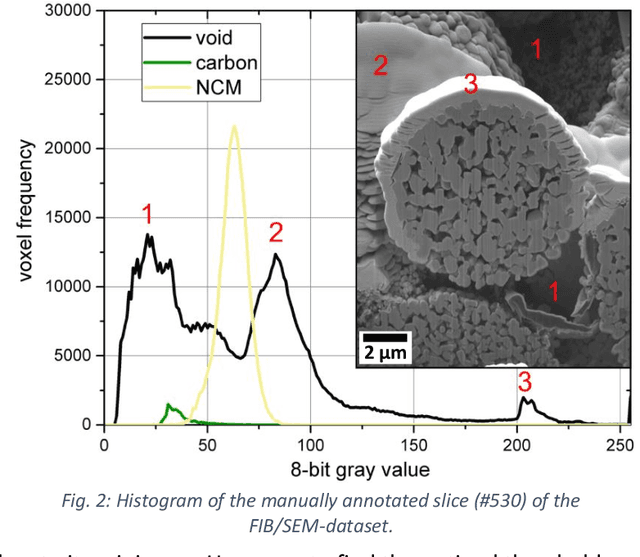

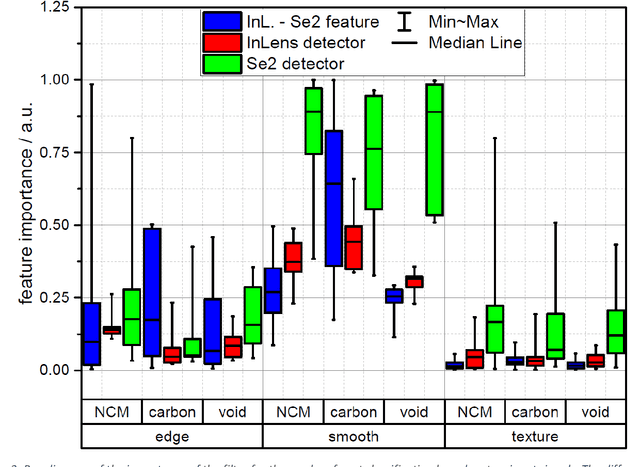

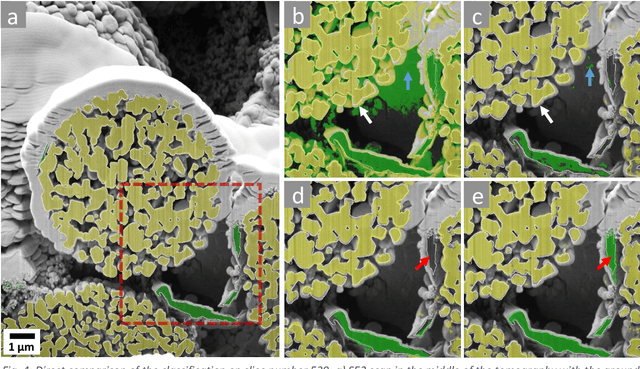

Classification of FIB/SEM-tomography images for highly porous multiphase materials using random forest classifiers

Jul 28, 2022

FIB/SEM tomography is an indispensable tool for the characterization of three-dimensional nanostructures in battery research and many other fields. However, contrast and 3D classification/reconstruction problems occur in many cases, which strongly limits the applicability of the technique, especially for porous materials like those used for electrodes in batteries or fuel cells. Distinguishing the different components, such as active Li storage particles and carbon/binder materials, is difficult and often prevents a reliable quantitative analysis of image data, or may even lead to wrong conclusions about structure-property relationships. In this contribution, we present a novel approach for data classification in three-dimensional image data obtained by FIB/SEM tomography and its application to NMC battery electrode materials. We use two different image signals, namely the signal of the angled SE2 chamber detector and the Inlens detector signal, combine both signals, and train a random forest, i.e., a particular machine learning algorithm. We demonstrate that this approach can overcome the limitations of existing techniques for multi-phase measurements and that it allows for quantitative data reconstruction even where current state-of-the-art techniques fail or demand large training sets. This approach may serve as a guideline for future research using FIB/SEM tomography.

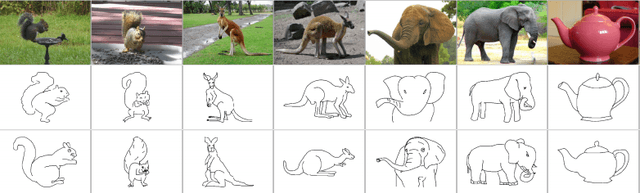

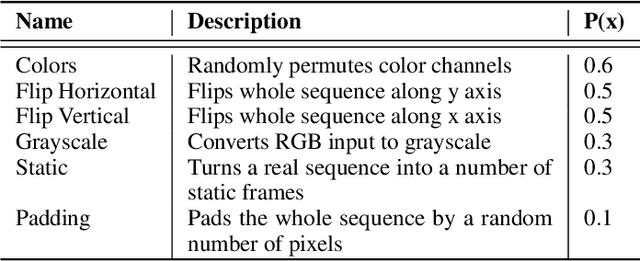

Transformers and CNNs both Beat Humans on SBIR

Sep 14, 2022

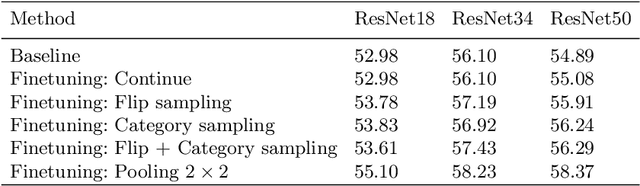

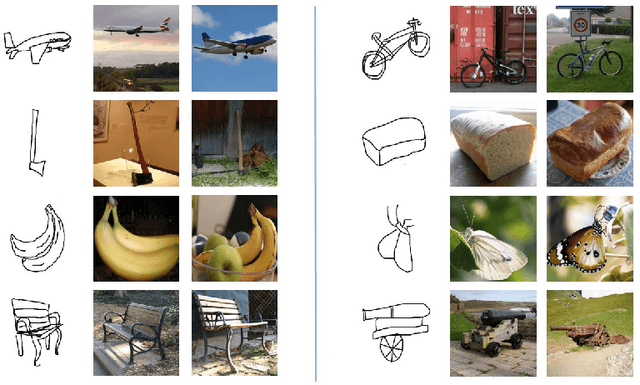

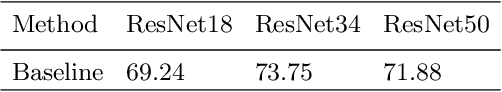

Sketch-based image retrieval (SBIR) is the task of retrieving natural images (photos) that match the semantics and the spatial configuration of hand-drawn sketch queries. The universality of sketches extends the scope of possible applications and increases the demand for efficient SBIR solutions. In this paper, we study classic triplet-based SBIR solutions and show that a persistent invariance to horizontal flip (even after model finetuning) harms performance. To overcome this limitation, we propose several approaches and evaluate each of them in depth to check their effectiveness. Our main contributions are twofold: (1) we propose and evaluate several intuitive modifications to build SBIR solutions with better flip equivariance; (2) we show that vision transformers are better suited to the SBIR task, and that they outperform CNNs by a large margin. We carried out numerous experiments and introduce the first models to outperform human performance on a large-scale SBIR benchmark (Sketchy). Our best model achieves a recall of 62.25% (at k = 1) on the Sketchy benchmark, compared to 46.2% for the previous state of the art.
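The recall-at-k figure quoted above can be made concrete with a minimal sketch. It assumes an instance-level setup in which each sketch has exactly one matching photo and ranking is by Euclidean distance between embeddings; the function name and the toy 2D embeddings are hypothetical, and the benchmark's actual evaluation protocol may differ.

```python
import math

def recall_at_k(query_embs, gallery_embs, true_idx, k=1):
    """Fraction of queries whose matching gallery item is among the k nearest neighbors."""
    hits = 0
    for query, target in zip(query_embs, true_idx):
        # Rank gallery indices from nearest to farthest in embedding space.
        ranked = sorted(range(len(gallery_embs)),
                        key=lambda i: math.dist(query, gallery_embs[i]))
        hits += target in ranked[:k]
    return hits / len(query_embs)

# Toy embeddings: three photos and three sketches, where sketch j should retrieve photo j.
photos = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
sketches = [(0.1, 0.0), (0.9, 0.1), (0.4, 0.45)]
matches = [0, 1, 2]

r1 = recall_at_k(sketches, photos, matches, k=1)
r2 = recall_at_k(sketches, photos, matches, k=2)
```

Here the third sketch lies slightly closer to the wrong photo, so it counts as a miss at k = 1 but as a hit at k = 2.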

FIBA: Frequency-Injection based Backdoor Attack in Medical Image Analysis

Dec 02, 2021

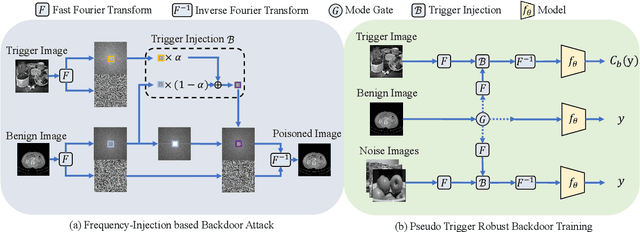

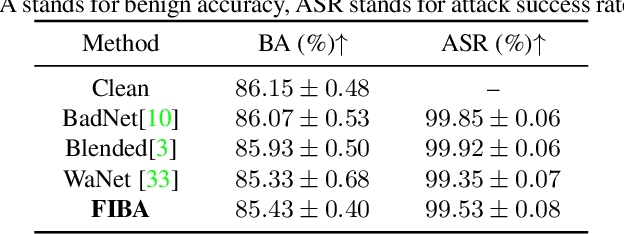

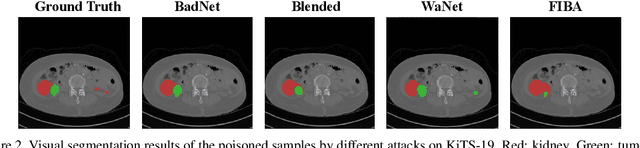

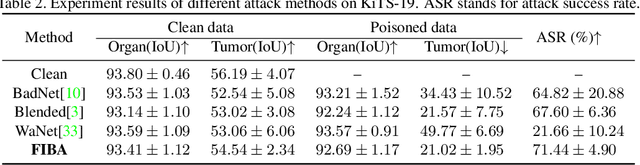

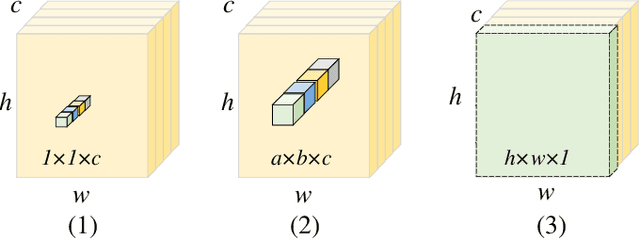

In recent years, the security of AI systems has drawn increasing research attention, especially in the medical imaging realm. To develop a secure medical image analysis (MIA) system, it is essential to study possible backdoor attacks (BAs), which can embed hidden malicious behaviors into the system. However, designing a unified BA method that can be applied to various MIA systems is challenging due to the diversity of imaging modalities (e.g., X-Ray, CT, and MRI) and analysis tasks (e.g., classification, detection, and segmentation). Most existing BA methods are designed to attack natural image classification models; they apply spatial triggers to training images and inevitably corrupt the semantics of poisoned pixels, which causes attacks on dense prediction models to fail. To address this issue, we propose a novel Frequency-Injection based Backdoor Attack method (FIBA) that is capable of delivering attacks in various MIA tasks. Specifically, FIBA leverages a trigger function in the frequency domain that can inject the low-frequency information of a trigger image into the poisoned image by linearly combining the spectral amplitude of both images. Since it preserves the semantics of the poisoned image pixels, FIBA can perform attacks on both classification and dense prediction models. Experiments on three benchmarks in MIA (i.e., ISIC-2019 for skin lesion classification, KiTS-19 for kidney tumor segmentation, and EAD-2019 for endoscopic artifact detection) validate the effectiveness of FIBA and its superiority over state-of-the-art methods in attacking MIA models as well as bypassing backdoor defense. The code will be available at https://github.com/HazardFY/FIBA.
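The amplitude-blending step described above can be sketched on a 1D signal. This is an illustration under stated assumptions, not the code from the FIBA repository: it uses a naive DFT instead of a 2D FFT, and the function name `inject_low_freq`, the blend ratio `alpha`, and the band width `band` are hypothetical. The amplitude in a low-frequency band is linearly mixed with the trigger's amplitude while the original phase is kept.

```python
import cmath

def dft(x):
    """Naive discrete Fourier transform (fine for short illustrative signals)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Naive inverse discrete Fourier transform."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def inject_low_freq(clean, trigger, alpha=0.15, band=1):
    """Blend the trigger's low-frequency amplitude into `clean`, keeping clean's phase."""
    n = len(clean)
    clean_spec, trigger_spec = dft(clean), dft(trigger)
    mixed = []
    for k in range(n):
        amplitude, phase = abs(clean_spec[k]), cmath.phase(clean_spec[k])
        # min(k, n - k) treats k and n - k as the same frequency, so the blended
        # spectrum stays conjugate-symmetric and the output stays real.
        if min(k, n - k) <= band:
            amplitude = (1 - alpha) * amplitude + alpha * abs(trigger_spec[k])
        mixed.append(cmath.rect(amplitude, phase))
    return [value.real for value in idft(mixed)]

clean = [1, 2, 3, 4, 3, 2, 1, 0]
trigger = [0, 0, 0, 0, 8, 8, 8, 8]
poisoned = inject_low_freq(clean, trigger, alpha=0.15, band=1)
```

Because only the amplitude of a few low-frequency components changes and the phase is untouched, the poisoned signal stays close to the original in structure, which is the property the abstract relies on for dense prediction tasks.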

Visual Anomaly Detection Via Partition Memory Bank Module and Error Estimation

Sep 26, 2022

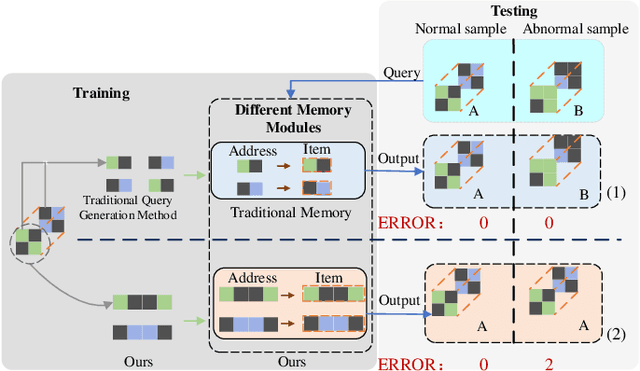

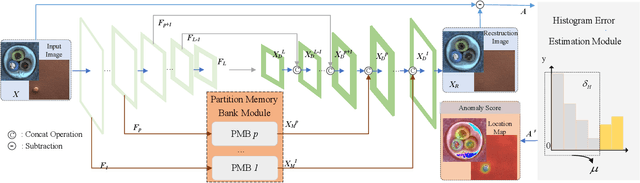

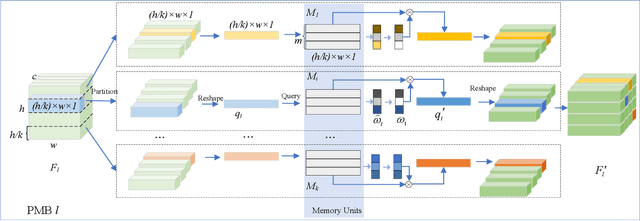

Memory-module-based reconstruction methods for visual anomaly detection attempt to narrow the reconstruction error for normal samples while enlarging it for anomalous samples. Unfortunately, the existing memory module is not fully applicable to the anomaly detection task, and the reconstruction error of anomalous samples remains small. To this end, this work proposes a new unsupervised visual anomaly detection method to jointly learn effective normal features and eliminate unfavorable reconstruction errors. Specifically, a novel Partition Memory Bank (PMB) module is proposed to effectively learn and store detailed features with semantic integrity of normal samples. It develops a new partition mechanism and a unique query generation method to preserve the context information and thereby improves the learning ability of the memory module. The proposed PMB and the skip connection are alternately explored to make the reconstruction of abnormal samples worse. To obtain more precise anomaly localization results and solve the problem of cumulative reconstruction error, a novel Histogram Error Estimation module is proposed to adaptively eliminate the unfavorable errors using the histogram of the difference image. It improves the anomaly localization performance without increasing the cost. To evaluate the effectiveness of the proposed method for anomaly detection and localization, extensive experiments are conducted on three widely used anomaly detection datasets. The encouraging performance of the proposed method compared to recent approaches based on the memory module demonstrates its superiority.

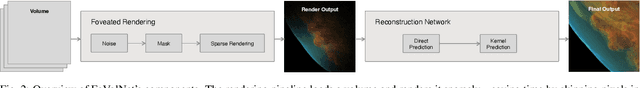

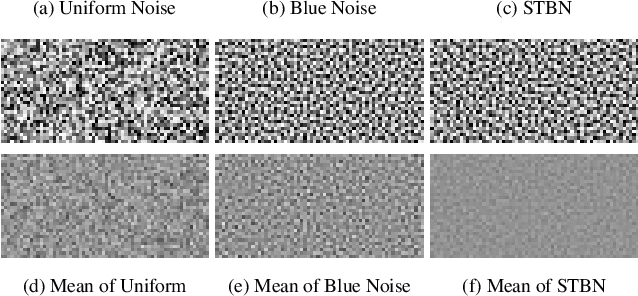

FoVolNet: Fast Volume Rendering using Foveated Deep Neural Networks

Sep 20, 2022

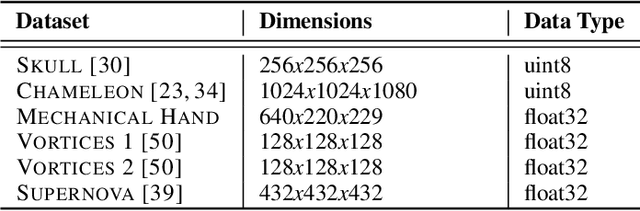

Volume data is found in many important scientific and engineering applications. Rendering this data for visualization at high quality and interactive rates for demanding applications such as virtual reality is still not easily achievable even using professional-grade hardware. We introduce FoVolNet -- a method to significantly increase the performance of volume data visualization. We develop a cost-effective foveated rendering pipeline that sparsely samples a volume around a focal point and reconstructs the full-frame using a deep neural network. Foveated rendering is a technique that prioritizes rendering computations around the user's focal point. This approach leverages properties of the human visual system, thereby saving computational resources when rendering data in the periphery of the user's field of vision. Our reconstruction network combines direct and kernel prediction methods to produce fast, stable, and perceptually convincing output. With a slim design and the use of quantization, our method outperforms state-of-the-art neural reconstruction techniques in both end-to-end frame times and visual quality. We conduct extensive evaluations of the system's rendering performance, inference speed, and perceptual properties, and we provide comparisons to competing neural image reconstruction techniques. Our test results show that FoVolNet consistently achieves significant time saving over conventional rendering while preserving perceptual quality.
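The sparse sampling around a focal point can be illustrated with a small mask generator. This is a hypothetical sketch, not FoVolNet's sampling scheme: the exponential falloff and the parameters `inner_radius`, `falloff`, and `min_prob` are assumptions. Every pixel near the focus is rendered, and the sampling probability decays toward the periphery; a reconstruction network would then fill in the unsampled pixels.

```python
import math
import random

def foveated_mask(width, height, focus, inner_radius=4.0, falloff=6.0,
                  min_prob=0.05, seed=0):
    """Return a boolean grid marking which pixels to render for a given focal point."""
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    fx, fy = focus
    mask = []
    for y in range(height):
        row = []
        for x in range(width):
            distance = math.hypot(x - fx, y - fy)
            if distance <= inner_radius:
                prob = 1.0  # always sample inside the foveal region
            else:
                # Assumed exponential decay with a small floor in the far periphery.
                prob = max(min_prob, math.exp(-(distance - inner_radius) / falloff))
            row.append(rng.random() < prob)
        mask.append(row)
    return mask

mask = foveated_mask(32, 32, focus=(16, 16))
sampled = sum(sum(row) for row in mask)
total = 32 * 32
```

The ratio `sampled / total` gives a rough idea of how much rendering work such a mask would skip under these assumed parameters.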

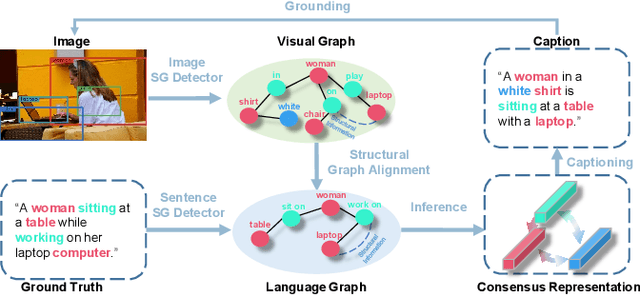

Consensus Graph Representation Learning for Better Grounded Image Captioning

Dec 02, 2021

Contemporary visual captioning models frequently hallucinate objects that are not actually in a scene, due to visual misclassification or over-reliance on priors, resulting in semantic inconsistency between the visual information and the target lexical words. The most common remedy is to encourage the captioning model to dynamically link generated object words or phrases to appropriate regions of the image, i.e., grounded image captioning (GIC). However, GIC utilizes an auxiliary task (grounding objects) that has not solved the key issue of object hallucination, i.e., the semantic inconsistency. In this paper, we take a novel perspective on the issue above - exploiting the semantic coherency between the visual and language modalities. Specifically, we propose the Consensus Graph Representation Learning framework (CGRL) for GIC, which incorporates a consensus representation into the grounded captioning pipeline. The consensus is learned by aligning the visual graph (e.g., scene graph) to the language graph, considering both the nodes and edges in a graph. With the aligned consensus, the captioning model can capture both the correct linguistic characteristics and visual relevance, and then ground appropriate image regions. We validate the effectiveness of our model, with a significant decline in object hallucination (-9% CHAIRi) on the Flickr30k Entities dataset. In addition, CGRL is evaluated by several automatic metrics and by human evaluation; the results indicate that the proposed approach can simultaneously improve the performance of image captioning (+2.9 Cider) and grounding (+2.3 F1LOC).

BadHash: Invisible Backdoor Attacks against Deep Hashing with Clean Label

Jul 13, 2022

Due to its powerful feature learning capability and high efficiency, deep hashing has achieved great success in large-scale image retrieval. Meanwhile, extensive work has demonstrated that deep neural networks (DNNs) are susceptible to adversarial examples, and exploring adversarial attacks against deep hashing has attracted many research efforts. Nevertheless, the backdoor attack, another well-known threat to DNNs, has not yet been studied for deep hashing. Although various backdoor attacks have been proposed in the field of image classification, existing approaches fail to realize a truly imperceptible backdoor attack that combines invisible triggers with a clean-label setting, and they also cannot meet the intrinsic demands of an image retrieval backdoor. In this paper, we propose BadHash, the first generative-based imperceptible backdoor attack against deep hashing, which can effectively generate invisible and input-specific poisoned images with clean labels. Specifically, we first propose a new conditional generative adversarial network (cGAN) pipeline to effectively generate poisoned samples. For any given benign image, it seeks to generate a natural-looking poisoned counterpart with a unique invisible trigger. To improve the attack effectiveness, we introduce a label-based contrastive learning network, LabCLN, to exploit the semantic characteristics of different labels, which are subsequently used for confusing and misleading the target model into learning the embedded trigger. Finally, we explore the mechanism of backdoor attacks on image retrieval in the hash space. Extensive experiments on multiple benchmark datasets verify that BadHash can generate imperceptible poisoned samples with strong attack ability and transferability over state-of-the-art deep hashing schemes.

A Strong Transfer Baseline for RGB-D Fusion in Vision Transformers

Oct 03, 2022

The Vision Transformer (ViT) architecture has recently established its place in the computer vision literature, with multiple architectures for recognition of image data or other visual modalities. However, training ViTs for RGB-D object recognition remains an understudied topic, viewed in recent literature only through the lens of multi-task pretraining in multiple modalities. Such approaches are often computationally intensive and have not yet been applied to challenging object-level classification tasks. In this work, we propose a simple yet strong recipe for transferring pretrained ViTs to RGB-D domains for single-view 3D object recognition, focusing on fusing RGB and depth representations encoded jointly by the ViT. Compared to previous works in multimodal Transformers, the key challenge here is to use the attested flexibility of ViTs to capture cross-modal interactions at the downstream stage rather than the pretraining stage. We explore which depth representation is better in terms of resulting accuracy and compare two methods for injecting RGB-D fusion within the ViT architecture (i.e., early vs. late fusion). Our results on the Washington RGB-D Objects dataset demonstrate that in such RGB → RGB-D scenarios, late fusion techniques work better than the more popularly employed early fusion. With our transfer baseline, adapted ViTs score up to 95.1% top-1 accuracy on Washington, achieving new state-of-the-art results on this benchmark. We additionally evaluate our approach with an open-ended lifelong learning protocol, where we show that our adapted RGB-D encoder leads to features that outperform unimodal encoders, even without explicit fine-tuning. We further integrate our method with a robot framework and demonstrate how it can serve as a perception utility in an interactive robot learning scenario, both in simulation and with a real robot.
