"Image": models, code, and papers

The Cascaded Forward Algorithm for Neural Network Training

Mar 17, 2023

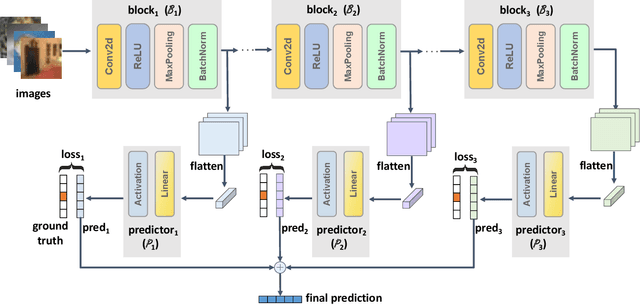

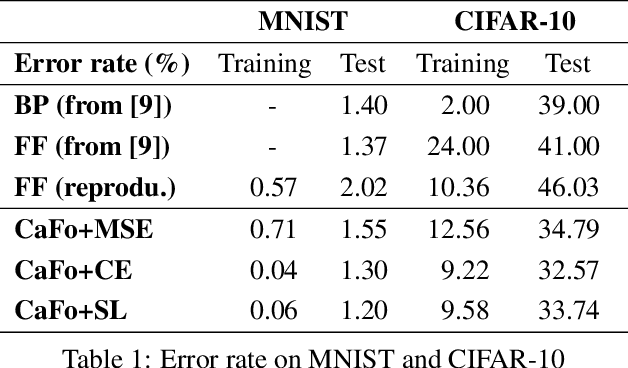

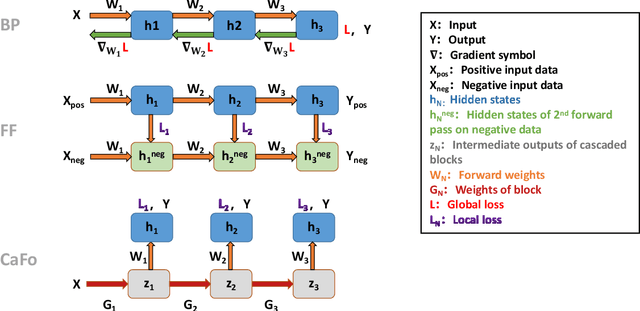

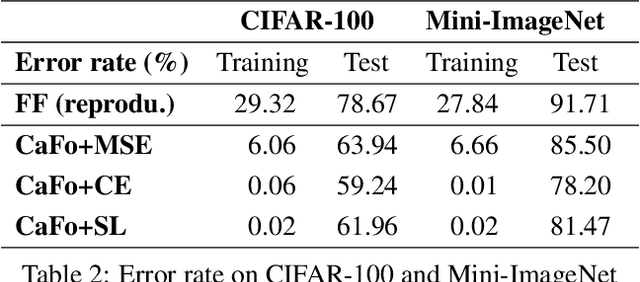

The backpropagation (BP) algorithm has been widely used as the mainstream learning procedure for neural networks over the past decade and has played a significant role in the development of deep learning. However, it has limitations, such as getting stuck in local minima and suffering from vanishing or exploding gradients, which have led to questions about its biological plausibility. To address these limitations, alternatives to backpropagation have been preliminarily explored, with the Forward-Forward (FF) algorithm being one of the best known. In this paper, we propose a new learning framework for neural networks, the Cascaded Forward (CaFo) algorithm, which, like FF, does not rely on BP optimization. Unlike FF, our framework directly outputs label distributions at each cascaded block; it therefore does not require generating additional negative samples, which makes both training and testing more efficient. Moreover, each block in our framework can be trained independently, so it can be easily deployed on parallel acceleration systems. The proposed method is evaluated on four public image classification benchmarks, and the experimental results show a significant improvement in prediction accuracy over the baseline.
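The idea of per-block label predictors that are trained independently can be illustrated with a small sketch. Everything concrete here is an assumption made for illustration and is not taken from the abstract: the blocks are fixed random `tanh` layers, each predictor is a softmax regression fitted with local gradients only, and the final decision sums the per-block label distributions. The helper names (`make_block`, `train_predictor`, `predict`) are hypothetical.

```python
import math
import random

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def make_block(n_in, n_out, rng):
    """A fixed random block tanh(Wx); its weights are never trained (assumption)."""
    W = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    return lambda x: [math.tanh(sum(w * v for w, v in zip(row, x))) for row in W]

def train_predictor(feats, labels, n_classes, epochs=200, lr=0.5):
    """Softmax regression on one block's features, using only local gradients."""
    d = len(feats[0])
    V = [[0.0] * (d + 1) for _ in range(n_classes)]  # last column is the bias
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            fb = f + [1.0]
            p = softmax([sum(w * v for w, v in zip(row, fb)) for row in V])
            for c in range(n_classes):
                g = p[c] - (1.0 if c == y else 0.0)  # cross-entropy gradient
                for j in range(d + 1):
                    V[c][j] -= lr * g * fb[j]
    return lambda f: softmax([sum(w * v for w, v in zip(row, f + [1.0])) for row in V])

# Toy two-class data: 20 points near (1, 1), then 20 points near (-1, -1).
rng = random.Random(0)
X = [[rng.gauss(c, 0.3), rng.gauss(c, 0.3)] for c in (1.0, -1.0) for _ in range(20)]
y = [0] * 20 + [1] * 20

# Each predictor sees only its own block's output; no gradient crosses blocks.
blocks = [make_block(2, 8, rng), make_block(8, 8, rng)]
predictors, feats = [], X
for block in blocks:
    feats = [block(f) for f in feats]
    predictors.append(train_predictor(feats, y, n_classes=2))

def predict(x):
    """Sum the label distributions of all blocks and take the arg max."""
    total, f = [0.0, 0.0], x
    for block, pred in zip(blocks, predictors):
        f = block(f)
        total = [t + p for t, p in zip(total, pred(f))]
    return total.index(max(total))

accuracy = sum(predict(x) == t for x, t in zip(X, y)) / len(X)
print(f"training accuracy: {accuracy:.2f}")
```

Because the predictors share no parameters and need no gradients from later blocks, the calls to `train_predictor` could in principle run in parallel once each block's features are available.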

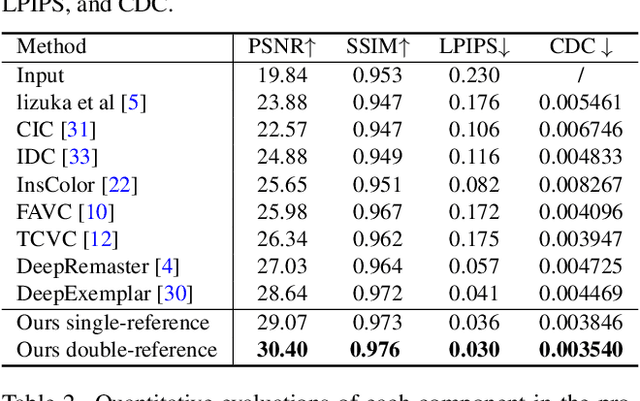

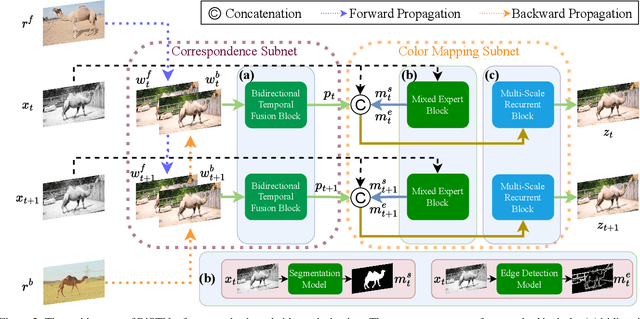

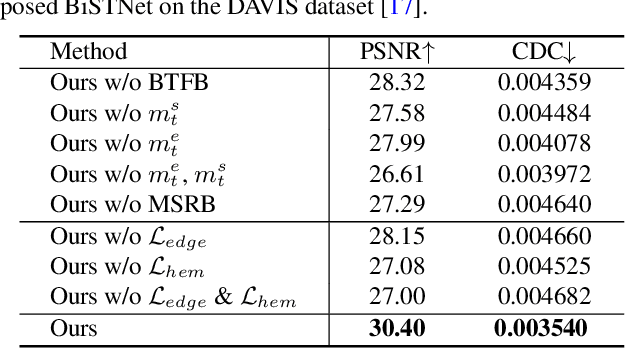

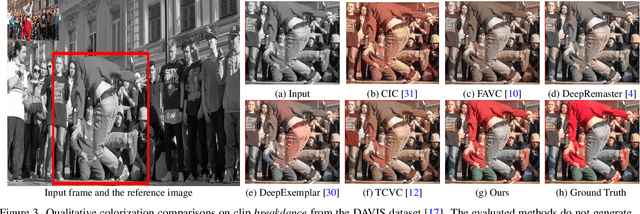

BiSTNet: Semantic Image Prior Guided Bidirectional Temporal Feature Fusion for Deep Exemplar-based Video Colorization

Dec 05, 2022

How to effectively explore the colors of reference exemplars and propagate them to colorize each frame is vital for exemplar-based video colorization. In this paper, we present an effective BiSTNet that explores the colors of reference exemplars and uses them to help video colorization through bidirectional temporal feature fusion guided by a semantic image prior. We first establish the semantic correspondence between each frame and the reference exemplars in deep feature space to explore color information from the reference exemplars. Then, to better propagate the colors of reference exemplars into each frame and avoid inaccurately matched colors from exemplars, we develop a simple yet effective bidirectional temporal feature fusion module to better colorize each frame. We note that color-bleeding artifacts usually appear around the boundaries of important objects in videos. To overcome this problem, we further develop a mixed expert block that extracts semantic information for modeling the object boundaries of frames, so that the semantic image prior can better guide the colorization process. In addition, we develop a multi-scale recurrent block to progressively colorize frames in a coarse-to-fine manner. Extensive experimental results demonstrate that the proposed BiSTNet performs favorably against state-of-the-art methods on the benchmark datasets. Our code will be made available at https://yyang181.github.io/BiSTNet/

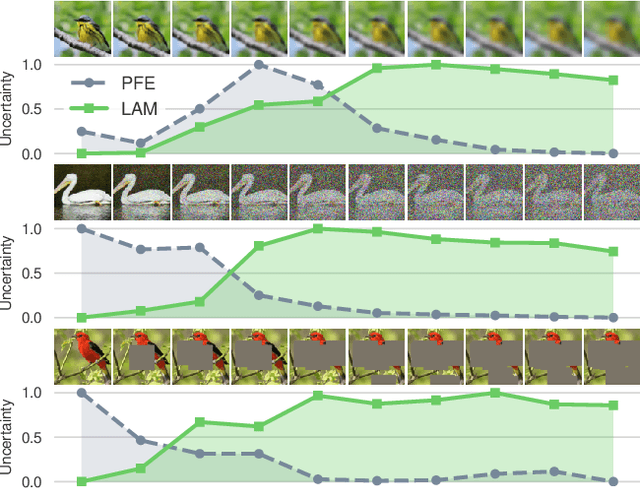

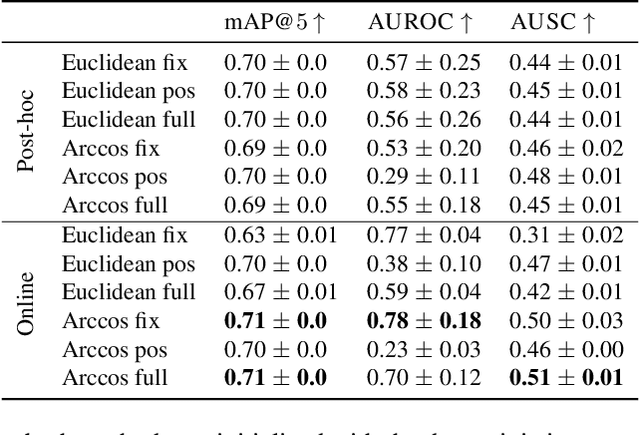

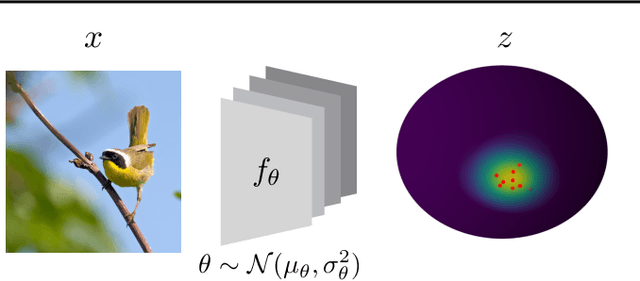

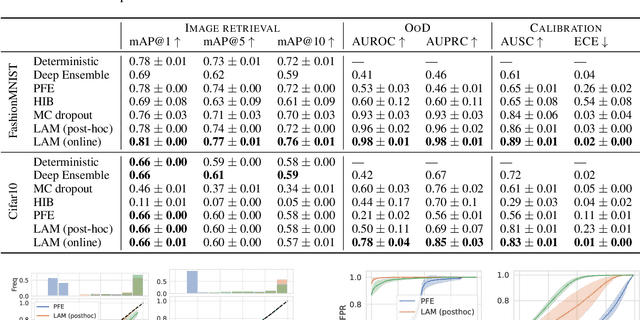

Bayesian Metric Learning for Uncertainty Quantification in Image Retrieval

Feb 02, 2023

We propose the first Bayesian encoder for metric learning. Rather than relying on neural amortization as done in prior works, we learn a distribution over the network weights with the Laplace Approximation. We actualize this by first proving that the contrastive loss is a valid log-posterior. We then propose three methods that ensure a positive definite Hessian. Lastly, we present a novel decomposition of the Generalized Gauss-Newton approximation. Empirically, we show that our Laplacian Metric Learner (LAM) estimates well-calibrated uncertainties, reliably detects out-of-distribution examples, and yields state-of-the-art predictive performance.
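For reference, the generic form of the Laplace approximation mentioned above can be written as follows. This is the standard textbook statement, not the paper's specific derivation; the symbols $\theta$ (network weights), $\mathcal{D}$ (training data), and $H$ (Hessian) are notation chosen here.

```latex
p(\theta \mid \mathcal{D}) \approx \mathcal{N}\!\left(\theta \mid \theta^{*},\, H^{-1}\right),
\qquad
\theta^{*} = \arg\max_{\theta} \log p(\theta \mid \mathcal{D}),
\qquad
H = -\nabla_{\theta}^{2} \log p(\theta \mid \mathcal{D}) \Big|_{\theta = \theta^{*}}
```

The covariance $H^{-1}$ is only valid when $H$ is positive definite, which is why methods that guarantee a positive definite Hessian matter for this approach.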

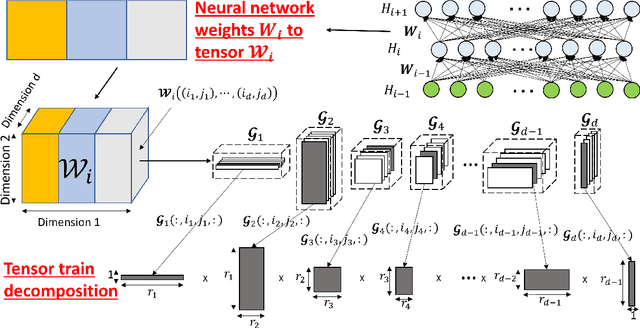

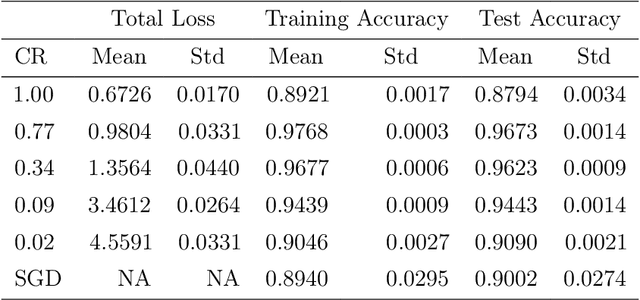

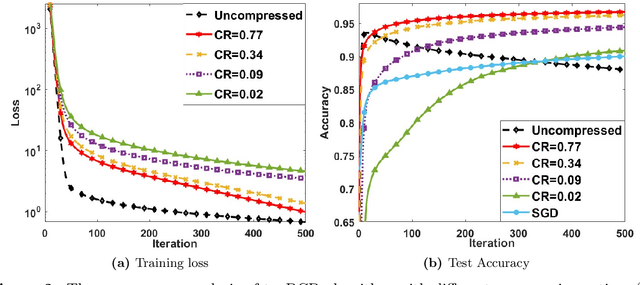

Provable Convergence of Tensor Decomposition-Based Neural Network Training

Mar 13, 2023

Advanced tensor decompositions, such as tensor train (TT), have been widely studied for tensor decomposition-based neural network (NN) training, which is one of the most common model compression methods. However, training NNs with tensor decomposition always suffers from significant accuracy loss and convergence issues. In this paper, a holistic framework is proposed for tensor decomposition-based NN training by formulating TT decomposition-based NN training as a nonconvex optimization problem. This problem can be solved by the proposed tensor block coordinate descent (tenBCD) method, which is a gradient-free algorithm. The global convergence of tenBCD to a critical point at a rate of O(1/k) is established with the Kurdyka-Łojasiewicz (KŁ) property, where k is the number of iterations. The theoretical results can be extended to the popular residual neural networks (ResNets). The effectiveness and efficiency of the proposed framework are verified on an image classification dataset, where the proposed method converges efficiently in training and prevents overfitting.
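To make the compression aspect of the TT format concrete, the sketch below counts TT parameters and evaluates a single tensor entry from its cores. It illustrates the TT representation only and says nothing about tenBCD itself; the function names and the nested-list core layout `core[a][i][b]` with shape `(r_prev, n, r_next)` are assumptions made for this example.

```python
def tt_param_count(dims, ranks):
    """Number of parameters in TT cores of shape (ranks[k], dims[k], ranks[k+1]).

    `ranks` has len(dims) + 1 entries, with ranks[0] == ranks[-1] == 1.
    """
    return sum(ranks[k] * n * ranks[k + 1] for k, n in enumerate(dims))

def tt_element(cores, index):
    """Evaluate one tensor entry as a product of core slices."""
    vec = [1.0]  # row vector of length r_0 = 1
    for core, i in zip(cores, index):
        r_next = len(core[0][i])
        vec = [sum(vec[a] * core[a][i][b] for a in range(len(vec)))
               for b in range(r_next)]
    return vec[0]

# A 4 x 4 x 4 x 4 tensor has 256 entries; with all internal TT ranks equal to 2
# the cores hold far fewer parameters.
dims, ranks = (4, 4, 4, 4), (1, 2, 2, 2, 1)
full = 1
for n in dims:
    full *= n
print(full, tt_param_count(dims, ranks))

# Rank-1 example: the outer product of u and v stored as two TT cores.
u, v = [1.0, 2.0], [3.0, 4.0, 5.0]
cores = [
    [[[ui] for ui in u]],  # shape (1, 2, 1)
    [[[vj] for vj in v]],  # shape (1, 3, 1)
]
print(tt_element(cores, (1, 2)))  # equals u[1] * v[2]
```

The parameter count grows linearly in the number of modes for fixed ranks, whereas the full tensor grows exponentially, which is the source of the compression.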

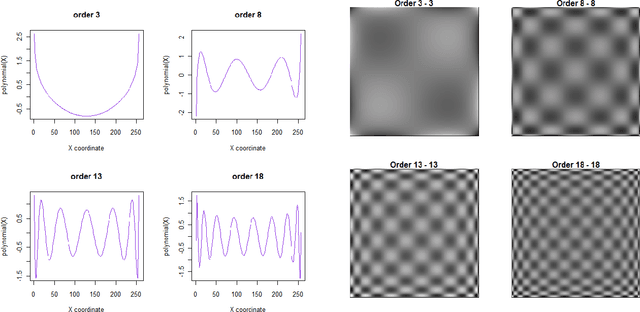

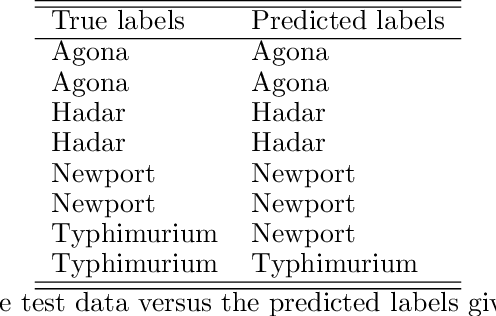

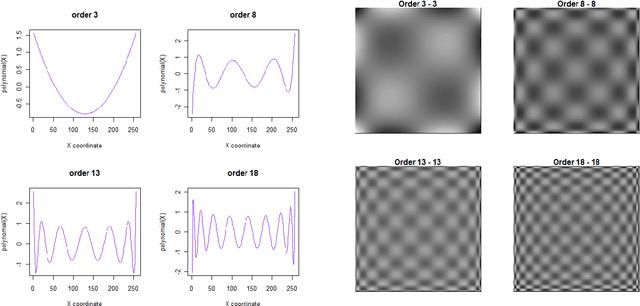

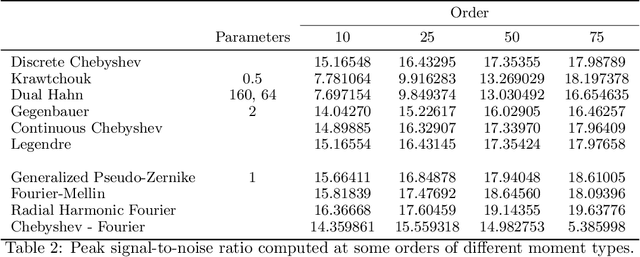

IM: An R-Package for Computation of Image Moments and Moment Invariants

Oct 29, 2022

Moment invariants are well-established and effective shape descriptors for image classification. In this report, we introduce a package for the R language, named IM, that implements the calculation of moments for images and allows the reconstruction of images from moments within an object-oriented framework. Several types of moments can be computed using the IM library, including discrete and continuous Chebyshev, Gegenbauer, Legendre, Krawtchouk, dual Hahn, generalized pseudo-Zernike, Fourier-Mellin, and radial harmonic Fourier moments. In addition, custom bivariate types of moments can be calculated using combinations of two different types of polynomials. A polar transformation of pixel coordinates is used to provide approximate rotation invariance for moments that are orthogonal over a rectangle. The report discusses the different types of polynomials used to calculate moments and compares reconstruction quality and running time. Examples of image classification using image moments are provided.
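As a rough illustration of what an image moment and a moment invariant are, the sketch below computes plain geometric moments in Python. It does not use or reproduce the IM package's R API, and geometric moments are not among the orthogonal families listed above; they are used here only because they are the simplest case. The function names are hypothetical.

```python
def raw_moment(img, p, q):
    """Geometric moment m_pq = sum over pixels of x^p * y^q * I(x, y)."""
    return sum((x ** p) * (y ** q) * v
               for y, row in enumerate(img)
               for x, v in enumerate(row))

def central_moment(img, p, q):
    """Moment taken about the intensity centroid; invariant to translation."""
    m00 = raw_moment(img, 0, 0)
    cx = raw_moment(img, 1, 0) / m00
    cy = raw_moment(img, 0, 1) / m00
    return sum(((x - cx) ** p) * ((y - cy) ** q) * v
               for y, row in enumerate(img)
               for x, v in enumerate(row))

def blob(x0, y0, size=5):
    """A size x size image containing a 2 x 2 block of ones at (x0, y0)."""
    return [[1.0 if x0 <= x < x0 + 2 and y0 <= y < y0 + 2 else 0.0
             for x in range(size)] for y in range(size)]

img_a, img_b = blob(0, 0), blob(2, 3)

# Raw moments change when the shape moves; central moments do not.
print(raw_moment(img_a, 1, 0), raw_moment(img_b, 1, 0))
print(central_moment(img_a, 2, 0), central_moment(img_b, 2, 0))
```

Orthogonal moments follow the same pattern but replace the monomials `x ** p` and `y ** q` with polynomial basis functions, which is what makes reconstruction from the moments practical.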

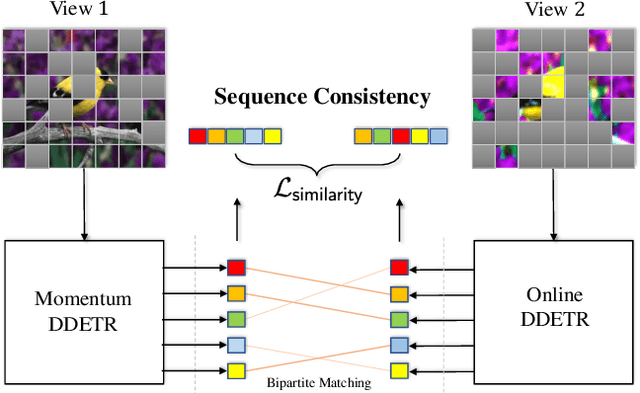

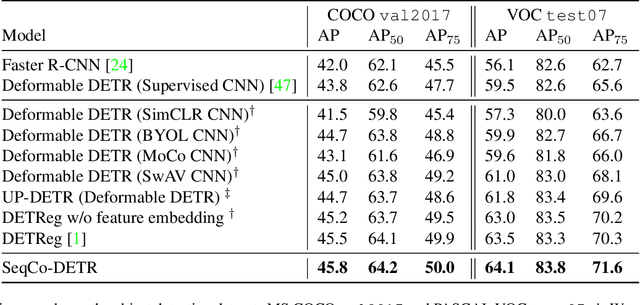

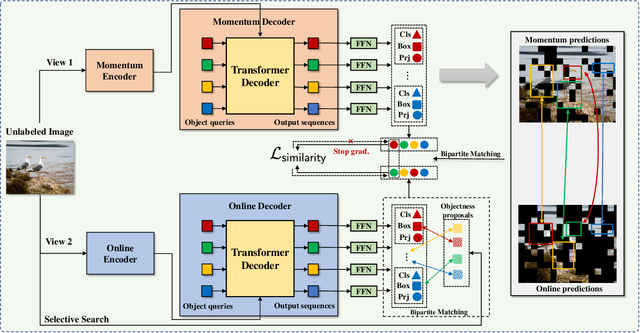

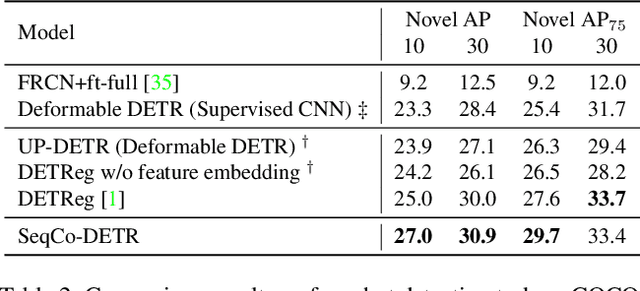

SeqCo-DETR: Sequence Consistency Training for Self-Supervised Object Detection with Transformers

Mar 15, 2023

Self-supervised pre-training and transformer-based networks have significantly improved the performance of object detection. However, most current self-supervised object detection methods are built on convolution-based architectures. We believe that the sequence characteristics of transformers should be considered when designing a transformer-based self-supervised method for the object detection task. To this end, we propose SeqCo-DETR, a novel Sequence Consistency-based self-supervised method for object DEtection with TRansformers. SeqCo-DETR defines a simple but effective pretext task: it minimizes the discrepancy between the output sequences of transformers that take different image views as input, and it leverages bipartite matching to find the most relevant sequence pairs, improving sequence-level self-supervised representation learning. Furthermore, we provide a mask-based augmentation strategy, combined with the sequence consistency strategy, to extract more representative contextual information about objects for the object detection task. Our method achieves state-of-the-art results on MS COCO (45.8 AP) and PASCAL VOC (64.1 AP), demonstrating the effectiveness of our approach.
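The role of bipartite matching in pairing output sequences from two views can be sketched as below. This is a toy illustration under stated assumptions: the squared Euclidean cost and the brute-force search over permutations are choices made here, not details from the abstract, and a practical implementation would use a polynomial-time assignment solver such as the Hungarian algorithm. The names `sq_dist` and `match_sequences` are hypothetical.

```python
from itertools import permutations

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def match_sequences(seq_a, seq_b):
    """Find the one-to-one pairing with the lowest total cost.

    Returns (perm, mean_cost), where perm[i] is the index in seq_b paired
    with seq_a[i]. Brute force, so only suitable for very short sequences.
    """
    n = len(seq_a)

    def total(perm):
        return sum(sq_dist(seq_a[i], seq_b[perm[i]]) for i in range(n))

    best = min(permutations(range(n)), key=total)
    return best, total(best) / n

# Outputs for two views of the same image, in a different order per view.
view_a = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
view_b = [[5.1, 5.0], [0.1, 0.0], [1.0, 0.9]]

perm, consistency_loss = match_sequences(view_a, view_b)
print(perm, consistency_loss)
```

The mean cost over matched pairs plays the role of a consistency objective here: it is small when the two views produce the same set of outputs, regardless of their order.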

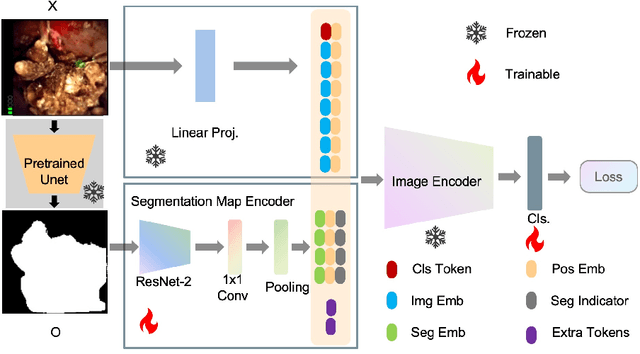

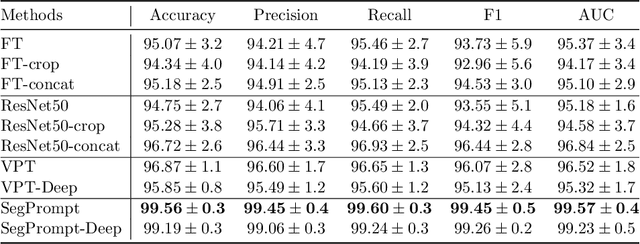

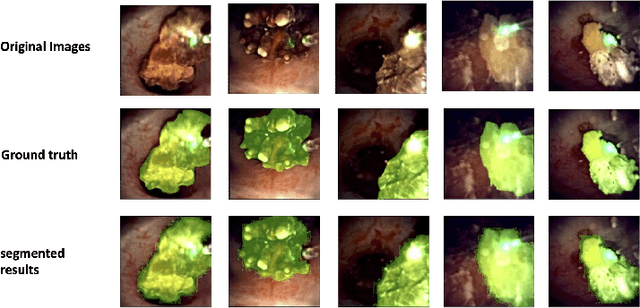

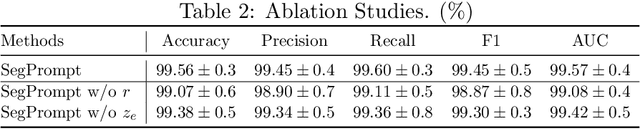

SegPrompt: Using Segmentation Map as a Better Prompt to Finetune Deep Models for Kidney Stone Classification

Mar 15, 2023

Recently, deep learning has produced encouraging results for kidney stone classification using endoscope images. However, the shortage of annotated training data poses a severe problem for improving the performance and generalization ability of the trained model. It is thus crucial to fully exploit the limited data at hand. In this paper, we propose SegPrompt to alleviate the data shortage problem by exploiting segmentation maps in two ways. First, SegPrompt integrates segmentation maps to facilitate classification training so that the classification model is aware of the regions of interest. The proposed method allows the image and segmentation tokens to interact with each other to fully utilize the segmentation map information. Second, we use the segmentation maps as prompts to tune the pretrained deep model, resulting in far fewer trainable parameters than vanilla finetuning. We perform extensive experiments on the collected kidney stone dataset. The results show that SegPrompt achieves an advantageous balance between model fitting ability and generalization ability, eventually leading to an effective model with limited training data.

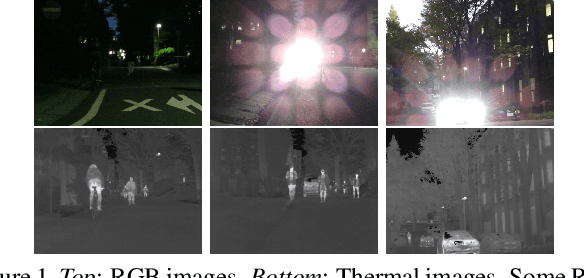

SpiderMesh: Spatial-aware Demand-guided Recursive Meshing for RGB-T Semantic Segmentation

Mar 15, 2023

For semantic segmentation in urban scene understanding, RGB cameras alone often fail to capture a clear holistic topology, especially in challenging lighting conditions. The thermal signal is an informative additional channel that can bring to light the contours and fine-grained texture of blurred regions in low-quality RGB images. For RGB-T (thermal) segmentation, existing methods either use simple passive channel-wise or spatial-wise fusion for cross-modal interaction, or rely on heavy labeling of ambiguous boundaries for fine-grained supervision. We propose a Spatial-aware Demand-guided Recursive Meshing (SpiderMesh) framework that: 1) proactively compensates for inadequate contextual semantics in optically impaired regions via a demand-guided target masking algorithm; 2) refines multimodal semantic features with recursive meshing to improve pixel-level semantic analysis performance. We further introduce an asymmetric data augmentation technique, M-CutOut, and enable semi-supervised learning to fully utilize RGB-T labels that are only sparsely available in practical use. Extensive experiments on the MFNet and PST900 datasets demonstrate that SpiderMesh achieves new state-of-the-art performance on standard RGB-T segmentation benchmarks.

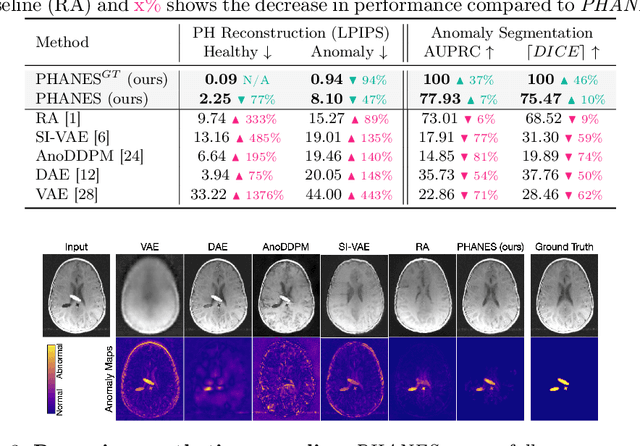

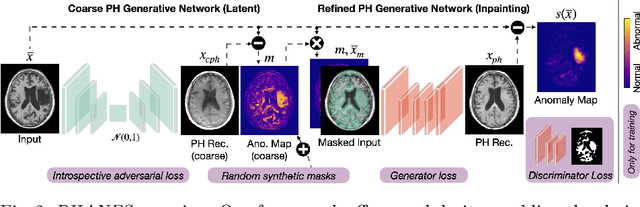

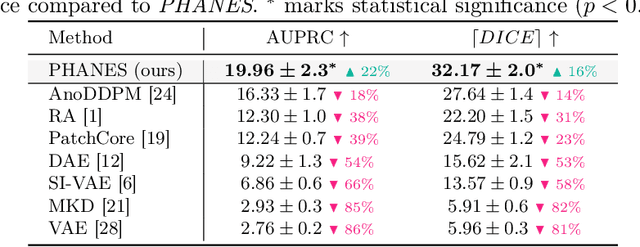

Reversing the Abnormal: Pseudo-Healthy Generative Networks for Anomaly Detection

Mar 15, 2023

Early and accurate disease detection is crucial for patient management and successful treatment outcomes. However, the automatic identification of anomalies in medical images can be challenging. Conventional methods rely on large labeled datasets which are difficult to obtain. To overcome these limitations, we introduce a novel unsupervised approach, called PHANES (Pseudo Healthy generative networks for ANomaly Segmentation). Our method has the capability of reversing anomalies, i.e., preserving healthy tissue and replacing anomalous regions with pseudo-healthy (PH) reconstructions. Unlike recent diffusion models, our method does not rely on a learned noise distribution nor does it introduce random alterations to the entire image. Instead, we use latent generative networks to create masks around possible anomalies, which are refined using inpainting generative networks. We demonstrate the effectiveness of PHANES in detecting stroke lesions in T1w brain MRI datasets and show significant improvements over state-of-the-art (SOTA) methods. We believe that our proposed framework will open new avenues for interpretable, fast, and accurate anomaly segmentation with the potential to support various clinical-oriented downstream tasks.
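The "reversing anomalies" step, which keeps healthy tissue and replaces only the masked regions, can be sketched as a simple composition. The generative networks are not modeled here: the mask and the inpainted image are given as plain arrays, and scoring anomalies by the absolute residual is an assumption made for this example rather than a detail from the abstract. The function names are hypothetical.

```python
def compose_pseudo_healthy(original, inpainted, mask):
    """Keep original pixels where mask == 0, use inpainted pixels where mask == 1."""
    return [[m * r + (1 - m) * o for o, r, m in zip(o_row, r_row, m_row)]
            for o_row, r_row, m_row in zip(original, inpainted, mask)]

def anomaly_map(original, pseudo_healthy):
    """Absolute residual between the input and its pseudo-healthy version (assumed score)."""
    return [[abs(o - p) for o, p in zip(o_row, p_row)]
            for o_row, p_row in zip(original, pseudo_healthy)]

# Toy 2 x 2 "image" with one bright, suspicious pixel at row 0, column 1.
original = [[0.2, 0.9],
            [0.3, 0.2]]
mask = [[0, 1],
        [0, 0]]              # stand-in for the output of the masking network
inpainted = [[0.5, 0.25],
             [0.5, 0.5]]     # stand-in for the output of the inpainting network

pseudo_healthy = compose_pseudo_healthy(original, inpainted, mask)
residual = anomaly_map(original, pseudo_healthy)
print(pseudo_healthy)
print(residual)
```

Because unmasked pixels are copied through unchanged, the residual is exactly zero outside the mask, which is the property that distinguishes this scheme from methods that alter the whole image.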

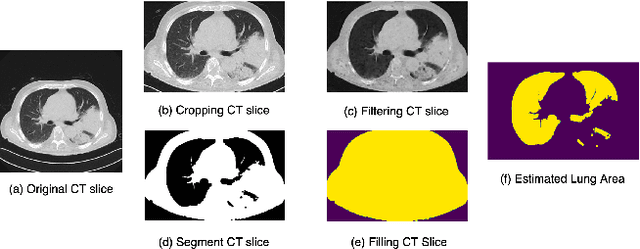

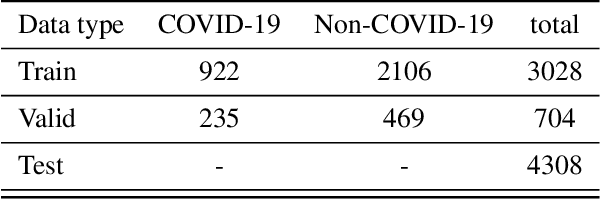

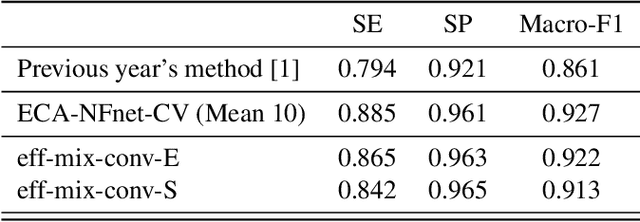

Strong Baseline and Bag of Tricks for COVID-19 Detection of CT Scans

Mar 15, 2023

This paper investigates the application of deep learning models for lung Computed Tomography (CT) image analysis. Traditional deep learning frameworks encounter compatibility issues due to variations in slice numbers and resolutions in CT images, which stem from the use of different machines. Commonly, individual slices are predicted and subsequently merged to obtain the final result; however, this approach lacks slice-wise feature learning and consequently results in decreased performance. We propose a novel slice selection method for each CT dataset to address this limitation, effectively filtering out uncertain slices and enhancing the model's performance. Furthermore, we introduce a spatial-slice feature learning (SSFL) technique [hsu2022] that employs a conventional and efficient backbone model for slice feature training, followed by extracting one-dimensional data from the trained model for COVID and non-COVID classification using a dedicated classification model. Leveraging these experimental steps, we integrate one-dimensional features with multiple slices for channel merging and employ a 2D convolutional neural network (CNN) model for classification. In addition to the aforementioned methods, we explore various high-performance classification models, ultimately achieving promising results.
