Multimodal In-bed Pose and Shape Estimation under the Blankets

Paper and Code

Dec 12, 2020

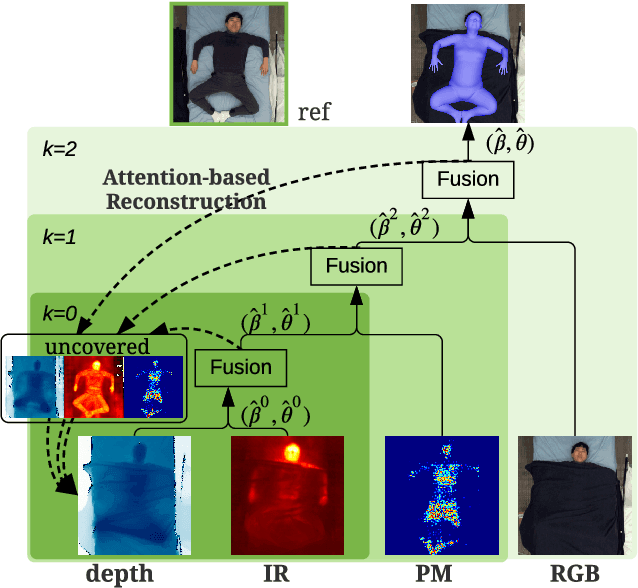

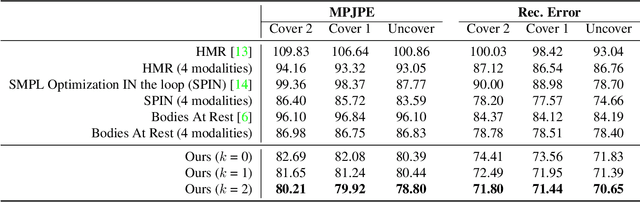

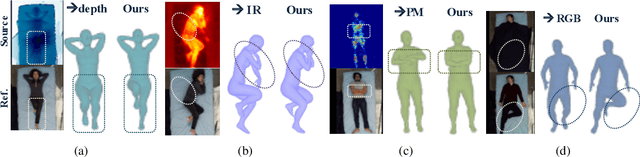

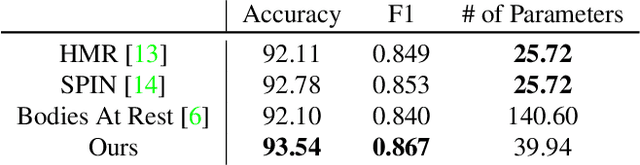

Humans spend a large part of their lives in bed, about one-third of a lifetime on average, and monitoring a human at rest is vital in many healthcare applications. Because people at rest are typically covered by a blanket, we propose a multimodal approach that estimates the pose and shape of the body as if the occluding blanket were removed. We propose a pyramid scheme to fuse the different modalities so that the knowledge captured by each sensor is used effectively. Specifically, the two most informative modalities (depth and infrared images) are fused first to produce a good initial pose and shape estimate. The pressure map and RGB image are then fused one at a time to refine the result: the pressure map provides occlusion-invariant information for the covered part, and the RGB image provides accurate shape information for the uncovered part. Even with multimodal data, however, detecting human bodies at rest remains very challenging because of the extreme occlusion. To further reduce the negative effects of blanket occlusion, we employ an attention-based reconstruction module to generate uncovered modalities, which are fused again to update the current estimate in a cyclic fashion. Extensive experiments validate the superiority of the proposed model over others.
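The fusion order described in the abstract can be illustrated with a small sketch. This is not the authors' network: the learned fusion and attention-based reconstruction modules are replaced by a simple weighted blend and a caller-supplied function, and all names here (`fuse`, `pyramid_fuse`, `cyclic_refine`, `reconstruct`, `weight`, `n_cycles`) are hypothetical. It only shows the order of operations, assuming each modality has already been encoded as a feature vector of the same size.

```python
import numpy as np

# Refinement order stated in the abstract: pressure map first, then RGB.
REFINEMENT_ORDER = ("pressure", "rgb")


def fuse(estimate, feature, weight=0.5):
    """Hypothetical stand-in for a learned fusion block: a weighted blend."""
    return (1.0 - weight) * estimate + weight * feature


def pyramid_fuse(features, weight=0.5):
    """Fuse depth and infrared first, then refine with pressure and RGB in turn."""
    estimate = fuse(features["depth"], features["infrared"], weight)
    for name in REFINEMENT_ORDER:
        estimate = fuse(estimate, features[name], weight)
    return estimate


def cyclic_refine(features, reconstruct, n_cycles=2, weight=0.5):
    """Alternate between reconstructing uncovered modalities and re-fusing them.

    `reconstruct(features, estimate)` is an assumed callable that returns a dict
    of "uncovered" features with the same keys as `features`.
    """
    estimate = pyramid_fuse(features, weight)
    for _ in range(n_cycles):
        uncovered = reconstruct(features, estimate)
        estimate = fuse(estimate, pyramid_fuse(uncovered, weight), weight)
    return estimate
```

A call might look like `cyclic_refine(features, reconstruct=my_module)`, where `features` maps `"depth"`, `"infrared"`, `"pressure"`, and `"rgb"` to NumPy arrays of equal shape and `my_module` is whatever reconstruction function is being tried.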
