Wonyong Sung

Stochastic Precision Ensemble: Self-Knowledge Distillation for Quantized Deep Neural Networks

Sep 30, 2020

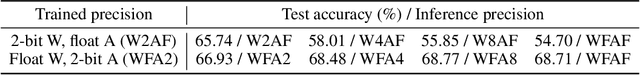

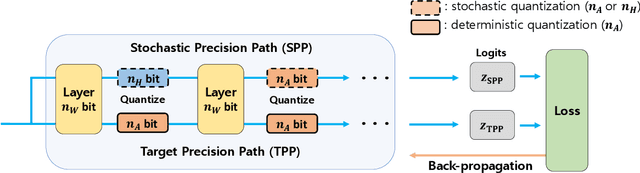

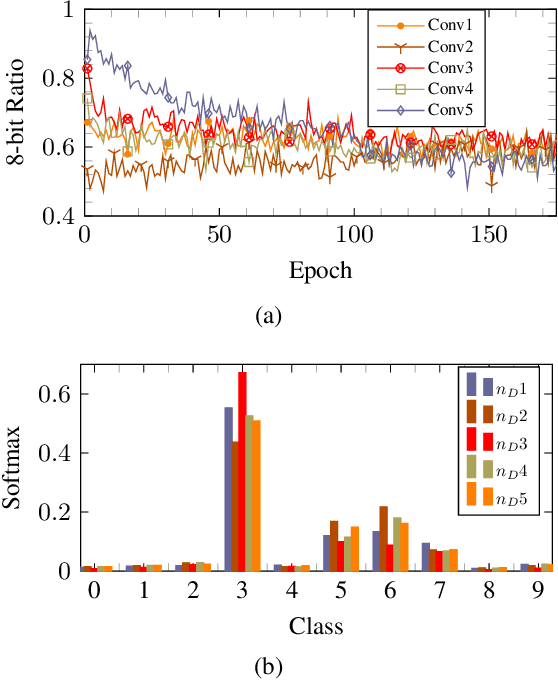

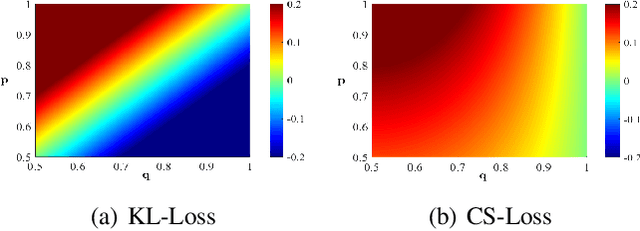

Abstract: The quantization of deep neural networks (QDNNs) has been actively studied for deployment in edge devices. Recent studies employ the knowledge distillation (KD) method to improve the performance of quantized networks. In this study, we propose stochastic precision ensemble training for QDNNs (SPEQ). SPEQ is a knowledge distillation training scheme; however, the teacher is formed by sharing the model parameters of the student network. We obtain the soft labels of the teacher by changing the bit precision of the activation stochastically at each layer of the forward-pass computation. The student model is trained with these soft labels to reduce the activation quantization noise. The cosine similarity loss is employed, instead of the KL-divergence, for KD training. As the teacher model changes continuously by random bit-precision assignment, it exploits the effect of stochastic ensemble KD. SPEQ outperforms the existing quantization training methods in various tasks, such as image classification, question-answering, and transfer learning, without the need for cumbersome teacher networks.
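
The following is a minimal sketch of the idea described in this abstract, not the authors' implementation. It assumes a toy two-layer network, a simple uniform activation quantizer, and a loss of the form 1 minus cosine similarity; the names `quantize_activation`, `forward`, and `cosine_similarity_loss` are hypothetical. The teacher and the student share the same weights, and the teacher differs only in drawing the activation precision at random.

```python
import math
import random

def quantize_activation(x, bits, max_val=1.0):
    """Uniformly quantize an activation to `bits` bits in [0, max_val] (assumed quantizer)."""
    levels = 2 ** bits - 1
    clipped = min(max(x, 0.0), max_val)
    return round(clipped / max_val * levels) / levels * max_val

def forward(x, weights, bit_choices, rng=None):
    """Forward pass through a list of weight matrices (lists of rows).

    With `rng`, the activation precision of each hidden layer is drawn at
    random from `bit_choices` (teacher path). Without it, the lowest
    precision is used (student path). Both paths share `weights`.
    """
    h = x
    for layer_idx, matrix in enumerate(weights):
        out = [sum(w * v for w, v in zip(row, h)) for row in matrix]
        if layer_idx < len(weights) - 1:
            bits = rng.choice(bit_choices) if rng is not None else min(bit_choices)
            h = [quantize_activation(v, bits) for v in out]
        else:
            h = out  # the final outputs are left unquantized in this sketch
    return h

def cosine_similarity_loss(student, teacher):
    """1 - cos(student, teacher); assumes both vectors are non-zero."""
    dot = sum(s * t for s, t in zip(student, teacher))
    norm_s = math.sqrt(sum(s * s for s in student))
    norm_t = math.sqrt(sum(t * t for t in teacher))
    return 1.0 - dot / (norm_s * norm_t)

# Illustrative values only.
rng = random.Random(0)
weights = [[[0.5, 0.2], [0.1, 0.9]], [[1.0, -1.0], [0.5, 0.5]]]
x = [0.3, 0.6]
teacher_out = forward(x, weights, bit_choices=[2, 4, 8], rng=rng)
student_out = forward(x, weights, bit_choices=[2, 4, 8])
loss = cosine_similarity_loss(student_out, teacher_out)
```

In a real training loop the teacher output would be treated as a constant target and the loss would be back-propagated through the student path only; that part is omitted here.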

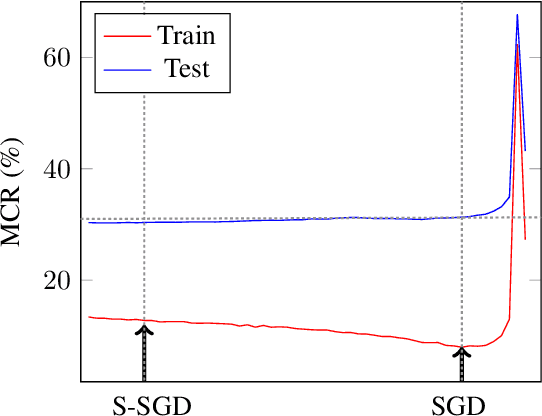

S-SGD: Symmetrical Stochastic Gradient Descent with Weight Noise Injection for Reaching Flat Minima

Sep 05, 2020

Abstract: The stochastic gradient descent (SGD) method is the most widely used method for deep neural network (DNN) training. However, the method does not always converge to a flat minimum of the loss surface that can demonstrate high generalization capability. Weight noise injection has been extensively studied for finding flat minima using the SGD method. We devise a new weight-noise injection-based SGD method that adds symmetrical noise to the DNN weights. Training with symmetrical noise evaluates the loss surface at two adjacent points, by which convergence to sharp minima can be avoided. Fixed-magnitude symmetric noise is added to minimize training instability. The proposed method is compared with the conventional SGD method and previous weight-noise injection algorithms using convolutional neural networks for image classification. In particular, performance improvements in large-batch training are demonstrated. This method shows superior performance compared with conventional SGD and weight-noise injection methods regardless of the batch size and learning-rate scheduling algorithm.
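
One plausible reading of "evaluates the loss surface at two adjacent points" can be sketched as follows. This is an illustration under assumptions, not the published algorithm: the function name `symmetric_noise_sgd_step` is hypothetical, the noise direction is Gaussian and rescaled to a fixed norm, and the gradients at `w + n` and `w - n` are simply averaged. The paper may schedule the two evaluations differently.

```python
import math
import random

def symmetric_noise_sgd_step(w, grad_fn, lr, noise_norm, rng):
    """One SGD step using gradients at two symmetric points w + n and w - n."""
    direction = [rng.gauss(0.0, 1.0) for _ in w]
    scale = noise_norm / math.sqrt(sum(d * d for d in direction))
    noise = [scale * d for d in direction]  # fixed-magnitude noise vector

    grad_plus = grad_fn([wi + ni for wi, ni in zip(w, noise)])
    grad_minus = grad_fn([wi - ni for wi, ni in zip(w, noise)])
    avg_grad = [(gp + gm) / 2.0 for gp, gm in zip(grad_plus, grad_minus)]
    return [wi - lr * g for wi, g in zip(w, avg_grad)]

def quadratic_grad(w):
    """Gradient of the toy loss L(w) = sum(w_i ** 2)."""
    return [2.0 * wi for wi in w]

rng = random.Random(0)
w_next = symmetric_noise_sgd_step([1.0, -2.0], quadratic_grad, lr=0.1,
                                  noise_norm=0.05, rng=rng)
```

On a purely quadratic loss the gradient is linear in the weights, so the two noisy gradients average to the noise-free gradient. The symmetric evaluation only changes the update where the curvature varies between the two points, which is the situation near a sharp minimum.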

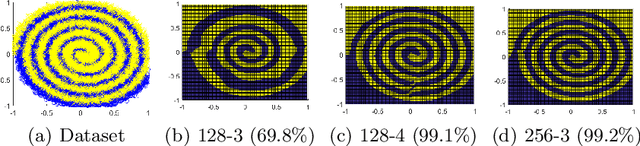

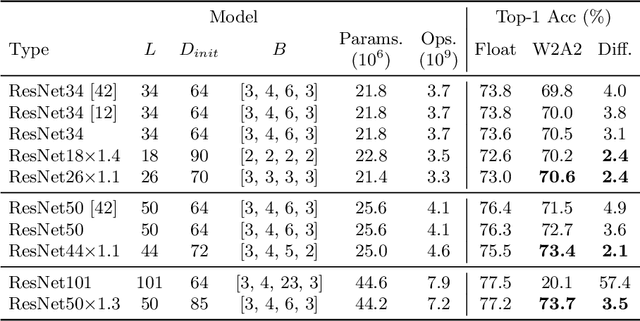

Quantized Neural Networks: Characterization and Holistic Optimization

May 31, 2020

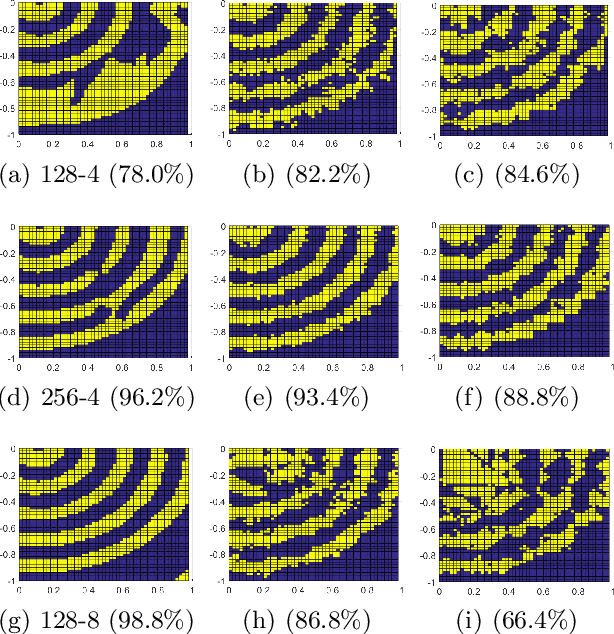

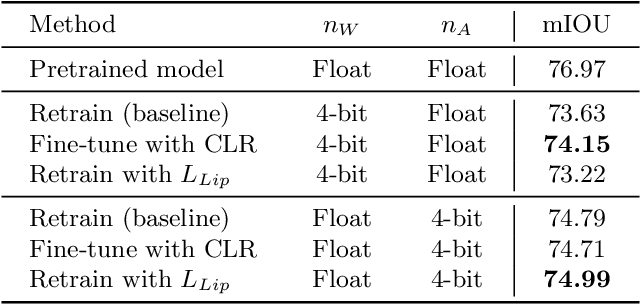

Abstract: Quantized deep neural networks (QDNNs) are necessary for low-power, high-throughput, and embedded applications. Previous studies mostly focused on developing optimization methods for the quantization of given models. However, quantization sensitivity depends on the model architecture. Therefore, model selection needs to be a part of the QDNN design process. Also, the characteristics of weight and activation quantization are quite different. This study proposes a holistic approach for the optimization of QDNNs, which contains QDNN training methods as well as quantization-friendly architecture design. Synthesized data is used to visualize the effects of weight and activation quantization. The results indicate that deeper models are more vulnerable to activation quantization, while wider models improve the resiliency to both weight and activation quantization. This study can provide insight into better optimization of QDNNs.

SQWA: Stochastic Quantized Weight Averaging for Improving the Generalization Capability of Low-Precision Deep Neural Networks

Feb 02, 2020

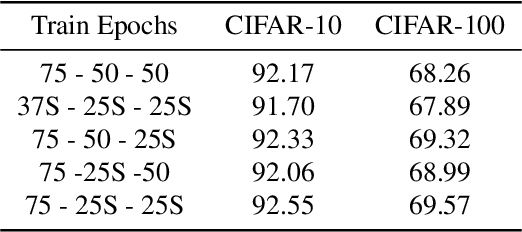

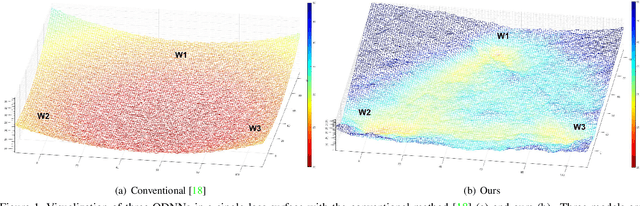

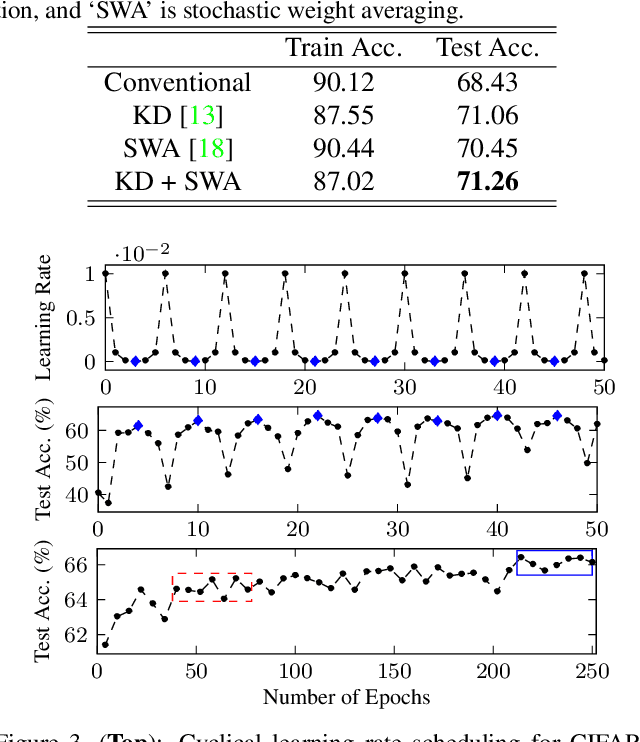

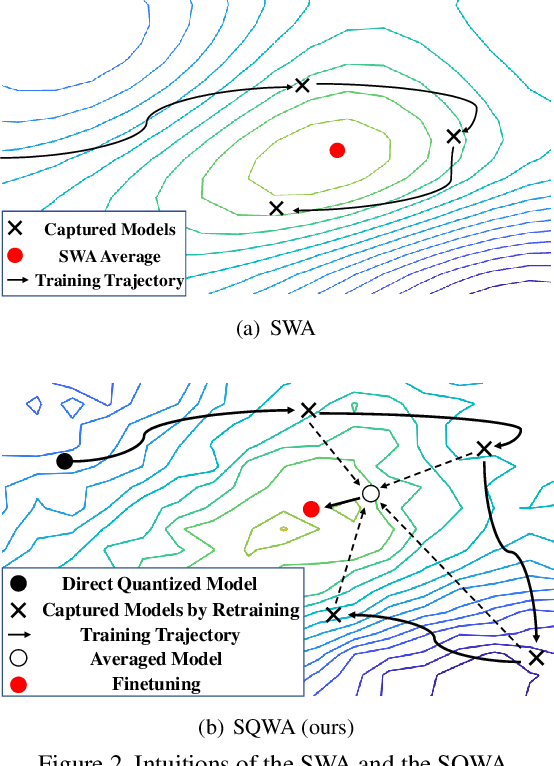

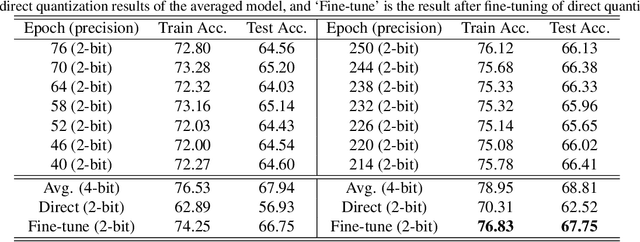

Abstract: Designing a deep neural network (DNN) with good generalization capability is a complex process, especially when the weights are severely quantized. Model averaging is a promising approach for achieving good generalization capability of DNNs, especially when the loss surface for training contains many sharp minima. We present a new quantized neural network optimization approach, stochastic quantized weight averaging (SQWA), to design low-precision DNNs with good generalization capability using model averaging. The proposed approach includes (1) floating-point model training, (2) direct quantization of weights, (3) capturing multiple low-precision models during retraining with cyclical learning rates, (4) averaging the captured models, and (5) re-quantizing the averaged model and fine-tuning it with low learning rates. Additionally, we present a loss-visualization technique on the quantized weight domain to clearly elucidate the behavior of the proposed method. Visualization results indicate that a quantized DNN (QDNN) optimized with the proposed approach is located near the center of the flat minimum in the loss surface. With SQWA training, we achieved state-of-the-art results for 2-bit QDNNs on the CIFAR-100 and ImageNet datasets. Although we only employed a uniform quantization scheme for the sake of implementation in VLSI or low-precision neural processing units, the performance achieved exceeded that of previous studies employing non-uniform quantization.
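
Steps (2), (4), and (5) of the procedure listed in this abstract can be sketched as below. This is a hedged illustration: the helper names `quantize_uniform` and `average_models` are hypothetical, the quantizer is assumed to be symmetric with an odd number of levels (so 2 bits gives the ternary set {-step, 0, +step}), and the training, cyclical-learning-rate retraining, and final fine-tuning are not shown.

```python
def quantize_uniform(weights, step, bits=2):
    """Symmetric uniform quantizer; with bits=2 the levels are {-step, 0, +step}."""
    max_int = 2 ** (bits - 1) - 1
    return [max(-max_int, min(max_int, round(w / step))) * step for w in weights]

def average_models(models):
    """Element-wise mean of several captured weight vectors."""
    count = len(models)
    return [sum(values) / count for values in zip(*models)]

# Step (2): direct quantization of (illustrative) floating-point weights.
direct = quantize_uniform([0.26, -0.9, 0.04], step=0.25)

# Step (3) would capture low-precision models during retraining; here
# three captured models are simply written out by hand.
captured = [
    [0.25, 0.0, -0.25],
    [0.0, 0.25, -0.25],
    [0.25, 0.25, 0.0],
]

# Step (4): averaging yields a higher-precision model ...
averaged = average_models(captured)
# Step (5): ... which is re-quantized (fine-tuning omitted in this sketch).
requantized = quantize_uniform(averaged, step=0.25)
```

Note that the averaged weights generally fall between quantization levels, which is why the re-quantization in step (5) is needed before deployment.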

Empirical Analysis of Knowledge Distillation Technique for Optimization of Quantized Deep Neural Networks

Oct 05, 2019

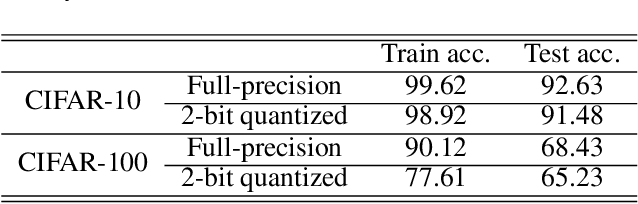

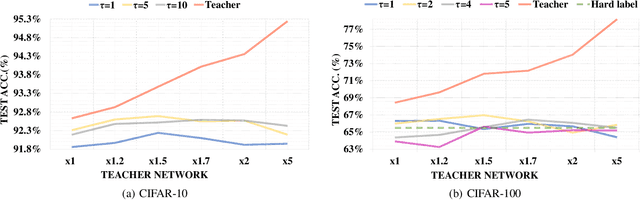

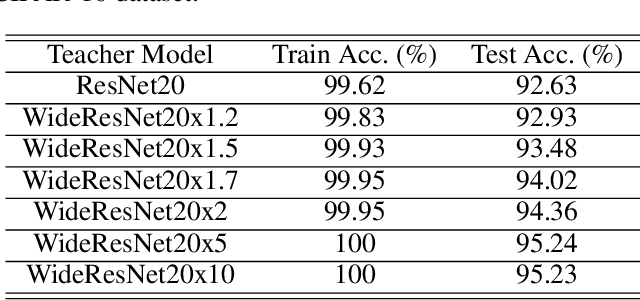

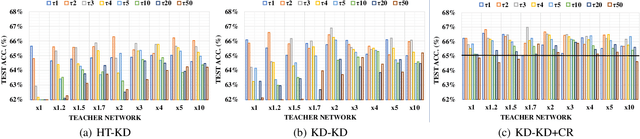

Abstract: Knowledge distillation (KD) is a very popular method for model size reduction. Recently, the technique has been exploited for training quantized deep neural networks (QDNNs) as a way to restore the performance sacrificed by word-length reduction. KD, however, employs additional hyper-parameters, such as the temperature, the coefficient, and the size of the teacher network, for QDNN training. We analyze the effect of these hyper-parameters on QDNN optimization with KD. We find that these hyper-parameters are inter-related, and also introduce a simple and effective technique that reduces the *coefficient* during training. With KD employing the proposed hyper-parameters, we achieve test accuracies of 92.7% and 67.0% on ResNet20 with 2-bit ternary weights for the CIFAR-10 and CIFAR-100 data sets, respectively.
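
To make the roles of the temperature and the coefficient concrete, here is a sketch of a commonly used KD loss: a weighted sum of the hard-label cross-entropy and a temperature-scaled KL term. The exact loss form and the decay schedule used in the paper are not given in the abstract; the linear decay in `linear_coefficient` and all function names are assumptions made for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by the temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kd_loss(student_logits, teacher_logits, label, temperature, coefficient):
    """(1 - coefficient) * hard-label CE + coefficient * T^2 * KL(teacher || student)."""
    hard = -math.log(softmax(student_logits)[label])
    q_teacher = softmax(teacher_logits, temperature)
    q_student = softmax(student_logits, temperature)
    soft = sum(t * math.log(t / s) for t, s in zip(q_teacher, q_student) if t > 0.0)
    return (1.0 - coefficient) * hard + coefficient * temperature ** 2 * soft

def linear_coefficient(epoch, total_epochs, start):
    """Assumed schedule: decay the KD coefficient linearly from `start` to 0."""
    return start * (1.0 - epoch / total_epochs)
```

With a schedule like this, the soft-label term dominates early in training and the hard-label term takes over toward the end, for example `kd_loss(s, t, y, temperature=4.0, coefficient=linear_coefficient(epoch, 100, 0.9))`.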

Single Stream Parallelization of Recurrent Neural Networks for Low Power and Fast Inference

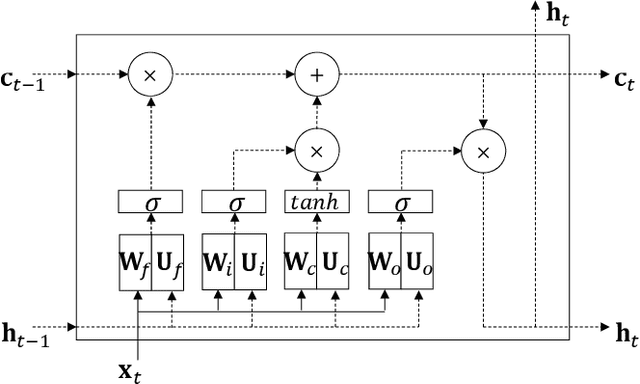

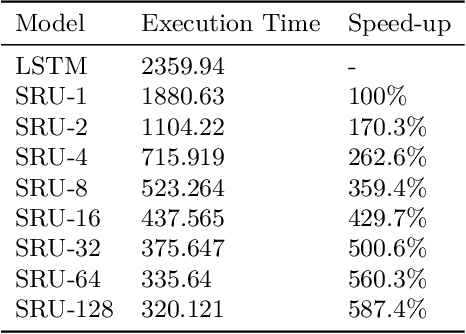

Mar 30, 2018

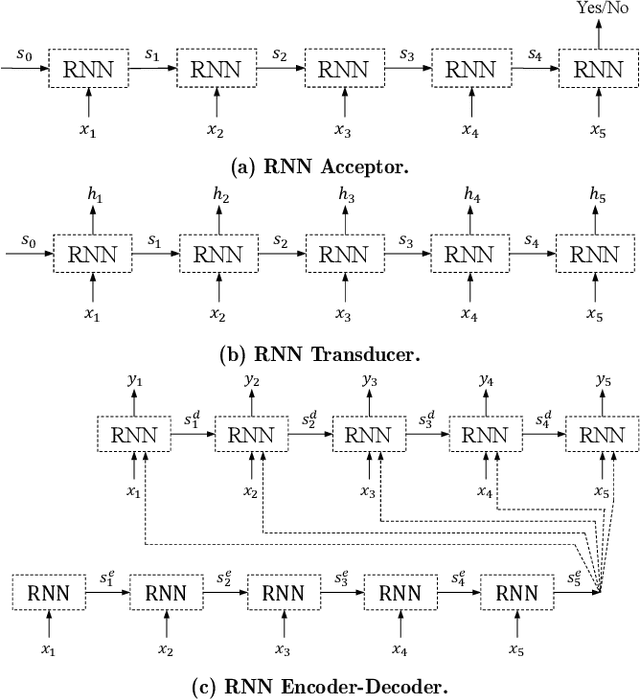

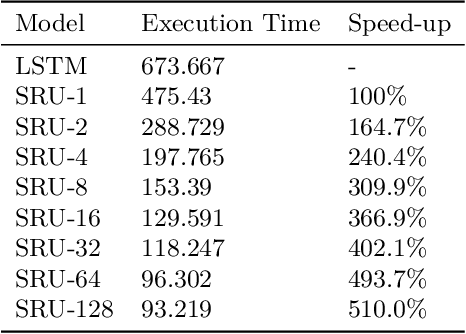

Abstract: As neural network algorithms show high performance in many applications, their efficient inference on mobile and embedded systems is of great interest. When a single-stream recurrent neural network (RNN) is executed for a personal user in an embedded system, it demands a large number of DRAM accesses because the network size is usually much bigger than the cache size and the weights of an RNN are used only once at each time step. We overcome this problem by parallelizing the algorithm and executing multiple time steps at a time. This approach also reduces the power consumption by lowering the number of DRAM accesses. QRNN (Quasi-Recurrent Neural Network) and SRU (Simple Recurrent Unit) based recurrent neural networks are used for implementation. The experiments for SRU showed speed-ups of about 300% and 930% when 4 and 16 time steps, respectively, were processed at once on an ARM CPU based system.
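
The weight-reuse argument can be illustrated with a small counting sketch. It relies on the fact that in QRNN- and SRU-style cells the input projection `W x_t` does not depend on the previous hidden state, so it can be computed for several time steps while a weight row is held locally. The function `blocked_input_projection` and the `row_loads` counter are hypothetical; the counter is only a proxy for weight fetches and does not model real DRAM traffic or the reported speed-ups.

```python
def blocked_input_projection(weight_rows, inputs, block_size):
    """Compute W @ x_t for every time step, reusing each weight row
    across a block of `block_size` consecutive time steps.

    Returns (outputs, row_loads), where row_loads counts how many times
    a weight row had to be fetched.
    """
    outputs = [[0.0] * len(weight_rows) for _ in inputs]
    row_loads = 0
    for start in range(0, len(inputs), block_size):
        block = range(start, min(start + block_size, len(inputs)))
        for r, row in enumerate(weight_rows):
            row_loads += 1  # one fetch of this row serves the whole block
            for t in block:
                outputs[t][r] = sum(w * x for w, x in zip(row, inputs[t]))
    return outputs, row_loads

# Illustrative data: 2 weight rows, 8 time steps.
weight_rows = [[0.5, -1.0], [2.0, 0.25]]
inputs = [[float(t), 1.0] for t in range(8)]
single_step_out, single_step_loads = blocked_input_projection(weight_rows, inputs, 1)
multi_step_out, multi_step_loads = blocked_input_projection(weight_rows, inputs, 4)
```

Both calls produce the same projections, but processing four time steps per block fetches each weight row a quarter as often.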

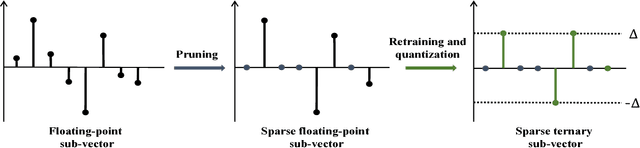

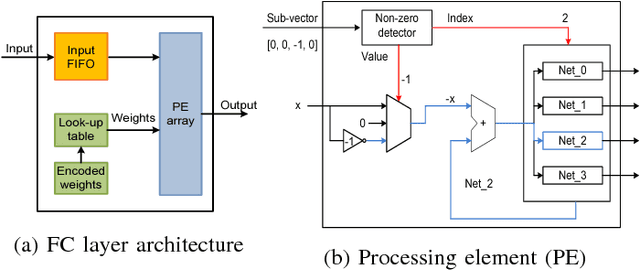

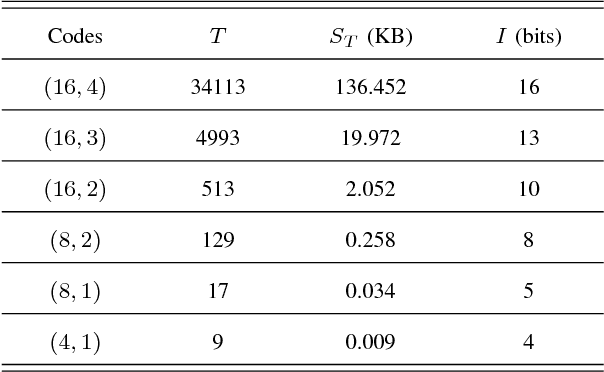

Structured Sparse Ternary Weight Coding of Deep Neural Networks for Efficient Hardware Implementations

Jul 01, 2017

Abstract: Deep neural networks (DNNs) usually demand a large number of operations for real-time inference. In particular, fully connected layers contain a large number of weights and therefore usually need many off-chip memory accesses for inference. We propose a weight compression method for deep neural networks, which allows values of +1 or -1 only at predetermined positions of the weights so that decoding using a table can be conducted easily. For example, the structured sparse (8,2) coding allows at most two non-zero values among eight weights. This method not only enables multiplication-free DNN implementations but also compresses the weight storage by up to 32× compared to floating-point networks. Weight distribution normalization and gradual pruning techniques are applied to mitigate the performance degradation. The experiments are conducted with fully connected deep neural networks and convolutional neural networks.
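
The (8,2) constraint mentioned in this abstract can be illustrated with a simple projection: keep at most two entries of an eight-weight group as +1 or -1 and zero the rest. This sketch is an assumption-laden simplification. It picks the two largest-magnitude weights, and it does not model the predetermined-position restriction, the decoding table, or the training techniques the abstract describes. The names `encode_group` and `multiply_free_dot` are hypothetical.

```python
def encode_group(weights, max_nonzero=2, threshold=0.0):
    """Project a group of weights onto codes in {-1, 0, +1} with at most
    `max_nonzero` non-zero entries (largest magnitudes are kept)."""
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]), reverse=True)
    code = [0] * len(weights)
    for i in order[:max_nonzero]:
        if abs(weights[i]) > threshold:
            code[i] = 1 if weights[i] > 0 else -1
    return code

def multiply_free_dot(code, inputs):
    """Dot product with a ternary code using only additions and subtractions."""
    acc = 0.0
    for c, x in zip(code, inputs):
        if c == 1:
            acc += x
        elif c == -1:
            acc -= x
    return acc

group = [0.1, -0.9, 0.05, 0.0, 0.3, -0.2, 0.7, 0.0]
code = encode_group(group)
result = multiply_free_dot(code, [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0])
```

Because every non-zero code is +1 or -1, the inner product reduces to adding or subtracting the selected inputs, which is what makes a multiplication-free implementation possible.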

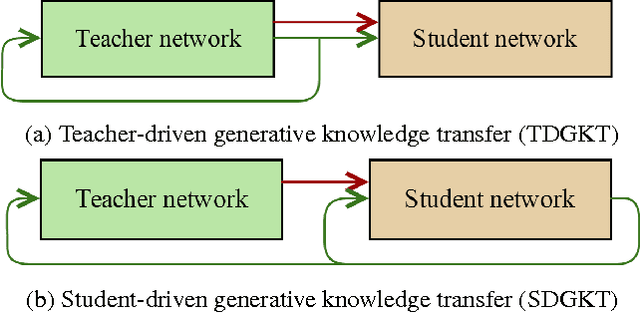

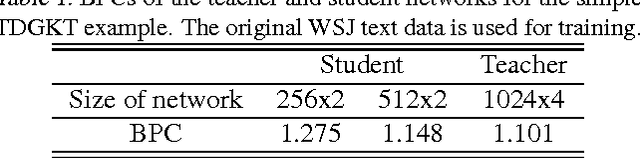

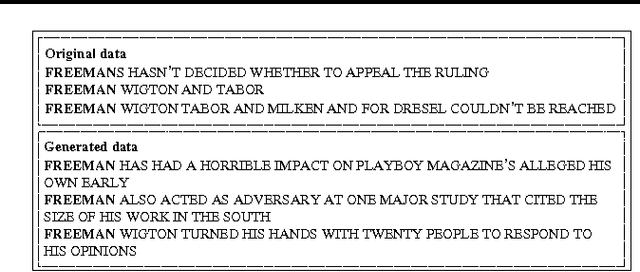

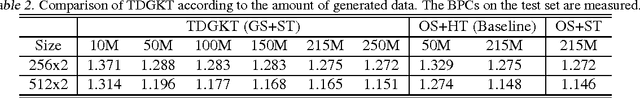

Generative Knowledge Transfer for Neural Language Models

Feb 28, 2017

Abstract: In this paper, we propose a generative knowledge transfer technique that trains an RNN-based language model (student network) using text and output probabilities generated from a previously trained RNN (teacher network). The text generation can be conducted by either the teacher or the student network. We can also improve the performance by taking the ensemble of soft labels obtained from multiple teacher networks. This method can be used for privacy-conscious language model adaptation because no user data is directly used for training. In particular, when the soft labels of multiple devices are aggregated via a trusted third party, we can expect very strong privacy protection.
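
The ensemble of soft labels can be sketched as a plain average of the teachers' next-token distributions, with the student trained by cross-entropy against the averaged distribution. Uniform averaging and the names `ensemble_soft_labels` and `soft_cross_entropy` are assumptions for illustration; the abstract does not specify how the soft labels are combined.

```python
import math

def ensemble_soft_labels(teacher_distributions):
    """Average several teacher probability distributions over the same vocabulary."""
    count = len(teacher_distributions)
    return [sum(probs) / count for probs in zip(*teacher_distributions)]

def soft_cross_entropy(soft_label, student_probs):
    """Cross-entropy of the student distribution against a soft label."""
    return -sum(p * math.log(q) for p, q in zip(soft_label, student_probs) if p > 0.0)

# Two hypothetical teachers predicting over a two-token vocabulary.
soft_label = ensemble_soft_labels([[0.5, 0.5], [1.0, 0.0]])
loss = soft_cross_entropy(soft_label, [0.75, 0.25])
```

Only the averaged probabilities reach the student in this setup, which is consistent with the abstract's point that no user text is used directly for training.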

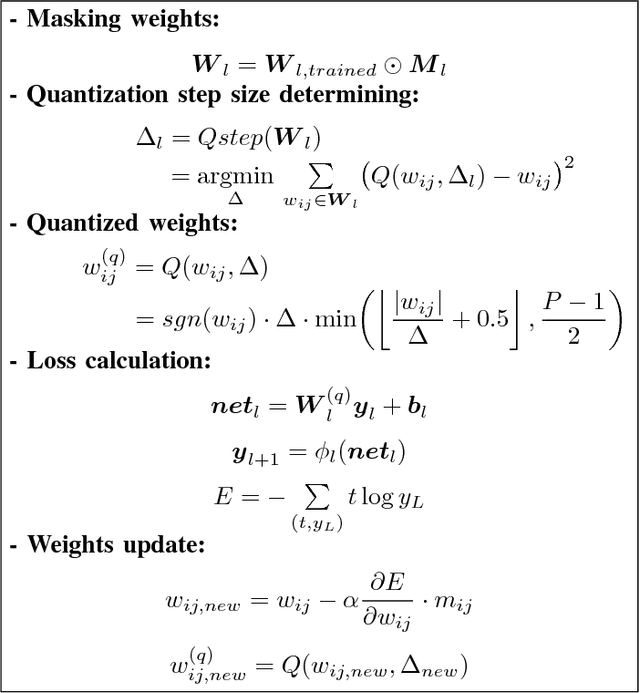

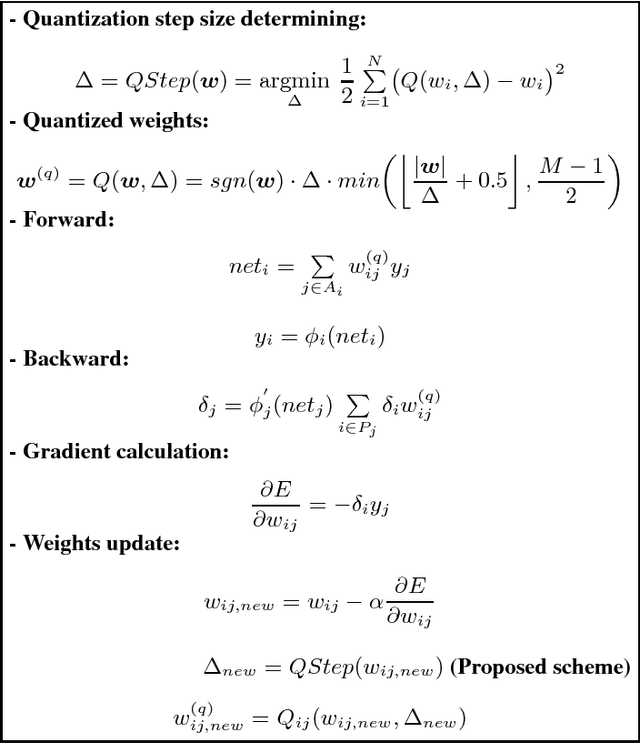

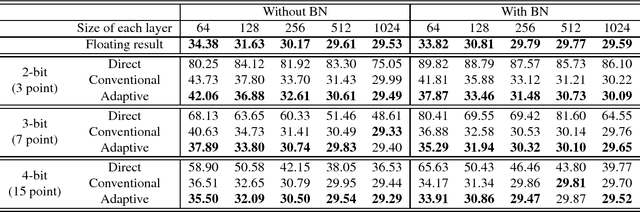

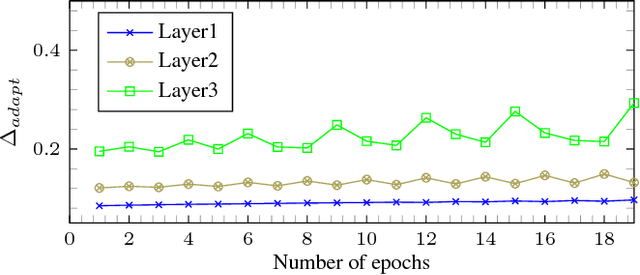

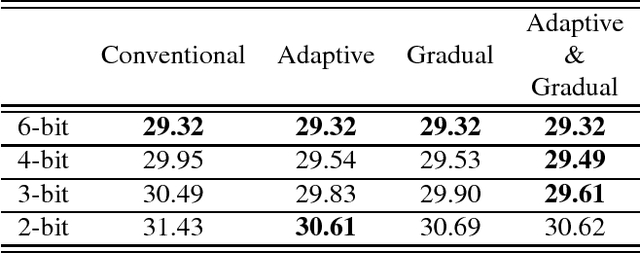

Fixed-point optimization of deep neural networks with adaptive step size retraining

Feb 27, 2017

Abstract: Fixed-point optimization of deep neural networks plays an important role in hardware-based design and low-power implementations. Many deep neural networks show fairly good performance even with 2- or 3-bit precision when quantized weights are fine-tuned by retraining. We propose an improved fixed-point optimization algorithm that estimates the quantization step size dynamically during the retraining. In addition, a gradual quantization scheme is also tested, which sequentially applies fixed-point optimizations from high to low precision. The experiments are conducted for feed-forward deep neural networks (FFDNNs), convolutional neural networks (CNNs), and recurrent neural networks (RNNs).
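
One way a quantization step size can be re-estimated from the current weights is an alternating least-squares fit: quantize with the current step, then choose the step that minimizes the squared error for those integer codes. The abstract does not state which estimator the paper uses, so the procedure below, the mean-absolute-value initialization, and the name `estimate_step_size` are assumptions. Such an estimate could be recomputed periodically during retraining.

```python
def estimate_step_size(weights, bits=2, iterations=10):
    """Estimate a step size that reduces the L2 quantization error.

    Alternates between (a) assigning integer codes z = clip(round(w / step))
    and (b) the closed-form update step = sum(w * z) / sum(z * z).
    """
    max_int = 2 ** (bits - 1) - 1
    step = sum(abs(w) for w in weights) / len(weights)  # assumed initialization
    if step == 0.0:
        return 0.0
    for _ in range(iterations):
        codes = [max(-max_int, min(max_int, round(w / step))) for w in weights]
        denom = sum(z * z for z in codes)
        if denom == 0:
            break  # every weight rounds to zero; keep the current step
        step = sum(w * z for w, z in zip(weights, codes)) / denom
    return step

step_a = estimate_step_size([0.5, -0.5, 0.5, -0.5])
step_b = estimate_step_size([0.3, 0.0, -0.3, 0.0])
```

For weights that already lie on a ternary grid, the estimate recovers the grid spacing; for general weights it settles on a step that balances clipping and rounding error.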

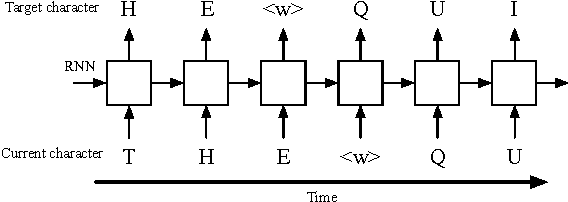

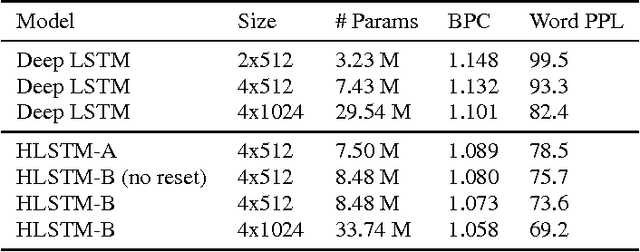

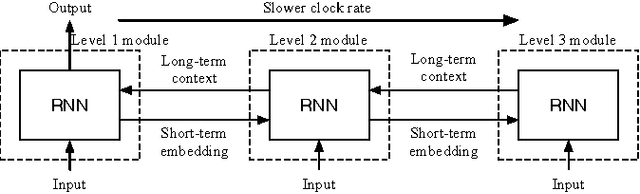

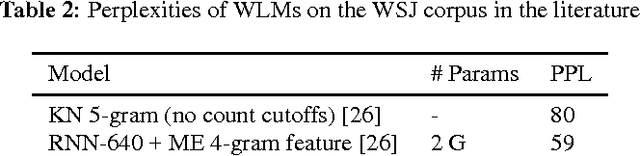

Character-Level Language Modeling with Hierarchical Recurrent Neural Networks

Feb 02, 2017

Abstract: Recurrent neural network (RNN) based character-level language models (CLMs) are extremely useful for modeling out-of-vocabulary words by nature. However, their performance is generally much worse than that of word-level language models (WLMs), since CLMs need to consider a longer history of tokens to properly predict the next one. We address this problem by proposing hierarchical RNN architectures, which consist of multiple modules with different timescales. Despite the multi-timescale structures, the input and output layers operate with the character-level clock, which allows the existing RNN CLM training approaches to be directly applicable without any modifications. Our CLM models show better perplexity than Kneser-Ney (KN) 5-gram WLMs on the One Billion Word Benchmark with only 2% of the parameters. Also, we present real-time character-level end-to-end speech recognition examples on the Wall Street Journal (WSJ) corpus, where replacing traditional mono-clock RNN CLMs with the proposed models results in better recognition accuracies even though the number of parameters is reduced to 30%.
