Shuchin Aeron

Clustering multi-way data: a novel algebraic approach

Feb 22, 2015

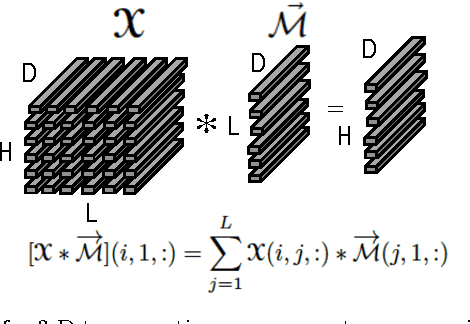

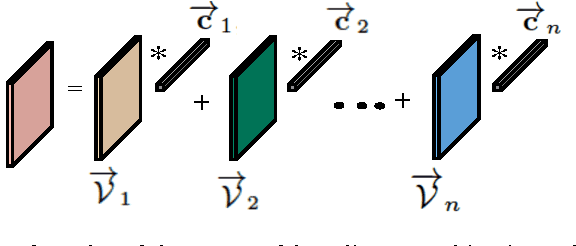

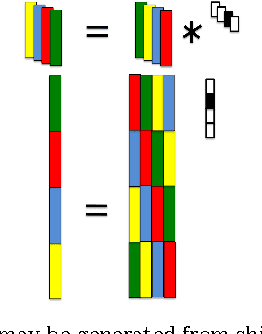

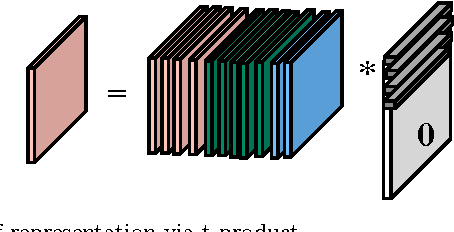

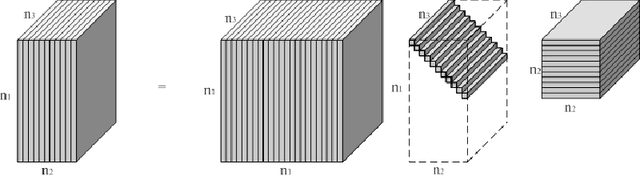

Abstract: In this paper, we develop a method for unsupervised clustering of two-way (matrix) data by combining two recent innovations from different fields: the Sparse Subspace Clustering (SSC) algorithm [10], which groups points coming from a union of subspaces into their respective subspaces, and the t-product [18], which was introduced to provide a matrix-like multiplication for third-order tensors. Our algorithm is analogous to SSC in that an "affinity" between different data points is built using a sparse self-representation of the data. Unlike SSC, we employ the t-product in the self-representation. This allows more flexibility in modeling; in fact, SSC is a special case of our method. When using the t-product, three-way arrays are treated as matrices whose elements (scalars) are n-tuples, or tubes, and convolutions take the place of scalar multiplication. This framework allows us to embed the 2-D data into a vector-space-like structure called a free module over a commutative ring. These free modules retain many properties of complex inner-product spaces, and we leverage this to provide theoretical guarantees on our algorithm. We show that, compared to its vector-space counterparts, our method (SSmC) achieves higher accuracy and is better able to cluster data with less preprocessing in some image clustering problems. In particular, we show the performance of the proposed method on the Weizmann face database, the Extended Yale B Face database, and the MNIST handwritten digits database.
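To make the "convolutions take the place of scalar multiplication" remark concrete, here is a minimal NumPy sketch of the t-product. It assumes real-valued inputs and uses the common FFT-based formulation (transform along the third axis, multiply matching frontal slices, transform back). The function name `t_product` and the toy tubes are illustrative choices, not taken from the paper.

```python
import numpy as np

def t_product(A, B):
    """t-product of A (n1 x n2 x n3) with B (n2 x n4 x n3); real inputs assumed."""
    if A.shape[1] != B.shape[0] or A.shape[2] != B.shape[2]:
        raise ValueError("incompatible tensor shapes")
    # Circular convolution along the tubes becomes an elementwise product
    # after a DFT along the third axis.
    A_hat = np.fft.fft(A, axis=2)
    B_hat = np.fft.fft(B, axis=2)
    # Ordinary matrix product of matching frontal slices in the Fourier domain.
    C_hat = np.einsum("ijk,jlk->ilk", A_hat, B_hat)
    # For real inputs the result is real up to round-off, so drop the imaginary part.
    return np.real(np.fft.ifft(C_hat, axis=2))

# Two 1 x 1 x 3 tensors are single tubes; their t-product is a circular convolution.
a = np.array([1.0, 2.0, 3.0]).reshape(1, 1, 3)
b = np.array([0.0, 1.0, 0.0]).reshape(1, 1, 3)
c = t_product(a, b).ravel()  # b is a unit shift, so c is a cyclically shifted by one
```

When the third dimension has length one, the tubes are ordinary scalars and the sketch reduces to a plain matrix product, which gives one way to picture the statement that SSC is a special case.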

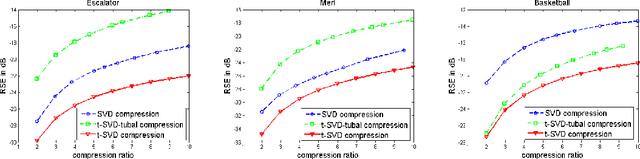

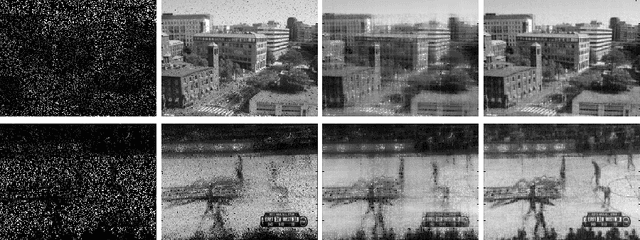

Novel methods for multilinear data completion and de-noising based on tensor-SVD

Oct 30, 2014

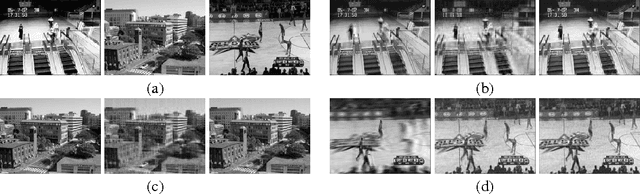

Abstract: In this paper we propose novel methods for completion (from limited samples) and de-noising of multilinear (tensor) data and, as an application, consider 3-D and 4-D (color) video data completion and de-noising. We exploit the recently proposed tensor-Singular Value Decomposition (t-SVD) [11]. Based on t-SVD, the notion of multilinear rank and a related tensor nuclear norm were proposed in [11] to characterize the informational and structural complexity of multilinear data. We first show that videos with linear camera motion can be represented more efficiently using t-SVD than with approaches based on vectorizing or flattening the tensors. Since efficiency in representation implies efficiency in recovery, we outline a tensor nuclear norm penalized algorithm for video completion from missing entries. Application of the proposed algorithm to video recovery from missing entries is shown to yield superior performance over existing methods. We also consider the problem of tensor robust Principal Component Analysis (PCA) for de-noising 3-D video data from sparse random corruptions. We show superior performance of our method compared to the matrix robust PCA adapted to this setting as proposed in [4].
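As a rough illustration of the quantities involved, the sketch below computes a tensor nuclear norm as the sum of the singular values of all frontal slices after a DFT along the third axis, together with the matching singular value thresholding step that nuclear-norm-penalized solvers typically iterate. The normalization (some authors divide by the length of the third dimension), the function names, and the thresholding step itself are assumptions for illustration. This is not the paper's full completion or robust PCA algorithm.

```python
import numpy as np

def tensor_nuclear_norm(X):
    """Sum of singular values of the Fourier-domain frontal slices (unnormalized)."""
    X_hat = np.fft.fft(X, axis=2)
    return float(sum(np.linalg.svd(X_hat[:, :, k], compute_uv=False).sum()
                     for k in range(X_hat.shape[2])))

def t_svt(X, tau):
    """Soft-threshold the singular values of every Fourier-domain slice by tau."""
    X_hat = np.fft.fft(X, axis=2)
    Y_hat = np.empty_like(X_hat)
    for k in range(X_hat.shape[2]):
        U, s, Vh = np.linalg.svd(X_hat[:, :, k], full_matrices=False)
        # Scale the columns of U by the shrunken singular values, then recombine.
        Y_hat[:, :, k] = (U * np.maximum(s - tau, 0.0)) @ Vh
    # Real input gives a real result up to round-off.
    return np.real(np.fft.ifft(Y_hat, axis=2))

# Hypothetical data: a small random tensor standing in for a video clip.
rng = np.random.default_rng(0)
video = rng.standard_normal((4, 5, 3))
shrunk = t_svt(video, tau=0.5)
```

Thresholding can only reduce the norm, so `tensor_nuclear_norm(shrunk)` should not exceed `tensor_nuclear_norm(video)`.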

First Order Methods for Robust Non-negative Matrix Factorization for Large Scale Noisy Data

Mar 24, 2014

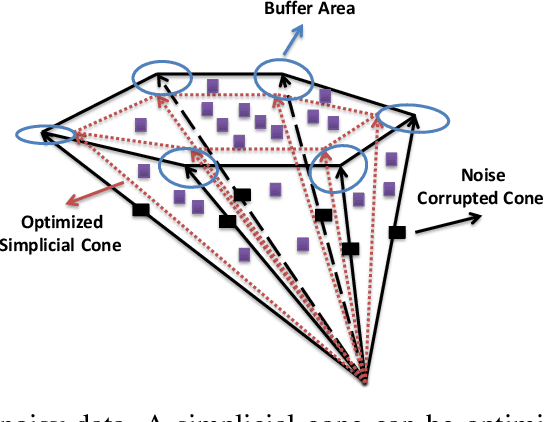

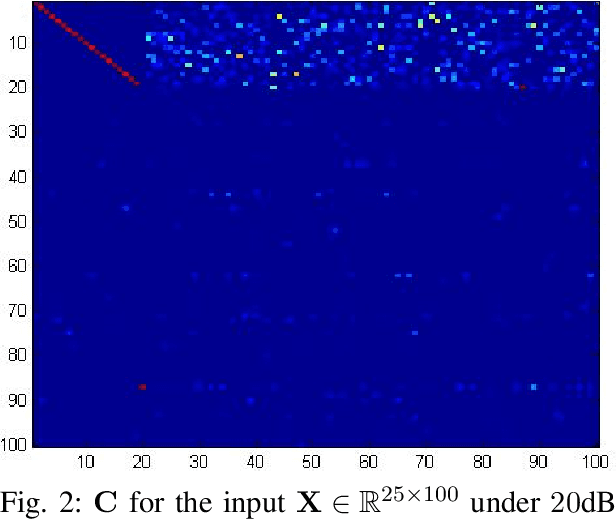

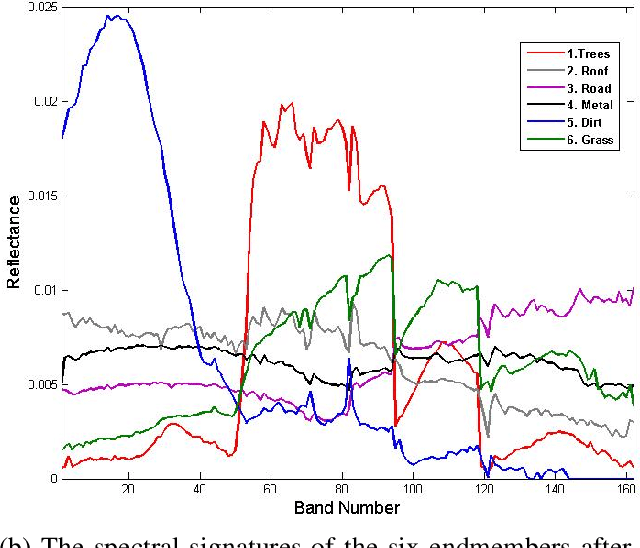

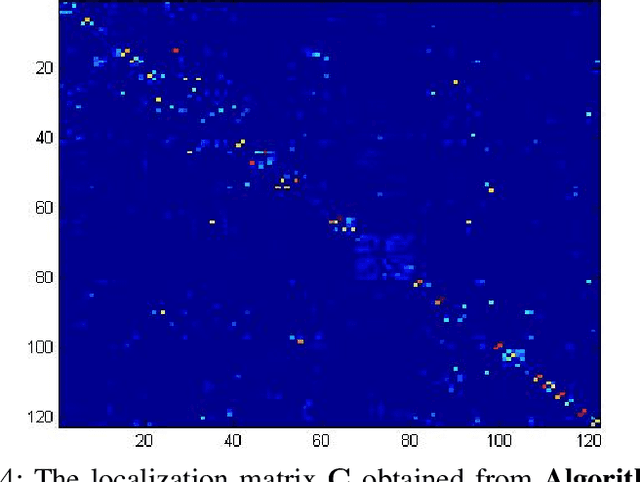

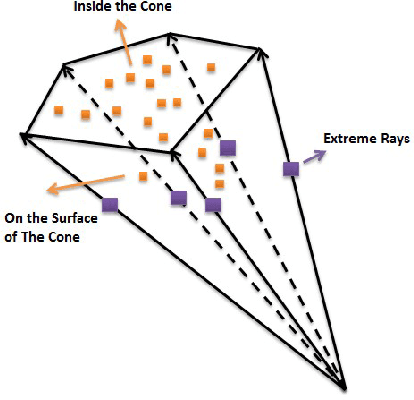

Abstract: Nonnegative matrix factorization (NMF) has been shown to be identifiable under the separability assumption, under which all the columns (or rows) of the input data matrix belong to the convex cone generated by only a few of these columns (or rows) [1]. In real applications, however, this separability assumption is hard to satisfy. Following [4] and [5], in this paper we look at the Linear Programming (LP) based reformulation to locate the extreme rays of the convex cone, but in a noisy setting. Furthermore, to deal with large-scale data, we employ First-Order Methods (FOM) to mitigate the computational complexity of the LP, which primarily results from a large number of constraints. We show the performance of the algorithm on real and synthetic data sets.
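The separability assumption can be illustrated on a toy matrix whose columns are all nonnegative combinations of three "anchor" columns. The sketch below does not implement the LP reformulation or the first-order methods described in the abstract. It swaps in the successive projection algorithm, a different and simpler greedy technique, only to show what locating the extreme rays means in the noiseless case. The matrices `W` and `H` and the function name are made up for the example.

```python
import numpy as np

def successive_projection(X, r):
    """Greedy search for r extreme columns of a nonnegative, separable X.

    Stand-in technique (successive projection), NOT the LP-based method.
    Assumes every column of X has a positive sum and the anchors are
    linearly independent.
    """
    # Scale columns to unit l1 norm so the cone becomes a convex hull.
    R = X / X.sum(axis=0)
    anchors = []
    for _ in range(r):
        # The largest l2 norm over a convex hull is attained at a vertex.
        j = int(np.argmax((R ** 2).sum(axis=0)))
        anchors.append(j)
        u = R[:, j] / np.linalg.norm(R[:, j])
        # Project every column onto the orthogonal complement of the pick.
        R = R - np.outer(u, u @ R)
    return anchors

# Hypothetical separable data: columns 1, 2 and 4 of X are the anchors (columns of W).
W = np.array([[2.0, 0.0, 0.0],
              [0.0, 1.0, 0.0],
              [0.0, 0.0, 4.0]])
H = np.array([[0.5, 1.0, 0.0, 1.0, 0.0],
              [0.5, 0.0, 1.0, 1.0, 0.0],
              [0.0, 0.0, 0.0, 1.0, 1.0]])
X = W @ H
anchors = sorted(successive_projection(X, 3))
```

With noise, this greedy selection can pick the wrong columns, which is the kind of situation a robust formulation is meant to address.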

Robust Large Scale Non-negative Matrix Factorization using Proximal Point Algorithm

Jan 08, 2014

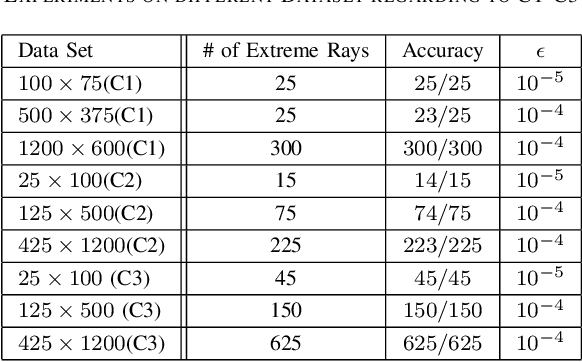

Abstract: A robust algorithm for non-negative matrix factorization (NMF) is presented in this paper for large-scale data that satisfy the separability assumption. In particular, we modify the Linear Programming (LP) algorithm of [9] by introducing a reduced set of constraints for exact NMF. In contrast to previous approaches, the proposed algorithm does not require knowledge of the factorization rank (the number of extreme rays [3] or topics [7]). Furthermore, motivated by a similar problem arising in the context of metabolic network analysis [13], we consider an entirely different regime where the number of extreme rays or topics can be much larger than the dimension of the data vectors. The performance of the algorithm on different synthetic data sets is reported.

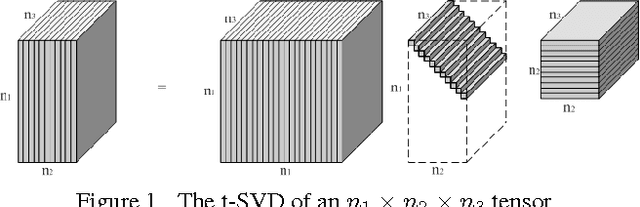

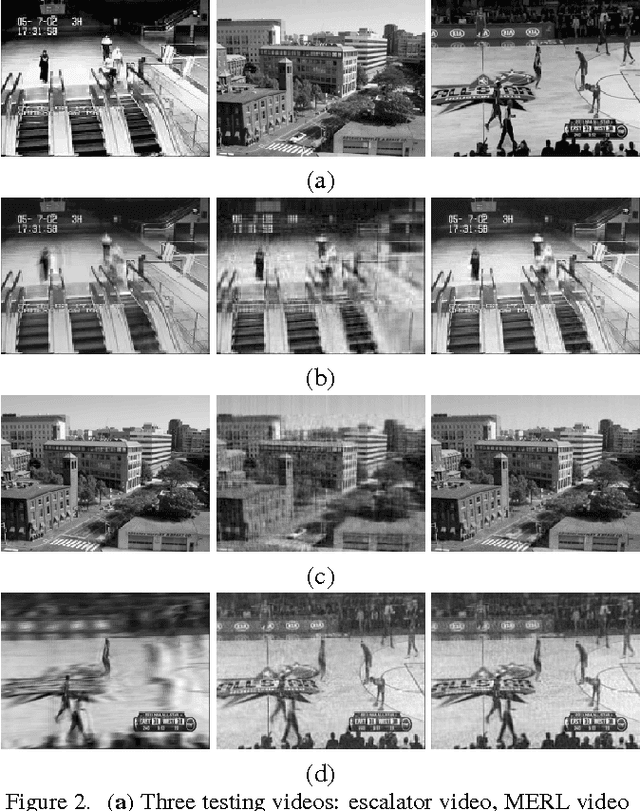

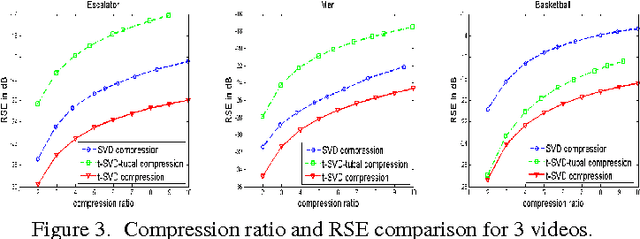

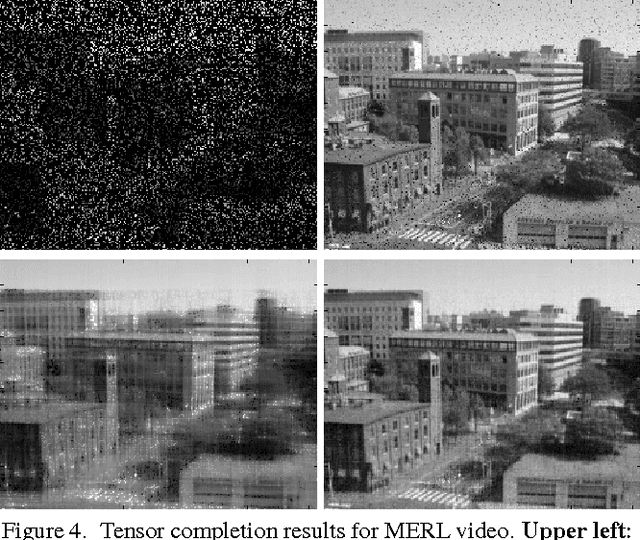

Novel Factorization Strategies for Higher Order Tensors: Implications for Compression and Recovery of Multi-linear Data

Oct 31, 2013

Abstract: In this paper we propose novel methods for compression and recovery of multilinear data under limited sampling. We exploit the recently proposed tensor-Singular Value Decomposition (t-SVD) [1], which is a group-theoretic framework for tensor decomposition. In contrast to popular existing tensor decomposition techniques such as higher-order SVD (HOSVD), t-SVD has optimality properties similar to those of the truncated SVD for matrices. Based on t-SVD, we first construct novel tensor-rank-like measures to characterize the informational and structural complexity of multilinear data. Following that, we outline a complexity-penalized algorithm for tensor completion from missing entries. As an application, 3-D and 4-D (color) video data compression and recovery are considered. We show that videos with linear camera motion can be represented more efficiently using t-SVD than with traditional approaches based on vectorizing or flattening the tensors. Application of the proposed tensor completion algorithm to video recovery from missing entries is shown to yield superior performance over existing methods. In conclusion, we point out several research directions and implications for online prediction of multilinear data.
