Shoichi Koyama

Spatial Active Noise Control Method Based On Sound Field Interpolation From Reference Microphone Signals

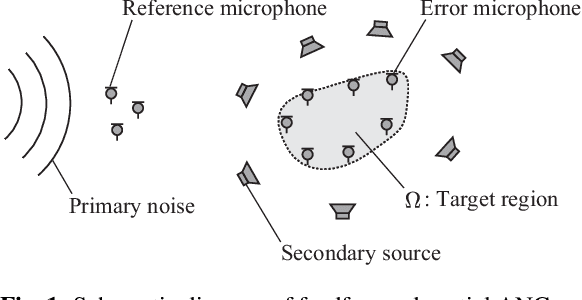

Mar 28, 2023

Abstract:A spatial active noise control (ANC) method based on the interpolation of a sound field from reference microphone signals is proposed. In most current spatial ANC methods, a sufficient number of error microphones are required to reduce noise over the target region because the sound field is estimated from error microphone signals. However, in practical applications, it is preferable that the number of error microphones is as small as possible to keep a space in the target region for ANC users. We propose to interpolate the sound field from reference microphones, which are normally placed outside the target region, instead of the error microphones. We derive a fixed filter for spatial noise reduction on the basis of the kernel ridge regression for sound field interpolation. Furthermore, to compensate for estimation errors, we combine the proposed fixed filter with multichannel ANC based on a transition of the control filter using the error microphone signals. Numerical experimental results indicate that regional noise can be sufficiently reduced by the proposed methods even when the number of error microphones is particularly small.
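As a rough illustration of kernel ridge regression for sound field interpolation, the sketch below estimates pressure at arbitrary points from a few microphone signals at a single frequency. It is not the authors' fixed-filter derivation: the kernel (the zeroth-order spherical Bessel function of `k * distance`, a common choice for 3D fields satisfying the Helmholtz equation), the function names `sinc_kernel` and `krr_interpolation_weights`, and the regularization value `lam` are all assumptions made for illustration.

```python
import numpy as np


def sinc_kernel(r1, r2, k):
    """Kernel j0(k * ||r1 - r2||) between two sets of 3D points (assumed kernel)."""
    dist = np.linalg.norm(r1[:, None, :] - r2[None, :, :], axis=-1)
    # np.sinc(x) = sin(pi x) / (pi x), so rescale the argument by pi.
    return np.sinc(k * dist / np.pi)


def krr_interpolation_weights(mic_pos, eval_pos, k, lam=1e-3):
    """Return Z such that (estimated pressure at eval_pos) = Z @ mic_signals.

    mic_pos: (M, 3) microphone positions, eval_pos: (N, 3) evaluation points,
    k: wavenumber, lam: ridge regularization (hypothetical default).
    """
    K = sinc_kernel(mic_pos, mic_pos, k)        # (M, M) Gram matrix
    kappa = sinc_kernel(eval_pos, mic_pos, k)   # (N, M) cross-kernel
    # Z = kappa (K + lam I)^{-1}; K is symmetric, so solve the transposed system.
    return np.linalg.solve(K + lam * np.eye(len(mic_pos)), kappa.T).T


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    k = 2 * np.pi * 300.0 / 343.0               # 300 Hz, c = 343 m/s assumed
    mics = rng.uniform(-0.5, 0.5, size=(20, 3))
    points = rng.uniform(-0.2, 0.2, size=(5, 3))
    direction = np.array([1.0, 0.0, 0.0])
    signals = np.exp(1j * k * mics @ direction)  # plane-wave observations
    estimate = krr_interpolation_weights(mics, points, k) @ signals
    print(np.abs(estimate - np.exp(1j * k * points @ direction)))
```

Because `Z` depends only on geometry and frequency, it can be computed once in advance, which is what makes a fixed (non-adaptive) filter plausible in this kind of scheme.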

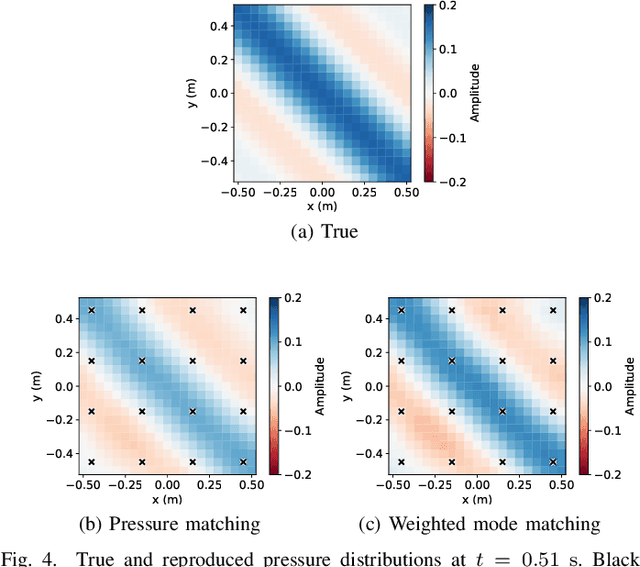

Weighted Pressure and Mode Matching for Sound Field Reproduction: Theoretical and Experimental Comparisons

Mar 23, 2023

Abstract:Two sound field reproduction methods, weighted pressure matching and weighted mode matching, are theoretically and experimentally compared. Weighted pressure matching and weighted mode matching are generalizations of conventional pressure matching and mode matching, respectively. Both methods are derived by introducing a weighting matrix in the pressure and mode matching. The weighting matrix in the weighted pressure matching is defined on the basis of the kernel interpolation of the sound field from pressure at a discrete set of control points. In the weighted mode matching, the weighting matrix is defined by a regional integration of spherical wavefunctions. It is theoretically shown that the weighted pressure matching is a special case of the weighted mode matching by infinite-dimensional harmonic analysis for estimating expansion coefficients from pressure observations. The differences between the two methods are discussed through experiments.

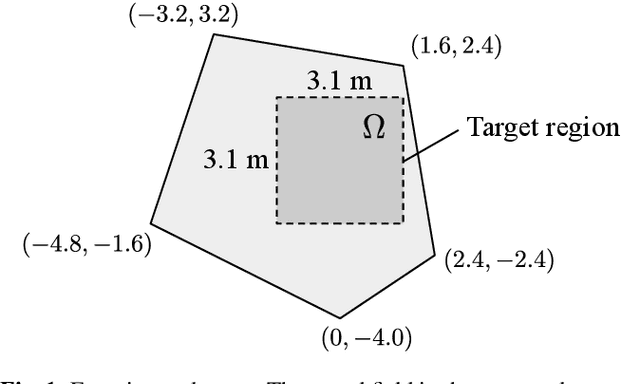

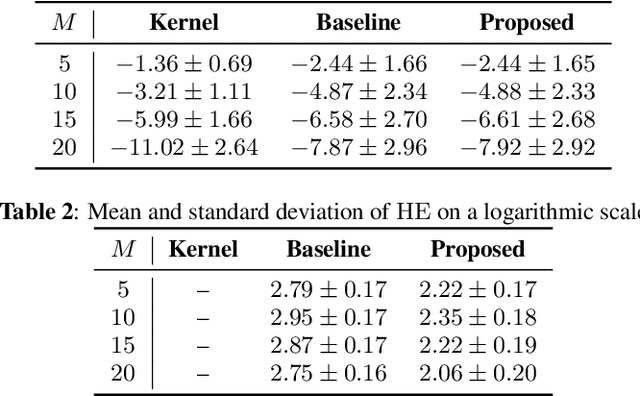

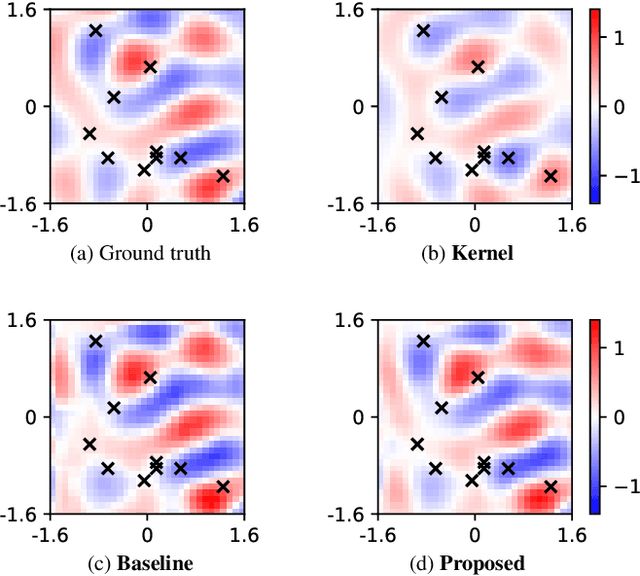

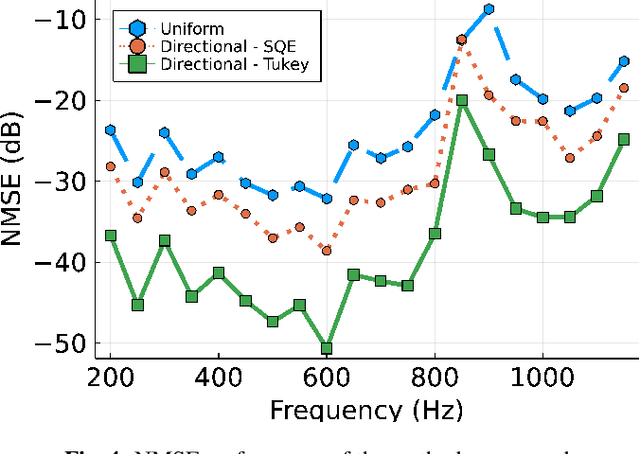

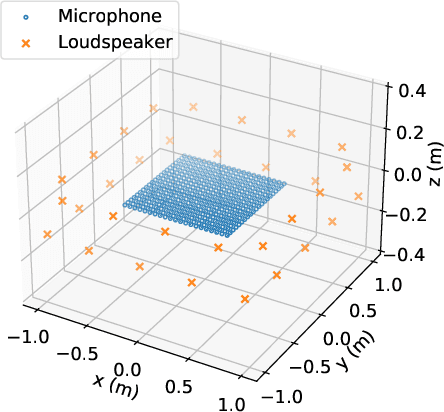

Kernel interpolation of acoustic transfer functions with adaptive kernel for directed and residual reverberations

Mar 07, 2023

Abstract:An interpolation method for region-to-region acoustic transfer functions (ATFs) based on kernel ridge regression with an adaptive kernel is proposed. Most current ATF interpolation methods do not incorporate the acoustic properties for which measurements are performed. Our proposed method is based on a separate adaptation of directional weighting functions to directed and residual reverberations, which are used for adapting kernel functions. Thus, the proposed method can not only impose constraints on fundamental acoustic properties, but can also adapt to the acoustic environment. Numerical experimental results indicated that our proposed method outperforms the current methods in terms of interpolation accuracy, especially at high frequencies.

* To appear in ICASSP 2023

Weighted Pressure Matching Based on Kernel Interpolation For Sound Field Reproduction

Oct 26, 2022

Abstract:A sound field reproduction method called weighted pressure matching is proposed. Sound field reproduction is aimed at synthesizing the desired sound field using multiple loudspeakers inside a target region. Optimization-based methods are derived from the minimization of errors between synthesized and desired sound fields, which enable the use of an arbitrary array geometry in contrast with integral-equation-based methods. Pressure matching is widely used in the optimization-based sound field reproduction methods because of its simplicity of implementation. Its cost function is defined as the synthesis errors at multiple control points inside the target region; then, the driving signals of the loudspeakers are obtained by solving a least-squares problem. However, in pressure matching, the region between the control points is not taken into consideration. We define the cost function as the regional integration of the synthesis error over the target region. On the basis of the kernel interpolation of the sound field, this cost function is represented as the weighted square error of the synthesized pressures at the control points. Experimental results indicate that the proposed weighted pressure matching outperforms conventional pressure matching.
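The least-squares step described in this abstract can be sketched as a regularized weighted least-squares solve. The following is only a minimal illustration: the function name `pressure_matching`, the Tikhonov term `reg`, and the way the weighting matrix `W` is passed in are assumptions. In the paper, `W` is obtained from kernel interpolation and regional integration, which is not reproduced here; with `W` set to the identity the code reduces to conventional pressure matching.

```python
import numpy as np


def pressure_matching(G, p_des, W=None, reg=1e-3):
    """Driving signals d minimizing (G d - p)^H W (G d - p) + reg * ||d||^2.

    G: (M, L) transfer functions from L loudspeakers to M control points.
    p_des: (M,) desired pressures at the control points.
    W: (M, M) Hermitian positive semidefinite weighting matrix (assumed given);
       None means identity, i.e. conventional pressure matching.
    reg: Tikhonov regularization parameter (hypothetical default).
    """
    M, L = G.shape
    if W is None:
        W = np.eye(M)
    GhW = G.conj().T @ W
    A = GhW @ G + reg * np.eye(L)   # normal-equation matrix
    b = GhW @ p_des
    return np.linalg.solve(A, b)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    G = rng.standard_normal((8, 4)) + 1j * rng.standard_normal((8, 4))
    p_des = rng.standard_normal(8) + 1j * rng.standard_normal(8)
    d = pressure_matching(G, p_des)
    print(np.linalg.norm(G @ d - p_des))
```

Keeping the weighting as a plain matrix argument means the same solver covers both the conventional and the weighted variant; only the construction of `W` differs.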

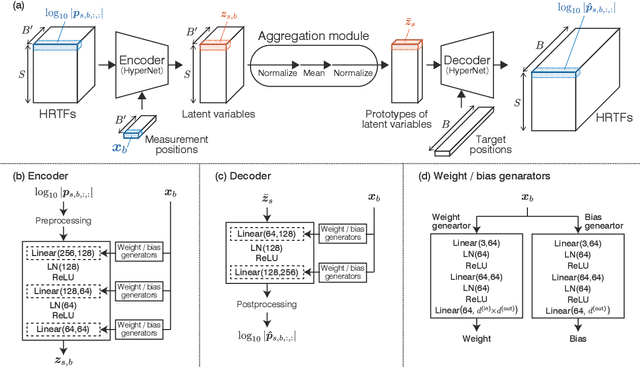

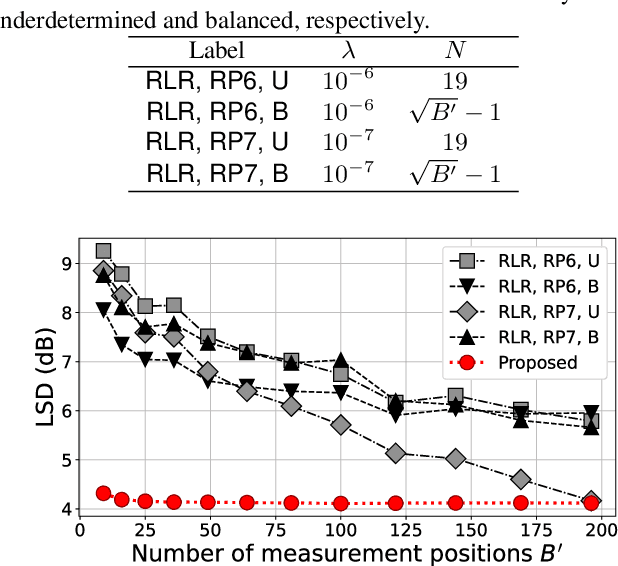

Head-Related Transfer Function Interpolation from Spatially Sparse Measurements Using Autoencoder with Source Position Conditioning

Jul 22, 2022

Abstract:We propose a method of head-related transfer function (HRTF) interpolation from sparsely measured HRTFs using an autoencoder with source position conditioning. The proposed method is drawn from an analogy between an HRTF interpolation method based on regularized linear regression (RLR) and an autoencoder. Through this analogy, we found the key feature of the RLR-based method: HRTFs are decomposed into source-position-dependent and source-position-independent factors. On the basis of this finding, we design the encoder and decoder so that their weights and biases are generated from source positions. Furthermore, we introduce an aggregation module that reduces the dependence of latent variables on source position for obtaining a source-position-independent representation of each subject. Numerical experiments show that the proposed method can work well for unseen subjects and, with only one-eighth of the measurements, achieve interpolation performance comparable to that of the RLR-based method.

Physics-informed convolutional neural network with bicubic spline interpolation for sound field estimation

Jul 22, 2022

Abstract:A sound field estimation method based on a physics-informed convolutional neural network (PICNN) using spline interpolation is proposed. Most of the sound field estimation methods are based on wavefunction expansion, making the estimated function satisfy the Helmholtz equation. However, these methods rely only on physical properties; thus, they suffer from a significant deterioration of accuracy when the number of measurements is small. Recent learning-based methods based on neural networks have advantages in estimating from sparse measurements when training data are available. However, since physical properties are not taken into consideration, the estimated function can be a physically infeasible solution. We propose the application of PICNN to the sound field estimation problem by using a loss function that penalizes deviation from the Helmholtz equation. Since the output of CNN is a spatially discretized pressure distribution, it is difficult to directly evaluate the Helmholtz-equation loss function. Therefore, we incorporate bicubic spline interpolation in the PICNN framework. Experimental results indicated that accurate and physically feasible estimation from sparse measurements can be achieved with the proposed method.
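To make the idea of a loss that penalizes deviation from the Helmholtz equation concrete, the sketch below evaluates the residual of `(Laplacian + k^2) p` on a uniform 2D grid. Note that it swaps in a plain 5-point finite-difference Laplacian rather than the bicubic spline interpolation used in the paper, and it is written in NumPy rather than as a differentiable network loss. The function names `helmholtz_residual` and `helmholtz_loss` and the grid spacing are assumptions for illustration.

```python
import numpy as np


def helmholtz_residual(p, h, k):
    """Residual (Laplacian + k^2) p at interior nodes of a uniform 2D grid.

    p: (Nx, Ny) complex pressure samples, h: grid spacing, k: wavenumber.
    Uses a 5-point finite-difference stencil (a stand-in, not the spline approach).
    """
    center = p[1:-1, 1:-1]
    lap = (p[2:, 1:-1] + p[:-2, 1:-1] + p[1:-1, 2:] + p[1:-1, :-2]
           - 4.0 * center) / h**2
    return lap + k**2 * center


def helmholtz_loss(p, h, k):
    """Mean squared magnitude of the Helmholtz residual."""
    return np.mean(np.abs(helmholtz_residual(p, h, k)) ** 2)


if __name__ == "__main__":
    h, k = 0.01, 2.0
    x = np.arange(50) * h
    X, Y = np.meshgrid(x, x, indexing="ij")
    plane_wave = np.exp(1j * k * X)        # satisfies the Helmholtz equation
    wrong_field = np.exp(1j * 3.0 * X)     # wavenumber mismatch
    print(helmholtz_loss(plane_wave, h, k), helmholtz_loss(wrong_field, h, k))
```

A field that obeys the Helmholtz equation at wavenumber `k` gives a loss near zero (up to discretization error), while a field with a mismatched wavenumber is penalized heavily.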

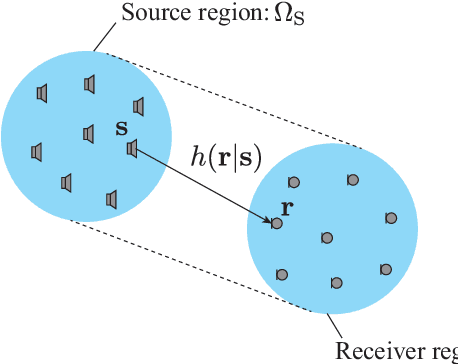

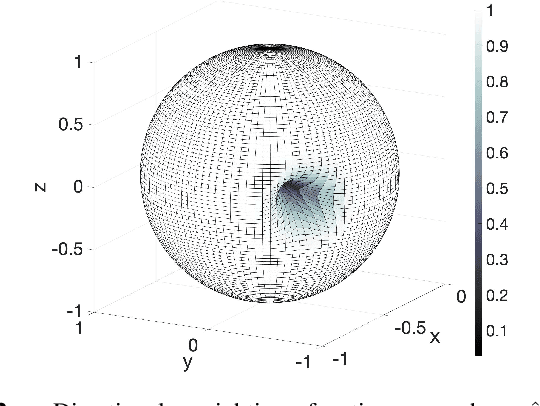

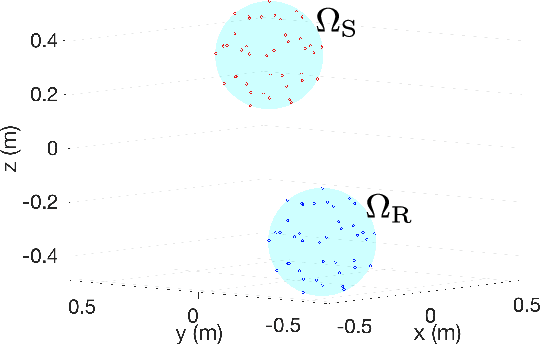

Region-to-region kernel interpolation of acoustic transfer function with directional weighting

May 05, 2022

Abstract:A method of interpolating the acoustic transfer function (ATF) between regions that takes into account both the physical properties of the ATF and the directionality of region configurations is proposed. Most spatial ATF interpolation methods are limited to estimation in the region of receivers. A kernel method for region-to-region ATF interpolation makes it possible to estimate the ATFs for both source and receiver regions from a discrete set of ATF measurements. We newly formulate the reproducing kernel Hilbert space and associated kernel function incorporating directional weight to enhance the interpolation accuracy. We also investigate hyperparameter optimization methods for this kernel function. Numerical experiments indicate that the proposed method outperforms the method without the use of directional weighting.

* To appear in ICASSP 2022 - 2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)

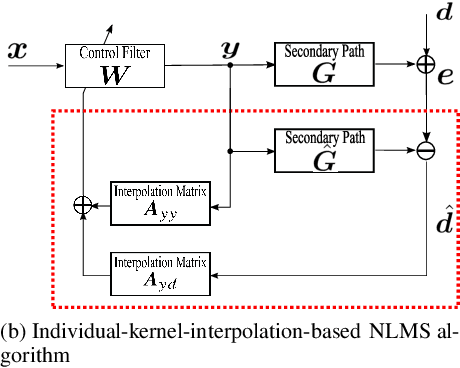

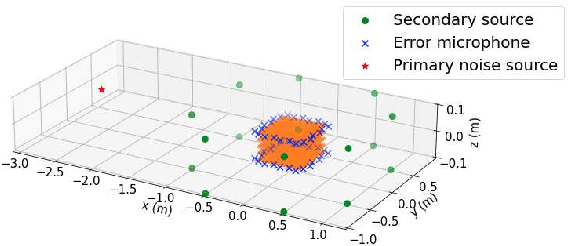

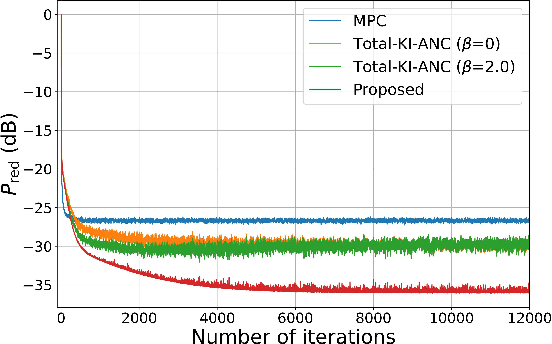

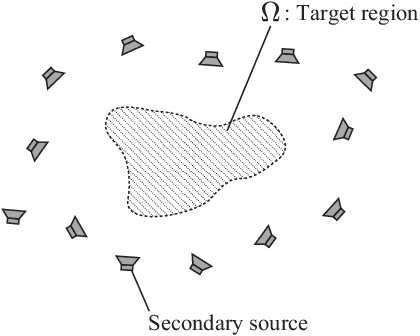

Spatial active noise control based on individual kernel interpolation of primary and secondary sound fields

Feb 10, 2022

Abstract:A spatial active noise control (ANC) method based on the individual kernel interpolation of primary and secondary sound fields is proposed. Spatial ANC is aimed at cancelling unwanted primary noise within a continuous region by using multiple secondary sources and microphones. A method based on the kernel interpolation of a sound field makes it possible to attenuate noise over the target region with flexible array geometry. Furthermore, by using the kernel function with directional weighting, prior information on primary noise source directions can be taken into consideration. However, whereas the sound field to be interpolated is a superposition of primary and secondary sound fields, the directional weight for the primary noise source was applied to the total sound field in previous work; therefore, the performance improvement was limited. We propose a method of individually interpolating the primary and secondary sound fields and formulate a normalized least-mean-square algorithm based on this interpolation method. Experimental results indicate that the proposed method outperforms the method based on total kernel interpolation.

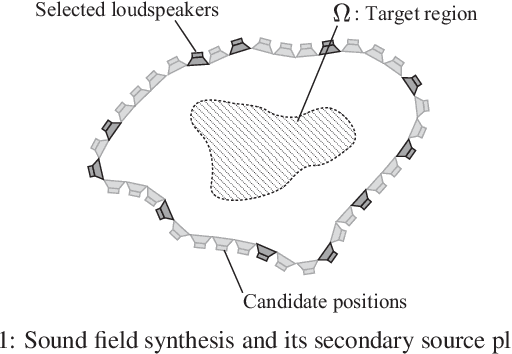

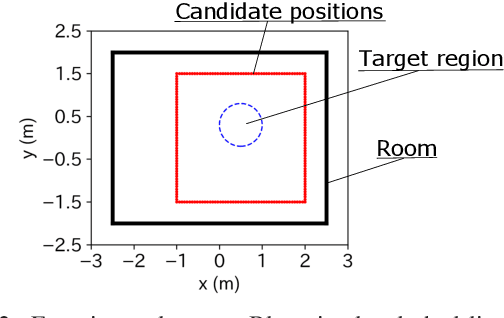

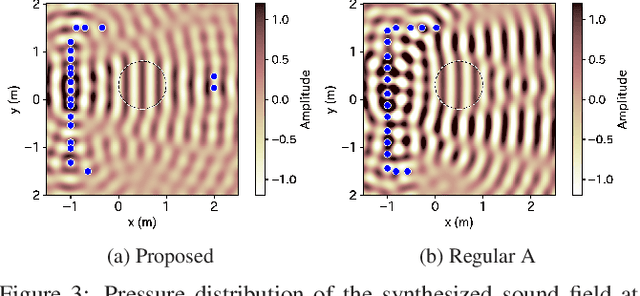

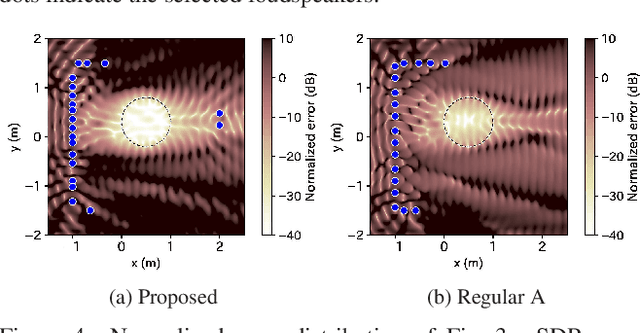

Mean-square-error-based secondary source placement in sound field synthesis with prior information on desired field

Dec 10, 2021

Abstract:A method of optimizing secondary source placement in sound field synthesis is proposed. Such an optimization method will be useful when the allowable placement region and available number of loudspeakers are limited. We formulate a mean-square-error-based cost function, incorporating the statistical properties of possible desired sound fields, for general linear-least-squares-based sound field synthesis methods, including pressure matching and (weighted) mode matching, whereas most of the current methods are applicable only to the pressure-matching method. An efficient greedy algorithm for minimizing the proposed cost function is also derived. Numerical experiments indicated that the placement optimized by the proposed method achieves higher reproduction accuracy than the empirically used regular placement.

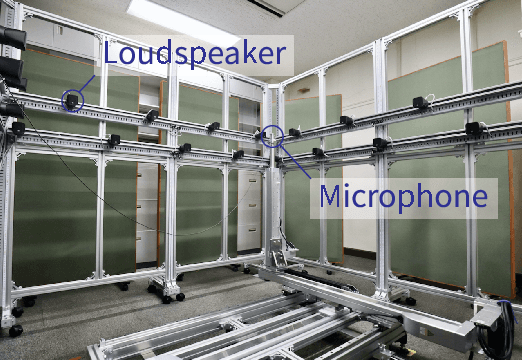

Sound Field Reproduction With Weighted Mode Matching and Infinite-Dimensional Harmonic Analysis: An Experimental Evaluation

Nov 22, 2021

Abstract:Sound field reproduction methods based on numerical optimization, which aim to minimize the error between synthesized and desired sound fields, are useful in many practical scenarios because of their flexibility in the array geometry of loudspeakers. However, the reproduction performance of these methods in a practical environment has not been sufficiently investigated. We evaluate weighted mode matching, which is a sound field reproduction method based on the spherical wavefunction expansion of the sound field, in comparison with conventional pressure matching. We also introduce a method of infinite-dimensional harmonic analysis for estimating the expansion coefficients of the sound field from microphone measurements. Experimental results indicated that weighted mode matching using the expansion coefficients of the transfer functions estimated by the infinite-dimensional harmonic analysis outperforms conventional pressure matching, especially when the number of microphones is small.
