Shivam Kalra

Learning Opposites Using Neural Networks

Sep 16, 2016

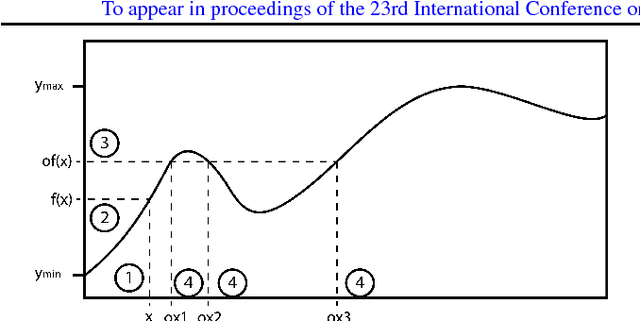

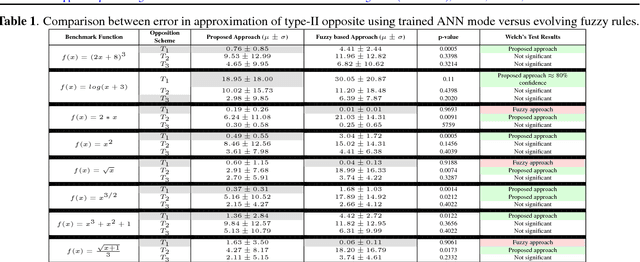

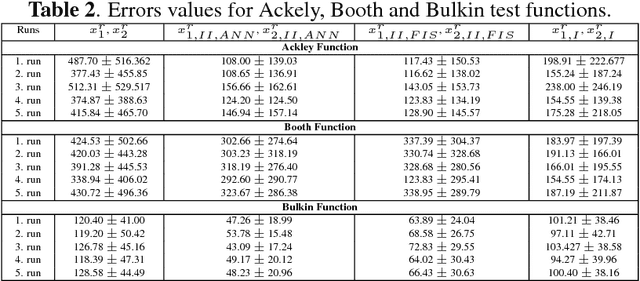

Abstract:Many research works have successfully extended algorithms such as evolutionary algorithms, reinforcement agents and neural networks using "opposition-based learning" (OBL). Two types of the "opposites" have been defined in the literature, namely \textit{type-I} and \textit{type-II}. The former are linear in nature and applicable to the variable space, hence easy to calculate. On the other hand, type-II opposites capture the "oppositeness" in the output space. In fact, type-I opposites are considered a special case of type-II opposites where inputs and outputs have a linear relationship. However, in many real-world problems, inputs and outputs do in fact exhibit a nonlinear relationship. Therefore, type-II opposites are expected to be better in capturing the sense of "opposition" in terms of the input-output relation. In the absence of any knowledge about the problem at hand, there seems to be no intuitive way to calculate the type-II opposites. In this paper, we introduce an approach to learn type-II opposites from the given inputs and their outputs using the artificial neural networks (ANNs). We first perform \emph{opposition mining} on the sample data, and then use the mined data to learn the relationship between input $x$ and its opposite $\breve{x}$. We have validated our algorithm using various benchmark functions to compare it against an evolving fuzzy inference approach that has been recently introduced. The results show the better performance of a neural approach to learn the opposites. This will create new possibilities for integrating oppositional schemes within existing algorithms promising a potential increase in convergence speed and/or accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge