Morteza Ashraphijuo

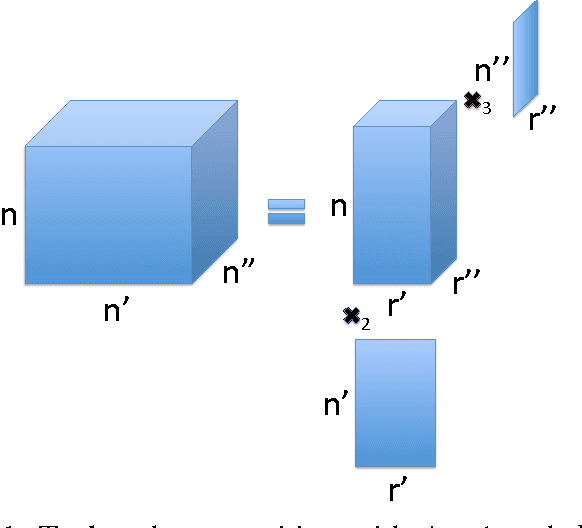

Deterministic and Probabilistic Conditions for Finite Completability of Low-Tucker-Rank Tensor

Feb 28, 2018

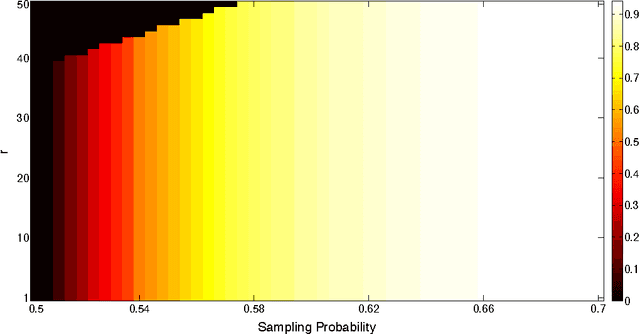

Abstract: We investigate the fundamental conditions on the sampling pattern, i.e., the locations of the sampled entries, for finite completability of a low-rank tensor given some components of its Tucker rank. To find deterministic necessary and sufficient conditions, we propose an algebraic geometric analysis on the Tucker manifold, which allows us to incorporate multiple rank components, in contrast with the conventional geometric approaches on the Grassmannian manifold. This analysis characterizes the algebraic independence of a set of polynomials defined by the sampling pattern, which is closely related to finite completion. We then study probabilistic conditions and give a lower bound on the sampling probability that guarantees the proposed deterministic conditions on the sampling pattern hold with high probability. Furthermore, using the proposed geometric approach, we give a sufficient condition on the sampling pattern that ensures there exists exactly one completion of the sampled tensor.
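As a small illustration of the rank notion used in this abstract, the sketch below builds a tensor from a random Tucker core and factor matrices and reads off its Tucker rank as the ranks of its mode matricizations. The dimensions, the rank vector, and the helper names `mode_unfold` and `tucker_rank` are assumptions made for this example; nothing here reproduces the paper's analysis of sampling patterns.

```python
import numpy as np

def mode_unfold(tensor, mode):
    """Mode matricization: rows index the chosen mode, columns index all other modes."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)

def tucker_rank(tensor):
    """Tucker rank vector: the rank of each mode matricization."""
    return tuple(
        int(np.linalg.matrix_rank(mode_unfold(tensor, m)))
        for m in range(tensor.ndim)
    )

# Hypothetical sizes chosen only for illustration.
rng = np.random.default_rng(0)
dims, ranks = (6, 7, 8), (2, 3, 4)

# Tucker model: a small core multiplied by one factor matrix per mode.
core = rng.standard_normal(ranks)
factors = [rng.standard_normal((n, r)) for n, r in zip(dims, ranks)]
tensor = np.einsum("abc,ia,jb,kc->ijk", core, *factors)

print(tucker_rank(tensor))
```

With generic (random) core and factors, each matricization attains the rank of the corresponding core dimension, so the printed vector matches `ranks`. The setting of the abstract assumes that only some of these components are known.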

On Deterministic Sampling Patterns for Robust Low-Rank Matrix Completion

Dec 05, 2017

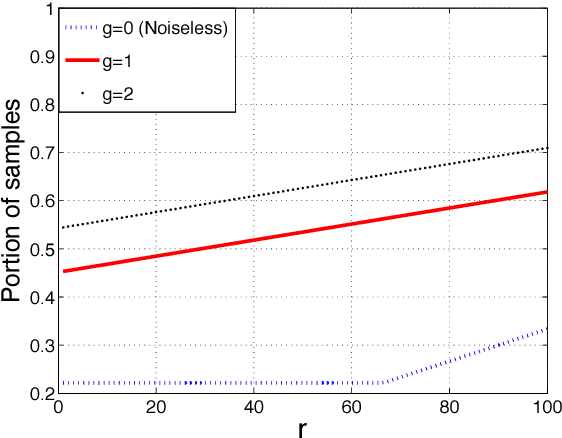

Abstract: In this letter, we study deterministic sampling patterns for the completion of a low-rank matrix corrupted with sparse noise, a problem also known as robust matrix completion. We extend recent results on deterministic sampling patterns in the noiseless case, which are based on a geometric analysis on the Grassmannian manifold. We also consider the special case in which each column has a certain number of noisy entries, where our probabilistic analysis performs very efficiently. Furthermore, assuming that the rank of the original matrix is not given, we provide an analysis to determine, by verifying some conditions, whether the rank of a valid completion is indeed the actual rank of the data corrupted with sparse noise.

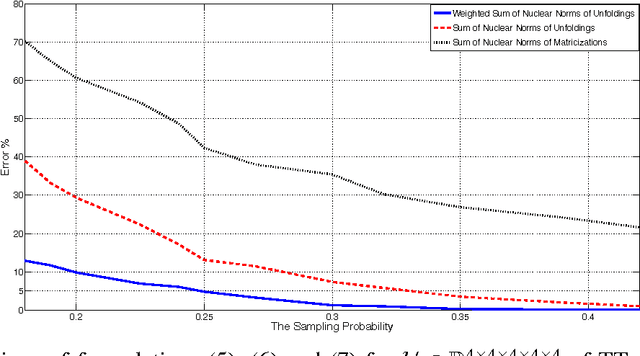

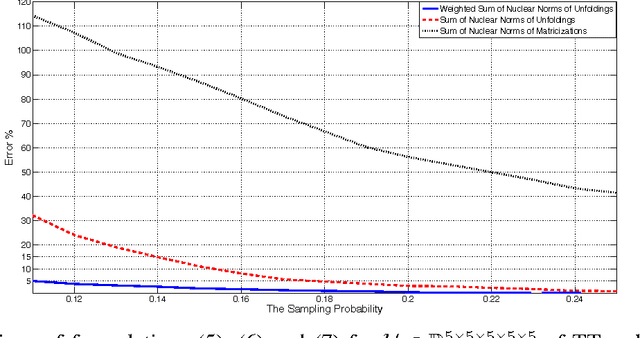

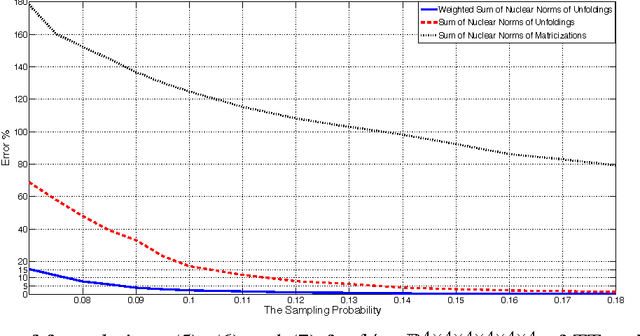

Scaled Nuclear Norm Minimization for Low-Rank Tensor Completion

Jul 25, 2017

Abstract: Minimizing the nuclear norm of a matrix has been shown to be very efficient in reconstructing a low-rank sampled matrix. Furthermore, minimizing the sum of nuclear norms of the matricizations of a tensor has been shown to be very efficient in recovering a low-Tucker-rank sampled tensor. In this paper, we propose to recover a low-TT-rank sampled tensor by minimizing a weighted sum of nuclear norms of the unfoldings of the tensor. We provide numerical results showing that the proposed method requires significantly fewer samples to recover the original tensor than simply minimizing the sum of nuclear norms, since the structure of the unfoldings in the TT tensor model is fundamentally different from that of the matricizations in the Tucker tensor model.
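To make the contrast between the two families of matrices concrete, the following sketch compares the shapes of Tucker-style mode matricizations with TT-style unfoldings of a 4-way tensor, and evaluates a weighted sum of nuclear norms over the TT unfoldings. It only evaluates the objective and does not solve the minimization. The weights are placeholders: the abstract does not state the scaling the paper uses, and the function names are assumptions for this example.

```python
import numpy as np

def mode_unfold(tensor, mode):
    """Tucker-style matricization: one mode as rows, all other modes as columns."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)

def tt_unfold(tensor, i):
    """TT-style unfolding: the first i modes as rows, the remaining modes as columns."""
    rows = int(np.prod(tensor.shape[:i]))
    return tensor.reshape(rows, -1)

def weighted_tt_nuclear_norm(tensor, weights):
    """Weighted sum of nuclear norms over the d-1 TT unfoldings (weights are hypothetical)."""
    return sum(
        w * np.linalg.norm(tt_unfold(tensor, i + 1), "nuc")
        for i, w in enumerate(weights)
    )

tensor = np.random.default_rng(0).standard_normal((3, 4, 5, 6))

print([mode_unfold(tensor, m).shape for m in range(tensor.ndim)])
print([tt_unfold(tensor, i).shape for i in range(1, tensor.ndim)])
print(weighted_tt_nuclear_norm(tensor, weights=(1.0, 1.0, 1.0)))
```

The mode matricizations are always "one mode versus the rest", whereas the middle TT unfoldings are much closer to square, which is the structural difference the abstract points to.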

Rank Determination for Low-Rank Data Completion

Jul 03, 2017

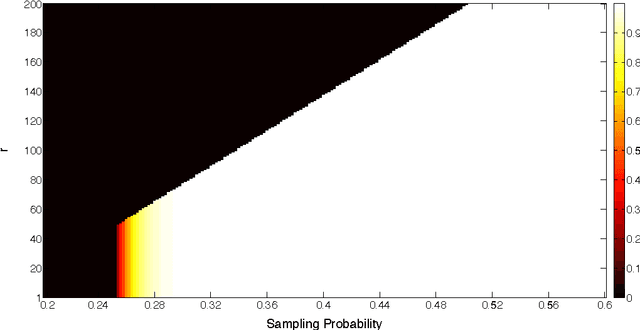

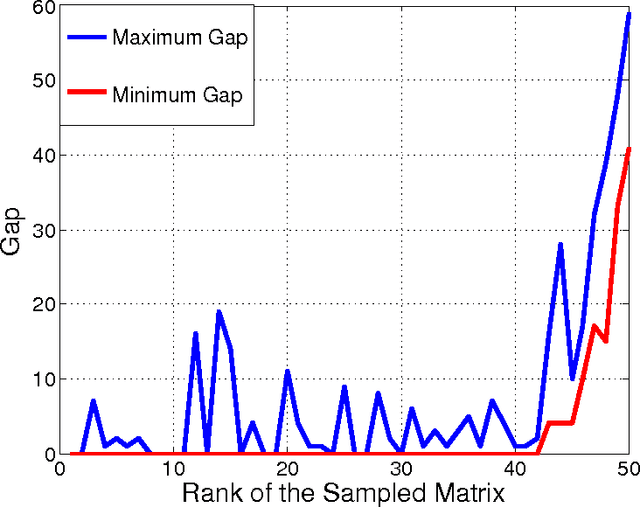

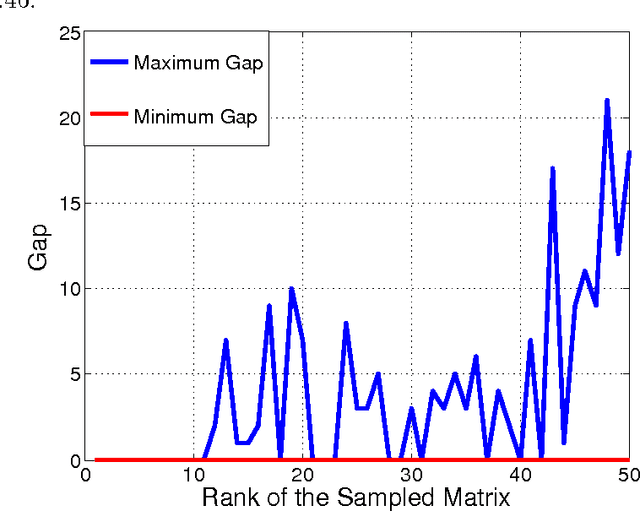

Abstract: Recently, fundamental conditions on the sampling patterns have been obtained for finite completability of low-rank matrices or tensors given the corresponding ranks. In this paper, we consider the scenario where the rank is not given, and we aim to approximate the unknown rank based on the locations of the sampled entries and some given completion. We consider a number of data models, including single-view matrix, multi-view matrix, CP tensor, tensor-train tensor, and Tucker tensor. For each of these data models, we provide an upper bound on the rank when an arbitrary low-rank completion is given. We characterize these bounds both deterministically, i.e., with probability one given that the sampling pattern satisfies certain combinatorial properties, and probabilistically, i.e., with high probability given that the sampling probability is above some threshold. Moreover, for both the single-view matrix and the CP tensor, we show that the obtained upper bound is exactly equal to the unknown rank if the lowest-rank completion is given. Furthermore, we provide numerical experiments for the single-view matrix case, where we use nuclear norm minimization to find a low-rank completion of the sampled data, and we observe that in most cases the proposed upper bound on the rank is equal to the true rank.

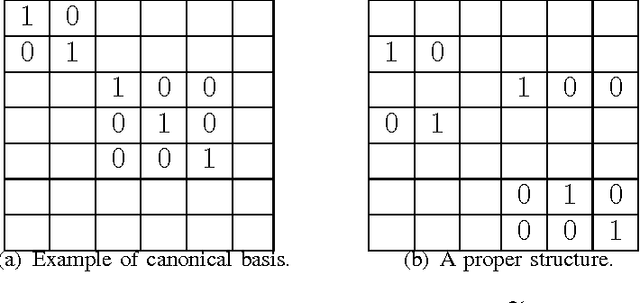

Deterministic and Probabilistic Conditions for Finite Completability of Low-rank Multi-View Data

Apr 26, 2017

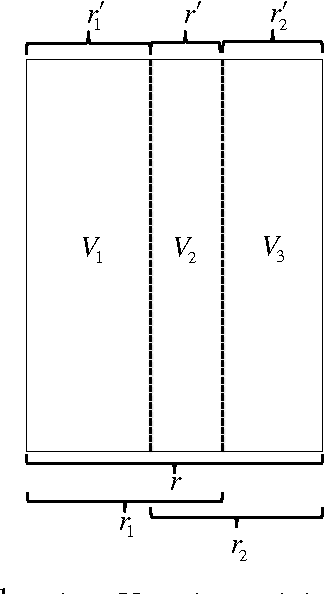

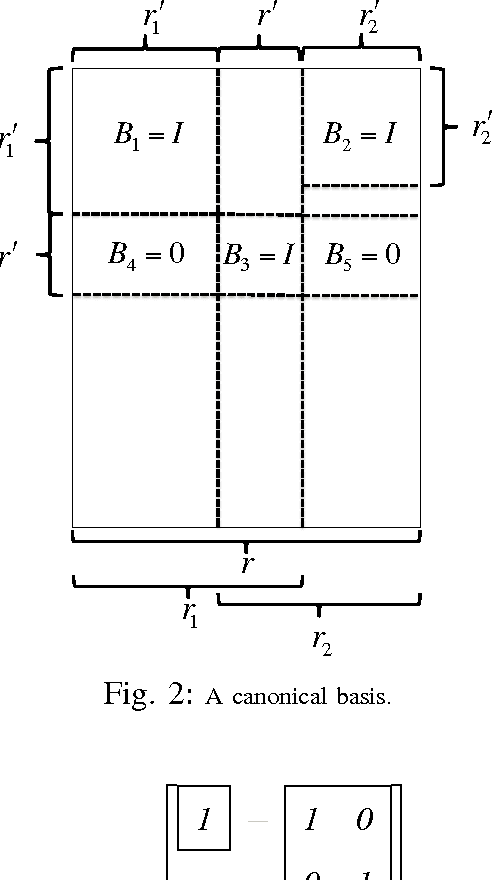

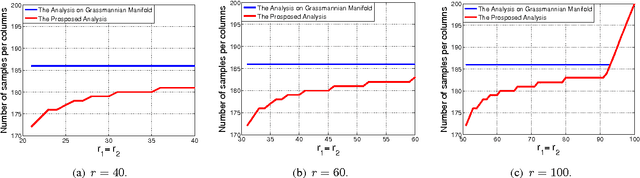

Abstract: We consider the multi-view data completion problem, i.e., to complete a matrix $\mathbf{U}=[\mathbf{U}_1|\mathbf{U}_2]$ where the ranks of $\mathbf{U}$, $\mathbf{U}_1$, and $\mathbf{U}_2$ are given. In particular, we investigate the fundamental conditions on the sampling pattern, i.e., the locations of the sampled entries, for finite completability of such multi-view data given the corresponding rank constraints. In contrast with the existing analysis on the Grassmannian manifold for a single-view matrix, i.e., conventional matrix completion, we propose a geometric analysis on the manifold structure for multi-view data to incorporate more than one rank constraint. We provide a deterministic necessary and sufficient condition on the sampling pattern for finite completability. We also give a probabilistic condition in terms of the number of samples per column that guarantees finite completability with high probability. Finally, using the developed tools, we derive deterministic and probabilistic guarantees for unique completability.
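The multi-view data model can be sketched numerically as follows: two views that share part of a common column space are concatenated, so that $\mathbf{U}_1$, $\mathbf{U}_2$, and $\mathbf{U}=[\mathbf{U}_1|\mathbf{U}_2]$ each satisfy their own rank constraint. All sizes, ranks, the sampling probability `p`, and the shared-basis construction are assumptions chosen for illustration; the sketch only sets up the data and a sampling pattern and does not test completability.

```python
import numpy as np

rng = np.random.default_rng(0)
n, n1, n2 = 10, 8, 9      # rows, columns of view 1, columns of view 2 (hypothetical)
r, r1, r2 = 4, 3, 2       # rank of U, of U1, of U2; requires r1 + r2 >= r

# A shared basis of r directions; view 1 uses the first r1, view 2 the last r2.
basis = rng.standard_normal((n, r))
U1 = basis[:, :r1] @ rng.standard_normal((r1, n1))
U2 = basis[:, r - r2:] @ rng.standard_normal((r2, n2))
U = np.hstack([U1, U2])

print([int(np.linalg.matrix_rank(M)) for M in (U, U1, U2)])

# A random sampling pattern and the number of observed entries in each column.
p = 0.6
mask = rng.random(U.shape) < p
samples_per_column = mask.sum(axis=0)
print(samples_per_column)
```

The per-column sample counts are the quantity in which the abstract states its probabilistic condition.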

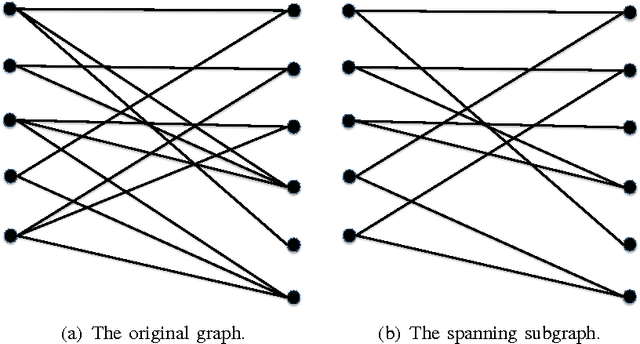

Fundamental Conditions for Low-CP-Rank Tensor Completion

Mar 31, 2017

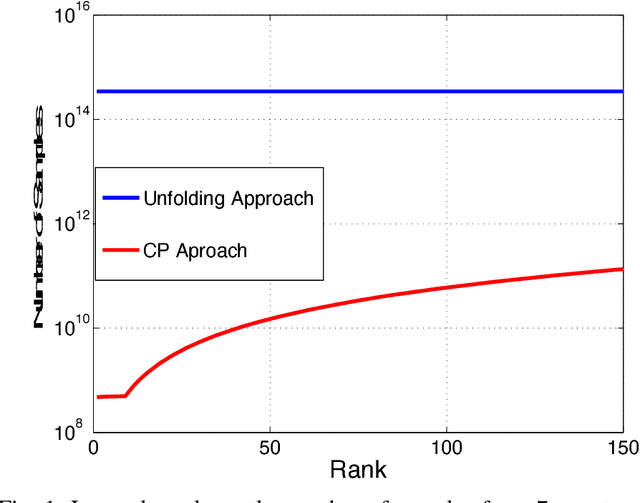

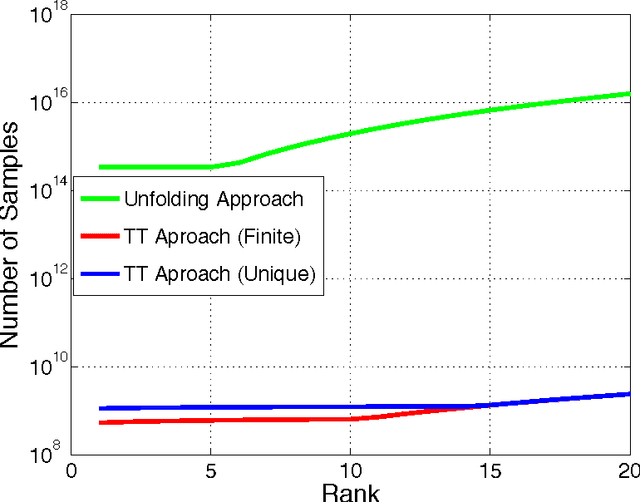

Abstract: We consider the problem of low canonical polyadic (CP) rank tensor completion. A completion is a tensor whose entries agree with the observed entries and whose rank matches the given CP rank. We analyze the manifold structure corresponding to the tensors with the given rank and define a set of polynomials based on the sampling pattern and the CP decomposition. Then, we show that finite completability of the sampled tensor is equivalent to having a certain number of algebraically independent polynomials among the defined polynomials. Our approach characterizes the maximum number of algebraically independent polynomials in terms of a simple geometric structure of the sampling pattern, and therefore we obtain a deterministic necessary and sufficient condition on the sampling pattern for finite completability of the sampled tensor. Moreover, assuming that the entries of the tensor are sampled independently with probability $p$ and using this deterministic analysis, we propose a combinatorial method to derive a lower bound on the sampling probability $p$, or equivalently, on the number of sampled entries, that guarantees finite completability with high probability. We also show that the existing result for the matrix completion problem can be used to obtain a loose lower bound on the sampling probability $p$. In addition, we obtain deterministic and probabilistic conditions for unique completability. The number of samples required for finite or unique completability obtained by the proposed analysis on the CP manifold is orders of magnitude lower than that obtained by the existing analysis on the Grassmannian manifold.
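The sampling model in this abstract can be illustrated with a short sketch: a tensor is built as a sum of rank-one terms (a CP decomposition), and each entry is then observed independently with probability $p$. The dimensions, the CP rank, and the value of `p` are assumptions for this example, and the code only generates a sampled tensor; it does not check the completability conditions.

```python
import numpy as np

rng = np.random.default_rng(0)
dims, cp_rank, p = (5, 6, 7), 2, 0.4   # hypothetical sizes, rank, and sampling probability

# CP model: the tensor is a sum of cp_rank outer products a_r (x) b_r (x) c_r.
A, B, C = (rng.standard_normal((n, cp_rank)) for n in dims)
tensor = np.einsum("ir,jr,kr->ijk", A, B, C)

# Bernoulli sampling pattern: each entry is observed independently with probability p.
mask = rng.random(dims) < p
observed = np.where(mask, tensor, np.nan)   # unobserved entries marked as NaN

print(int(mask.sum()), "of", mask.size, "entries observed")
```

A completion in the sense of the abstract would be any tensor of CP rank `cp_rank` that agrees with `tensor` wherever `mask` is true.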

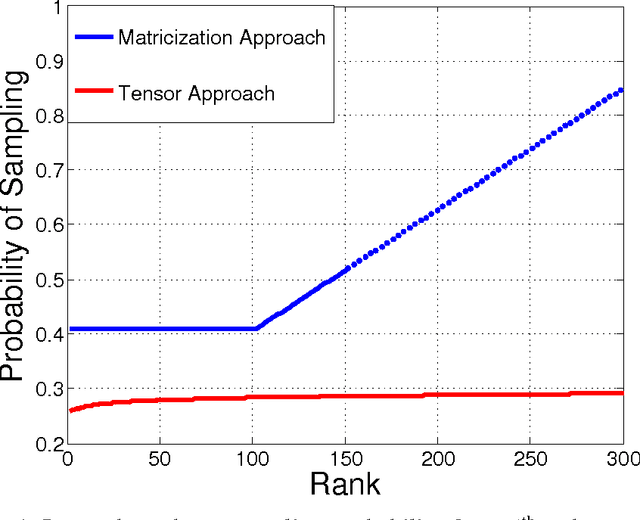

Characterization of Deterministic and Probabilistic Sampling Patterns for Finite Completability of Low Tensor-Train Rank Tensor

Mar 22, 2017

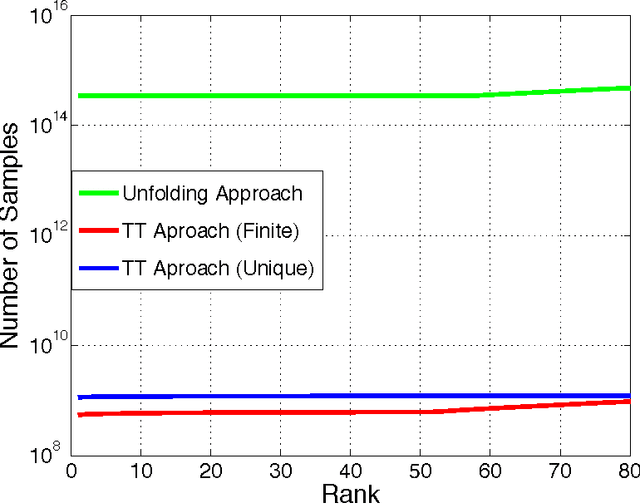

Abstract: In this paper, we analyze the fundamental conditions for low-rank tensor completion given the separation or tensor-train (TT) rank, i.e., the ranks of the unfoldings. We exploit the algebraic structure of the TT decomposition to obtain deterministic necessary and sufficient conditions on the locations of the samples that ensure finite completability. Specifically, we propose an algebraic geometric analysis on the TT manifold that can incorporate the whole rank vector simultaneously, in contrast to the existing approach based on the Grassmannian manifold, which can incorporate only one rank component. Our technique characterizes the algebraic independence of a set of polynomials defined based on the sampling pattern and the TT decomposition, which is instrumental in obtaining the deterministic condition on the sampling pattern for finite completability. In addition, based on the proposed analysis and assuming that the entries of the tensor are sampled independently with probability $p$, we derive a lower bound on the sampling probability $p$, or equivalently, on the number of sampled entries, that ensures finite completability with high probability. Moreover, we provide deterministic and probabilistic conditions for unique completability.
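The statement that the TT rank is the vector of ranks of the unfoldings can be checked numerically on a small example. The sketch below contracts randomly generated TT cores into a full tensor and computes the rank of each unfolding. The dimensions, the rank vector, and the helper name `tt_unfold` are assumptions made for illustration.

```python
import numpy as np

def tt_unfold(tensor, i):
    """i-th unfolding: the first i modes as rows, the remaining modes as columns."""
    rows = int(np.prod(tensor.shape[:i]))
    return tensor.reshape(rows, -1)

rng = np.random.default_rng(0)
dims, tt_ranks = (4, 5, 6, 3), (2, 3, 2)   # hypothetical sizes and TT rank vector
bond = (1, *tt_ranks, 1)                    # boundary ranks are 1

# One 3-way core per mode, with shape (left rank, mode size, right rank).
cores = [
    rng.standard_normal((bond[k], dims[k], bond[k + 1]))
    for k in range(len(dims))
]

# Contract neighbouring cores along their shared rank index.
tensor = cores[0]
for core in cores[1:]:
    tensor = np.tensordot(tensor, core, axes=1)
tensor = tensor.reshape(dims)

unfolding_ranks = tuple(
    int(np.linalg.matrix_rank(tt_unfold(tensor, i)))
    for i in range(1, len(dims))
)
print(unfolding_ranks)
```

For generic cores, the printed ranks coincide with `tt_ranks`, so the whole rank vector is visible at once from the unfoldings.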
