Customizable Avatars with Dynamic Facial Action Coded Expressions (CADyFACE) for Improved User Engagement

Mar 12, 2024Megan A. Witherow, Crystal Butler, Winston J. Shields, Furkan Ilgin, Norou Diawara, Janice Keener, John W. Harrington, Khan M. Iftekharuddin

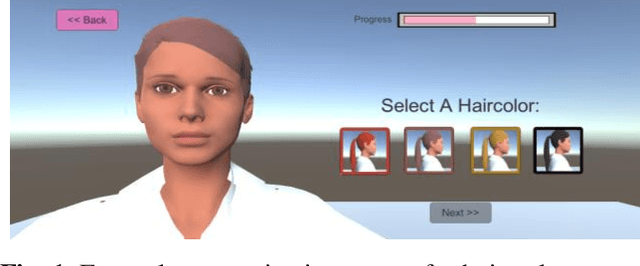

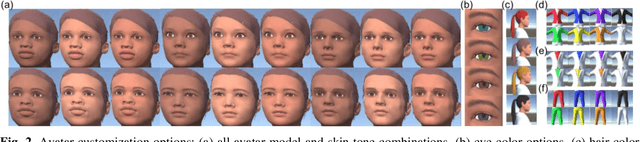

Customizable 3D avatar-based facial expression stimuli may improve user engagement in behavioral biomarker discovery and therapeutic intervention for autism, Alzheimer's disease, facial palsy, and more. However, there is a lack of customizable avatar-based stimuli with Facial Action Coding System (FACS) action unit (AU) labels. Therefore, this study focuses on (1) FACS-labeled, customizable avatar-based expression stimuli for maintaining subjects' engagement, (2) learning-based measurements that quantify subjects' facial responses to such stimuli, and (3) validation of constructs represented by stimulus-measurement pairs. We propose Customizable Avatars with Dynamic Facial Action Coded Expressions (CADyFACE) labeled with AUs by a certified FACS expert. To measure subjects' AUs in response to CADyFACE, we propose a novel Beta-guided Correlation and Multi-task Expression learning neural network (BeCoME-Net) for multi-label AU detection. The beta-guided correlation loss encourages feature correlation with AUs while discouraging correlation with subject identities for improved generalization. We train BeCoME-Net for unilateral and bilateral AU detection and compare with state-of-the-art approaches. To assess construct validity of CADyFACE and BeCoME-Net, twenty healthy adult volunteers complete expression recognition and mimicry tasks in an online feasibility study while webcam-based eye-tracking and video are collected. We test validity of multiple constructs, including face preference during recognition and AUs during mimicry.

Deep Adaptation of Adult-Child Facial Expressions by Fusing Landmark Features

Sep 18, 2022Megan A. Witherow, Manar D. Samad, Norou Diawara, Haim Y. Bar, Khan M. Iftekharuddin

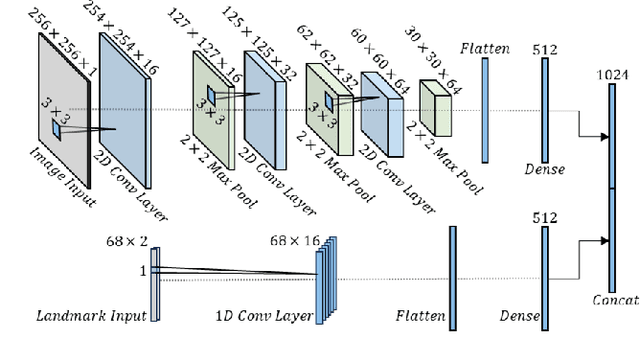

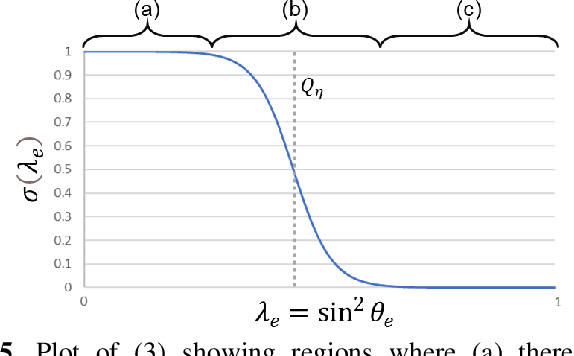

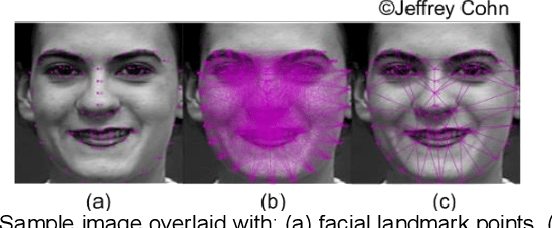

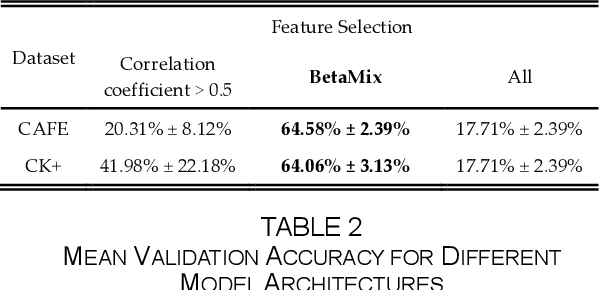

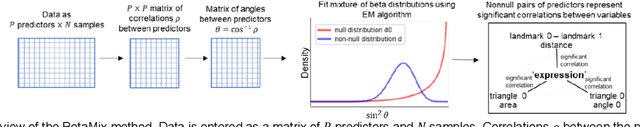

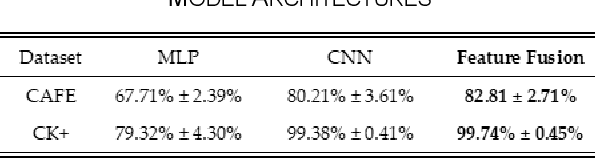

Imaging of facial affects may be used to measure psychophysiological attributes of children through their adulthood, especially for monitoring lifelong conditions like Autism Spectrum Disorder. Deep convolutional neural networks have shown promising results in classifying facial expressions of adults. However, classifier models trained with adult benchmark data are unsuitable for learning child expressions due to discrepancies in psychophysical development. Similarly, models trained with child data perform poorly in adult expression classification. We propose domain adaptation to concurrently align distributions of adult and child expressions in a shared latent space to ensure robust classification of either domain. Furthermore, age variations in facial images are studied in age-invariant face recognition yet remain unleveraged in adult-child expression classification. We take inspiration from multiple fields and propose deep adaptive FACial Expressions fusing BEtaMix SElected Landmark Features (FACE-BE-SELF) for adult-child facial expression classification. For the first time in the literature, a mixture of Beta distributions is used to decompose and select facial features based on correlations with expression, domain, and identity factors. We evaluate FACE-BE-SELF on two pairs of adult-child data sets. Our proposed FACE-BE-SELF approach outperforms adult-child transfer learning and other baseline domain adaptation methods in aligning latent representations of adult and child expressions.

Effect of Text Processing Steps on Twitter Sentiment Classification using Word Embedding

Jul 25, 2020Manar D. Samad, Nalin D. Khounviengxay, Megan A. Witherow

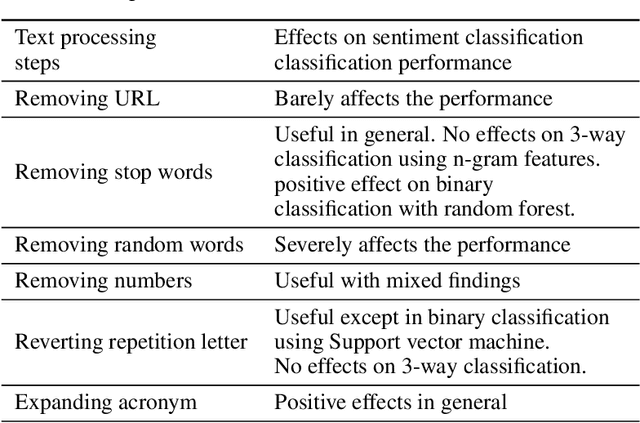

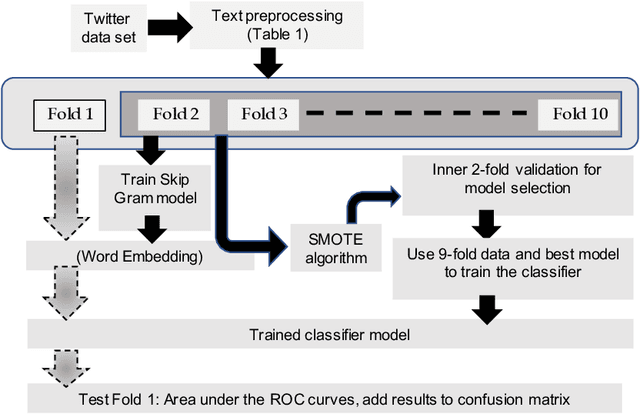

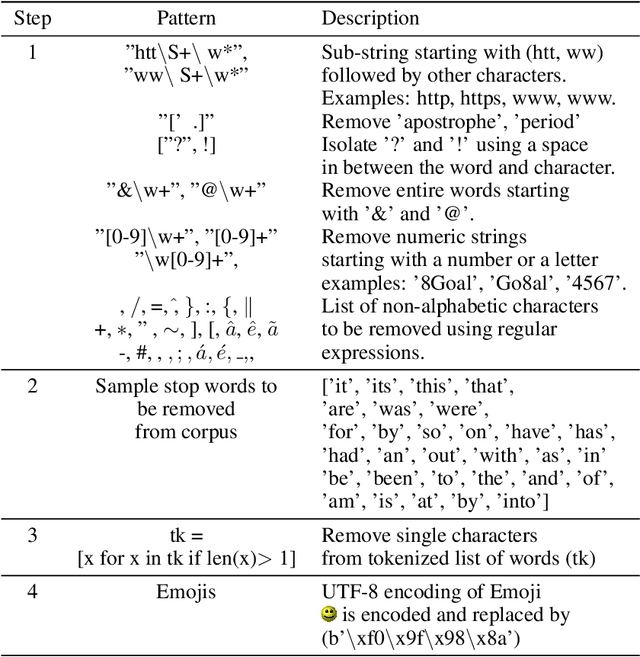

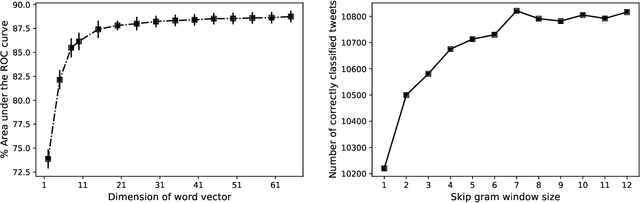

Processing of raw text is the crucial first step in text classification and sentiment analysis. However, text processing steps are often performed using off-the-shelf routines and pre-built word dictionaries without optimizing for domain, application, and context. This paper investigates the effect of seven text processing scenarios on a particular text domain (Twitter) and application (sentiment classification). Skip gram-based word embeddings are developed to include Twitter colloquial words, emojis, and hashtag keywords that are often removed for being unavailable in conventional literature corpora. Our experiments reveal negative effects on sentiment classification of two common text processing steps: 1) stop word removal and 2) averaging of word vectors to represent individual tweets. New effective steps for 1) including non-ASCII emoji characters, 2) measuring word importance from word embedding, 3) aggregating word vectors into a tweet embedding, and 4) developing linearly separable feature space have been proposed to optimize the sentiment classification pipeline. The best combination of text processing steps yields the highest average area under the curve (AUC) of 88.4 (+/-0.4) in classifying 14,640 tweets with three sentiment labels. Word selection from context-driven word embedding reveals that only the ten most important words in Tweets cumulatively yield over 98% of the maximum accuracy. Results demonstrate a means for data-driven selection of important words in tweet classification as opposed to using pre-built word dictionaries. The proposed tweet embedding is robust to and alleviates the need for several text processing steps.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge