Approximating Aggregated SQL Queries With LSTM Networks

Nov 02, 2020 Nir Regev, Lior Rokach, Asaf Shabtai

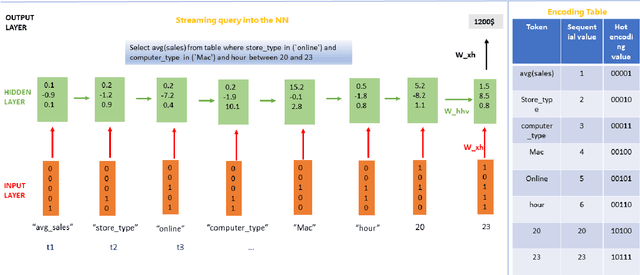

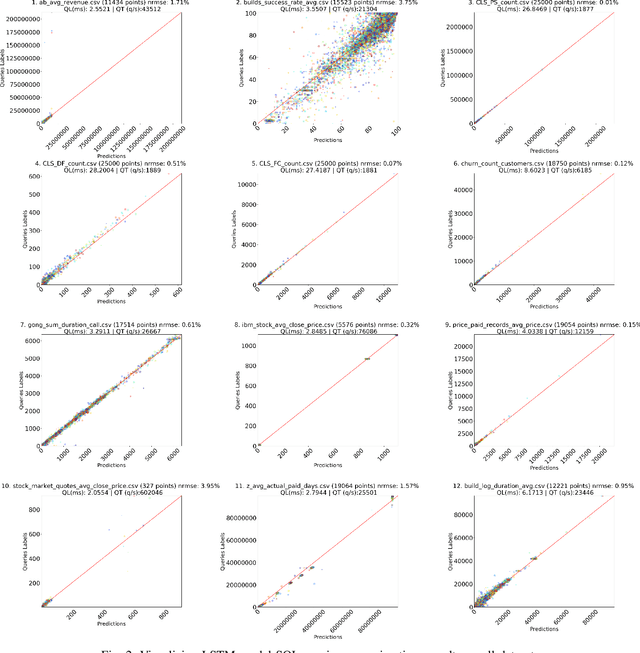

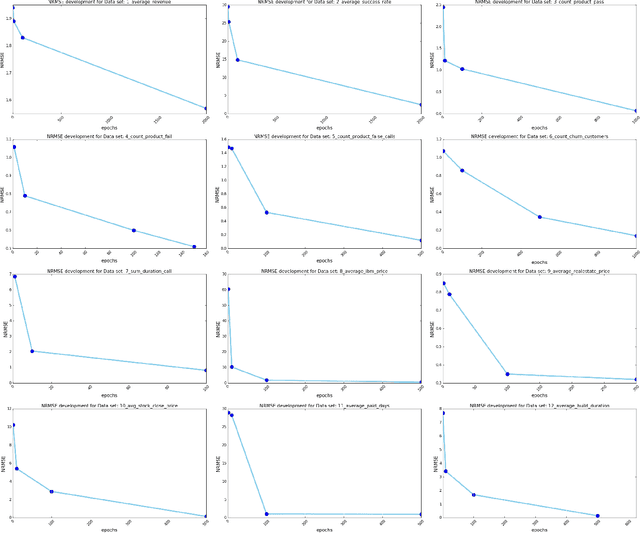

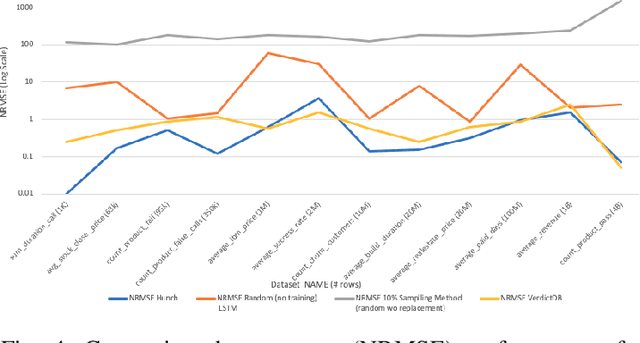

Despite continuous investments in data technologies, the latency of querying data still poses a significant challenge. Modern analytic solutions require near real-time responsiveness both to make them interactive and to support automated processing. Current technologies (Hadoop, Spark, Dataflow) scan the dataset to execute queries. They focus on providing scalable data storage to maximize task execution speed. We argue that these solutions fail to offer an adequate level of interactivity since they depend on continual access to data. In this paper we present a method for query approximation, also known as approximate query processing (AQP), that reduces the need to scan data during inference (query calculation), thus enabling a rapid query processing tool. We use an LSTM network to learn the relationship between queries and their results, and to provide a rapid inference layer for predicting query results. Our method (referred to as "Hunch") produces a lightweight LSTM network which provides a high query throughput. We evaluated our method using 12 datasets. The results show that our method predicted queries' results with a normalized root mean squared error (NRMSE) ranging from approximately 1% to 4%. Moreover, our method was able to predict up to 120,000 queries in a second (streamed together), with a single-query latency of no more than 2ms.
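As an illustration of the reported metric, the sketch below computes an NRMSE between true and predicted query results. The abstract does not say how the error is normalized; dividing the RMSE by the range of the true values is an assumption made here, and the function name `nrmse` and the sample numbers are hypothetical.

```python
import math


def nrmse(actual, predicted):
    """Return the RMSE divided by the range of the actual values.

    Normalizing by the range is an assumption; the abstract does not
    state which normalization was used.
    """
    if not actual or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)
    value_range = max(actual) - min(actual)
    if value_range == 0:
        raise ValueError("actual values have zero range")
    return math.sqrt(mse) / value_range


# Made-up aggregate results (e.g. SUM per query) and model predictions.
true_results = [100.0, 200.0, 300.0, 400.0]
predicted_results = [104.0, 197.0, 305.0, 396.0]
error = nrmse(true_results, predicted_results)
print(f"NRMSE: {error:.2%}")
```

With these made-up values the error falls between 1% and 2%, the same order of magnitude as the range quoted in the abstract.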

Lessons Learned from Applying off-the-shelf BERT: There is no Silver Bullet

Sep 18, 2020 Victor Makarenkov, Lior Rokach

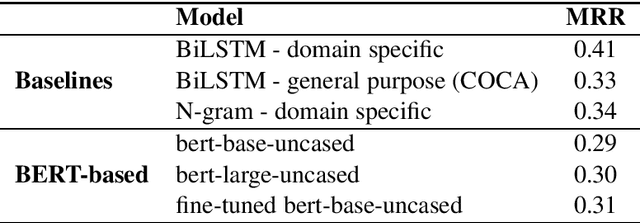

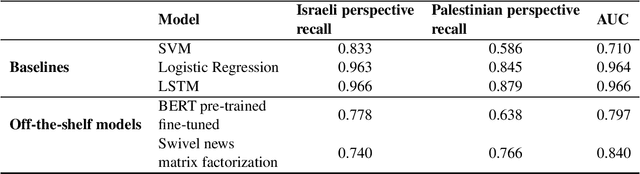

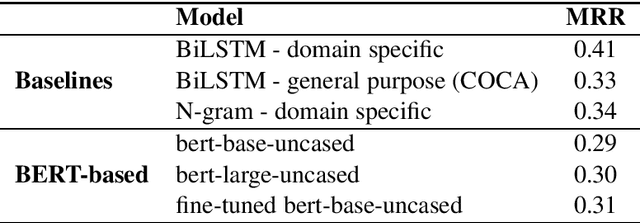

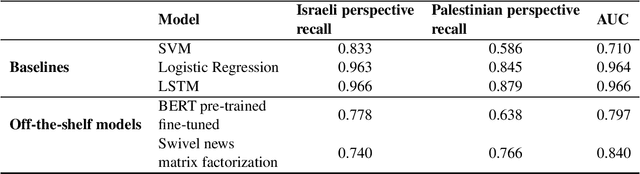

One of the challenges in the NLP field is training large classification models, a task that is both difficult and tedious. It is even harder when GPU hardware is unavailable. The increased availability of pre-trained and off-the-shelf word embeddings, models, and modules aims at easing the process of training large models and achieving competitive performance. We explore the use of off-the-shelf BERT models, share the results of our experiments, and compare them to those of LSTM networks and simpler baselines. We show that the complexity and computational cost of BERT are not a guarantee of enhanced predictive performance in the classification tasks at hand.

Lessons Learned from Applying off-the-shelf BERT: There is no SilverBullet

Sep 15, 2020 Victor Makarenkov, Lior Rokach

One of the challenges in the NLP field is training large classification models, a task that is both difficult and tedious. It is even harder when GPU hardware is unavailable. The increased availability of pre-trained and off-the-shelf word embeddings, models, and modules aims at easing the process of training large models and achieving competitive performance. We explore the use of off-the-shelf BERT models, share the results of our experiments, and compare them to those of LSTM networks and simpler baselines. We show that the complexity and computational cost of BERT are not a guarantee of enhanced predictive performance in the classification tasks at hand.

Evolving Context-Aware Recommender Systems With Users in Mind

Jul 30, 2020 Amit Livne, Eliad Shem Tov, Adir Solomon, Achiya Elyasaf, Bracha Shapira, Lior Rokach

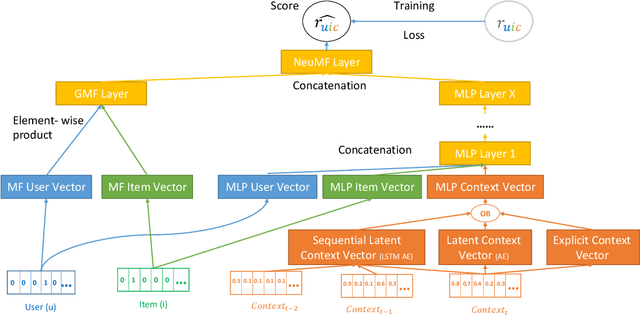

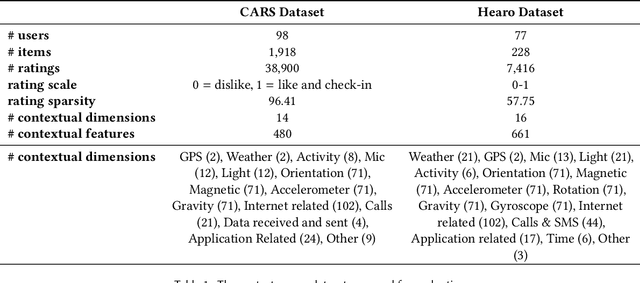

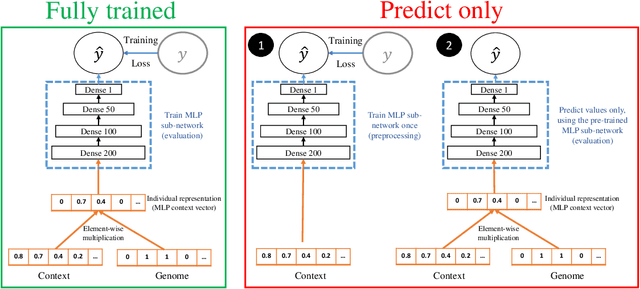

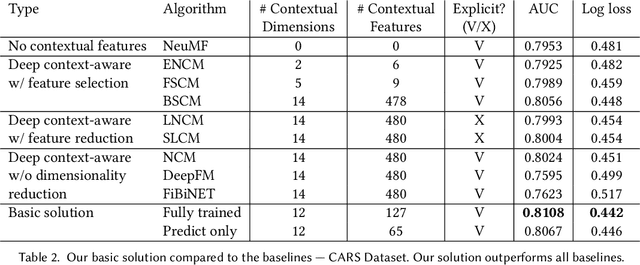

A context-aware recommender system (CARS) applies sensing and analysis of user context to provide personalized services. The contextual information can be derived from sensors in order to improve the accuracy of the recommendations. Yet, generating accurate recommendations is not enough to constitute a useful system from the users' perspective, since collecting certain contextual information may cause issues such as draining the user's battery or compromising privacy. Adding high-dimensional contextual information may also increase both the dimensionality and sparsity of the model. Previous studies suggest reducing the amount of contextual information by selecting the most suitable contextual information using domain knowledge. Another solution is compressing it into a denser latent space, which disrupts the ability to explain the recommended item to the user and damages users' trust. In this paper we present an approach for selecting low-dimensional subsets of the contextual information and incorporating them explicitly within CARS. Specifically, we present a novel feature-selection algorithm, based on genetic algorithms (GA), that outperforms SOTA dimensionality-reduction CARS algorithms, improves the accuracy and the explainability of the recommendations, and allows for controlling user aspects, such as privacy and battery consumption. Furthermore, we exploit the top subsets that are generated along the evolutionary process by learning multiple deep context-aware models and applying a stacking technique on them, thus improving the accuracy while remaining in the explicit space. We evaluated our approach on two high-dimensional context-aware datasets derived from smartphones. An empirical analysis of our results validates that our proposed approach outperforms SOTA CARS models while improving transparency and explainability to the user.

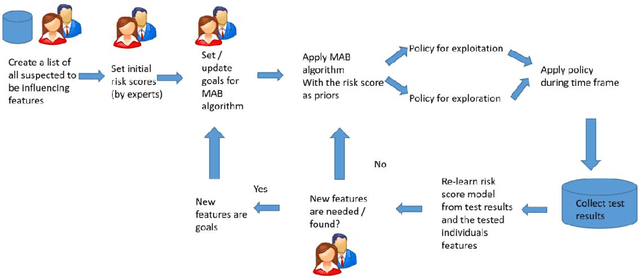

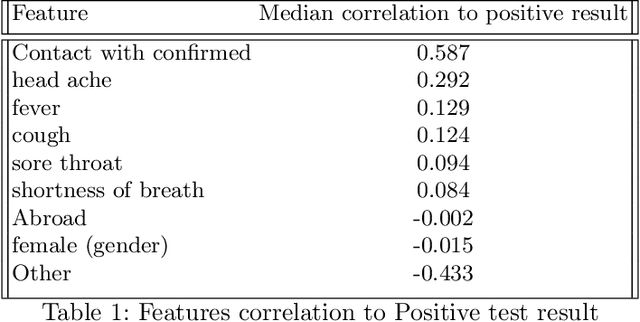

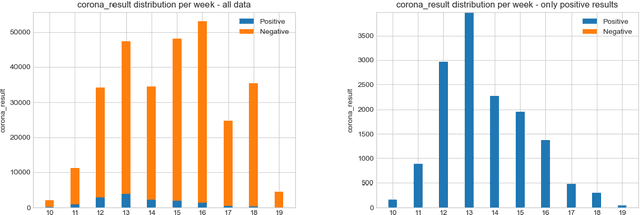

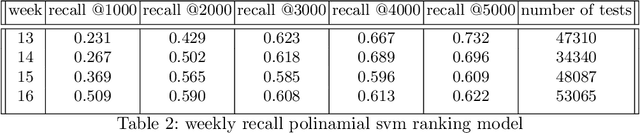

A framework for optimizing COVID-19 testing policy using a Multi Armed Bandit approach

Jul 28, 2020 Hagit Grushka-Cohen, Raphael Cohen, Bracha Shapira, Jacob Moran-Gilad, Lior Rokach

Testing is an important part of tackling the COVID-19 pandemic. Availability of testing is a bottleneck due to constrained resources, so effective prioritization of individuals is necessary. Here, we discuss the impact of different prioritization policies on COVID-19 patient discovery and the ability of governments and health organizations to use the results for effective decision making. We suggest a framework for testing that balances the maximal discovery of positive individuals with the need for population-based surveillance aimed at understanding disease spread and characteristics. This framework draws from similar approaches to prioritization in the domain of cyber-security, which rank individuals using a risk score and then reserve a portion of the capacity for random sampling. This approach is an application of Multi-Armed-Bandits maximizing exploration/exploitation of the underlying distribution. We find that individuals can be ranked for effective testing using a few simple features, and that by ranking them with such models we can capture 65% (CI: 64.7%-68.3%) of the positive individuals using less than 20% of the testing capacity, or 92.1% (CI: 91.1%-93.2%) of positive individuals using 70% of the capacity, which allows a significant portion of the tests to be reserved for population studies. Our approach allows experts and decision-makers to tailor the resulting policies as needed, providing transparency into the ranking policy and the ability to understand the disease spread in the population and react quickly and in an informed manner.
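The allocation idea described above (rank by risk score, then reserve part of the capacity for random sampling) can be sketched as follows. This is a minimal illustration and not the authors' implementation: the function `select_for_testing`, the `explore_fraction` parameter, and the risk scores are all hypothetical.

```python
import random


def select_for_testing(risk_scores, capacity, explore_fraction, rng):
    """Split test capacity between top-risk individuals and a random sample.

    risk_scores: dict mapping a person id to a model risk score.
    explore_fraction: share of capacity reserved for random sampling
    (a hypothetical knob, not a value taken from the paper).
    """
    n_random = int(capacity * explore_fraction)
    n_ranked = capacity - n_random
    ranked = sorted(risk_scores, key=risk_scores.get, reverse=True)
    exploit = ranked[:n_ranked]          # highest-risk individuals
    remaining = ranked[n_ranked:]        # everyone not already selected
    explore = rng.sample(remaining, min(n_random, len(remaining)))
    return exploit, explore


# Made-up scores for ten people; p9 has the highest risk.
scores = {f"p{i}": i / 10 for i in range(10)}
exploit, explore = select_for_testing(
    scores, capacity=5, explore_fraction=0.4, rng=random.Random(0)
)
print("ranked picks:", exploit)
print("random picks:", explore)
```

The random picks are drawn only from people who were not selected by rank, so the two groups never overlap and the random sample can serve as a surveillance sample of the lower-scored population.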

Iterative Boosting Deep Neural Networks for Predicting Click-Through Rate

Jul 26, 2020 Amit Livne, Roy Dor, Eyal Mazuz, Tamar Didi, Bracha Shapira, Lior Rokach

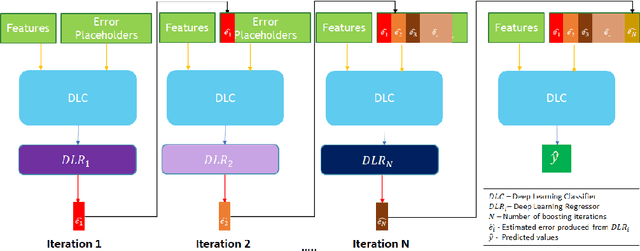

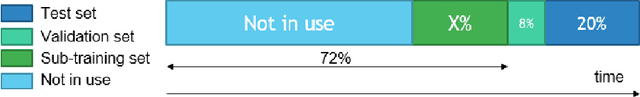

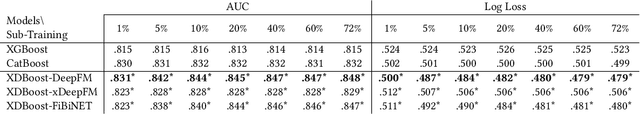

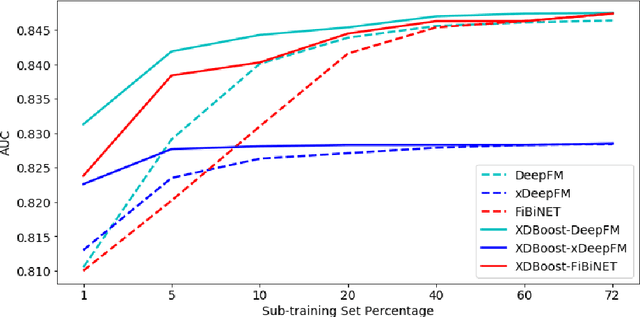

The click-through rate (CTR) reflects the ratio of clicks on a specific item to its total number of views. It has a significant impact on websites' advertising revenue. Learning sophisticated models to understand and predict user behavior is essential for maximizing the CTR in recommendation systems. Recent works have suggested new methods that replace the expensive and time-consuming feature engineering process with a variety of deep learning (DL) classifiers capable of capturing complicated patterns from raw data; these methods have shown significant improvement on the CTR prediction task. While DL techniques can learn intricate user behavior patterns, they rely on a vast amount of data and do not perform as well when only a limited amount of data is available. We propose XDBoost, a new DL method for capturing complex patterns that requires just a limited amount of raw data. XDBoost is an iterative three-stage neural network model influenced by the traditional machine learning boosting mechanism. XDBoost's components operate sequentially, similar to boosting; however, unlike conventional boosting, XDBoost does not sum the predictions generated by its components. Instead, it utilizes these predictions as new artificial features and enhances CTR prediction by retraining the model using these features. Comprehensive experiments conducted to illustrate the effectiveness of XDBoost on two datasets demonstrated its ability to outperform existing state-of-the-art (SOTA) models for CTR prediction.
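To make the "predictions as new features" idea concrete, here is a toy sketch. It is not XDBoost: the component model is a simple per-value click-rate estimate rather than a neural network, and the helper names `fit_rate_model` and `add_prediction_feature` are hypothetical. It only shows a CTR computed as clicks over views and a component's predictions appended as an extra column for a later stage to train on.

```python
def fit_rate_model(rows, labels, column):
    """Estimate a click rate (clicks / views) for each value in one column."""
    clicks, views = {}, {}
    for row, label in zip(rows, labels):
        key = row[column]
        clicks[key] = clicks.get(key, 0) + label
        views[key] = views.get(key, 0) + 1
    overall = sum(labels) / len(labels)  # fallback for unseen values
    rates = {key: clicks[key] / views[key] for key in views}
    return lambda row: rates.get(row[column], overall)


def add_prediction_feature(rows, model):
    """Append the model's prediction to each row as an artificial feature."""
    return [row + [model(row)] for row in rows]


# Made-up impressions: one categorical feature, label 1 means "clicked".
rows = [["a"], ["a"], ["b"], ["b"]]
labels = [1, 0, 0, 0]

stage_one = fit_rate_model(rows, labels, column=0)
augmented = add_prediction_feature(rows, stage_one)
print(augmented)  # each row now carries the stage-one prediction
```

A later stage would be trained on `augmented` instead of `rows`, which is the contrast with conventional boosting, where component predictions are summed.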

Adversarial Learning in the Cyber Security Domain

Jul 05, 2020 Ishai Rosenberg, Asaf Shabtai, Yuval Elovici, Lior Rokach

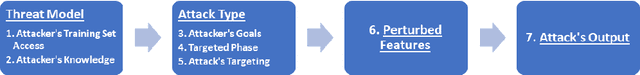

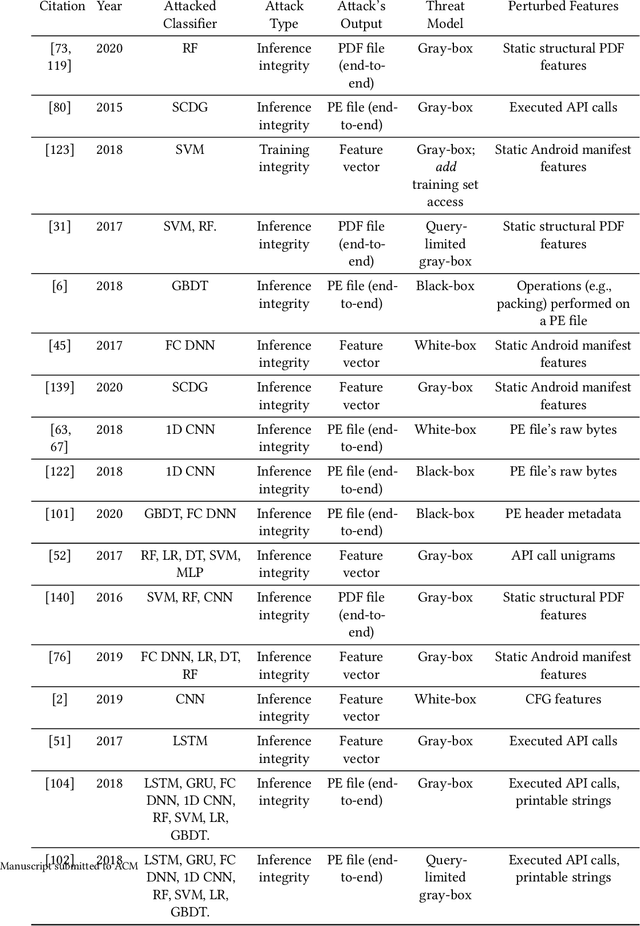

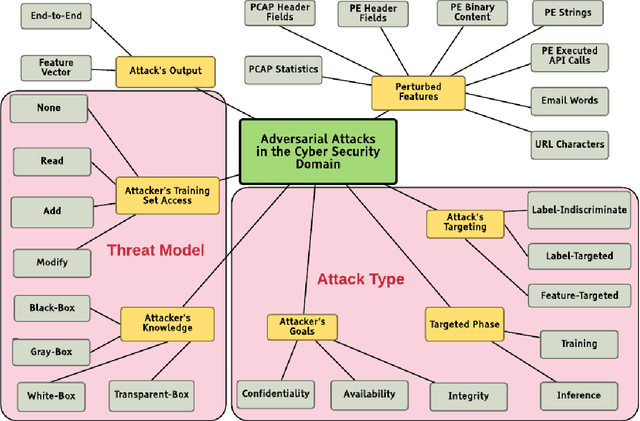

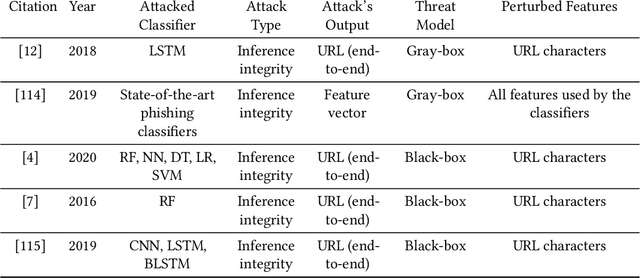

In recent years, machine learning algorithms, and more specifically, deep learning algorithms, have been widely used in many fields, including cyber security. However, machine learning systems are vulnerable to adversarial attacks, and this limits the application of machine learning, especially in non-stationary, adversarial environments, such as the cyber security domain, where actual adversaries (e.g., malware developers) exist. This paper comprehensively summarizes the latest research on adversarial attacks against security solutions that are based on machine learning techniques and presents the risks they pose to cyber security solutions. First, we discuss the unique challenges of implementing end-to-end adversarial attacks in the cyber security domain. Following that, we define a unified taxonomy, where the adversarial attack methods are characterized based on their stage of occurrence, and the attacker's goals and capabilities. Then, we categorize the applications of adversarial attack techniques in the cyber security domain. Finally, we use our taxonomy to shed light on gaps in the cyber security domain that have already been addressed in other adversarial learning domains and discuss their impact on future adversarial learning trends in the cyber security domain.

PIVEN: A Deep Neural Network for Prediction Intervals with Specific Value Prediction

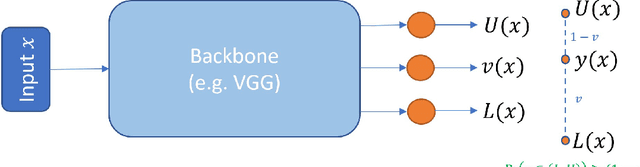

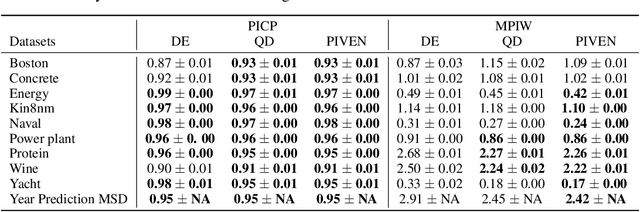

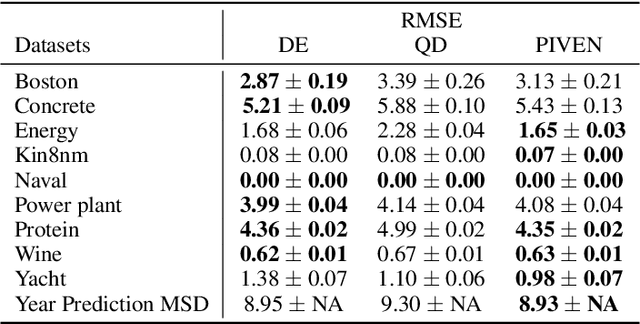

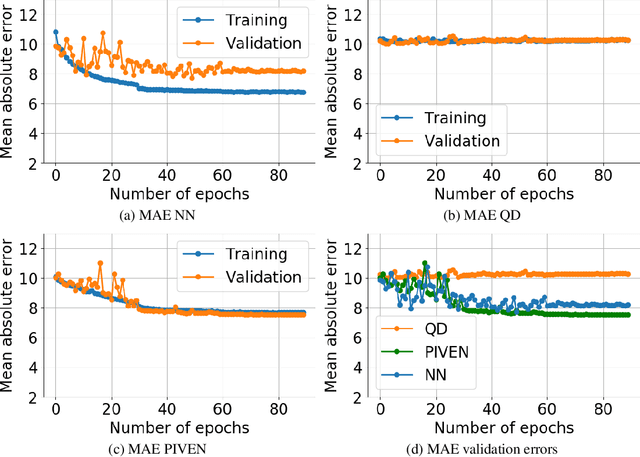

Jun 09, 2020 Eli Simhayev, Gilad Katz, Lior Rokach

Improving the robustness of neural nets in regression tasks is key to their application in multiple domains. Deep learning-based approaches aim to achieve this goal either by improving the manner in which they produce their prediction of specific values (i.e., point prediction), or by producing prediction intervals (PIs) that quantify uncertainty. We present PIVEN, a deep neural network for producing both a PI and a prediction of specific values. Benchmark experiments show that our approach produces tighter uncertainty bounds than the current state-of-the-art approach for producing PIs, while maintaining performance comparable to the state-of-the-art approach for specific value prediction. Additional evaluation on large image datasets further supports our conclusions.
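Whether uncertainty bounds are "tight" is commonly judged with two quantities: the fraction of true values that fall inside their interval (coverage) and the average interval width. The sketch below computes both. The abstract does not name the metrics used in the paper, so treating these two as the relevant ones is an assumption, and the function names and numbers are hypothetical.

```python
def interval_coverage(y_true, lower, upper):
    """Fraction of true values that lie inside their prediction interval."""
    inside = sum(1 for y, lo, hi in zip(y_true, lower, upper) if lo <= y <= hi)
    return inside / len(y_true)


def mean_interval_width(lower, upper):
    """Average width of the prediction intervals (smaller means tighter)."""
    return sum(hi - lo for lo, hi in zip(lower, upper)) / len(lower)


# Made-up targets and interval bounds; the third target misses its interval.
y_true = [2.0, 3.5, 5.0, 7.2]
lower = [1.0, 3.0, 5.5, 6.0]
upper = [3.0, 4.0, 6.5, 8.0]

coverage = interval_coverage(y_true, lower, upper)
width = mean_interval_width(lower, upper)
print(f"coverage={coverage:.2f}, mean width={width:.2f}")
```

The two quantities trade off against each other: very wide intervals reach full coverage trivially, so a method is usually considered better when it achieves a target coverage with a smaller mean width.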

Automatic Machine Learning Derived from Scholarly Big Data

Mar 06, 2020 Asnat Greenstein-Messica, Roman Vainshtein, Gilad Katz, Bracha Shapira, Lior Rokach

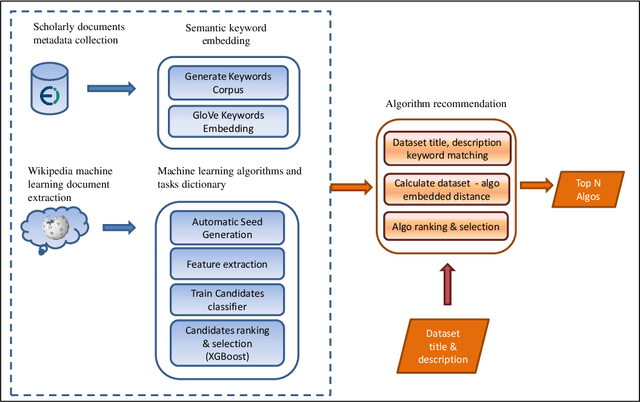

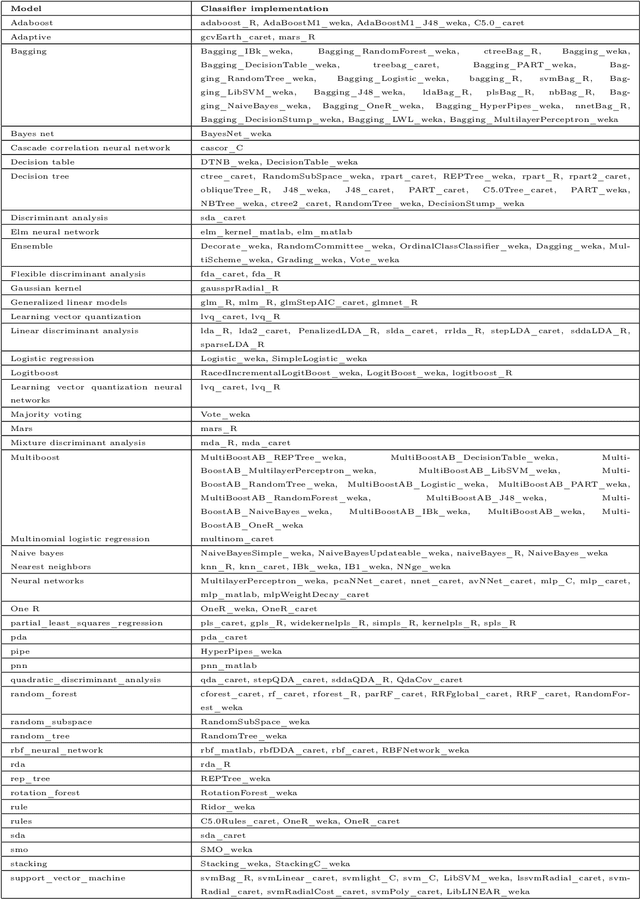

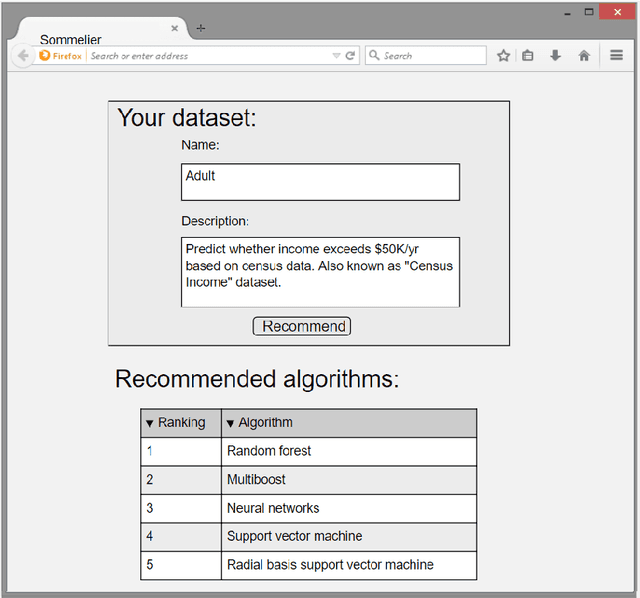

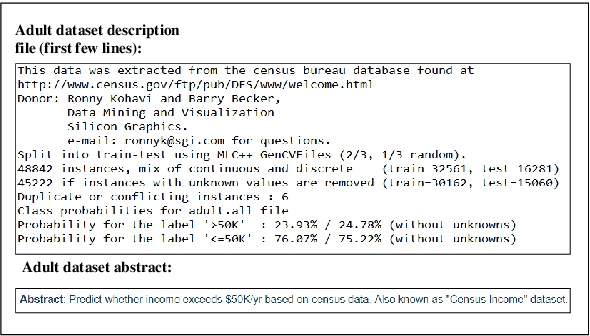

One of the challenging aspects of applying machine learning is the need to identify the algorithms that will perform best for a given dataset. This process can be difficult and time-consuming, and often requires a great deal of domain knowledge. We present Sommelier, an expert system for recommending the machine learning algorithms that should be applied to a previously unseen dataset. Sommelier is based on word embedding representations of the domain knowledge extracted from a large corpus of academic publications. When presented with a new dataset and its problem description, Sommelier leverages a recommendation model trained on the word embedding representation to provide a ranked list of the most relevant algorithms to be used on the dataset. We demonstrate Sommelier's effectiveness by conducting an extensive evaluation on 121 publicly available datasets and 53 classification algorithms. The top algorithms recommended for each dataset by Sommelier were able to achieve on average 97.7% of the optimal accuracy of all surveyed algorithms.
