Kit Kuksenok

Toward Best Practices for Explainable B2B Machine Learning

Jun 11, 2019

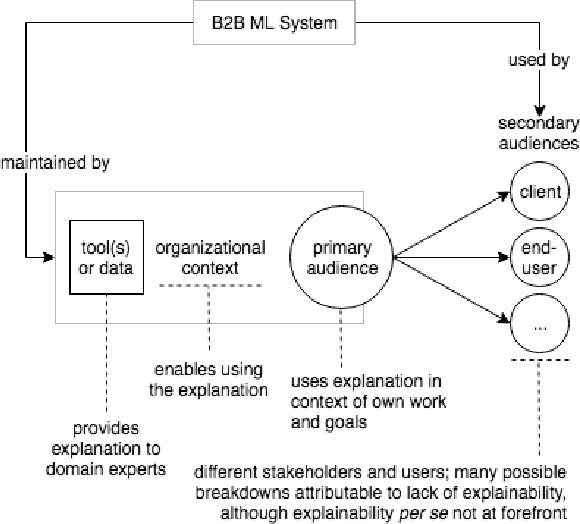

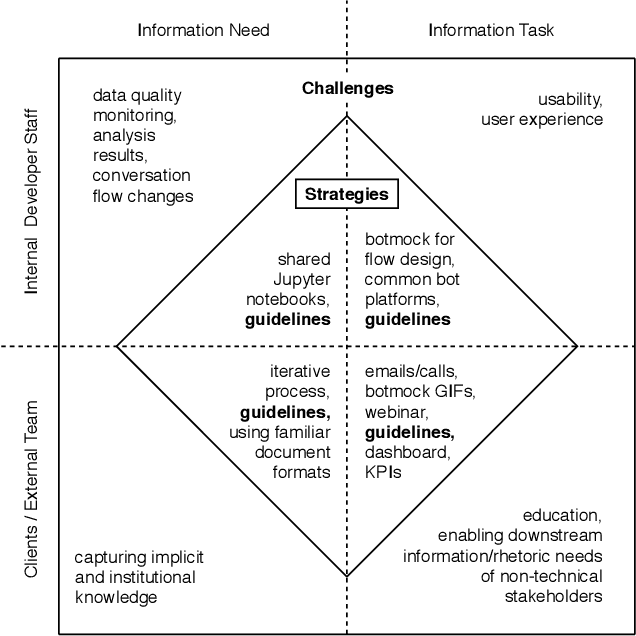

Abstract: To design tools and data pipelines for explainable B2B machine learning (ML) systems, we need to recognize not only the immediate audience of such tools and data, but also (1) their organizational context and (2) secondary audiences. Our findings are based on building custom ML-based chatbots for recruitment. We believe that in the B2B context, "explainable" ML means not only a system that can "explain itself" through tools and data pipelines, but also one that enables its domain-expert users to explain it to other stakeholders.

Evaluation and Improvement of Chatbot Text Classification Data Quality Using Plausible Negative Examples

Jun 05, 2019

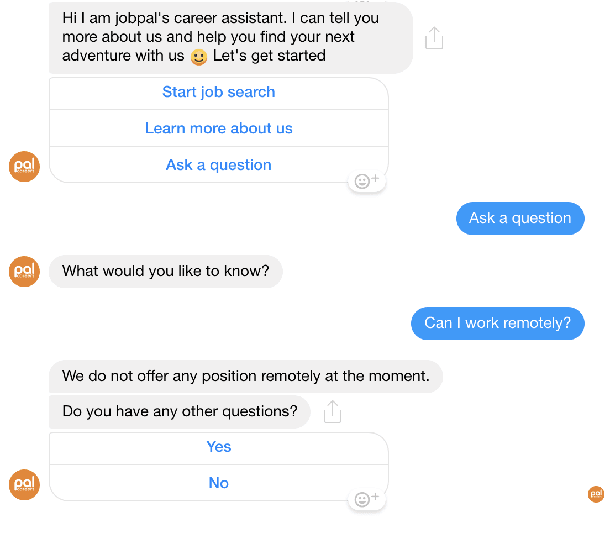

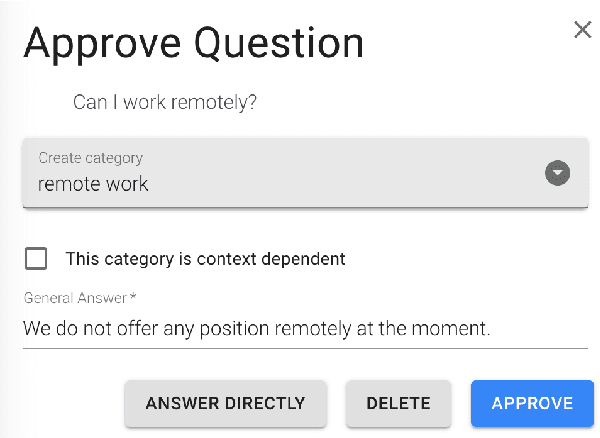

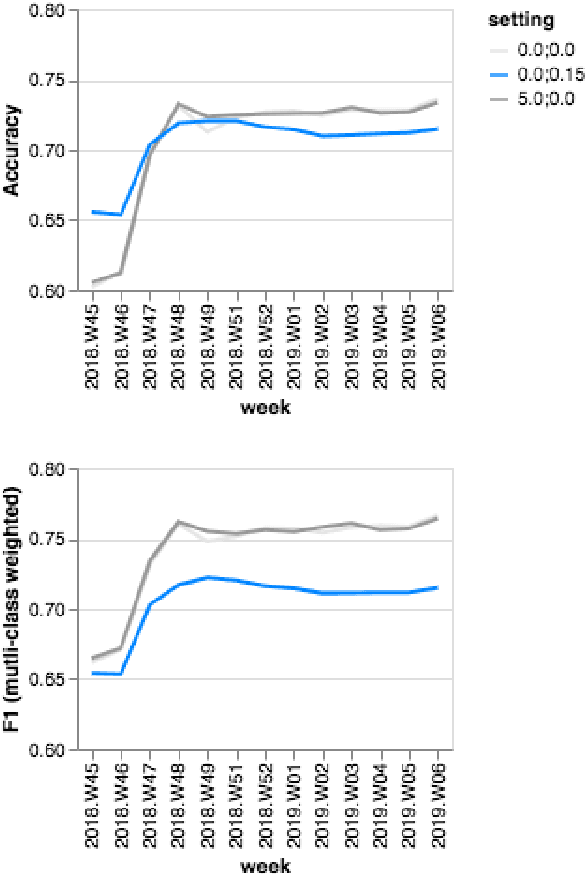

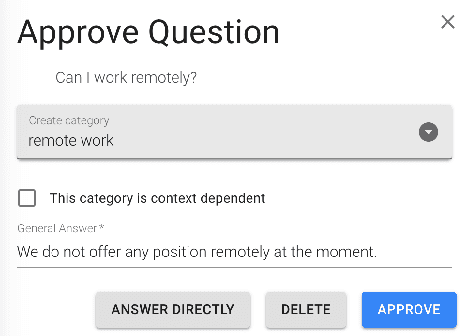

Abstract: We describe and validate a metric for estimating multi-class classifier performance based on cross-validation and adapted for improvement of small, unbalanced natural-language datasets used in chatbot design. Our experiences draw upon building recruitment chatbots that mediate communication between job-seekers and recruiters by exposing the ML/NLP dataset to the recruiting team. Evaluation approaches must be understandable to various stakeholders, and useful for improving chatbot performance. The metric, nex-cv, uses negative examples in the evaluation of text classification, and fulfils three requirements. First, it is actionable: it can be used by non-developer staff. Second, it is not overly optimistic compared to human ratings, making it a fast method for comparing classifiers. Third, it allows model-agnostic comparison, making it useful for comparing systems despite implementation differences. We validate the metric based on seven recruitment-domain datasets in English and German over the course of one year.
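The abstract does not define nex-cv, so the following is only a minimal sketch of the general idea it names: cross-validation in which some classes are held out of training and count as correct only when the classifier rejects them. Everything here is an assumption made for illustration and not the authors' method: the `NONE_LABEL` rejection label, the `threshold` parameter, the function names, and the toy word-overlap classifier standing in for a real model.

```python
from collections import Counter, defaultdict

NONE_LABEL = "__none__"  # hypothetical label meaning "no known class matches"


def train_centroids(examples):
    """Build one bag-of-words counter per class (a stand-in for a real classifier)."""
    centroids = defaultdict(Counter)
    for text, label in examples:
        centroids[label].update(text.lower().split())
    return centroids


def predict(centroids, text, threshold):
    """Return the best-overlapping class, or NONE_LABEL if overlap is below threshold."""
    words = set(text.lower().split())
    best_label, best_score = NONE_LABEL, 0.0
    for label, counts in centroids.items():
        # Fraction of the query's distinct words seen in this class during training.
        score = sum(1 for w in words if w in counts) / max(len(words), 1)
        if score > best_score:
            best_label, best_score = label, score
    return best_label if best_score >= threshold else NONE_LABEL


def negative_example_cv(examples, negative_labels, k=2, threshold=0.5):
    """Accuracy over k folds; held-out classes are correct only when rejected."""
    positives = [(t, l) for t, l in examples if l not in negative_labels]
    # Negative examples are never trained on and are expected to map to NONE_LABEL.
    negatives = [(t, NONE_LABEL) for t, l in examples if l in negative_labels]
    correct = total = 0
    for fold in range(k):
        train = [ex for i, ex in enumerate(positives) if i % k != fold]
        test = [ex for i, ex in enumerate(positives) if i % k == fold]
        test += [ex for i, ex in enumerate(negatives) if i % k == fold]
        centroids = train_centroids(train)
        for text, expected in test:
            correct += predict(centroids, text, threshold) == expected
            total += 1
    return correct / total if total else 0.0
```

Because the score depends only on labeled text and a `predict`-style interface, the same loop could in principle wrap different classifiers, which is the sense in which such a comparison would be model-agnostic.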

Transparency in Maintenance of Recruitment Chatbots

May 09, 2019

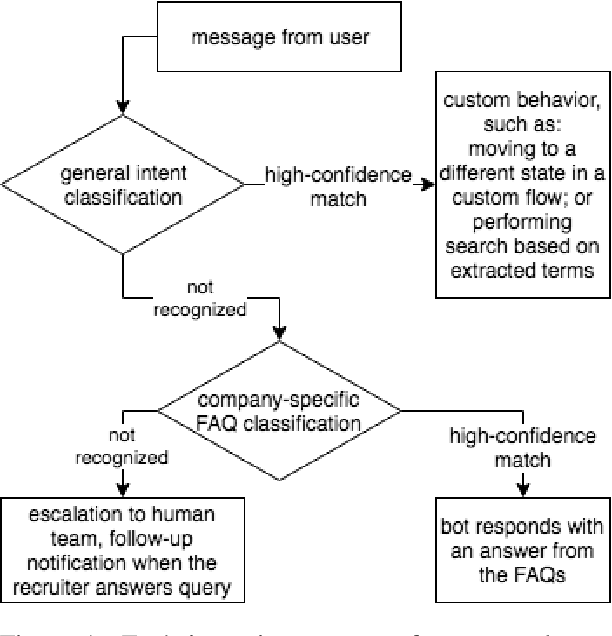

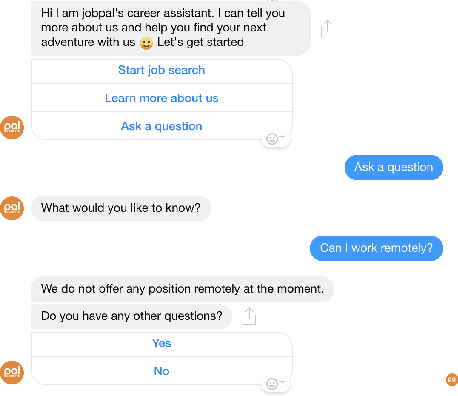

Abstract: We report on experiences with implementing conversational agents in the recruitment domain based on a machine learning (ML) system. Recruitment chatbots mediate communication between job-seekers and recruiters by exposing ML data to recruiter teams. Errors are difficult to understand, communicate, and resolve because they may span and combine UX, ML, and software issues. In an effort to improve organizational and technical transparency, we came to rely on a key contact role. Though effective for design and development, the centralization of this role poses challenges for transparency in sustained maintenance of this kind of ML-based mediating system.
