Dennis Prangle

Marginal sequential Monte Carlo for doubly intractable models

Oct 12, 2017

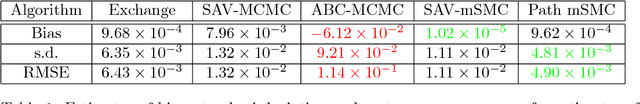

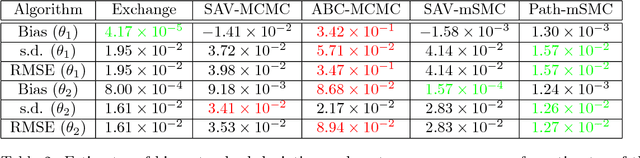

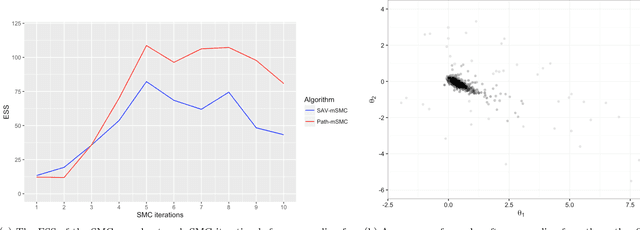

Abstract: Bayesian inference for models that have an intractable partition function is known as a doubly intractable problem, where standard Monte Carlo methods are not applicable. The past decade has seen the development of auxiliary variable Monte Carlo techniques (Møller et al., 2006; Murray et al., 2006) for tackling this problem; these approaches being members of the more general class of pseudo-marginal, or exact-approximate, Monte Carlo algorithms (Andrieu and Roberts, 2009), which make use of unbiased estimates of intractable posteriors. Everitt et al. (2017) investigated the use of exact-approximate importance sampling (IS) and sequential Monte Carlo (SMC) in doubly intractable problems, but focussed only on SMC algorithms that used data-point tempering. This paper describes SMC samplers that may use alternative sequences of distributions, and describes ways in which likelihood estimates may be improved adaptively as the algorithm progresses, building on ideas from Moores et al. (2015). This approach is compared with a number of alternative algorithms for doubly intractable problems, including approximate Bayesian computation (ABC), which we show is closely related to the method of Møller et al. (2006).
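For readers unfamiliar with the term, the structure of a doubly intractable posterior can be written out as follows. This is a sketch in notation assumed here rather than taken from the paper: $\gamma(y \mid \theta)$ is the unnormalised likelihood, $Z(\theta)$ its partition function, $p(\theta)$ the prior, and $q_u$ an arbitrary normalised density over an auxiliary variable $u$.

```latex
% Posterior with an intractable, parameter-dependent normalising constant
\pi(\theta \mid y) \;\propto\; p(\theta)\,\frac{\gamma(y \mid \theta)}{Z(\theta)},
\qquad Z(\theta) = \int \gamma(y' \mid \theta)\,\mathrm{d}y' .

% Single auxiliary variable estimate: draw u from the model at theta, then
u \sim \frac{\gamma(\cdot \mid \theta)}{Z(\theta)},
\qquad
\mathbb{E}_u\!\left[\frac{q_u(u)}{\gamma(u \mid \theta)}\right]
= \int \frac{q_u(u)}{\gamma(u \mid \theta)}\,
       \frac{\gamma(u \mid \theta)}{Z(\theta)}\,\mathrm{d}u
= \frac{1}{Z(\theta)} .
```

The second line shows why a single exact draw from the model gives an unbiased estimate of $1/Z(\theta)$, which is the kind of estimate that pseudo-marginal (exact-approximate) algorithms rely on.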

An ABC interpretation of the multiple auxiliary variable method

Apr 27, 2016

Abstract: We show that the auxiliary variable method (Møller et al., 2006; Murray et al., 2006) for inference of Markov random fields can be viewed as an approximate Bayesian computation method for likelihood estimation.

Lazier ABC

Jan 21, 2015

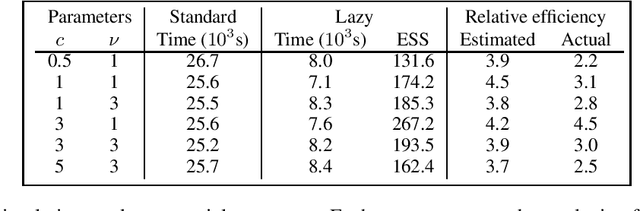

Abstract: ABC algorithms involve a large number of simulations from the model of interest, which can be very computationally costly. This paper summarises the lazy ABC algorithm of Prangle (2015), which reduces the computational demand by abandoning many unpromising simulations before completion. By using a random stopping decision and reweighting the output sample appropriately, the target distribution is the same as for standard ABC. Lazy ABC is also extended here to the case of non-uniform ABC kernels, which is shown to simplify the process of tuning the algorithm effectively.
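The random stopping and reweighting idea can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration and is not taken from the paper: the toy model (a mean of Gaussian draws), the prior, the function name `lazy_abc`, and the particular continuation probability `alpha`. The only essential ingredients are that `alpha` is strictly positive and that completed simulations are weighted by `1 / alpha`.

```python
import math
import random


def lazy_abc(obs, n_iter, eps, n_first=5, n_total=50, alpha_min=0.05, seed=0):
    """Toy lazy ABC with a uniform kernel (illustrative assumptions throughout).

    Returns a list of (theta, weight) pairs and the number of simulations
    that were run to completion.
    """
    rng = random.Random(seed)
    samples = []
    n_completed = 0
    for _ in range(n_iter):
        # Draw a parameter from the (assumed) prior N(0, 3^2).
        theta = rng.gauss(0.0, 3.0)

        # Cheap first stage: simulate only the first few data points.
        first = [rng.gauss(theta, 1.0) for _ in range(n_first)]
        partial_mean = sum(first) / n_first

        # Hypothetical continuation probability: high when the partial
        # summary is close to the observation, never below alpha_min.
        alpha = max(alpha_min, min(1.0, math.exp(-abs(partial_mean - obs))))

        # Random stopping decision: abandon with probability 1 - alpha.
        if rng.random() >= alpha:
            samples.append((theta, 0.0))
            continue

        # Expensive second stage: complete the simulation.
        n_completed += 1
        rest = [rng.gauss(theta, 1.0) for _ in range(n_total - n_first)]
        full_mean = (sum(first) + sum(rest)) / n_total

        # Uniform ABC kernel, reweighted by 1 / alpha to offset the stopping.
        accept = 1.0 if abs(full_mean - obs) <= eps else 0.0
        samples.append((theta, accept / alpha))
    return samples, n_completed
```

Because the expected weight given `theta` and the full simulation equals the standard ABC acceptance indicator, weighted averages over the output target the same distribution as standard ABC, while only `n_completed` of the `n_iter` simulations pay the full cost.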
