Christopher Meek

ARMA Time-Series Modeling with Graphical Models

Aug 08, 2012

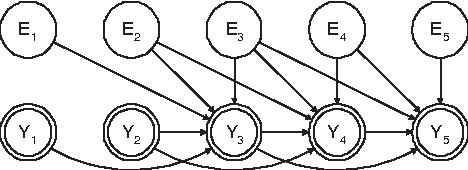

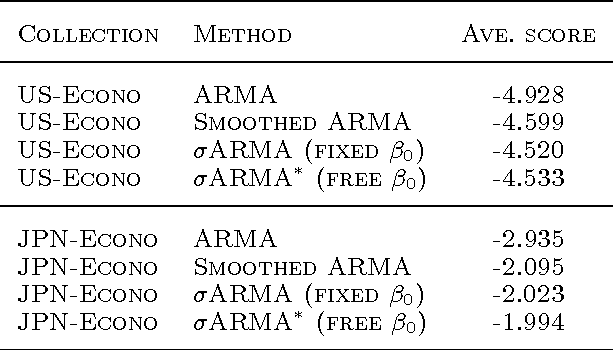

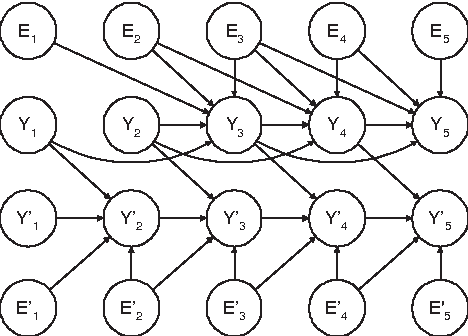

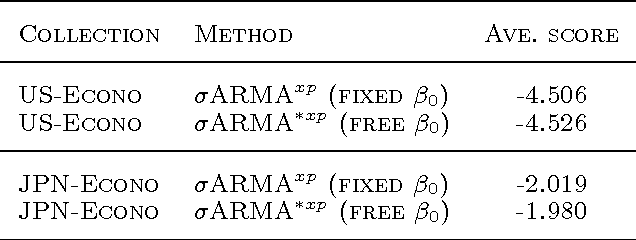

Abstract:We express the classic ARMA time-series model as a directed graphical model. In doing so, we find that the deterministic relationships in the model make it effectively impossible to use the EM algorithm for learning model parameters. To remedy this problem, we replace the deterministic relationships with Gaussian distributions having a small variance, yielding the stochastic ARMA (ARMA) model. This modification allows us to use the EM algorithm to learn parmeters and to forecast,even in situations where some data is missing. This modification, in conjunction with the graphicalmodel approach, also allows us to include cross predictors in situations where there are multiple times series and/or additional nontemporal covariates. More surprising,experiments suggest that the move to stochastic ARMA yields improved accuracy through better smoothing. We demonstrate improvements afforded by cross prediction and better smoothing on real data.

Inference for Multiplicative Models

Jun 13, 2012

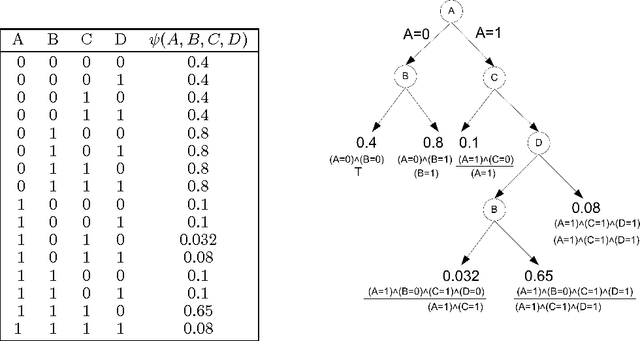

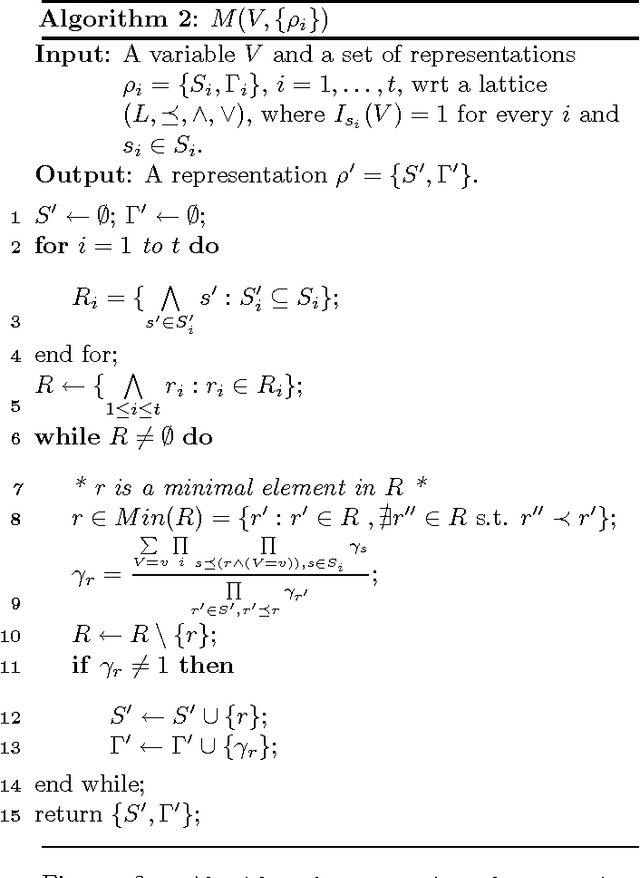

Abstract:The paper introduces a generalization for known probabilistic models such as log-linear and graphical models, called here multiplicative models. These models, that express probabilities via product of parameters are shown to capture multiple forms of contextual independence between variables, including decision graphs and noisy-OR functions. An inference algorithm for multiplicative models is provided and its correctness is proved. The complexity analysis of the inference algorithm uses a more refined parameter than the tree-width of the underlying graph, and shows the computational cost does not exceed that of the variable elimination algorithm in graphical models. The paper ends with examples where using the new models and algorithm is computationally beneficial.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge