Antonia Creswell

Adversarial Training For Sketch Retrieval

Aug 23, 2016

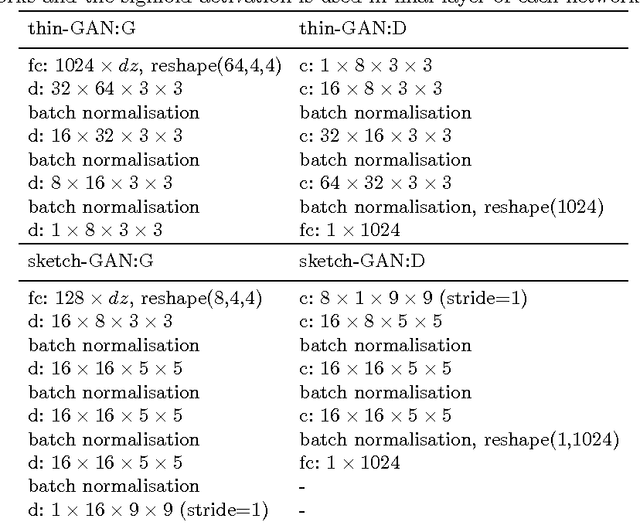

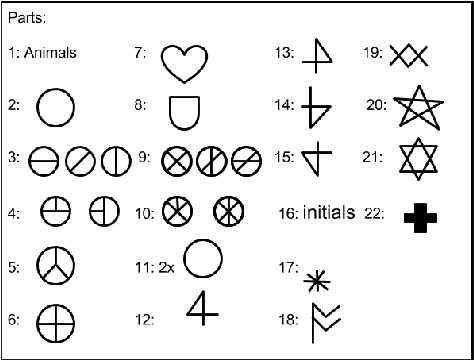

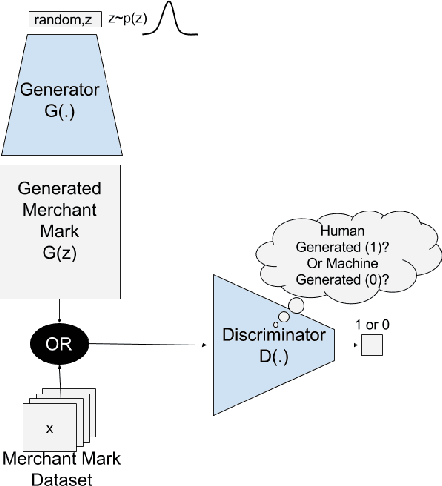

Abstract: Generative Adversarial Networks (GANs) can learn excellent representations of unlabelled data, and these representations can be applied to image generation and scene classification. Representations learned by GANs have not yet been applied to retrieval. In this paper, we show that they can indeed be used for retrieval. We consider heritage documents that contain unlabelled Merchant Marks, sketch-like symbols that are similar to hieroglyphs. We introduce a novel GAN architecture, the sketch-GAN, with design features that make it suitable for sketch retrieval. The performance of the sketch-GAN is compared to that of a modified version of the original GAN architecture with respect to simple invariance properties. Experiments suggest that sketch-GANs learn representations that are suitable for retrieval and that are also more stable under rotation, scale and translation than those of the standard GAN architecture.
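To make the retrieval setting concrete, the following is a minimal sketch of how learned feature vectors could be used to rank a gallery of sketches against a query. It is not the method from the paper: the feature vectors here are hand-written stand-ins for whatever representation a trained network would produce, cosine similarity is an assumed choice of metric, and the names `l2_normalize` and `retrieve` are hypothetical.

```python
import numpy as np


def l2_normalize(features, eps=1e-12):
    """Scale each feature vector to unit length (eps guards against zero vectors)."""
    norms = np.linalg.norm(features, axis=-1, keepdims=True)
    return features / np.maximum(norms, eps)


def retrieve(query_feature, gallery_features, k=3):
    """Return indices and cosine similarities of the k gallery items closest to the query.

    query_feature: array of shape (d,)
    gallery_features: array of shape (n, d)
    """
    q = l2_normalize(np.asarray(query_feature, dtype=np.float64))
    g = l2_normalize(np.asarray(gallery_features, dtype=np.float64))
    similarities = g @ q  # cosine similarity, since both sides are unit length
    order = np.argsort(-similarities, kind="stable")[:k]  # most similar first
    return order, similarities[order]


# Toy stand-in features (assumption): in practice these would come from a trained network.
gallery = np.array([
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
])
query = np.array([1.0, 0.02, 0.0])

indices, scores = retrieve(query, gallery, k=2)
print(indices, scores)
```

Under this assumed setup, a representation that is stable under rotation, scale and translation would map a transformed query to nearly the same feature vector, so the ranking returned by `retrieve` would change little.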
