Backpropagation for Implicit Spectral Densities

Jun 01, 2018Aditya Ramesh, Yann LeCun

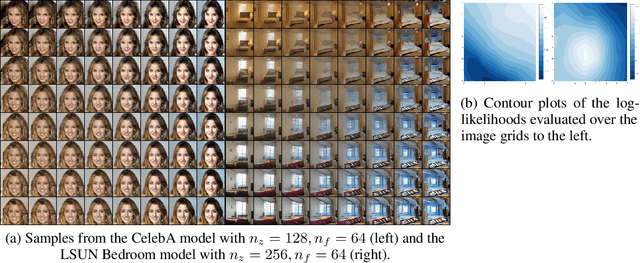

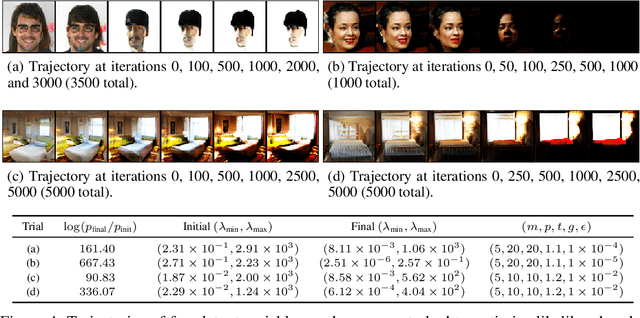

Most successful machine intelligence systems rely on gradient-based learning, which is made possible by backpropagation. Some systems are designed to aid us in interpreting data when explicit goals cannot be provided. These unsupervised systems are commonly trained by backpropagating through a likelihood function. We introduce a tool that allows us to do this even when the likelihood is not explicitly set, by instead using the implicit likelihood of the model. Explicitly defining the likelihood often entails making heavy-handed assumptions that impede our ability to solve challenging tasks. On the other hand, the implicit likelihood of the model is accessible without the need for such assumptions. Our tool, which we call spectral backpropagation, allows us to optimize it in much greater generality than what has been attempted before. GANs can also be viewed as a technique for optimizing implicit likelihoods. We study them using spectral backpropagation in order to demonstrate robustness for high-dimensional problems, and identify two novel properties of the generator G: (1) there exist aberrant, nonsensical outputs to which G assigns very high likelihood, and (2) the eigenvectors of the metric induced by G over latent space correspond to quasi-disentangled explanatory factors.

Disentangling factors of variation in deep representations using adversarial training

Nov 10, 2016Michael Mathieu, Junbo Zhao, Pablo Sprechmann, Aditya Ramesh, Yann LeCun

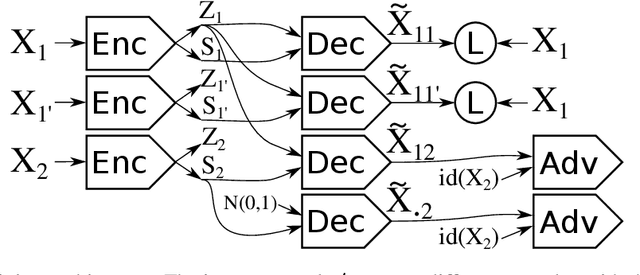

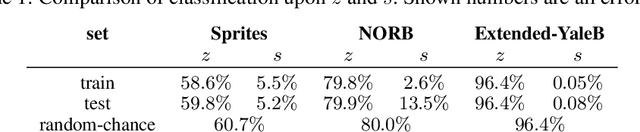

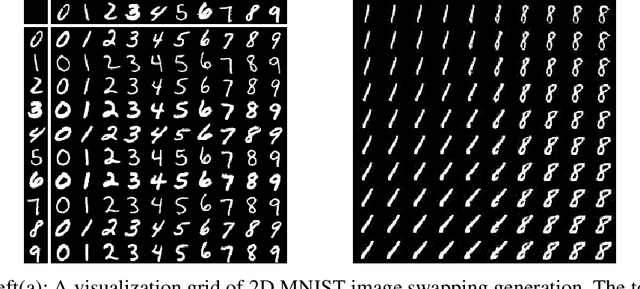

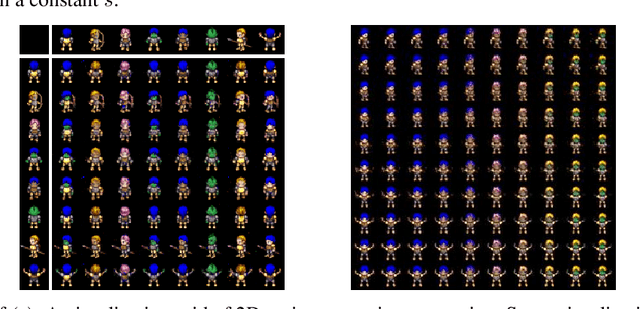

We introduce a conditional generative model for learning to disentangle the hidden factors of variation within a set of labeled observations, and separate them into complementary codes. One code summarizes the specified factors of variation associated with the labels. The other summarizes the remaining unspecified variability. During training, the only available source of supervision comes from our ability to distinguish among different observations belonging to the same class. Examples of such observations include images of a set of labeled objects captured at different viewpoints, or recordings of set of speakers dictating multiple phrases. In both instances, the intra-class diversity is the source of the unspecified factors of variation: each object is observed at multiple viewpoints, and each speaker dictates multiple phrases. Learning to disentangle the specified factors from the unspecified ones becomes easier when strong supervision is possible. Suppose that during training, we have access to pairs of images, where each pair shows two different objects captured from the same viewpoint. This source of alignment allows us to solve our task using existing methods. However, labels for the unspecified factors are usually unavailable in realistic scenarios where data acquisition is not strictly controlled. We address the problem of disentanglement in this more general setting by combining deep convolutional autoencoders with a form of adversarial training. Both factors of variation are implicitly captured in the organization of the learned embedding space, and can be used for solving single-image analogies. Experimental results on synthetic and real datasets show that the proposed method is capable of generalizing to unseen classes and intra-class variabilities.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge